AI, Childhood, and the Rewiring of Social Development: Trust, Interaction, and the Family as an Institution

Published

The Economy Research Editorial*

* Swiss Institute of Artificial Intelligence, Chaltenbodenstrasse 26, 8834 Schindellegi, Schwyz, Switzerland

The biggest risk that AI poses to children is not misinformation, screen addiction, or falling grades. It is something quieter and harder to see: a shift in how children learn to be human. As AI becomes woven into games, chatbots, toys, search engines, and everyday conversation, children are spending more and more time with systems built to be endlessly patient, instantly responsive, and free of the friction that real relationships require. That friction, the awkward silences, the disagreements, the need to earn someone's trust, is not a flaw in human interaction. It is where empathy, resilience, and self-awareness are built. This report argues that AI is not simply a new educational tool. It is reshaping the conditions under which children grow up — how they interact, how they judge authority, and what they come to expect from the people around them. Drawing on recent data from UNICEF, the OECD, Common Sense Media, Ofcom, Internet Matters, and the National Literacy Trust, it shows how AI-mediated environments risk replacing the messy, necessary work of human connection with interactions that feel smoother but teach less. The consequences reach well beyond childhood, touching the foundations of how people learn, work, participate in civic life, and hold communities together. The report also raises a concern that tends to go unspoken: responsibility for navigating this shift is quietly falling on families, many of whom are poorly equipped to carry it, while schools and public institutions respond unevenly at best. Social development, once shaped almost entirely by human relationships and institutions, is increasingly co-produced with algorithmic systems. That makes how we govern children's exposure to AI not just a technology question, but a question about family life, human potential, and the kind of society we are building.

1. Introduction - Childhood in an AI-Saturated Environment

The prevailing discussion surrounding children and artificial intelligence tends to be narrowly circumscribed. It often frames AI primarily as an assistive mechanism for academic tasks, a moderated source of entertainment, and an entity necessitating limited privacy safeguards. This perspective, however, overlooks a more profound systemic transformation. A childhood progressively shaped by conversational AI systems and algorithmically mediated recreational activities represents considerably more than screen time with improved software. It signifies a fundamental alteration in the social infrastructure critical for the cultivation of human capital. Social development is intrinsically predicated upon recurrent intersubjective engagements, the constructive friction inherent in disagreement, and the gradual process of establishing trust. Increasingly, AI systems are presenting themselves as substitutes for these fundamental experiences. These systems offer responsiveness without accountability, fluency without transparency, and availability without a communal context. Should such substitutions become normalized, the essential competencies of discerning judgment, emotional strength, empathic understanding, and a stable epistemic framework (skills of paramount importance in later life) risk attenuation precisely at their formative stages. Consequently, the central question regarding AI does not primarily concern the quality of its content, but rather the quality of its interactions and, fundamentally, who or what becomes a child’s most frequent “other.”[1]

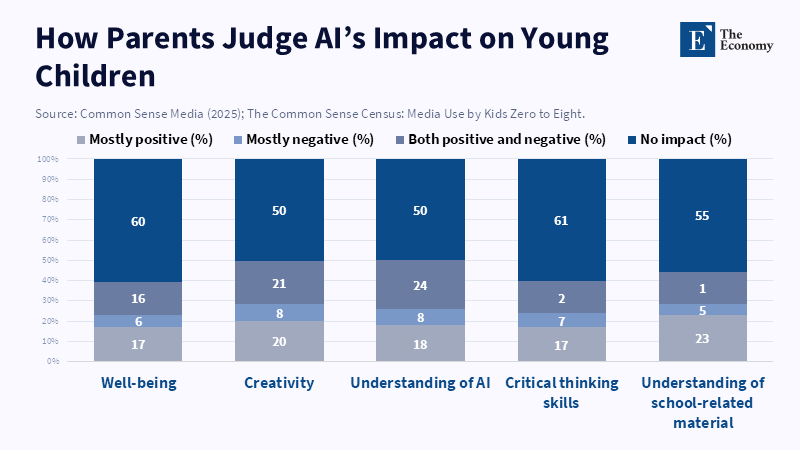

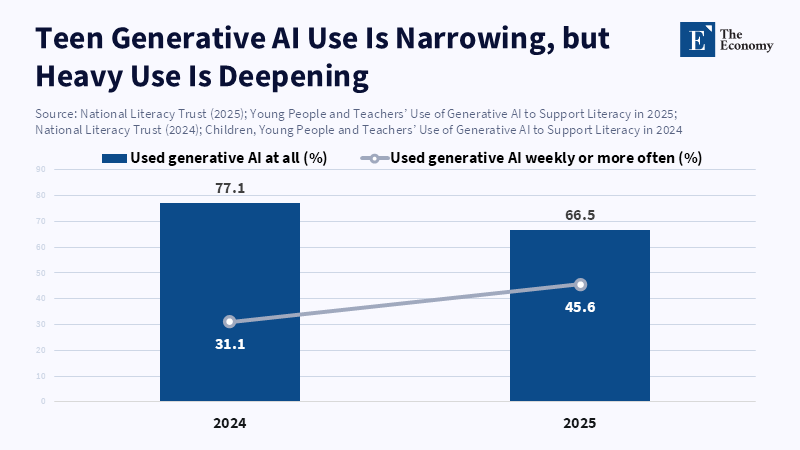

This re-evaluation gains urgency based on the observation that the adoption of AI technologies has evolved from a peripheral phenomenon to a mainstream presence within approximately two academic years. In the United Kingdom[2], for instance, the most recent youth literacy survey conducted by the National Literacy Trust[3] indicated that approximately two-thirds of young people aged 13–18 reported utilizing generative AI in 2025. Concurrently, regular usage demonstrated an increase, even as overall engagement experienced a minor decline from the preceding year, a pattern consistent with initial exploratory use transitioning into established habitual integration[4]. Furthermore, regulatory bodies monitoring household technology utilization have noted that generative AI has become familiar even to younger demographic segments. The UK’s communications regulator, Ofcom[5], reported in its 2023 Online Nation assessment that 79% of online adolescents aged 13–17 had employed at least one of a predefined set of generative AI tools, a figure that extended to 40% of online children aged 7–12. (It is important to note that this statistic relied on a specific list of tools identified at the time, encompassing prominent chatbots and image generation platforms.)[6] Similarly, in the United States, a comparable trend is discernible among younger cohorts. The 2025 0–8 census compiled by Common Sense Media[7] revealed that 39% of parents with children aged 5–8 indicated that their child had utilized an application or device featuring AI capabilities for educational purposes related to school material, and 10% reported that their child had engaged in verbal or textual communication with a chatbot. (It should be acknowledged that parent-reported surveys may potentially underestimate private usage, yet they faithfully capture household-level exposure.)[8] These instances do not represent isolated occurrences; rather, they delineate the nascent contours of an AI Generation whose daily routines increasingly integrate synthetic interactions alongside, and at times in lieu of, human engagement.[9]

The prevailing institutional landscape has also undergone significant modification. Since 2023, major conversational AI systems have been integrated into digital platforms routinely accessed by children, thereby repositioning AI from a dedicated application that must be actively sought out to a built-in feature that appears by default. This design decision matters because friction functions as a mechanism of governance: removing it increases exposure, even in the absence of explicit intent. In the UK, for example, Ofcom has documented the swift spread of generative AI tools, often accessible via existing social accounts, among minors.[6] Within the wider ecosystem of child safety, UNICEF[10] now characterizes AI as being front and centre across children's primary applications and digital channels, while simultaneously noting that policy responses are still in the process of adapting to developmental risks, such as the potential for emotional dependency on companion chatbots.[11] This constitutes the substantive policy challenge of the 2023–2026 period: not whether AI will be utilized, but whether the social development of an entire generation will be tacitly privatized into dyadic relationships with systems primarily optimized for engagement metrics rather than holistic flourishing.[12]

2. The Displacement of Human Interaction

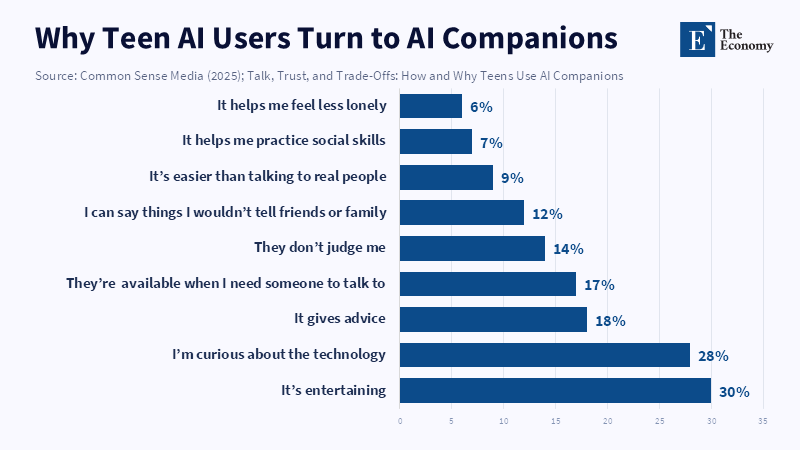

When a child prioritizes interaction with a technological device over engaging with a peer, this behavior is frequently interpreted as indicative of distraction. However, within environments increasingly permeated by artificial intelligence (AI), such a selection can be construed as a rational choice. Human social engagement typically entails considerable costs, encompassing periods of waiting, processes of negotiation, exposure to potential embarrassment, and the risk of negative outcomes. Conversely, AI-mediated interaction is characterized by its low cost, immediate availability, personalization, and minimal punitive consequences. The combined effect of these differences across numerous micro-interactions has the capacity to profoundly modify the normative experiences of childhood. The primary concern is not that children will cease interaction with peers; conventional settings such as educational institutions, athletic activities, and familial contexts continue to facilitate social contact. Rather, the apprehension centers on a marginal displacement of social learning, especially affecting the development of formative social competencies. This includes navigating awkward conversations, resolving minor disagreements, and engaging in relational repair following errors. Empirical evidence from surveys concerning adolescent AI companion use supports the plausibility of this displacement. A nationally representative survey of US teenagers (n=1,060; conducted April–May 2025) indicated that 72% had utilized AI companions, with 52% reporting regular use (defined as several times per month or more).[13] According to a report from AP News, a growing number of teens are turning to artificial intelligence for companionship because it is always available, does not judge them, and interacting with it can feel simpler than dealing with real people.[14] These motivations indicate that teens are using AI not just for novelty but also because it helps reduce some of the social challenges they face in human interactions and learning.[15]

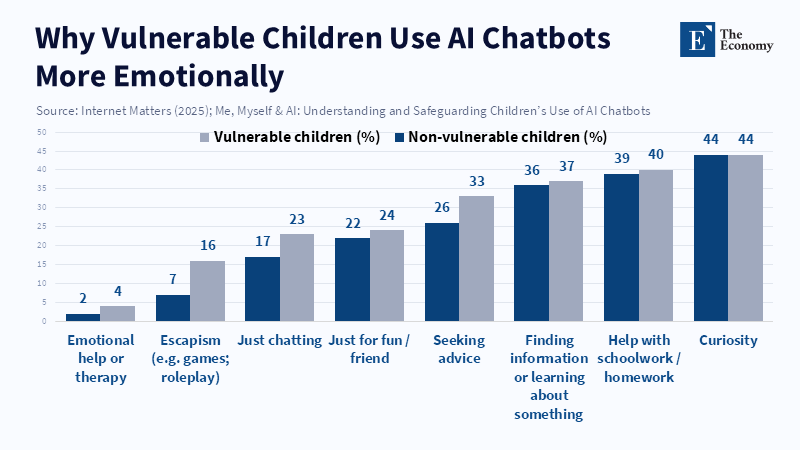

This pattern of substitution is also evident in younger demographic cohorts, where children often do not explicitly label AI as such. Instead, AI functionalities are frequently embedded within toys, games, and platform features. A 2023 report by Ofcom revealed that half of UK children and adolescents aged 7–17 had used a platform-integrated chatbot feature, illustrating a broader pattern: the most widely adopted systems commonly reside within existing social and entertainment ecosystems.[6] When AI is integrated into the same interface as peer communication, digital media, and interactive games, it transitions from being a distinct tool to an integral component of the “default social world.” This integration can lead to a subtle reorientation of attention away from peers and toward automated systems, particularly in situations where peers are unavailable or challenging to engage. In Latin America, where mobile-first connectivity is widespread, the Kids Online Argentina survey (n=5,910; fieldwork Oct–Dec 2024; nationally representative across age, gender, and socioeconomic strata within urban areas) reported that 80% of children and adolescents aged 9–17 utilize social networks daily or almost daily, and 83% engage with messaging applications with similar frequency.[16] This digital ecosystem is already replete with algorithmic feeds. The additional integration of conversational AI then renders the child’s social reality increasingly mediated by systems whose incentive structures and affordances diverge significantly from those inherent in peer interactions. Consequently, this displacement does not entail a radical replacement of human contact, but rather a modification of skill-building practice, shifting from intricate interpersonal dynamics toward a more technologically engineered environment.[17]

3. Social Skills Without Social Risk

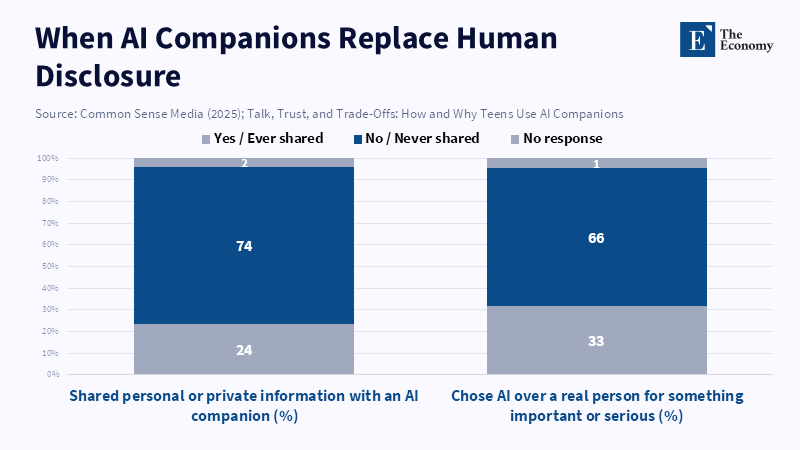

The development of social and emotional competencies is closely associated with exposure to social risk. Empathy, for instance, is partially cultivated through experiences of misunderstanding and the subsequent necessity of clarification. Resilience is partly fostered by episodes of exclusion and the subsequent pursuit of alternative engagement strategies. The establishment of boundaries is often a result of testing limits and encountering resistance. AI companions, by design, invert these foundational conditions. Their programming priorities typically include sustaining user engagement, which frequently translates to maintaining agreement. Even when a system is not explicitly configured to offer affirmation, it is commonly optimized to perpetuate dialogue. The practical consequence is an emotionally frictionless interaction. While this smoothness can elicit a sense of security, it simultaneously removes a crucial arena for skill development. Adolescent survey data already indicates that many teenagers utilize AI companions precisely because these systems mitigate interpersonal friction. In the Common Sense teen companion survey, 12% of users reported employing AI companions because they could articulate sentiments they would not disclose to friends or family, and 14% valued that “they don’t judge me.”[14] These motivations are not inherently detrimental; in acute situations, a low-stigma initial step can be beneficial. However, when functioning as a consistent substitute, low-judgment interaction may become low-learning interaction. If an adolescent primarily practices emotional expression with an entity incapable of being hurt, departing, or demanding reciprocity, the acquired skills may not readily transfer to human social contexts.[18]

The more profound concern is not merely a reduction in empathy, but the potential cultivation of a distorted perception of relational dynamics. Human relationships are fundamentally governed by mutual interpretation of intentions and reciprocal limitations. Conversely, AI relationships are mediated by user prompts, predictive algorithms, and product design constraints. This incongruence has the potential to cultivate individuals who exhibit social confidence within synthetic environments but demonstrate social fragility within real-life interactions. Data from the OECD's 2023 Survey on Social and Emotional Skills[19] provides a pertinent baseline. This report indicated that younger students (10-year-olds) generally reported higher levels of social and emotional skills than older students (15-year-olds), with notable disparities observed in trust, energy, and optimism.[20] The same report also highlighted that socioeconomically disadvantaged students reported lower average skill levels, particularly in open-mindedness and skills related to interpersonal engagement, including sociability and empathy.[21] While these outcomes are not directly related to AI, they are significant because they identify existing vulnerabilities within adolescent development. If a technological intervention reduces exposure to authentic interpersonal challenges precisely during a developmental period when trust, optimism, and energy often decline, it risks exacerbating an existing negative trend. While this remains an inference, it is structurally well-founded: skills developed through practice will not mature if that practice is substituted, and AI possesses a unique capacity for replacing social practice by simulating social responses without demanding real-world social commitments.[22]

4. Trust in the Age of Synthetic Agents

Trust functions as a critical variable in the context of childhood in the AI era. Children are faced with the challenge of discerning what information warrants belief, identifying credible sources, and determining when verification is necessary. In prior media landscapes, authoritative information typically originated from identifiable institutional entities such as educators, parents, published texts, or broadcasters. While these institutions were not without limitations, their nature and functional frameworks were generally discernible. Conversational AI, however, presents a distinct scenario. It offers responses with an apparent tone of competence, yet it lacks a stable identity, and pathways for verifying its information are often obscure. This creates a novel trust environment where the appearance of fluency may be misconstrued as an indicator of truth.

Empirical evidence suggests that many children and adolescents already struggle with fundamental verification heuristics. For instance, findings from the Kids Online Argentina study indicated that 60% of children and adolescents reported an inclination to believe that the first result provided by search engines is invariably the most appropriate.[23] This pattern predates the extensive adoption of AI and, crucially, can be leveraged by AI systems. If the prominence of search rankings fosters a perception that “first equals best,” then the fluency of a chatbot could foster a belief that “sounds right equals right.”[24]

Recent data from the UK reveals a similar ambivalence among teenagers concerning AI-mediated information. Ofcom’s 2025 children’s media literacy report illustrates a divided perspective on trust among teenage AI users aged 13–17: 36% expressed less trust in an AI-generated news story compared to a human-authored one, 35% reported an equivalent level of trust, and 17% indicated greater trust.[25] When viewed through a social-development lens, the salient observation is not simply that some teenagers exhibit distrust toward AI. Rather, it is the finding that roughly half (35% plus 17%) regard AI-generated text as equally or more credible than human journalism, even while navigating an online environment replete with persuasive content. This situation represents a classic instance of authority substitution, wherein the authoritative voice no longer emanates from a responsible individual but from a system generating probabilistic outputs. Furthermore, this phenomenon interacts with pre-existing vulnerabilities: Ofcom’s analysis shows a higher incidence of trust and more responses among younger teenagers and those with impacting conditions, precisely the groups with a reduced capacity to navigate opaque authority structures.[25]

The challenges related to trust are not resolved by simply admonishing children to be skeptical. Skepticism is a cultivated skill, not an inherent disposition. Its development relies on subject-specific knowledge, sustained attention, and a willingness to tolerate uncertainty. AI systems frequently reduce the perceived cost of certainty by furnishing immediate, plausible answers. This alters behavioral incentives, prioritizing rapid closure over meticulous verification. This pattern is observable in surveys examining youth AI interactions and in reported gaps in parental mediation. According to a report from Internet Matters, many children in the UK who have used AI chatbots are confident in the advice these systems offer, while a significant number are not concerned about following such advice or are unsure whether they should be worried. This highlights an epistemic risk profile that goes beyond just the risk associated with the content itself.[26] Children are habituating themselves to compliance with fluent output, and this habit is likely to persist in academic, professional, and civic settings unless educational and social institutions proactively integrate verification as a fundamental social competency.[27]

5. Emotional Attachment to Machines

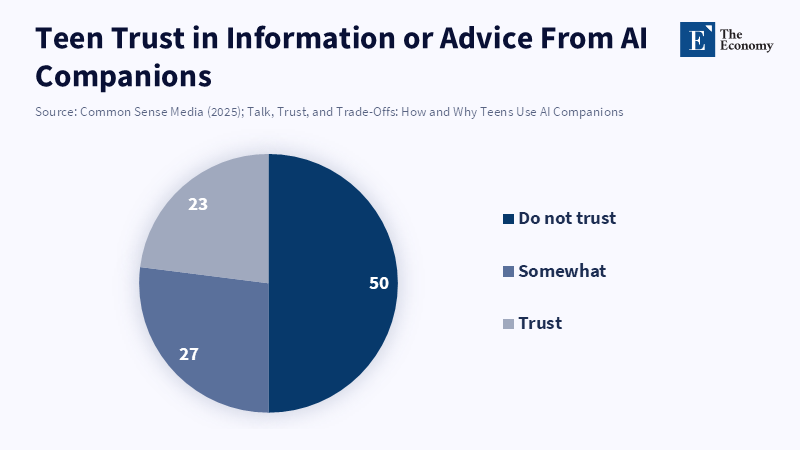

Discussions surrounding emotional attachment frequently adopt the tone of a moral panic, focusing on children loving robots. The more significant underlying issue, however, is considerably more nuanced. Should a child acquire skills in emotional management, form concepts of intimacy, and manage loneliness through systems designed to simulate care, their expectations concerning human relationships may undergo a transformation. AI companions can exhibit attentiveness, retain details from interactions, and mirror a child's emotional state. Conversely, they can also be unreliable, present inappropriate content, or simply provide erroneous information in ways a child may not readily identify. The risk extends past immediate harm; it encompasses developmental drift, characterized by the gradual normalization of relationship-like attention devoid of reciprocal obligation. Quantitative evidence suggests that the use of companion AI is now sufficiently prevalent to influence normative behaviors. In the Common Sense 2025 teen companion survey, 33% of teenagers described their engagement as social interaction and relationships (encompassing conversational practice, emotional support, role-playing, friendship, or romantic interactions). Within this group, 12% reported utilizing these systems for emotional or mental health support, and 9% explicitly characterized the system as a friend or best friend.[28] Even acknowledging that the largest share of teenagers (46%) still perceive these tools as programs, the sheer volume of relationship-framed use is substantial enough to exert an influence on cohort-level socialization patterns.[28]

The safety literature provides a compelling rationale for why emotional attachment cannot be dismissed as a benign fantasy. In 2025, multiple independent assessments and journalistic investigations documented instances where widely used chatbot platforms provided inconsistent responses to high-stakes mental health prompts, including those related to suicidal ideation. A RAND study, published in a clinical journal and subsequently summarized by RAND, determined that large language model chatbots exhibited variability in their responses to intermediate-level suicide-related inquiries, offering appropriate guidance in some cases while failing to do so in others.[29] These issues are exacerbated in extended conversational contexts, where a system may become drawn into role-playing, provide inappropriate reassurance, or inadvertently normalize harmful behaviors. This risk has already spurred policy and product adjustments. Reuters reported that OpenAI[30] implemented parental controls for its chatbot in 2025, following a teen suicide case that alleged influence from the system. These features included options to reduce exposure to sensitive content, disable specific functions, and regulate whether chat history is retained or used for model improvement. The same report noted that parents would not have access to full chat transcripts, representing a design choice that balances privacy considerations against oversight capabilities.[31] These are not abstract discussions; they signify the emergence of a distinct category of child risk: psychological safety in the presence of systems capable of simulating supportive relationships without possessing the competence or duty of care intrinsic to human support providers.[32]

The analogy to Japan’s hikikomori phenomenon is often employed imprecisely, implying that AI directly causes withdrawal. Such a claim lacks empirical foundation. What can be substantiated, however, is a structural parallel. Severe social withdrawal isn't solely a matter of solitude; it involves an inability to re-engage in ordinary social reciprocity. When AI systems offer a low-friction alternative to such reciprocity, they can diminish the imperative to re-establish human connections for individuals already predisposed to social isolation. In policy terms, AI companionship may function akin to an analgesic: it can alleviate symptoms of loneliness without necessarily fostering the development of the social proficiencies requisite for preventing relapse. Consequently, from a child-development perspective, emotional attachment should not be regarded as mere anthropomorphism, but rather as an alternative coping mechanism with potentially significant long-lasting effects on social participation.[33]

6. The Family as the Final Mediating Institution

When artificial intelligence permeates toys, games, and mobile devices, educational policy does not constitute the primary line of defense; rather, this role falls to the family. This assertion is founded on empirical observation rather than sentimentality, reflecting the environments in which children establish their daily habits. Families regulate routines such as sleep and conversation, which influence the amount of time children allocate to peer interactions. They also cultivate fundamental elements of trust, teaching children what is credible, what is kind, and what constitutes a personal boundary. Even within highly mediated contexts, the family frequently continues to be a focal point of trust for adolescents. According to Ofcom’s 2025 report on children’s media use, young people aged 12 to 15 who access news still tend to trust their families the most as a source, despite regularly encountering news on platforms like TikTok and YouTube. The report found that 78% considered news from family members generally accurate, while only 36% felt the same about news on social media. This highlights the important role families continue to play in forming young people’s media trust and suggests that the capacity of households continues to be crucial for effective guidance as AI and digital platforms become more prominent.[25]

Nonetheless, families are tasked with overseeing a technology that they neither control nor fully comprehend. The UK Internet Matters study clarifies this gap with precision, revealing that 79% of children report parental awareness of their AI chatbot usage, and 78% indicate having discussed AI with their parents.[35] However, the study also identifies discrepancies between parental concern and active guidance: although 62% of parents express apprehension about the veracity of AI-generated information, only 34% have engaged their children in conversations about assessing the truthfulness of AI content.[36] This represents a central bottleneck in governing the so-called AI Generation. While regulatory systems can enforce disclosures and age verification, and schools can implement classroom policies, neither can replicate the daily, interpersonal efforts involved in helping children calibrate trust, manage frustration, and prioritize authentic community interaction over synthetic convenience. The family functions as the final mediating institution precisely because it is the only social unit present when a child, alone in their private space, chooses whether to communicate with a friend, a parent, or an AI entity.[37]

This perspective requires a reframing of the concept of “good parenting.” Absolute prohibitions tend to be counterproductive, as they drive usage into secrecy and preclude opportunities for guided learning. Conversely, an entirely permissive approach risks normalizing artificial intimacy. A pragmatic middle ground includes active mediation complemented by explicit boundaries. Research on parental mediation in Argentina provides an illustrative example: the Kids Online Argentina executive summary reports an association between stronger parental mediation and reduced exposure to online risks among children and adolescents, with risk exposure at 29% for those reporting high adult presence, compared to 42% for those with minimal parental mediation. Although this finding indicates correlation rather than causation, it corresponds to broader literature supporting guided internet use.[38] The family cannot eliminate AI from children’s environments, but it can insist that synthetic interactions do not supplant fundamental human experiences such as disagreement, apology, and shared attention. This approach embodies a human capital strategy suited to the lived realities of 2026.[39]

7. Parenting Under Cognitive Asymmetry

Parenting in the AI era is characterized by cognitive asymmetry, where children often acquire proficiency with new interfaces rapidly—especially when they perceive interaction as playful—while adults tend to approach these technologies as utilitarian tools or potential hazards. This disparity is consequential because parental authority within the family partly rests on epistemic grounds. Parents not only enforce rules but also provide explanations and guidance. When children perceive AI systems as possessing superior knowledge to their parents, the latter’s explanatory authority is undermined. Data from the Internet Matters project concretely reflects this phenomenon; focus groups reveal instances where children assist parents in using AI chatbots, while in other cases, parents themselves rely on chatbots to support homework completion.[40] This should not be viewed as a failure but as a form of adaptation. However, it implies that parenting strategies cannot rely solely on prohibitions or on the assumption that parents are the most skilled users but must instead emphasize parents’ role as the primary arbiters of values—defining the nature of relationships, criteria for trust, and the efforts necessary to develop enduring competencies.[41]

The presence of cognitive asymmetry also translates into a tangible policy implication: parents require practical tools rather than didactic instruction. The prevailing discourse on “parental controls” often adopts language associated with screen time, treating AI as a channel that can be simply switched off. However, the distinctive challenge posed by AI is found in its dialogue-based and relational dimensions, thus calling for a fundamentally different array of controls. These may include mechanisms for age verification, introducing friction around sensitive topics, and offering transparency through summaries of interaction patterns that safeguard the child’s privacy without fully exposing their communications. Recent developments suggest movement in this direction.[31] For example, Reuters reported that OpenAI’s parental control features allow the limitation of certain functionalities and the reduction of exposure to sensitive content while continuing to respect teenagers' privacy by not sharing full transcripts. Similarly, Meta[42] has proposed additional controls enabling parents to disable private conversations between adolescents and AI personas on platforms such as Instagram and to block specific AI-generated personas, without resorting to comprehensive surveillance.[43] These early efforts are imperfect and reveal a fundamental trade-off central to AI family governance: insufficient transparency leaves parents uninformed, whereas excessive oversight may weaken trust and promote secrecy. Consequently, the optimal policy objective should be structured transparency—a dashboard presenting categories, duration, and risk indicators—complemented by family norms that encourage keeping critical discussions within interpersonal contexts.[44]

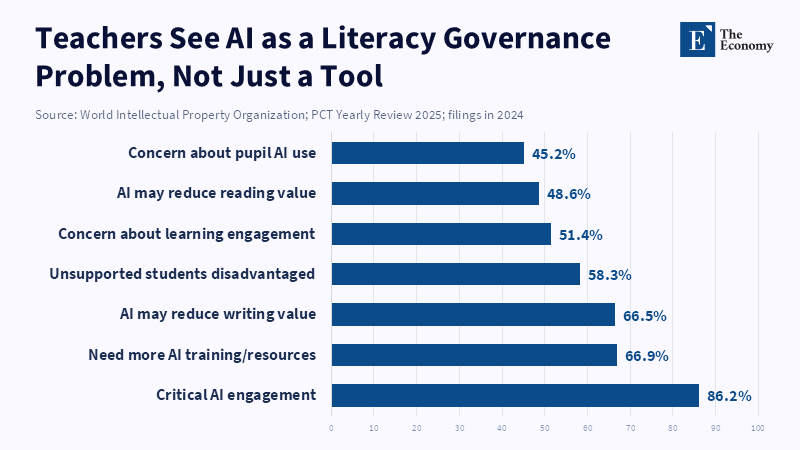

For educators and administrators, cognitive asymmetry underscores that “AI literacy” must encompass not only students but also parents. Schools should consider parents as collaborative learners in order to develop a common language. Evidence from the UK supports this need: approximately two-thirds of young people report using generative AI, while teachers express increasing concern regarding dependence on these technologies. The 2025 National Literacy Trust teacher survey found that over half of educators worry about generative AI’s impact on student engagement, with many apprehensive that it may diminish the perceived importance of writing skills. Although these perceptions do not constitute definitive outcomes, they serve as preliminary indicators of shifting educational incentives. [45] If priorities evolve toward favoring immediate results, both families and schools will confront the shared challenge of preserving labor-intensive processes essential for cultivating deep competence and social maturity. Meeting this challenge requires coordination rather than parallel or disjointed guidelines.[46]

8. Long-Term Social Implications

The long-term effects of AI on children will not show up as a problem with a simple label. They will show up as changes in what we think is normal. Children who grow up talking to machines without any social consequences may come to expect the same thing in school, at work, and in politics. In school, this could mean they struggle to work with others when things get tough. At work, they might be easily upset by disagreement and prefer talking to machines over talking to people. In politics, they might trust whoever tells the most convincing story rather than whoever is actually doing a good job. The risk is real because some of these skills already weaken as children get older. The OECD's survey of social and emotional skills found that self-reported skills such as trust, energy, and optimism are notably lower among 15-year-olds than among 10-year-olds, and that socioeconomically disadvantaged students report lower skill levels on average.[47] If children start using AI to cope with their feelings during this period, it could become even harder for them to get along with others, which would widen the gap for those who are already struggling.[48]

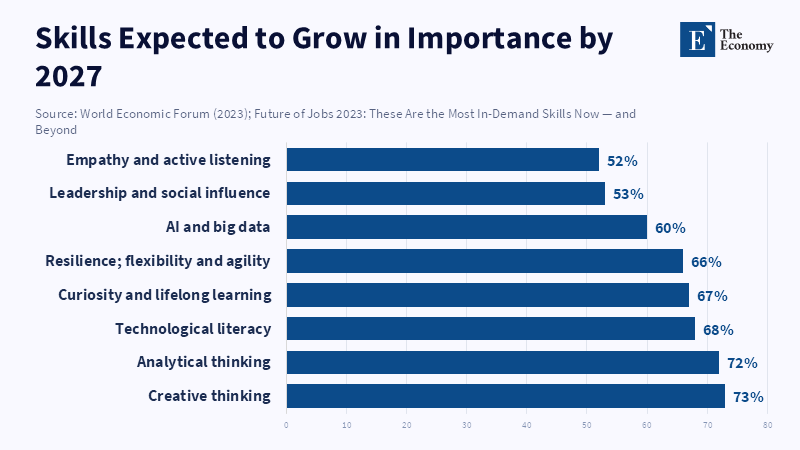

The effect on the economy is also important. Some people think that AI will make kids more productive and ready for the future. That might be true only for some tasks. What really matters is being able to work with others, deal with conflict, and build trust. The OECD says that these social skills are just as important as doing well in school.[49] Employers around the world agree. The World Economic Forum found that employers are looking for people with skills like leadership, curiosity, and the ability to work with others, not simply technical skills, and that they are worried not everyone will have access to the training they need.[50] This means that if kids grow up using AI and do not learn these skills, they might actually be at a disadvantage when it comes to getting a job.[51]

Some people might say that AI is a good thing because it can help kids learn. They might say that AI is like a tutor for every kid. They might also point out that people once worried about calculators, and calculators did not hurt math. These are fair points, but they are not the whole story. AI is different from calculators because it can talk to kids and pretend to be their friend. It can also try to keep kids engaged so that it can collect data. This means that AI is not just a tool; it is a part of kids' social life. If kids learn how to deal with people from a machine, they might learn the wrong lessons. The good news is that AI can still be helpful, but only if we make sure that it is designed and regulated in a way that helps kids learn how to interact with real people, not simply to prevent “bad content.”[52]

9. Parents, Schools, Authorities

The question of who should govern AI when it comes to kids is often framed as a two-sided debate: should parents be in charge, or should online platforms be? In reality, everyone has a role to play because each group controls different aspects. Parents control what is considered normal and how much time kids spend on AI. Schools control how kids are assessed and the environment they share with their peers. Platforms control what kids are exposed to by default, how safe the design is, and the affective tone of interactions. Policymakers set standards and decide who is accountable when things go wrong.

Today, the problem is that AI socialization is not well governed. AI designed for kids is coming onto the market fast through toys and friendly companions, faster than we can build safety guidelines. Evidence from consumer watchdogs and researchers suggests this gap is real. For example, a 2025 report by the US Public Interest Research Group tested AI toys and found that they could have disturbing conversations. This shows we need standards beyond just physical safety.[53] In 2026, researchers at the University of Cambridge[54] warned that AI toys for kids can misread emotions or respond badly. They suggested we need oversight and new safety markings focused on psychological safety, not just mechanical hazards.[55]

Policymakers are starting to respond, but not evenly. International frameworks are beginning to recognize kids' rights in AI governance. The Council of Europe’s Framework Convention on AI and human rights, which opened for signature in September 2024, treats AI governance as a human rights issue.[56] The ITU and UN also call for a child-rights-based approach to AI design and deployment.[57] UNESCO[58] continues to position AI ethics as a global standard-setting agenda, emphasizing human rights and human oversight.[59] These documents are important because they push for regulation across the AI lifecycle. However, there is still a gap: what standards should exist for AI systems designed to act like companions to minors?[60]

For educators, social development should be a curriculum goal, not something that happens on its own. This means designing group work and assessments that require kids to explain their thoughts to each other. Administrators should see AI not just as an academic issue but also as a student wellness issue, and they should connect AI use policies to well-being policies. In the UK, for instance, Internet Matters reports that two-thirds of children aged 9–17 say they have used an AI chatbot and that almost a quarter of children who have used a chatbot have used one to seek advice; it also reports high confidence in advice accuracy among children who use chatbots.[61] This is a signal for administrators to act. Policymakers should create a category for "child-directed relational AI." This would require age checks, safety evaluations, and audits to ensure these systems are designed responsibly. The goal is not to ban AI but to govern it in proportion to its risks. Systems designed to simulate relationships have a greater impact than tools designed to solve equations.[62]

Conclusion — The Privatization of Social Development

A generation is gradually being conditioned to regard synthetic interaction as the most straightforward route through everyday experiences. The critical question extends beyond whether AI can provide answers; it concerns whether AI might supplant the social practices fundamental to building trust and resilience. Emerging data indicate that young people are rapidly adopting AI technologies, increasingly engaging with AI companions for social interaction, and placing significant trust in advice offered by AI systems.[52] Concurrently, this period has witnessed initial regulatory and product-level responses, such as parental controls, proposed prohibitions, and demands for more rigorous standards, which reflect the fact that issues of safety and developmental impact have moved past theoretical concerns.[63] Over time, these developments could alter the composition of human capital, leading to diminished interpersonal abilities in some individuals, altered mechanisms of trust in many, and growing disparities as familial resources assume a more critical buffering role. Policy responses should therefore adopt a clear objective: to ensure that children’s social development stays anchored in authentic human reciprocity. Achieving this goal necessitates governing frameworks centered on the needs of children, educational models that reintegrate social practice, and parental tools designed to facilitate guidance without transforming family dynamics into mechanisms of surveillance. In the context of AI’s increasing prominence, the most prudent investment does not lie in accelerating information retrieval but in cultivating more resilient and capable individuals.[64]


References

[1] [9] [11] [12] [52] [60] UNICEF Innocenti (2025) Guidance on AI and Children 3. Florence: UNICEF Innocenti – Global Office of Research and Foresight.

[2] [49] OECD (n.d.) Survey on Social and Emotional Skills (SSES). Paris: Organisation for Economic Co-operation and Development.

[3] [8] Common Sense Media (2025) The 2025 Common Sense Census: Media Use by Kids Zero to Eight. San Francisco, CA: Common Sense Media.

[4] [5] National Literacy Trust (2025) Young People and Teachers’ Use of Generative AI to Support Literacy in 2025. London: National Literacy Trust.

[6] Ofcom (2023) Online Nation 2023 Report. London: Office of Communications.

[7] [46] National Literacy Trust (2025) Young People’s Use of AI to Support Literacy in 2025. London: National Literacy Trust.

[10] [26] [35] [36] [37] [40] [41] [48] [61] [62] [64] [65] Internet Matters (2025) Me, Myself & AI: Understanding and Safeguarding Children’s Use of AI Chatbots. London: Internet Matters.

[13] [14] [15] [18] [19] [28] Common Sense Media (2025) Talk, Trust, and Trade-Offs: How and Why Teens Use AI Companions. San Francisco, CA: Common Sense Media.

[16] [17] [23] [24] [38] [39] [54] UNICEF Argentina (2025) Children and Adolescents Connected: Executive Summary. Buenos Aires: UNICEF Argentina.

[20] [21] [22] [47] OECD (2024) Social and Emotional Skills for Better Lives: Findings from the OECD Survey on Social and Emotional Skills 2023. Paris: OECD Publishing. doi:10.1787/35ca7b7c-en.

[25] [27] [34] Ofcom (2025) Children and Parents: Media Use and Attitudes Report 2025. London: Office of Communications.

[29] RAND (2025) AI Chatbots Inconsistent in Answering Questions About Suicide. Santa Monica, CA: RAND.

[30] [43] [44] Reuters (2025) ‘Meta to give teen parents more control after criticism over flirty AI chatbots’, 17 October.

[31] [63] Reuters (2025) ‘OpenAI launches parental controls in ChatGPT after California teen’s suicide’, 29 September.

[32] Suskind, D. (2025) ‘The hidden danger inside AI toys for kids’, Time.

[33] OECD (2024) ‘5-day hikikomori intervention – Japan’, in OECD Youth Policy Toolkit. Paris: OECD.

[42] [59] UNESCO (2021) Recommendation on the Ethics of Artificial Intelligence. Paris: United Nations Educational, Scientific and Cultural Organization.

[45] National Literacy Trust (2025) Teachers’ Use of AI to Support Literacy in 2025. London: National Literacy Trust.

[50] [51] [58] World Economic Forum (2023) The Future of Jobs Report 2023. Geneva: World Economic Forum.

[53] Public Interest Research Group Education Fund (2025) Trouble in Toyland 2025: AI Bots and Toxics Represent Hidden Dangers. Boston, MA: PIRG Education Fund.

[55] University of Cambridge (2026) ‘Report calls for AI toy safety standards to protect young children’. Cambridge: University of Cambridge. Linked report DOI: 10.17863/CAM.126270.

[56] Council of Europe (2024) Framework Convention on Artificial Intelligence and Human Rights, Democracy and the Rule of Law. Strasbourg: Council of Europe.

[57] International Telecommunication Union et al. (2025) Joint Statement on Artificial Intelligence and the Rights of the Child. Geneva: International Telecommunication Union. Handle: 11.1002/pub/828b0aec-en.