Beyond the Prompt: Domain Knowledge, AI Competence, and the Rise of Super-Human Labor


1 The Economy Research, 71 Lower Baggot Street, Dublin 2, Co. Dublin, D02 P593, Ireland

2 Swiss Institute of Artificial Intelligence, Chaltenbodenstrasse 26, 8834 Schindellegi, Schwyz, Switzerland

The discussion of artificial intelligence remains overly focused on tool literacy rather than on the knowledge that makes tools productive. This paper argues that the most salient new dividing line of the AI era is not between haves and have-nots, but between people who can steer AI through knowledge, judgment, and conceptual scope and those who cannot. In this reading, AI is not a generic productivity-enhancing instrument but a structural change that transforms how human capital is built and compensated, how organizations and institutions compete and adapt, and how workers are ranked within the labor market. The analysis defends three claims. First, domain expertise remains more fundamental than AI tool literacy, because generative models enhance prior knowledge rather than make it obsolete. Second, AI competence is becoming a basic qualification at the top of organizations, because strategic decision-making is increasingly mediated by how well one can frame, evaluate, and operate AI-enabled solutions. Third, at the level of labor, the more dramatic development is the emergence of a new type of worker, someone who combines deep substantive knowledge, cross-domain knowledge, and AI fluency, which increases the leverage of what any single worker can achieve. Ultimately, I conclude that the most pressing challenge for policy in the AI age is not the proliferation of access to AI systems, but rather the maintenance and expansion of those forms of knowledge, judgment, and organizational structure that enable human capital development rather than further deepening existing inequalities.

1. Introduction - Reframing AI Competence and Human Capital

Current debates about artificial intelligence focus on the wrong questions. Instead of asking whether people must learn to code, master prompting, or constantly keep up with every new gadget to remain relevant, we should ask who possesses the judgment to control these tools. The difference is no longer between those with and without AI tools, but between those capable of controlling them and those who are not. The real issue does not lie with the tool itself, but in the knowledge, conceptual vision and experience required to make the tool function usefully. AI can accelerate execution, but only a well-trained intellect can accurately define the problem, evaluate answers, and distinguish the useful from the plausible, something the tool alone cannot provide.

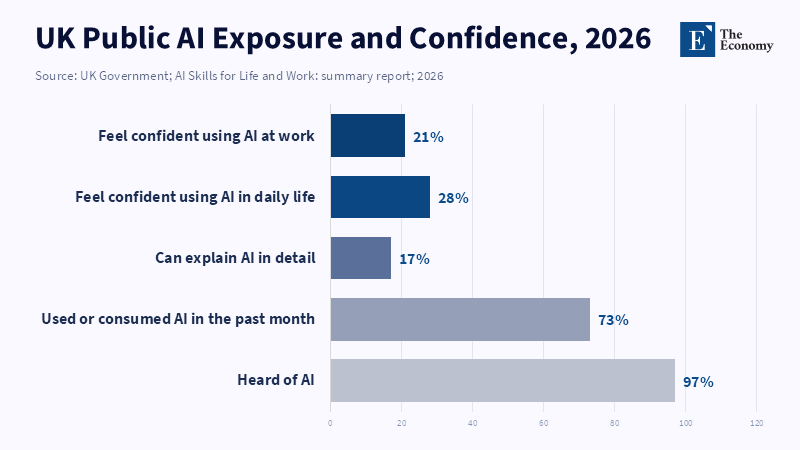

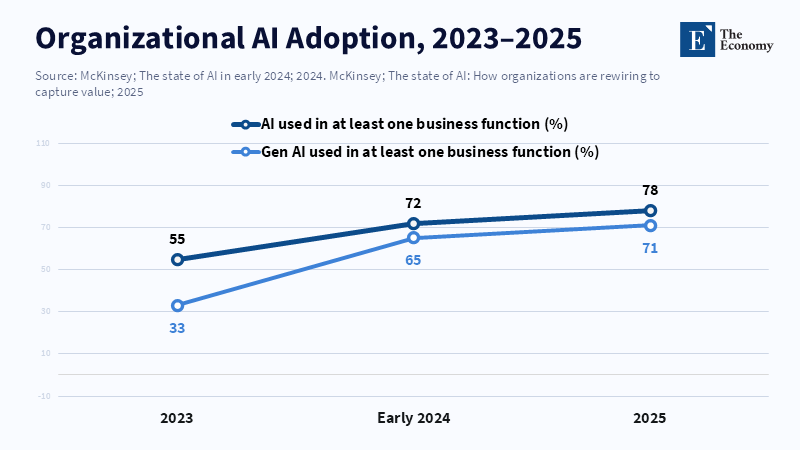

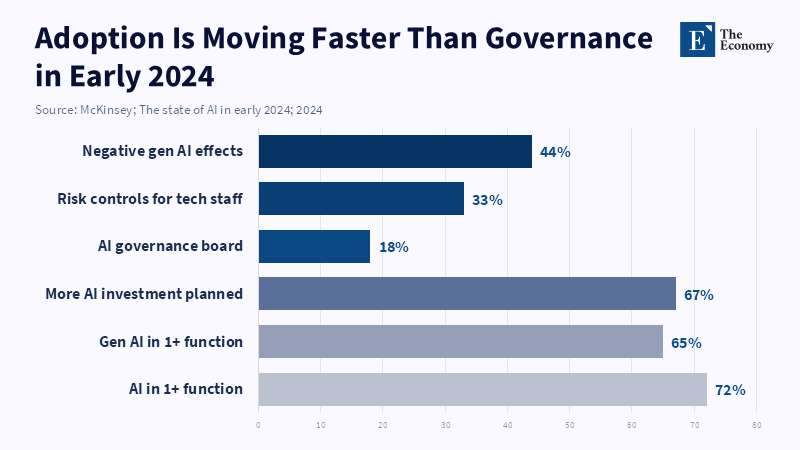

This observation is more urgent in the period 2023–26, as the spread of generative AI throughout classrooms, workplaces and administrations has significantly outpaced the updates to corporate standards and policies.[1] Essays, code and summaries can now be produced by students in mere seconds; employees can automate drafting, research, and routine analysis at a speed which seemed inconceivable just a few years prior. Nonetheless, the swift rise in output quality has instilled a flawed perception of competence: familiarity with an AI interface or quickly generated polished responses is now widely considered expertise in itself; this is not true. The artifact is being mistaken for a capability.

The practical problem here is that, instead of eliminating the need for domain knowledge, AI often makes it more critical. A non-specialist (a non-lawyer, non-financier, non-scientist or non-educator) and a trained specialist can receive equally fluent output from a given AI system, but they are not performing the same task. One is retrieving language; the other is wielding it with judgment as a novel instrument. This is also why tool literacy does not easily substitute for deep domain knowledge: in serious tasks, the essential activity is not writing prose or operating an interface but determining relevance, identifying errors, analyzing trade-offs and understanding what the model has left out.[2]

At the same time, it would be wrong to defend domain expertise staunchly while dismissing AI competency as subordinate or supplemental. Doing so underestimates the rapid spread of AI literacy as a baseline requirement in institutions. Teachers who fail to integrate AI create tests that can no longer ascertain learning; managers who fail to recognize its functional implications are likely to make ill-considered business decisions and to misjudge value. The emerging labor market does not reward knowledge alone or tools alone. It increasingly rewards the union of both.[3]

This report asserts that the more vital development is not a zero-sum battle between domain expertise and AI skills, but rather the emergence of a worker that merges significant substantive knowledge with the capacity to leverage AI in a variety of tasks and scenarios. Given AI's ability to compress execution time, the reward will now likely shift toward higher-order skills like formulation, synthesis, validation, judgment and cross-domain flexibility. That shift privileges a new class of labor adept at performing more with less working time, and more across more domains. The following sections develop this argument in the form of three claims: that domain expertise will likely remain more foundational than tool literacy, that AI competence is becoming increasingly important at the executive levels of institutional decision-making, and that at the level of the workforce, AI is advancing a new kind of work. The real question then is not which is more important, tools or knowledge, but how AI is altering the relative value of both, and how this new equilibrium will remake education, management, and the labor market.

2. Domain Knowledge Over AI Tool Literacy

First and foremost, tools themselves cannot impart expertise. At first glance, the idea that one need only learn a few prompts or a few lines of code to turn AI into an "on-demand expert" seems believable, but it is contradicted by logic and data alike. As Michael Lokshin, writing for Brookings, puts it, we have been wrong all along about the bottleneck to productivity: it was never the keyboard but "the knowledge behind it". Put simply, a polished AI front-end used without any grasp of the underlying content is like an English translator with no sense of legal drafting: the words will get across, but the content will be meaningless. AI tools must be steered by human understanding of what the appropriate question is and whether the answer the tool produces is accurate. "Prompt engineering" is good for creating instant novelty, but it is no shortcut to good judgment or to field-specific reasoning.

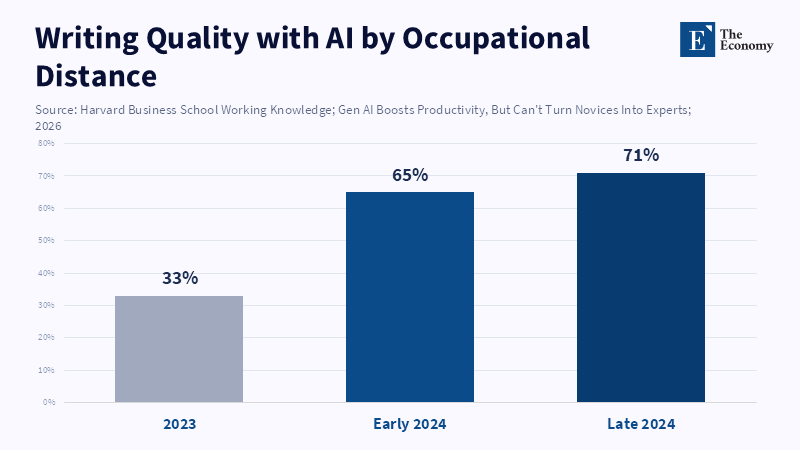

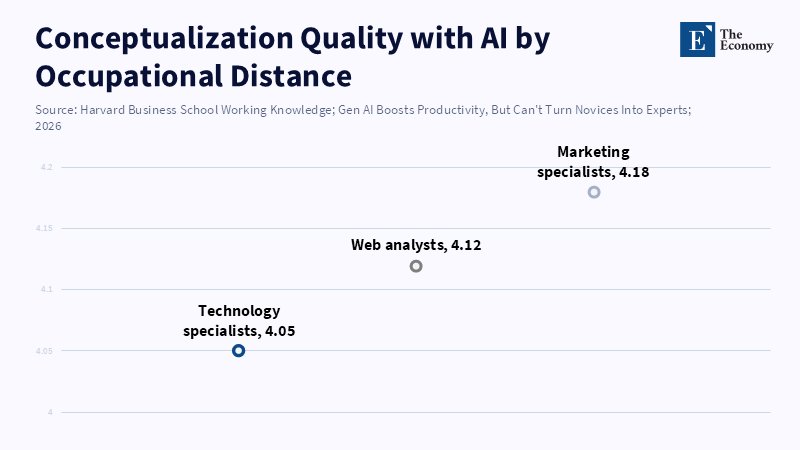

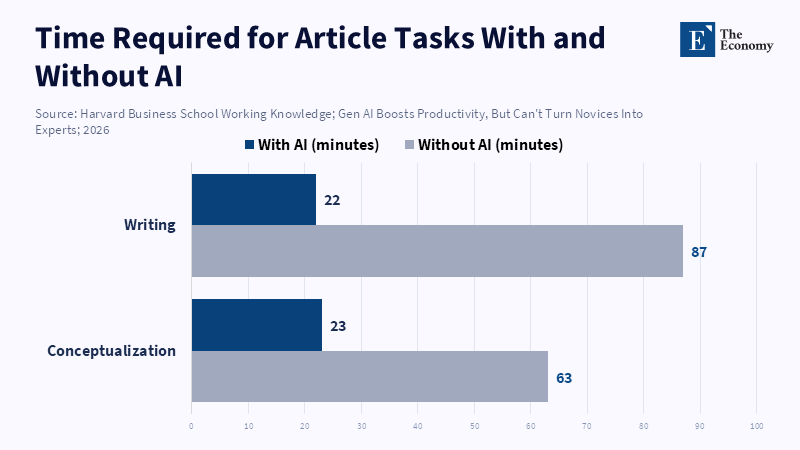

This has also been borne out empirically. In one controlled study at a financial firm, employees in marketing and engineering were assigned the task of writing investment articles using generative AI, even though the participants had limited pre-existing domain knowledge.[4] Although all participants were able to use AI to organize ideas, there was still a large difference between participants with and without domain knowledge. According to The AI Proficiency Report, while AI tools allowed employees to take on tasks outside of their usual areas, helping marketing specialists and web analysts to produce articles, those with less experience in writing, such as IT specialists, did not achieve the same level of clarity and competence in their work.[5] The report notes that most employees use AI for elementary tasks, showing that while AI enables workers to stretch beyond their usual roles, it does not turn novices into experts. As an author of one study states, generative AI "essentially hits a wall when people lack sufficient expertise".[6] In essence, when a technically proficient employee without writing or finance knowledge uses AI, the result will not be as good as what a marketer with domain expertise produces with the same tool.

Put differently, AI is best used for idea generation rather than for production.[7] According to a report published on ScienceDirect, while AI adoption has brought recognized benefits in productivity and automation, 74 percent of participants showed mixed or negative sentiments, often worrying about job security and data privacy. From this, we see that AI is good for boosting the human capacity for thought rather than replacing it. Marketing specialists can "find the information they need more efficiently and use it to fill the gaps"[8] with their knowledge and the help of AI, whereas others are "lacking either the knowledge base or the skills required for effective use".[8] Simply put, according to Bojinov et al. at Harvard Business School, if you already possess domain knowledge, AI can "lift it up to near-expert performance"; otherwise, the "effects are largely superficial".[9]

This phenomenon has been seen across multiple domains.[10] In education, AI can support personalized learning, identify at-risk students and provide summaries of material, but teachers still need to understand and build on AI outputs. Doctors can use AI to process hundreds or thousands of radiology images every hour, but medical diagnosis depends on their medical expertise. A banker can use AI to generate analyses of market patterns, and through professional expertise these analyses can be translated into sound investment advice. Vendor claims about AI "tripling productivity" often overlook actual research findings. According to a study reported by CNBC, tech support agents using AI tools saw a 14% average productivity boost, with even greater gains for less experienced workers, who completed tasks 35% faster. Crudely interpreted, a tripling of productivity would imply that one needs only one-third of the workforce, but as Brent Dykes states for Forbes, this "seductive equation", which seems to imply a two-thirds reduction in staffing needs, is misleading.[11] According to Harvard Business School researchers, while generative AI increases productivity, it does not replace the expertise needed to create high-quality work; AI-generated outputs often still require substantial editing by subject matter experts to ensure accuracy and usefulness.[12]
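
The staffing arithmetic behind the "tripling" claim can be made explicit with a back-of-the-envelope calculation. This is only an illustrative sketch. It assumes that total output is held fixed and that every worker receives the same uniform gain. The symbols $g$ (fractional productivity gain), $N$ (current headcount) and $N'$ (implied headcount) are introduced here for illustration and do not come from the cited studies.

```latex
\[
N' = \frac{N}{1+g}
\qquad\Longrightarrow\qquad
\frac{N'}{N} =
\begin{cases}
\dfrac{1}{1+2} = \dfrac{1}{3} \approx 0.33
  & \text{if productivity triples } (g = 2),\\[2ex]
\dfrac{1}{1+0.14} \approx 0.88
  & \text{if productivity rises by } 14\% \ (g = 0.14).
\end{cases}
\]
```

Under these assumptions, the "tripling" claim corresponds to a two-thirds cut in headcount, whereas the reported 14% average gain corresponds to a reduction of only about 12%. Even that naive figure leaves out the expert review and editing that AI-generated output still requires, so it is best read as a rough illustration rather than a staffing estimate.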

According to McKinsey & Company, while AI is rapidly changing the way people work and can assist with many tasks, it cannot fully replace the unique decision-making and interpretive abilities of humans.[13] As a result, the most effective outcomes are achieved when human knowledge is enhanced by AI tools, rather than relying on AI alone.[14] Although AI can take over some tasks, such as research or simple writing and data processing, it cannot automate the critical cognitive tasks of interpretation and judgment.[15] It follows, then, that simple technical know-how, such as typing prompt inputs and running models, is no longer a key differentiator.[16] The aforementioned Brookings piece concludes that "the challenge is in creating the knowledge, resources and incentives to obtain accurate information", that is, the T-shaped or field-specific skills that AI alone cannot spontaneously generate. Our first key takeaway, therefore, is that human capital is fundamental; what actually matters is the knowledge behind the tool. Using a tool without subject-matter expertise will be inefficient, whereas using it after acquiring subject-matter mastery, such as command of a mathematical theorem or a technique of literary analysis, will produce far better results.

3. AI Competence is Increasingly Becoming a Requirement at Top Levels

Just as AI's value at the task level depends on specialized knowledge, its value at the organizational level depends on the competence of those at the top. Awareness of this trend is rapidly propagating up through corporate and institutional hierarchies. Generative AI was on the C-suite's agenda in 2023: a quarter of C-suite executives had used generative AI personally at work, and over a quarter of organizations had placed the topic of AI strategy on their board's agenda.[17] Senior leaders now recognize that, in an AI-saturated economy, their roles will also require new skill sets, along with the support of expert teams.

Consequently, AI competence is moving from being a niche skill to an executive one. Several authors, including McKinsey, have suggested that digital transformation successes will largely be led by "domain leaders": middle managers who have deep business knowledge in their industry, but also an "AI muscle". Such people know their organization's problems and their customers' needs (as C-level individuals know), but also have a sufficient level of technical skill to develop AI-based solutions. It is as if they are "bilingual" in the business and AI worlds, capable of translating strategic targets into data science roadmaps and AI results back into business terms.

Unfortunately, many currently lack these skills. According to LinkedIn News, three times more C-suite executives around the world are now adding AI skills such as prompt engineering and working with generative AI tools to their profiles compared to two years ago, suggesting that many Fortune 500 leaders are actively developing AI expertise on the job. This skills gap poses a significant risk to strategic decision-making, where proper application of AI is critical. Many of the aforementioned Fortune 500 organizations report that more "tech-capable" domain owners are perhaps the most important factor in an organization's successful use of AI. As McKinsey puts it, the real competitive advantage with AI comes from having business leaders who are able to bridge business problems with what the technology provides; without them, investments can stall in the proof-of-concept stage.

Other research confirms this trend. In its 2023 AI survey, McKinsey reported that organizations already implementing AI predict major workforce changes: many foresee job reductions in some areas, and significant up-skilling programs in others.[18] This reflects a recognition that work is being radically altered. It is especially clear that knowledge-based sectors like finance, health care, and education will be more disrupted than traditional industries like manufacturing.[18] Those leading innovation in the use of AI also appear to be the ones able to see its possibilities and adjust business strategy accordingly.[19] In short, for C-level leaders, blending AI knowledge with domain strategy is fast becoming critical for business success.

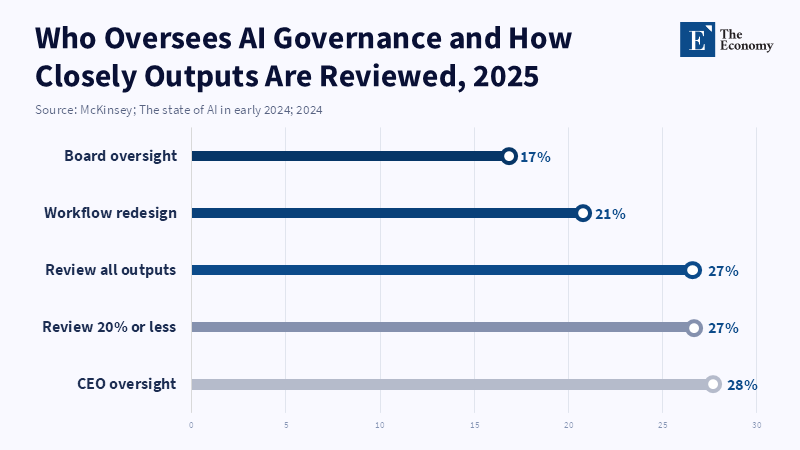

However, organizations vary in their readiness. While many boards are developing AI roadmaps, few organizations have defined AI governance procedures. Only one in five firms had any type of policy related to generative AI use by employees in 2023, according to McKinsey.[20] This implies that many organizations are keen to develop AI capabilities, but have not put necessary limits or guidelines in place. Such a mismatch is typical: leaders know AI is important, but organizations have been slow in adapting. For administrators and decision-makers, the conclusion is clear: organizations must build AI skills across all levels of leadership, both by providing training (more than just an introductory overview) and by embedding AI literacy in executive curricula. This will allow leaders to become "bilingual" in their fields, instead of tripping over technical impediments. Accountability of senior leaders to develop these skills within their teams must also be reinforced. The data clearly show that "AI-ignorant" leaders risk misguided strategy or an underestimation of the coming labor disruption, because as stated previously, "having these domain owners [with AI skills] is probably the single most key role any business needs for its AI transformations".

4. At the Working Level: The Emergence of Super-Human Labor

At the operational or entry level, the trends of AI integration look a bit different. This is where super-human laborers (SHLs), which we define here as individuals who have breadth across a number of domains coupled with a strong ability to utilize AI tools, are likely to appear. While top executives use AI to transform business lines, these individuals use AI to amplify personal productivity. SHLs with several specializations are able to absorb work from many different roles, while having AI handle the simpler tasks. SHLs are, in effect, T-shaped individuals amplified by AI.

The T-shaped professional model itself is once again in the spotlight. The traditional definition of a T-shaped professional is someone with great skill in a single domain (the vertical bar) combined with general knowledge across a broad number of other domains (the horizontal bar).[21] These days, breadth is more important than ever, and the T-shaped model will be used in conjunction with AI. While specialization in a single domain offers leverage in that area, familiarity with other domains allows workers to operate in related areas (HBS showed that transfer across similar domains occurred with ease via AI, but transfer between distant domains did not).[22] Although AI is highly capable at systematic tasks within a given domain, the ability to "connect the dots" and come up with novel solutions remains an important contribution of the human worker. The SHL will be someone who not only masters a single domain but can, with help from AI, function within other problem domains, from marketing to basic programming to data analysis. A worker in finance with general software skills might use AI to generate reports, analyze marketing data or even draft legal forms. This is like the old polymath concept, except that AI greatly reduces learning time.

Evidence shows this effect clearly. A study by IG Group comparing experts and novices indicated that AI made roughly equivalent gains at the stage of ideation, where both beginner and expert workers (ranging from web analysts to software engineers) were able to generate an article outline to similar levels of quality when aided by AI.[23] According to a review published in 2023, experts tend to focus more on the relevant aspects of a task, such as identifying important information in graphs, which enables them to complete tasks at a higher quality level compared to non-experts. This suggests that successfully completing complex, ideational tasks may require both support in conceptualizing the problem and specific skills for executing solutions. An SHL, or an AI-empowered T-shaped worker, can perform this bridging because they are experts in one domain (thus having mental models of how problems and information are structured within that domain, like engineers understanding information flow), but can apply this context to other domains because of cultural knowledge. In contrast, a narrowly specialized individual dropped into another domain would lack context even when supported by AI.

Taken systematically, the emergence of SHLs will lead to a stratified workforce. Worker skills are becoming valuable to the extent that they allow workers to leverage AI to achieve greatly increased output. In practical terms, an SHL will be able to take work off colleagues' hands across many projects by using AI to perform the menial tasks. These workers will also need to be highly adaptive and will often outperform their colleagues, which is one way businesses already distinguish "AI high-performers" and teams who use AI effectively. The McKinsey survey cited above likewise suggests that workforces will be impacted significantly, with job reductions in some areas and reskilling in others. The job reductions are likely to come from narrow specialists unable to operate under this new model, and the reskilling will focus on increasing the number of SHLs.

Socially, SHLs could further reduce hierarchy by increasing the scope of what one person can accomplish. This will not be a move towards pure automation in which workers are eliminated, but a change in how many different, narrowly specialized tasks one versatile worker can do with the assistance of AI. While this raises both the potential and the compensation of SHLs, others may be left behind. Those who cannot use the technology, or those with only limited expertise in a single domain, could be in jeopardy. Just as a good calculator user still requires knowledge of math, an AI-competent SHL will require solid underlying skills.

In essence, success at the working level of the AI transition is driven not by isolated specialists but by interdisciplinary teams and versatile individuals. These individuals may be motivated by a heightened "learning incentive", knowing that a secondary skill (like data literacy for a finance worker) will yield great returns in combination with AI.[24] The "super-human laborer" can thus be understood as an AI-powered worker whose leverage comes from the T-shaped profile.[25] This can be seen as a third path: in addition to AI tool novices and domain experts, there are AI-enhanced T-shaped specialists. The synergy between instruments and insight is what deserves emphasis. A UK trainer suggested that "people bringing real domain knowledge and adding serious technical capability" represents a "powerful combination" of skills.[26]

There are obvious tradeoffs as well. Automating certain tasks could disincentivize mastery of the underlying technical skills among many employees. It is plausible that over the next decade, organizations will favor those with diverse secondary skills over specialists. The result could be increasing workforce polarization between well-paid SHLs and routine workers who perform maintenance tasks. Curriculum development and training should reflect these trends: students must be taught decision-making skills and breadth, not just mastery of a narrow field or a short AI primer. We will return to the implications later.

5. Conclusion - Human Capital in the Age of AI

It is tempting to think that the emergence of AI calls for simple fixes: learn to code, use a new app, or treat AI as another neutral tweak to productivity. These views are incomplete, however. AI is a complement to human capital, and ultimately those with strong, robust and flexible knowledge and skills will come out ahead. The simplistic view that AI is merely a replacement for a typewriter or search engine is deeply misleading; AI represents a structural change.[27] We have seen that inexperienced users capture only partial benefits from AI, whereas domain experts with broader and more adaptable skills can attain expert-level performance, or even outperform traditional experts, when using AI. As such, AI will favor T-shaped individuals who can both learn the underlying scientific and ethical principles and use AI in an appropriate way.

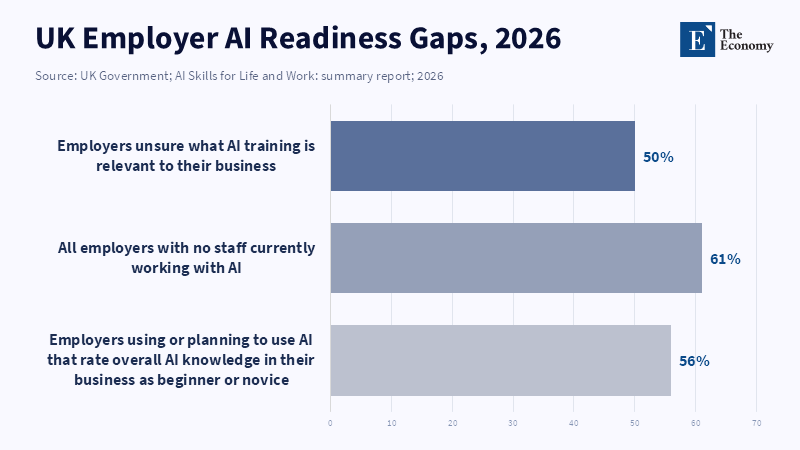

There are several pragmatic consequences for action. For educators, it implies doubling down on fundamentals and conceptual rigor. Educators have to emphasize building scientific, mathematical, ethical, and communicative foundations that cannot be substituted by any tool, and also encourage the questioning and verification of AI outputs. Instead of rote, fact-based assessments, teachers must emphasize projects, discussion, and creative problem-solving that require critical human thought. For administrators, it means creating explicit policies and support systems.[28] Schools and universities should create explicit policies for the acceptable use of AI in learning and assessment, and implement broad AI training for all instructors that focuses on domain pedagogy rather than tool literacy.[29] They should also invest in institutional-level support rather than relying on individual faculty members to get up to speed. For policymakers and workforce developers, it means we must continue to invest in lifelong learning and implement "AI apprenticeships", similar to those implemented in the UK.[30] Simultaneously, we must refocus on solid fundamentals in basic education to prepare youth for an AI-enhanced future.[31] Labor-market strategies have to prioritize interdisciplinarity and also develop credentials or recognition mechanisms for broadly applicable human skills rather than narrow specializations.[32]

Fundamentally, our assertion that humans with specialized, yet flexible skills are necessary to realize AI's value must guide any action we take. There can be no presumption that AI will automatically democratize knowledge; instead, we need to recognize that any access to information provided by AI must be supplemented by the human capacity for context, ethics, and domain expertise. Thus, policymakers should emphasize the maintenance and promotion of these human characteristics and, practically speaking, support expert training, mentorship, secure careers that foster deep knowledge accumulation, together with strong training that centers on challenges of real-life problem-solving.[33]

The AI revolution will indeed reward a select few: those who build upon the new tool with depth. As we have argued, output and invention come not from the tool itself, but from the people who wield it. This is of great consequence: economies that embrace T-shaped skills and restructure their institutions accordingly can leverage AI for growth and for lessening inequality. Those that fail to recognize this may see productivity gains go exclusively to existing holders of human capital, or may even see stagnation.[34] Strengthening human capacity at all levels, so that it can synergize with AI, is the correct course of action, and it has the potential to turn this moment of disruption into an opportunity for a more productive, skilled, and balanced society.

References

[1] OECD (2023) OECD Skills Outlook 2023: Skills for a Resilient Green and Digital Transition. Paris: OECD Publishing. doi:10.1787/27452f29-en.

[2] Lokshin, M. (2026) ‘It was never the keyboard: Why domain knowledge, not digital skills, determines AI productivity’, Brookings, 3 April.

[3] [17] [18] [19] [20] McKinsey & Company (2023) ‘The state of AI in 2023: Generative AI’s breakout year’, McKinsey, 1 August.

[4] [5] [6] [7] [8] [9] [12] [22] [23] Rand, B. (2026) ‘Gen AI Boosts Productivity, But Can’t Turn Novices Into Experts’, Working Knowledge, 16 March.

[10] Tauqir, M. (2026) ‘AI Without Domain Knowledge: Creates Noise, Not Development’, Medium, 3 February.

[11] Dykes, B. (2026) ‘Why AI’s Productivity Promise Falls Apart Without Human Expertise’, Forbes, 27 January.

[13] [14] [15] [16] Maor, D., Lamarre, E. and Smaje, K. (2025) ‘Building the AI muscle of your business leaders’, McKinsey, 1 December.

[21] [25] Moghaddam, Y., Bess, C., Demirkan, H. and Spohrer, J. (2016) ‘T-Shaped: The New Breed of IT Professional’, Cutter Consortium, 26 September.

[24] [26] Skills England, McFadden, P., Smith of Malvern, J. and Narayan, K. (2026) ‘AI apprenticeship to close digital skills gap holding back millions of workers’, GOV.UK, 17 March.

[27] Lalljee, J. (2026) ‘AI boosts productivity for workers but could hurt them long-term, study finds’, Axios, 10 February.

[28] Johnson, L. (2025) ‘AI Fluency Is The New Literacy Test For Leaders’, Forbes, 2 October.

[29] Passlack, N., Hammerschmidt, T. and Posegga, O. (2026) ‘With Great Power Comes Great Responsibility: What Shapes AI Literacy for Responsible Interactions of Knowledge Workers With AI?’, Information Systems Frontiers, 28, pp. 11–46. doi:10.1007/s10796-025-10648-5.

[30] Green, A. (2024) ‘Artificial intelligence and the changing demand for skills in the labour market’, OECD Artificial Intelligence Papers, No. 14. Paris: OECD Publishing. doi:10.1787/88684e36-en.

[31] Dhaliwal, A. (2026) ‘Intelligence At Scale: How AI Is Reorganizing The Tech Workforce’, Forbes Business Council, 10 February.

[32] Umenyiora, L. (2025) ‘The Divided Demands of AI Literacy’, AACSB Insights, 22 December.

[33] Lassebie, J. (2023) ‘Skill needs and policies in the age of artificial intelligence’, in OECD Employment Outlook 2023: Artificial Intelligence and the Labour Market. Paris: OECD Publishing. doi:10.1787/08785bba-en.

[34] Park, J. and Kim, D. (2026) ‘Co-evolution of skill structure and labor market processes in US regions, 2003–2023’, Cities, 172, 106830. doi:10.1016/j.cities.2026.106830.