Reconstructing Labor in the Age of Zero Marginal Production


The Economy Research Editorial*

* Swiss Institute of Artificial Intelligence, Chaltenbodenstrasse 26, 8834 Schindellegi, Schwyz, Switzerland

Productivity has increased in certain sectors, yet the overall quality of working life has deteriorated visibly. The primary issue does not lie in widespread unemployment but in the progressive deterioration of job quality, occurring even as economies develop greater capacity to produce more output with reduced human labor input. This paper argues that artificial intelligence should be understood less as a mere tool and more as a structural influence that diminishes the economic necessity of human labor in numerous tasks, while simultaneously intensifying scarcity in areas that still demand human involvement. The pressing policy challenge is therefore not to preserve existing jobs per se, but to redesign the labor framework to redirect human effort toward domains such as care, education, and the maintenance of institutions, while also preventing AI-driven management from transforming increased production capacity into more intense and degraded forms of work.[1]

1. Introduction: The Paradox of Unemployed Growth

The paradox of growth accompanied by unemployment is already clear in the discrepancy between the measured rate of technological development and the ordinary experiences of workers. In 2024, global private investment in generative AI reached $33.9 billion, while total private AI investment in the United States was estimated at $109.1 billion. These data show a major shift in capital allocation toward machine inference as a factor of production, rather than merely marginal software improvement.[2] Nevertheless, this escalation in investment has not been matched by improvements in workers’ subjective experience of work. Measures of global engagement declined in 2024, and a substantial proportion of workers reported daily stress, challenging accounts that link innovation directly and mechanically to better job quality.[3] The paradox does not lie simply in the coexistence of innovation and worker hardship, which is a well-documented phenomenon, but in the timing: a technology promoted as delivering broad productivity gains emerges during a phase when many workers experience their jobs as increasingly surveilled, fragmented, and lacking dignity, despite headline job statistics remaining stable.[4]

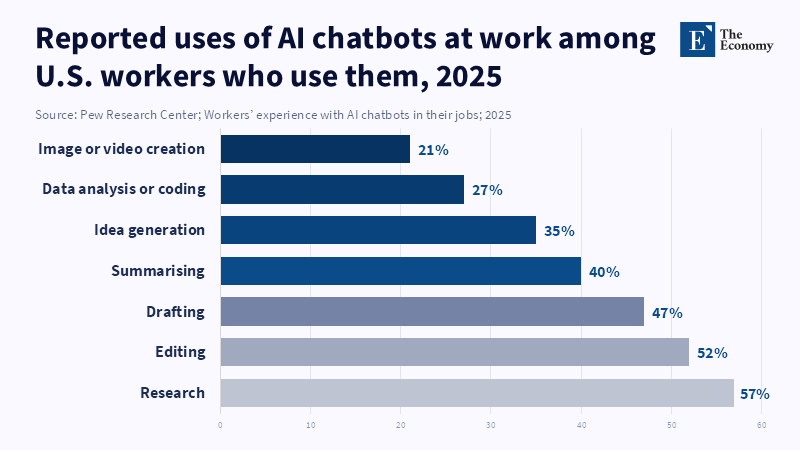

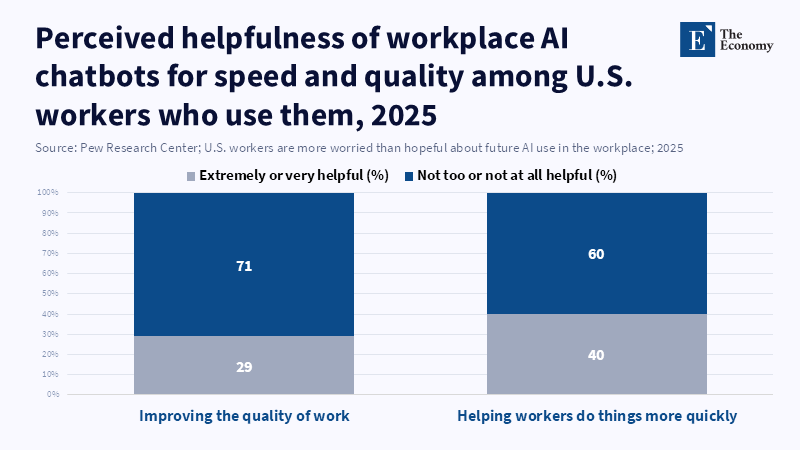

The traditional perspective portrays AI as a neutral force that boosts output per worker, implying that the main challenge is managing temporary displacement. This viewpoint, however, overlooks second-order effects on economic incentives and institutional arrangements. As marginal costs for producing certain cognitive outputs (such as drafting text, generating code, summarizing documents, and producing plausible analyses) approach zero, the economic roles of many white-collar positions change even if employment levels do not immediately reflect this shift. Empirical studies help illuminate this process. Access to generative AI assistants has been linked to substantial productivity gains in specific tasks, including reduced time requirements and improved quality in professional writing, as well as measurable performance enhancements in customer support contexts.[5] While these outcomes are often framed positively, they also signal a caution: as tasks become less costly, employers face a choice between redistributing the gains through greater autonomy, reduced hours, or higher pay, or appropriating the surplus by imposing stricter targets and increased oversight. Early institutional evidence suggests the latter approach prevails, since the digital infrastructure enabling cognitive automation simultaneously facilitates pervasive measurement, surveillance, and algorithmic management.[6]

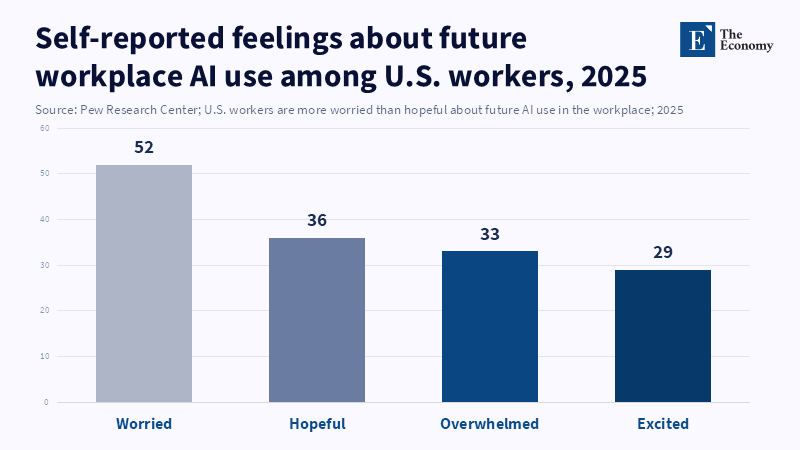

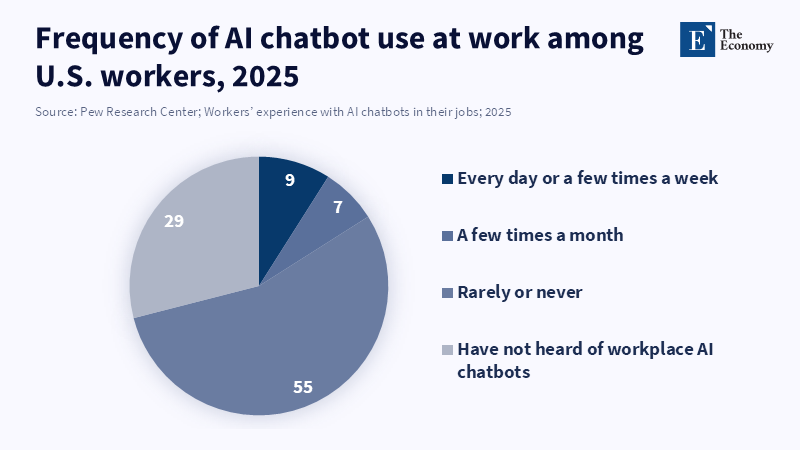

This intersection of factors underpins the heightened urgency seen between 2023 and 2026 compared to earlier waves of automation. Artificial intelligence is not only being integrated into production processes but also embedded within management practices. A broad cross-national survey of employers found algorithmic management tools to be widely adopted, especially in the United States and Europe, with some technologies extending to direct monitoring of employee communications.[7] Concurrently, the diffusion of AI across the workforce is uneven, creating a political economy in which a minority accrues clear benefits while a majority confronts new demands and risks without equal bargaining power. In the United States, by September 2025, approximately one in five workers reported using AI in their jobs, and overall sentiment skewed more toward apprehension than optimism about AI's future impact on work.[8] Thus, the institutional challenges do not revolve solely around the abstract concern of whether AI displaces jobs. Rather, they concern the extent to which societies allow AI to become an instrument that converts resource abundance into deteriorated labor conditions, and whether systems that develop human capital are restructured to direct labor toward areas where human contribution retains structural significance.[9]

2. The False Debate: Job Destruction vs. Job Creation

The debate between job destruction and job creation persists because employment statistics are relatively easy to quantify, whereas conditions within jobs are difficult to assess. However, the more pertinent question from a policy perspective is why productivity gains do not consistently result in reduced working hours, increased wages, or improved working conditions for the average worker. The wage channel appears to be under considerable strain. According to the International Labour Organization[10], real wages have shown only a fragile recovery following the inflation shock, with global real wage growth estimated at 1.8% in 2023 and projected at 2.7% in 2024. Simultaneously, significant wage inequality persists, and distributional patterns differ across countries' income levels.[11] Across the Organisation for Economic Co-operation and Development (OECD)[12], real wages are reported to be increasing broadly, yet they remain below early-2021 levels in approximately half of member countries. This illustrates that macroeconomic recovery does not necessarily translate into improved household economic security.[13] The introduction of artificial intelligence (AI) into this environment raises the concern that, even when genuine productivity improvements occur, social benefits may be limited, because the basic trade-off of working harder to achieve a better quality of life has already weakened.[14]

The dynamics of working hours offer supplementary insights, not because working time is invariably static, but because reductions in hours generally require deliberate policy steps and collective bargaining rather than arising spontaneously from productivity gains.[15] The attention garnered by recent initiatives, such as various trials and debates concerning a four-day workweek, underscores that such developments are exceptions rather than intrinsic outcomes of increased productivity. According to the OECD, AI can reduce the need for workers to perform dangerous or boring tasks and may improve job satisfaction, increase autonomy, and even improve mental health, suggesting that productivity gains from AI have the potential to benefit both output and work-life balance. That potential, however, is not realized automatically. While employment statistics might appear stable, lived experience frequently involves intensified workplaces and more actively managed workdays.[16]

Here, the analogy of “enshittification” gains analytical relevance beyond its initial provocativeness. In digital services, this term describes a trajectory from prioritizing user experience to focusing on monetization imperatives, culminating in a phase of degradation made possible by the limited alternatives available to captive users.[17] Employment systems may follow a comparable progression when workers encounter heightened insecurity. Within such a context, the immediate productivity benefits of AI can coincide with a deterioration in job quality. Cross-national data on algorithmic management exemplify this institutional shift, characterized by extensive adoption, increased workplace monitoring, and continuing issues related to accountability, transparency, and worker well-being.[7] Restricting the discussion to job counts focuses solely on destruction-versus-creation risks, overlooking the fundamental concern: the expansion of surplus labor capacity across numerous cognitive sectors, alongside governance shortcomings that permit this surplus to be leveraged to intensify and cheapen labor rather than enhance worker welfare.[18]

3. The Degradation of Work as a Pre-AI Condition

The deterioration of work prior to the adoption of AI should not be regarded as a marginal issue; rather, it constitutes the basic context within which AI operates. One productive approach is to conceptualize job quality as a quantifiable policy variable rather than a mere cultural grievance. The OECD’s framework on job quality distinguishes earnings, job security, and working conditions, highlighting factors such as job strain, which arises when demands exceed available resources like autonomy and support.[19] Concurrently, global surveys reveal that a considerable proportion of workers experience stress and withdrawal.[3] Although these phenomena are not directly attributable to AI in a narrow sense, they create conditions under which AI is more likely to exacerbate negative outcomes. In situations where work is already characterized by high strain, technologies that enable intensified monitoring and accelerated output cycles tend to increase this strain rather than reduce it.[16]

The decline in institutional mechanisms supporting worker bargaining power predates the advent of generative AI and helps explain why the benefits of productivity advances have not been broadly shared. Over recent decades, trade union membership has declined steadily across advanced economies; OECD data suggest union density has roughly halved since 1985, dropping from approximately 30% to around 15% by 2023/24.[20] This erosion is significant because historically, collective bargaining has been a key channel through which workers converted productivity gains into improvements in time, wages, or protections. As coverage of collective bargaining agreements diminishes, the default allocation of productivity gains tends to favor capital owners and top earners, thereby widening the gap between output growth and typical compensation levels.[21] In this environment, AI need not eliminate jobs to cause social stress. It suffices for AI to decrease the marginal value of numerous tasks more rapidly than institutions can renegotiate the conditions affecting job quality.[22]

Discussions of AI’s consequences often frame its entry into the workforce primarily as the automation of menial tasks; however, empirical evidence indicates that AI is frequently deployed alongside expanded managerial control systems. For instance, the 2025 OECD employer survey characterizes algorithmic management as software that automates functions traditionally performed by human managers and documents extensive implementation of such systems, including monitoring technologies.[7] Additional data from Europe show that algorithmic systems extend beyond platform-based work, with substantial proportions of workers reporting automated monitoring and algorithmic task assignment in various workplaces.[23] In platform work, often regarded as the most extreme case, the Eurofound–European Labour Authority survey reports continuous time tracking for approximately three-quarters of workers and comprehensive control mechanisms affecting a significant minority.[24] These developments are consequential insofar as they alter cognitive and motivational dynamics, encouraging workers to focus on conformity to performance measures, anticipate automated evaluation, and accept reduced autonomy as a prerequisite for maintaining employment. Over time, these pressures risk eroding faculties valued by society, such as judgment, initiative, and responsibility, because the prevailing system rewards adherence to machine-defined criteria rather than independent decision-making.[25]

4. Structural Misallocation of Human Labor

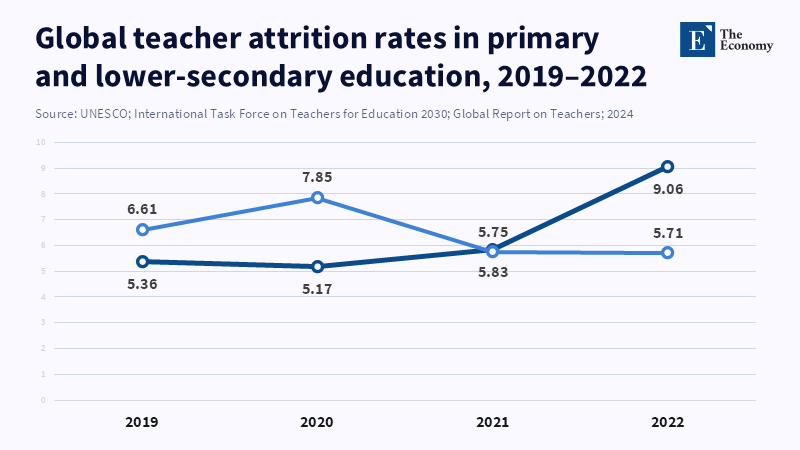

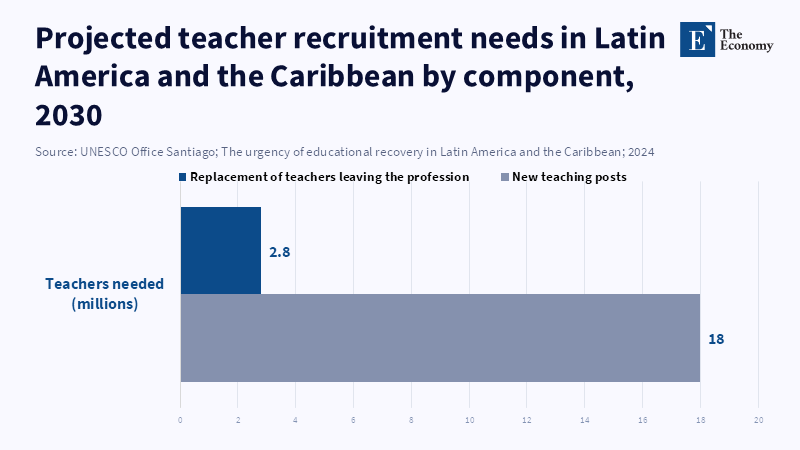

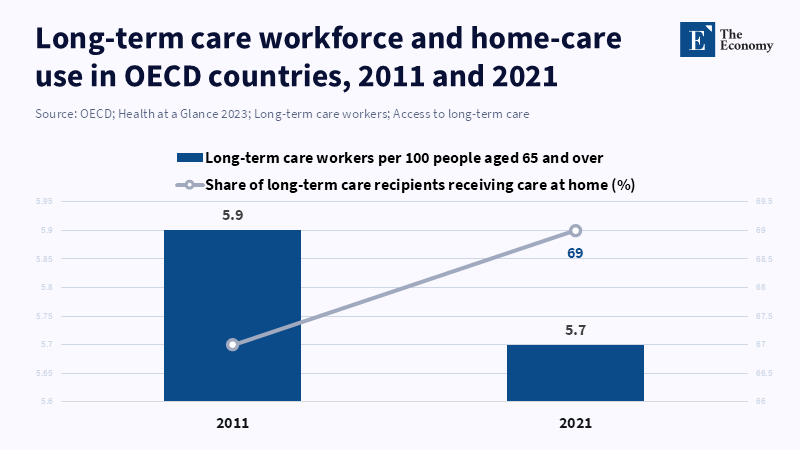

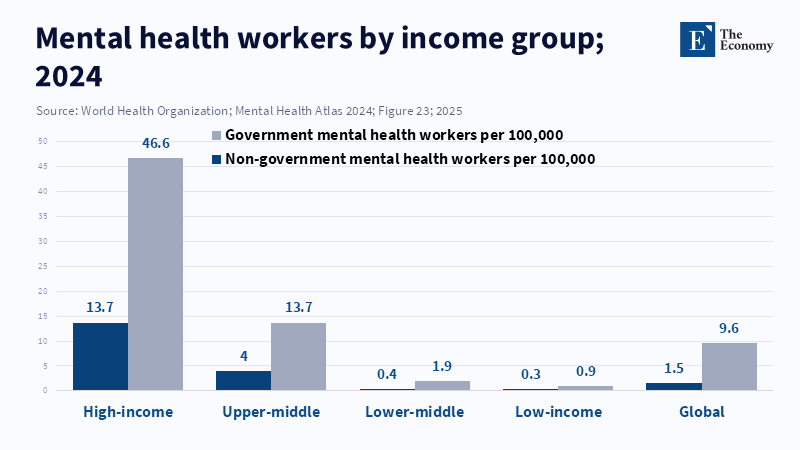

The structural misallocation of human labor is often regarded as a mild inefficiency. However, in the AI era it becomes an important fault line, because the sectors most amenable to AI integration do not correspond to those deemed socially essential. Education illustrates this mismatch. A leading global report projects a need for an additional 44 million primary and secondary teachers by 2030 to achieve universal schooling objectives, while simultaneously highlighting increasing attrition rates and deteriorating recruitment trends in many educational systems.[26] The social consequences of this deficit are tangible: larger class sizes, reduced course offerings, and diminished individualized attention are foreseeable outcomes, all of which directly impede human capital development.[27] Similar patterns of scarcity appear in healthcare, elder care, and mental health sectors. For instance, within OECD countries’ long-term care, wages for workers are reported to be approximately 20% below the economy-wide average, while the sector struggles with pronounced recruitment and retention challenges amid the growing demand driven by population ageing.[28] The World Health Organization further notes severe capacity constraints in mental health services, citing a global median of 13 mental health workers per 100,000 people, alongside ongoing underinvestment in corresponding budgets.[29] These domains are not readily amenable to AI-based scaling of human labor since their outputs fundamentally rely on relational interactions, in which quality is intricately linked to time and attention.[30]

Alongside this deficit is an overconcentration of talent in high-wage, high-status sectors whose marginal productivity is increasingly substitutable through AI technologies. This imbalance is evident even in straightforward wage comparisons: as of February 2026, average weekly earnings in the U.S. information sector stood at approximately $2,058, in financial activities at about $1,834, and in private education and health services at roughly $1,181. Such a wage structure incentivizes skilled labor to gravitate towards sectors with higher financial rewards rather than those with the greatest social need.[31] The argument is not that finance or technology lacks social utility, but rather that market prices systematically undervalue sectors such as care, education, and essential institutional functions, despite their importance for continued economic growth. AI’s capacity to lower marginal costs across multiple cognitive tasks may diminish demand for certain high-paying production-oriented roles; however, it does not intrinsically elevate compensation or status within care-related sectors. Absent deliberate policy steps, this trend is likely to produce an excess of labor in AI-exposed fields alongside persistent shortages in human-centered areas, a form of misallocation that is both economically inefficient and politically volatile.[32]

Such misallocation assumes further significance because it influences the “development ladder” of skills within organizations and professions. Traditionally, many white-collar career paths have depended on entry-level positions where novices engage in codifiable tasks while gradually acquiring tacit knowledge. As AI systems increasingly perform parts of these codifiable functions, firms are incentivized to reduce hiring at the entry level and to expect “AI-ready” outputs from fewer employees, thereby threatening the apprenticeship processes that are key to developing future experts.[33] Concurrently, education systems encounter pressures to integrate AI into learning environments, which may either enhance or undermine human capital depending on the regulatory framework in place. UNESCO’s recommendations on generative AI in education highlight the importance of human-centered policies focused on safeguarding agency, equity, and the fundamental objectives of learning—implicitly acknowledging that uncontrolled AI deployment could degrade educational quality.[34] The structural issue, therefore, goes beyond workforce mobility between sectors; it concerns the preservation of institutions responsible for skill formation and civic capacity development amid accelerating task commoditization induced by AI.[35]

5. The "People-First" System as Labor Reallocation

The concept of a People-First system in the context of labor reallocation entails moving beyond the unrealistic aim of preserving all existing jobs. As AI progressively reduces the labor intensity of various outputs, efforts to protect specific job categories often overlook the deterioration of working conditions within them, since firms maintain roles primarily by extracting greater productivity from a smaller workforce. A more viable goal is to secure individuals’ economic security and redirect their labor toward socially necessary functions that retain meaningful human elements. This approach is anchored by empirical evidence reflecting two concurrent realities: AI’s capacity to enhance task-level productivity throughout numerous cognitive areas, and ongoing societal shortages in sectors such as education, care, and mental health, which are not addressable solely through increased efficiency.[36] Consequently, a people-first strategy entails planned labor reallocation supported by institutional reforms designed to shift human effort away from marginal cognitive tasks and toward relational services and the maintenance of institutions.

This pragmatic framework further clarifies why the deterioration of work conditions, sometimes described as the enshittification of labor, is better understood as a failure of allocation rather than a failure of technology. Platform degradation seldom results from technological malfunction; rather, it stems from incentive structures that shift focus from user value to extraction.[17] Labor markets may react in similar ways. If AI enhances output per hour, an effective system might translate this into reduced working hours, improved staffing, and higher-quality services within education and healthcare. However, existing evidence concerning algorithmic management and associated worker stress suggests a different trend: intensification of labor through increased measurement and standardization, particularly where workers’ bargaining power is limited.[37] A people-first system manages these issues by reforming the underlying allocation institutions instead of adopting a moralistic stance on corporate behavior. It establishes explicit targets for staffing and service quality in essential sectors, raises compensation where chronic underpayment impedes recruitment, and restricts AI-driven surveillance and metric manipulation that reduce workers to mere inputs in performance dashboards rather than recognizing them as agents of service.[38]

5.1. Care Economy as the New Labor Core

Focusing on the care economy as a central axis for labor policy directly responds to areas where shortages remain acute. The rising need for long-term care presents a clear workforce challenge; for instance, the OECD estimates that the proportion of long-term care workers relative to total employment needs to increase by approximately 30% within the next decade, despite the often-low pay and difficult working conditions these roles entail.[39] Similarly, the teacher shortage in education is an immediate constraint on human capital development rather than a distant projection.[40] According to the Bureau of Health Workforce, capacity in fields such as teaching, nursing, allied health professions, and care or therapy is determined by workforce levels and budget priorities, which can lead to implicit limits on care.[29] These roles share an important feature that large-scale AI systems have difficulty matching: the ability to build trust and respond to complex, context-dependent human needs. Therefore, strategies for labor reallocation should treat these fields as fundamental components of macroeconomic infrastructure rather than marginal services, and they must facilitate transitions for workers displaced from highly AI-exposed sectors without inflicting prohibitive income losses or undermining quality standards.[41]

5.2. AI as a Complement, Not Substitute

The proposition that AI should serve as a complement instead of a substitute constitutes a deliberate institutional design choice rather than a mere slogan. Such a position requires enforcement because corporate incentives frequently favor using AI to reduce staffing levels, increase surveillance, and homogenize decision-making procedures.[7] Evidence suggests that AI integration is most effective when it alleviates bureaucratic burdens and repetitive tasks while leaving human actors responsible for judgment, relational functions, and accountability. This distinction is particularly important in realms like care and education, where service quality is difficult to restore once compromised, and metrics can be manipulated. The OECD’s survey on algorithmic management raises recurring concerns regarding accountability, explainability, and protection of worker health—issues that emerge primarily when AI systems are positioned as independent decision-makers rather than tools under human governance.[7] Accordingly, a people-first framework calls for concrete constraints: limiting management practices based on surveillance, ensuring workers’ rights to understand, contest, and rectify AI-mediated evaluations, and establishing procurement criteria that favor technologies enhancing autonomy and service quality instead of merely maximizing throughput.[42]

For educators, this perspective implies that curricula should frame AI not solely as a vehicle for skill acquisition but as a civic and cognitive system influencing learning processes and the evaluation of future professionals. UNESCO’s guidelines stress human-centered implementation and capacity development, which translates into creating assessment systems that reward reasoning, creativity, and judgment over polished presentation alone, as well as fostering students’ abilities to verify, critique, and contextualize machine-generated content.[34] For administrators, the implication pertains to governance: institutional policies should specify AI’s permissible roles (such as documentation, scheduling, and drafting) and identify tasks it cannot replace (including grading judgments and disciplinary or clinical decisions made without human supervision), complemented by auditable records and appeal mechanisms for AI-influenced results.[43] Policymakers, in turn, should orient labor strategies toward expanding capacity within care and education through adjusted pay scales, staffing norms, and professional training pathways, while regulating algorithmic management to prevent productivity improvements from translating into work degradation.[44]

6. Institutional Reconstruction

Institutional reconstruction is necessary because labor reallocation does not advance effectively through price mechanisms when legal, cultural, and credential-related barriers restrict mobility. While it may look straightforward to suggest that displaced engineers transition into teaching or that redundant analysts shift to caregiving roles, defining the conditions under which those moves are rational for individuals and preserve service quality is more complex. Teacher shortages, for instance, arise not only from inadequate recruitment but also from challenges related to retention and working conditions; these issues cannot be resolved solely by redirecting job seekers into classrooms.[27] Similarly, shortages in long-term care stem from factors such as low pay, insufficient recognition, and adverse working conditions; according to the OECD, these are central impediments to attracting workers. Constraints on mental health capacity reflect workforce scarcity and prolonged underinvestment rather than a mere lack of demand. In each sector, market adjustments occur slowly since the work involved is emotionally taxing, regulated, and frequently undercompensated relative to its social importance. Concurrently, AI accelerates adjustment pressures in AI-exposed sectors by reducing task costs, introducing the risk of a disorderly transition in which labor surpluses coexist with unmet social needs.[45]

This institutional challenge is exacerbated by cross-border competition and the mobility of capital and digital labor. Approaches that depend solely on firm-level compliance risk being ineffective when firms can relocate production, outsource, or automate tasks. This potential does not negate the relevance of joint action but stresses the importance of defining appropriate governance units. Systems of social dialogue and collective bargaining can guide outcomes; however, they must adapt to encompass algorithmic management and AI-mediated work design beyond customary concerns like wages.[46] The OECD’s findings on algorithmic management reveal a specific institutional deficiency: although many firms claim to have governance mechanisms, few provide meaningful options for worker opt-out or strong access to data and correction processes, indicating that governance remains superficial in critical areas.[7] Therefore, a reconstruction agenda requires enforceable rights and standards rather than mere encouragement for “responsible AI.”

6.1. Tripartite AI Governance

Tripartite AI governance becomes essential as AI transforms both production and management functions. Conventional tripartite frameworks, comprising government, employers, and labor, were originally established to address relatively straightforward issues such as working hours and minimum wages. In AI-mediated workplaces, contested issues now include data collection, surveillance intensity, automated evaluation, and AI-driven pacing and target-setting.[25] The policy goal is not to impede AI adoption broadly but to delineate acceptable applications that uphold human dignity and support skill development. Achieving this demands an inclusive institution capable of establishing standards for disclosure and contestability, mandating health impact assessments for intensive monitoring practices, and coordinating enforcement efforts to prevent responsible employers from being disadvantaged by those externalizing negative effects.[47]

6.2. Revaluation of Care Work

Revaluation of care work constitutes the fiscal foundation of any effective reallocation strategy. Shortages in long-term care and education do not arise from a lack of potential applicants but rather from a mismatch between the compensation and status of these roles and their demands and social value.[48] Enhancing pay and improving working conditions in these professions will necessitate public funding, since private markets often fail to reflect their social value adequately without excluding low-income populations. The OECD explicitly connects the growth in long-term care demand with the need for workforce expansion and highlights how low wages and difficult working conditions deter potential employees.[39] In education, the extensive scale of teacher shortages and attrition suggests that isolated, school-level interventions are insufficient; systemic investments to improve working environments and professional esteem are vital.[49] Similarly, in mental health, workforce expansion and budget prioritization are imperatives to maintain accessible services rather than default rationing.[29] Thus, revaluation is not simply a matter of moral persuasion, but a form of macroeconomic infrastructure investment aimed at stabilizing sectors in which AI is least able to substitute for human labor.

6.3. Workforce Reallocation

Workforce reallocation remains the most delicate part, requiring a realistic approach. Large-scale career shifts during midlife have historically proven challenging, as shown by uneven adoption trends among age cohorts in earlier technological transitions. However, this reality should not lead to resignation; instead, reallocation policies must be structured as progressive steps rather than sudden leaps. Such measures include paid transition periods, supervised residencies in teaching and caregiving, modular credentialing that recognizes prior competencies, and wage insurance to mitigate income reductions during retraining.[50] Institutions should also prepare for the possibility that AI may undermine traditional entry-level pathways in white-collar sectors, making it harder for younger workers to acquire tacit knowledge via established apprenticeship models.[33] This risk reinforces the argument for creating alternative career ladders into care and education roles, where organized mentorship is deliberately designed and supported rather than presumed to arise spontaneously from firm incentives.

7. From Labor Market to Labor System

A labor market primarily distributes effort through price mechanisms, with welfare adjustments applied marginally. In contrast, a labor system distributes effort by explicit institutions that decide which types of work are considered socially necessary, establish the standards such work must meet, and define workers’ rights regarding protection from degradation. This distinction is grounded in historical practice rather than conceptual theory. For instance, policy reforms introduced during the 1930s set minimum standards for wages and working hours, not due to market failure per se, but because market outcomes were socially harmful.[51] The current situation shares a similar characteristic: the challenge is not insufficient production capacity, but rather a disparity between what can be produced and what society needs, compounded by weak bargaining power and insufficient governance. AI exacerbates this divide by reducing marginal costs across numerous cognitive tasks, while sectors such as care remain limited by time and human presence constraints.[52]

This systemic perspective also elucidates why certain counterarguments, though partially valid, do not fully address the issue. The assertion that AI increases productivity is supported by task-level analyses and may hold true for functions at scale.[5] However, the policy mistake lies in presuming that productivity improvements necessarily translate into enhanced welfare. Wage data illustrate the precariousness of this assumption; even as real wages recover in some regions, inequality persists at elevated levels, and wage rates stay below pre-inflation peaks across many OECD countries.[53] Similarly, the claim that AI democratizes knowledge has some validity, since access to advanced language models can reduce barriers to drafting, translation, and summarization. Nonetheless, democratizing output does not equate to democratizing power. Within environments with extensive algorithmic management and pervasive monitoring, the same AI infrastructure that broadens access can also intensify control, especially where workers lack collective bargaining strength.[54] The historical comparison to calculators overlooks a key difference: calculators augmented computational effectiveness but did not evolve into generalized managerial systems that oversee workers, assign tasks, and assess performance across industries. AI can fulfill these managerial roles, which is why opting for a labor-system framework entails specific governance decisions.[25]

For policymakers, a primary focus should be on regulating algorithmic management as a matter of worker protection, by setting minimum standards for transparency, paths for contesting decisions, and restrictions on intrusive surveillance, with these standards enforced through binding regulations rather than voluntary guidelines.[25] A labor-system approach also implies using fiscal policy to adjust the economic valuation of the care and education sectors, enabling them to absorb labor without degrading quality, by linking staffing levels and working conditions to public funding.[55] Furthermore, rebuilding collective bargaining mechanisms or functional equivalents is necessary, given the notable decline in union density across many OECD economies and the resulting structural weakness of individual negotiation.[56] For educators and administrators, this perspective stresses the importance of protecting human capital development through redesigned assessments that focus on reasoning and verification, institutional policies that prevent AI-produced content from replacing authentic learning, and investment in human relationships, which are key to effective education and care.[57] The aim of these interventions is not to idealize work, but to avert a transition toward minimal marginal production that simultaneously entails heightened marginal suffering.

Conclusion: The End of Work vs. The Redefinition of Work

The debate between The End of Work and The Redefinition of Work should be understood as a policy decision that implicitly shapes current discussions. Artificial intelligence is diminishing the labor involved in numerous cognitive tasks, and this is occurring alongside the proliferation of algorithmic management tools, while job quality, bargaining power, and real-wage stability remain fragile.[58] The concern does not lie in the obsolescence of human workers, but rather in the risk that societies will confine individuals to deteriorated forms of employment, even as economic capacity grows to produce more output with fewer working hours. Concurrently, sectors such as the care economy and education continue to experience insufficient staffing and undervaluation.[55] A practical approach consists of shifting from defending jobs as fixed entities to defending labor as a societal function: this entails elevating the status of caregiving and teaching, implementing regulations on AI-based workplace controls, and developing transition mechanisms that secure both income and dignity.[59] Absent these institutional reforms, a gradual and subdued crisis is foreseeable, characterized by the erosion of skill development pipelines, the weakening of public services, and productivity gains that primarily benefit corporate efficiency rather than social well-being. Therefore, the imperative is clear: AI governance should extend to work design, accompanied by a restructuring of labor systems so that increased abundance translates into reduced drudgery rather than perpetuating industrial insecurity.[60]

References

[1] [4] [6] [7] [16] [18] [25] [32] [35] [37] [42] [43] [47] [54] [58] [62] Milanez, A., Lemmens, A. & Ruggiu, C. 2025, ‘Algorithmic management in the workplace: New evidence from an OECD employer survey’, OECD Artificial Intelligence Papers, no. 31, OECD Publishing, Paris, doi:10.1787/287c13c4-en.

[2] Stanford Institute for Human-Centered Artificial Intelligence 2025, Artificial Intelligence Index Report 2025, Stanford University, Stanford, CA, doi:10.48550/arXiv.2504.07139.

[3] Gallup 2025, State of the Global Workplace 2025, Gallup, Washington, DC.

[5] [12] [22] [36] [45] [52] [61] Noy, S. & Zhang, W. 2023, ‘Experimental evidence on the productivity effects of generative artificial intelligence’, Science, vol. 381, no. 6654, pp. 187–192, doi:10.1126/science.adh2586.

[8] Lin, L. 2025, ‘About 1 in 5 U.S. workers now use AI in their job, up since last year’, Pew Research Center, 6 October.

[9] [26] [27] [40] [41] [49] International Task Force on Teachers for Education 2030 & UNESCO 2024, Global Report on Teachers: Addressing teacher shortages and transforming the profession, UNESCO, Paris.

[10] [24] Eurofound 2026, ‘Algorithmic control: How digital surveillance is shaping online platform work in Europe’, article, Eurofound, Dublin.

[11] International Labour Organization 2024, Global Wage Report 2024–25: Is wage inequality decreasing globally?, ILO, Geneva, doi:10.54394/CJQU6666.

[13] [53] OECD 2025, ‘Executive summary’, in OECD Employment Outlook 2025, OECD Publishing, Paris, doi:10.1787/194a947b-en.

[14] [21] Schwellnus, C., Kappeler, A. & Pionnier, P.-A. 2017, ‘Decoupling of wages from productivity: Macro-level facts’, OECD Economics Department Working Papers, no. 1373, OECD Publishing, Paris, doi:10.1787/d4764493-en.

[15] Eurofound 2025, ‘Four days a week? Europe debates shorter working times’, article, Eurofound, Dublin.

[17] [51] Tcherneva, P.R. 2025, The Death of the Social Contract and the Enshittification of Jobs, Working Paper no. 1100, Levy Economics Institute of Bard College, Annandale-on-Hudson, NY.

[19] OECD 2024, OECD Employment Outlook 2024: The Net-Zero Transition and the Labour Market, OECD Publishing, Paris, doi:10.1787/ac8b3538-en.

[20] [56] OECD 2025, Membership of unions and employers’ organisations, and bargaining coverage, OECD Publishing, Paris, doi:10.1787/fe47107c-en.

[23] Eurofound 2025, ‘A new world of work – challenges and opportunities: EWCS 2024 first findings’, digital story, Eurofound, Dublin.

[28] OECD 2023, Health at a Glance 2023: OECD Indicators, OECD Publishing, Paris, doi:10.1787/7a7afb35-en.

[29] [30] World Health Organization 2025, ‘Over a billion people living with mental health conditions – services require urgent scale-up’, news release, World Health Organization, Geneva, 2 September.

[31] U.S. Bureau of Labor Statistics 2026, ‘Employment and average weekly earnings by industry’, chart, U.S. Department of Labor, Washington, DC.

[33] Davis, J.S. 2026, ‘AI is simultaneously aiding and replacing workers, wage data suggest’, Federal Reserve Bank of Dallas, Dallas, TX, 24 February.

[34] [57] [60] Miao, F. & Holmes, W. 2023, Guidance for generative AI in education and research, UNESCO, Paris.

[38] [39] [44] [48] [50] [55] [59] OECD 2023, Beyond Applause? Improving Working Conditions in Long-Term Care, OECD Publishing, Paris, doi:10.1787/27d33ab3-en.

[46] OECD n.d., ‘Collective bargaining and social dialogue’, OECD Topics, OECD, Paris.