Data AI vs Bio AI: Why Education Policy Is Backing the Wrong Intelligence

Data AI looks powerful, but it is energy-hungry and weak in noisy, causal settings. Bio AI offers a more efficient and more adaptive path by using living neural systems. Education policy should stop training only for today’s chatbots and prepare for this wider AI future.

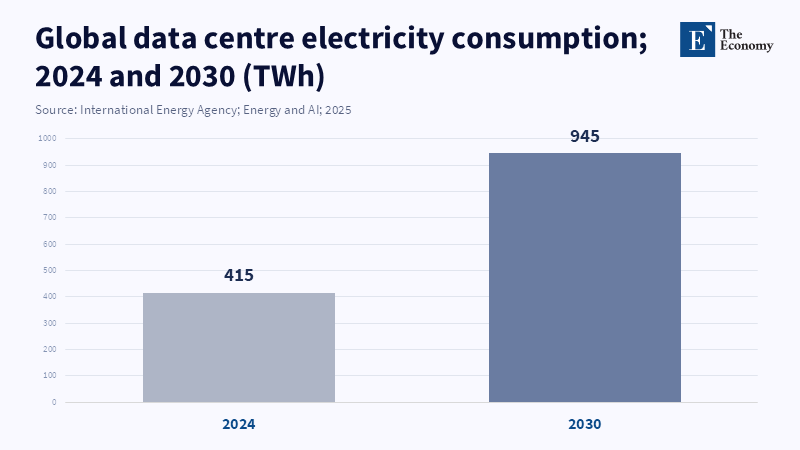

By 2030, global data center electricity use is projected to reach about 945 terawatt-hours per year. That number matters more than the latest chatbot benchmark. It tells us that the dominant form of Data AI is not just a software story. It is a power story, an infrastructure story, and a public-cost story. At the same time, a small biological system made from 200,000 living human neurons has learned to play Doom on a chip. The performance is rough, but the signal is clear. A very different path in AI is taking shape. Bio AI does not begin with bigger datasets, bigger models, and bigger server farms. It begins with living neural tissue that learns through feedback, adapts in real time, and may do some forms of computation at a tiny fraction of the energy cost. Education policy has treated Data AI as the whole future. That is the mistake. The real task now is to prepare learners for an AI landscape in which Bio AI may become the more serious long-term intelligence model.

Bio AI is not a stunt

The Doom experiment matters because it was not a magic trick dressed up as science fiction. It was built on a 2022 peer-reviewed result in which neurons on a chip learned to play Pong within minutes using closed-loop feedback. That earlier work showed something more important than game play. Cultures that received feedback showed learning, whereas those without it did not, indicating that the cells were actively engaged rather than passively relying on the software operating around them. According to Game Developer, the recent Doom demonstration posed a more complex task, with fast movement, hidden pathways, and intense action, while building on the same idea that activity changes in response to consequences. Even its defenders admit the neurons are bad at the game. That is not the point. The point is that Bio AI has moved from a narrow proof of concept to a more complex setting, while staying tied to real neural adaptation rather than vast statistical pattern matching.
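The logic of the feedback-versus-no-feedback comparison can be shown with a deliberately simple software analogy. The Python sketch below is not the protocol used in the Pong or Doom experiments and does not model neurons. It assumes a hypothetical one-weight "paddle" controller updated with a standard delta rule, and it only illustrates why a system that never receives the consequences of its actions has nothing to learn from.

```python
def train(feedback: bool, trials: int = 200, rate: float = 0.1) -> float:
    """Return the final miss distance of a toy one-weight paddle controller.

    Hypothetical illustration only: the paddle position is weight * ball
    position, and a perfect controller would have weight == 1.0.
    """
    weight = 0.0
    ball_positions = [0.2, 0.5, 0.8, 1.0]  # fixed cycle keeps the run deterministic
    miss = 0.0
    for t in range(trials):
        ball = ball_positions[t % len(ball_positions)]
        paddle = weight * ball
        miss = ball - paddle  # the consequence of the action
        if feedback:
            # Closed loop: the consequence is fed back and changes the weight.
            weight += rate * miss * ball
        # Open loop: the consequence is discarded, so the weight never changes.
    return abs(miss)


print("final miss with feedback:   ", train(feedback=True))
print("final miss without feedback:", train(feedback=False))
```

With feedback the miss distance shrinks toward zero over the trials. Without it the controller ends exactly where it started, which mirrors the control condition described above.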

What makes Bio AI different is not that it is alive in a sensational sense. It is that it computes through the properties of living tissue. According to a 2011 study published in Neurocomputing, biological systems, including brain-like neural networks, exhibit nonlinear, chaotic behavior that enables a range of possible solutions to a problem, with built-in mechanisms that guide these solutions toward the intended goal rather than toward chaos. That is closer to embodied adaptation than to next-token prediction. The same research path is now being framed for medical use as well as computing. Cortical Labs has said its CL1 platform is meant for real-time neural learning, disease modeling, and drug testing. That matters for education because it shifts the meaning of AI literacy. If Bio AI grows, future students will need more than prompt writing and model evaluation. They will need a working grasp of neuroscience, electrophysiology, lab-grown tissue, and the ethics of systems that sit halfway between software and biology.

Bio AI also matters because it signals a different logic of efficiency. The human brain runs on roughly 20 watts. No one should pretend that a petri dish computer today gives us a fully synthetic brain. But the comparison still matters. Modern Data AI keeps chasing performance by adding more compute, more memory, more cooling, and more capital. Bio AI asks a harder question. What if some forms of useful intelligence come not from scaling brute force, but from using the dense, adaptive, and highly efficient dynamics that biology already solved long ago? That is why the 2023 Brainoware study deserves attention. It showed brain organoids could be used inside a computing framework for tasks such as speech recognition and nonlinear prediction. The field is early. It is messy. But it is no longer fanciful. According to the International Energy Agency, artificial intelligence is advancing rapidly and now has significant implications for both technological innovation and policy decisions, particularly in sectors such as energy.
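To put the article's two energy figures on a common scale, the short Python sketch below converts the projected 945 terawatt-hours per year into average continuous power and divides it by the roughly 20 watts attributed to a human brain. The function and constant names are illustrative, and the 8,760-hour year ignores leap years. The result compares power budgets at the order-of-magnitude level and is not a claim that the two do equivalent work.

```python
HOURS_PER_YEAR = 8760  # 365 days * 24 hours; leap years ignored


def average_power_watts(twh_per_year: float) -> float:
    """Convert annual energy in terawatt-hours to average continuous watts."""
    watt_hours = twh_per_year * 1e12  # 1 TWh = 1e12 Wh
    return watt_hours / HOURS_PER_YEAR


DATA_CENTER_TWH_2030 = 945.0  # projection cited in the article
BRAIN_WATTS = 20.0            # rough figure cited in the article

avg_watts = average_power_watts(DATA_CENTER_TWH_2030)
brain_equivalents = avg_watts / BRAIN_WATTS

print(f"Average draw: {avg_watts / 1e9:.1f} GW")  # prints 107.9 GW
print(f"About {brain_equivalents / 1e9:.1f} billion 20 W budgets")  # prints 5.4
```

On these assumptions, the projected data-center load averages a little over 100 gigawatts, which is the continuous power budget of roughly 5.4 billion human brains.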

Data AI has hit a scaling trap

The strengths of Bio AI are becoming more evident as Data AI approaches its limits in how far it can scale. For language, code, and image tasks, Data AI can look astonishing. In low-noise settings, where the world offers huge datasets and the task rewards statistical compression, that approach works well. But success in those areas has led to a bad habit. Policymakers have begun treating pattern success as evidence of general intelligence. It is not. Even recent work on multimodal large language models shows that these systems still fall short of human abilities in causal reasoning, intuitive physics, and social understanding. That gap matters. Many of the hardest problems in education, health, and policy are not clean next-token tasks. They are noisy, embodied, uncertain, and causal. A system that can imitate the surface of knowledge is not the same as a system that can understand why events happen or adapt safely when the environment shifts.

This is where the common defense of Data AI starts to thin out. We are told that scale will fix the weakness. More data will fix it. More reinforcement learning will fix it. More chips will fix it. But that answer now comes with a visible public bill. The IEA projects that data-center electricity demand will roughly double by 2030 to around 945 terawatt-hours. According to Reuters, Nvidia has become the first company to surpass $5 trillion in market value as major technology firms continue to rapidly build out AI infrastructure. Microsoft and OpenAI also recently entered into a deal to boost fundraising efforts, with OpenAI preparing for a potential $1 trillion IPO. AI investment and energy demand are therefore far more than technical concerns. The scaling path is a structural choice about where knowledge systems will live and who can afford to build them. If AI remains tied to giant capital budgets and heavy power demand, then the future of intelligence will be shaped less by open learning and more by infrastructure concentration.

The deeper problem is conceptual. Data AI is built on correlation at scale. That makes it powerful, but also brittle in domains where the data are thin, noisy, or misleading. Bio AI will not solve every task. A calculator will still beat a neuron dish at arithmetic. But Bio AI offers a route toward systems that learn through interaction, not just exposure. That matters for robotics, diagnosis, adaptive control, and any setting where the world pushes back. Critics will say this is romantic biology and that neurons on chips are still primitive. Fair enough. Yet the point is not that Bio AI is finished. The point is that it is attacking the right weakness. It is trying to solve the part of intelligence that Data AI still struggles with: grounded adaptation under uncertainty. In the long run, that may prove more important than another marginal gain on a benchmark leaderboard.

Education policy is still training for the wrong AI

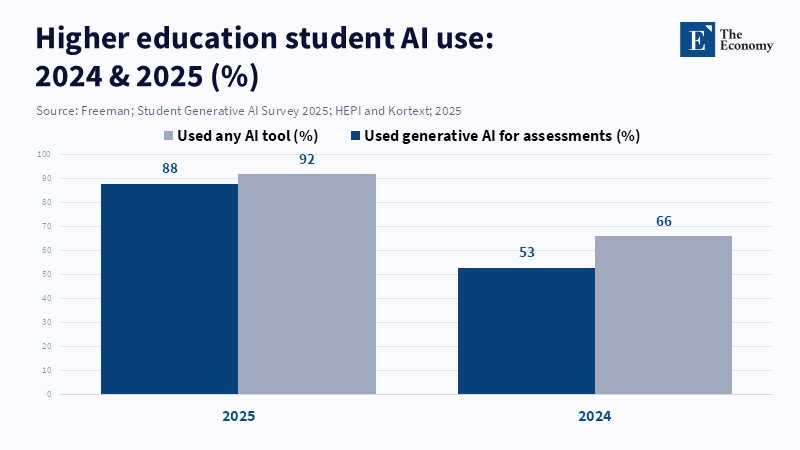

Education has moved fast on AI, but mostly in the wrong direction. Institutions rushed into rules for chatbots, essay detection, automated tutoring, and campus productivity tools. Those moves were understandable. Students are already deep into Data AI. According to a 2025 survey by HEPI, 88 percent of higher education students reported using generative AI tools like ChatGPT for their assessments, with the most common activities being explaining concepts, summarizing articles, and generating research ideas. That share rose sharply from 53 percent the previous year. The survey did not report on teachers' AI use. The issue is not whether AI belongs in education. It already does. The issue is which AI future education is normalizing. Right now, most systems are teaching students to be consumers of Data AI. They are not teaching them to understand the material, energy, biological, and ethical foundations of the next wave.

That narrow focus creates three risks. First, it makes AI education too tool-specific. Students learn interface habits instead of intelligence models. Second, it hides the environmental cost of the current stack. A campus can write an AI policy without ever discussing the electricity, water, chips, and data-center buildout that make the policy possible. Third, it leaves biology outside the AI curriculum, even as biology begins to re-enter computation. UNESCO has already argued for a human-centered vision of AI in education. That is the right starting point, but it now needs a sharper edge. Human-centered AI policy cannot mean teaching students to use black-box systems while ignoring the coming merger between AI, neuroscience, and bioengineering. If Bio AI develops faster than expected, the present curriculum will look not forward-looking but outdated.

The answer is not to replace computer science with wet labs or to announce that every school needs a biocomputing course tomorrow. The answer is to stop teaching AI as if a single architecture had already won. Education policy should treat Bio AI as a live frontier and build a broader literacy around it. That means linking AI education to biology, neuroscience, energy systems, ethics, and regulation. It means teaching students why a statistical model and a living neural substrate are not the same thing, even if both can produce useful outputs. It also means being honest about labor demand. OECD work on AI and skills keeps stressing that education must compare AI capabilities with human skills to judge where machines replace people and where they complement them. Bio AI raises that question again, but in a more serious form, because it may challenge not only white-collar writing tasks but also adaptive, sensor-rich work that Data AI still finds hard.

Bio AI should reset what schools fund, teach, and regulate

The most common critique is that Bio AI is too early to matter for mainstream education policy. That critique sounds prudent, but it fails two tests. First, education systems do not change quickly. By the time a technology is fully mature, curricula, accreditation rules, research funding, and teacher training are already lagging. Second, the basic direction of travel is now visible. We have a peer-reviewed Pong result, a more advanced Doom demonstration, organoid-computing work in a top journal, early commercial biological-computing platforms, and growing interest in medical and robotic applications. That is enough to justify preparation. We do not need to predict that Bio AI will replace every current model. We need to accept that AI is splitting into different families, and that education policy built around a single family will misread the next decade.

A better agenda would do four things at once. It would keep teaching students how present-day Data AI works, because that system still dominates most real use cases. It would add stronger instruction in causality, uncertainty, and embodied intelligence, because those are the very areas where current systems remain weak. It would invest in cross-disciplinary programs that connect computing with neuroscience, synthetic biology, and bioethics. And it would update research governance before campuses accidentally drift into the field. Bio AI raises questions that plagiarism software cannot answer. What counts as training when the substrate is living tissue? What level of oversight should apply to classroom or university research using human-derived cells? How should consent, ownership, and moral status be handled if the field becomes more capable? These are educational questions because universities will shape the norms long before parliaments catch up.

The opening contrast should now be read as a warning. One path in AI is on course to reach almost 1,000 terawatt-hours of annual data center electricity use by 2030. The other path has just shown that a tiny living neural system can learn, however crudely, inside a game world. Data AI will remain useful. It will keep writing, summarizing, coding, and predicting. But its limits are now clearer than its marketing suggests. Bio AI deserves attention not because it is eerie, but because it is credible. It targets the weak spot of the current model: energy-hungry pattern extraction that still struggles with grounded causal adaptation. Education policy should stop acting as if the future of intelligence will be settled by larger chatbots alone. The systems we teach around will shape the systems we later trust. If schools and universities want to prepare students for the real future of AI, Bio AI has to move from the margins of the syllabus to the center of the debate.

The views expressed in this article are those of the author(s) and do not necessarily reflect the official position of The Economy or its affiliates.

References

Béchard, D.E. (2026) ‘How human neurons on a chip learned to play Doom’, Scientific American, 28 March.

Cai, H., Ao, Z., Tian, C., Wu, Z., Liu, H., Tchieu, J., Gu, M., Mackie, K. and Guo, F. (2023) ‘Brain organoid reservoir computing for artificial intelligence’, Nature Electronics, 6, pp. 1032–1039.

Cortical Labs (2026) CL1. Melbourne: Cortical Labs.

Freeman, J. (2025) Student Generative AI Survey 2025. Policy Note 61. Oxford: Higher Education Policy Institute.

International Energy Agency (2025) Energy and AI. Paris: International Energy Agency.

Kagan, B.J., Kitchen, A.C., Tran, N.T., Habibollahi, F., Khajehnejad, M., Parker, B.J., Bhat, A., Rollo, B., Razi, A. and Friston, K.J. (2022) ‘In vitro neurons learn and exhibit sentience when embodied in a simulated game-world’, Neuron, 110(23), pp. 3952–3969.e8.

Lim, D. (2024) ‘The difference between artificial and biological neurons is beyond comparison’, Medium, 17 October.

Miao, F. and Holmes, W. (2023) Guidance for Generative AI in Education and Research. Paris: UNESCO.

OECD (2025) Results from TALIS 2024: The State of Teaching. Paris: OECD Publishing.

OECD (2026) Artificial intelligence and education and skills. Paris: OECD.

Pelley, R. (2026) ‘A petri dish of human brain cells is currently playing Doom. Should we be worried?’, The Guardian, 16 March.

Robert, J. and McCormack, M. (2025) 2025 EDUCAUSE AI Landscape Study: Into the Digital AI Divide. Louisville, CO: EDUCAUSE.

Schulze Buschoff, L.M., Akata, E., Bethge, M. and Schulz, E. (2025) ‘Visual cognition in multimodal large language models’, Nature Machine Intelligence, 7, pp. 96–106.

Swift, R. (2026) ‘Big Tech’s $635 billion AI spending faces energy shock test, S&P Global says’, Reuters, 31 March.

Wilkins, A. (2026) ‘Human brain cells on a chip learned to play Doom in a week’, New Scientist, 2 March.

Hashemi, S. (2026) ‘A clump of human brain cells on a computer chip learned to play the nostalgic video game “Doom”’, Smithsonian Magazine, 27 March.