AI and the Deformation of Learning: Human Capital in an Age of Assisted Cognition

Published

1 The Economy Research, 71 Lower Baggot Street, Dublin 2, Co. Dublin, D02 P593, Ireland

2 Swiss Institute of Artificial Intelligence, Chaltenbodenstrasse 26, 8834 Schindellegi, Schwyz, Switzerland

Between 2023 and 2026, generative AI systems transitioned from marginal classroom aids to integral components of everyday study practices, disseminating at a pace that outpaced institutional policies and pedagogical adaptations. Drawing on international survey data, extensive field experiments, and broad labor-market forecasts, this study demonstrates that the technology influences more than mere output increases; it disrupts the visible link between performance outcomes and the underlying cognitive effort essential to sustained skill development. In both secondary and higher education, over 80% of students incorporate tools like ChatGPT into assessed assignments, yet fewer than 10% of institutions provide enforceable guidelines. This creates an environment where managing the AI interface supplants critical processes such as retrieval, reflection, and error correction. Experimental evidence supports this inherent trade-off: while AI assistance enhances immediate performance scores, it subsequently diminishes unaided task execution and retention over 45 days. Moreover, investigations into professional tasks indicate that human-AI collaborations frequently perform worse than either the leading individual human expert or the standalone AI model when nuanced judgment is required. These developments coincide with declining foundational skills—OECD data document the sharpest drop in mathematics achievement since PISA assessments began—and unfold within a labor market increasingly valuing verification, adaptive reasoning, and epistemic resilience. Consequently, generative AI should be conceptualized as an infrastructural influence that reshapes which competencies remain scarce. To sustain the reservoir of independent expertise, educational systems must implement assessments that reveal reasoning processes, design interfaces that maintain student engagement, and emphasize verification literacy within core curricula. 
Without such reforms, credentialing risks becoming superficial, reflecting polished performance unaccompanied by the robust skills beneath.

1. Introduction - The Illusion of Enhanced Learning

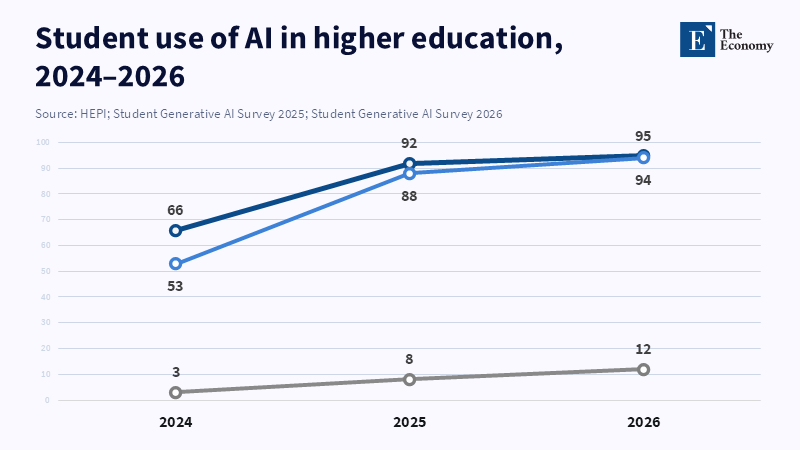

The belief in enhanced learning, often reinforced by rapid student improvement, hides a deeper shift in education. College Board data from June 2024 to June 2025 shows that generative AI use for academic tasks rose from 79% to 84%; 69% of high school students used ChatGPT for assignments in May 2025. Still, about half of students are unsure if AI’s benefits outweigh risks (College Board, 2025). Although generative AI is common, it allows higher-quality work without real skill growth, so students doubt its long-term impact. UK evidence echoes this: the HEPI/Kortext report found 88% of students used generative AI in 2025 assessments, and nearly one-fifth turned in AI-generated or edited work. This wide use raises doubts about whether submissions show true individual mastery (Freeman, 2025). The popular idea that AI simply boosts learning is misleading, since it ignores the main risk: generative AI favors polished outputs over the effort, feedback, correction, and retrieval cycles needed for genuine skill (College Board, 2025; HEPI, 2025).

This is a practical and urgent problem for schools, not an abstract one. A recent UNESCO survey found that almost two-thirds of higher education institutions in UNESCO’s networks have adopted or are developing AI rules, a sign that policy is starting to respond to how common AI has become. Still, over half of respondents were unsure how to use AI well, and one in four reported ethical problems, such as students depending too heavily on AI (UNESCO, 2025). At the national level, the OECD’s Digital Education Outlook 2023 shows that all OECD countries recognize how widespread AI use is, but none of the 18 with in-depth data have official rules, and only half have issued non-binding guidance (OECD, 2023). The main worry is not simply that rules are slow: when rules lag, grades, degrees, and speed become what steers learning. As a result, students learn whatever current tests can still check (UNESCO, 2023; UNESCO, 2025; OECD, 2023).

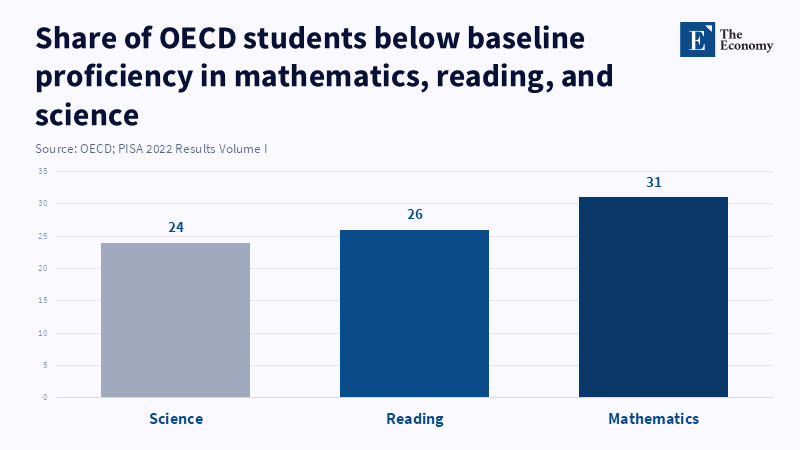

To reframe policy, focus on the real risk: it is not just plagiarism or AI mistakes, though both matter. Generative AI moves cognitive effort from building internal knowledge to managing interfaces—prompting, picking, light editing, and submitting. This looks like progress, but it may actually distort human capital development. This is especially concerning, given that basic skills are already in decline. PISA 2022 results show big drops across OECD countries: math scores fell 15 points and reading scores fell 10 points from 2018 to 2022. The OECD says these drops equal about half to three-quarters of a school year lost for 15-year-olds, based on PISA-linked studies. If teaching shifts to faster results but weakens lasting skills, measures may improve on the surface, but true skill can stagnate or fall. For educators, if assessment keeps rewarding polished work done under lax conditions, the system teaches that independent thinking does not matter. So, change assessments to reward reasoning and learning processes—this is a human capital strategy, not just a moral one. For leaders, do not rely on informal AI norms; switch to clear rules matched to educational goals. For policymakers, treat generative AI as a major change in skill-building and workforce prep. Needed actions: set standards for assessment integrity, data governance, and AI literacy (OECD, 2023; OECD, 2023 PISA; UNESCO, 2023).
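The conversion from PISA score points to lost schooling can be checked with simple arithmetic. The sketch below assumes a rule of thumb of roughly 20 score points per school year; that figure is an assumption chosen because it is consistent with the "half to three-quarters of a school year" range quoted above, not a value taken from this text. The function and variable names are illustrative only.

```python
# Assumption: about 20 PISA score points correspond to one school year.
# This conversion factor is NOT stated in the text; it is chosen because it
# reproduces the "half to three-quarters of a year" range quoted above.
POINTS_PER_SCHOOL_YEAR = 20.0


def points_to_school_years(point_drop, points_per_year=POINTS_PER_SCHOOL_YEAR):
    """Convert a drop in PISA score points into approximate school years lost."""
    if points_per_year <= 0:
        raise ValueError("points_per_year must be positive")
    return point_drop / points_per_year


# Score declines from 2018 to 2022 as reported in the text.
declines = {"mathematics": 15, "reading": 10}

years_lost = {
    subject: points_to_school_years(drop) for subject, drop in declines.items()
}

for subject, years in years_lost.items():
    print(f"{subject}: about {years:.2f} school years")
```

Under this assumed conversion, the 10-point reading decline maps to about half a school year and the 15-point mathematics decline to about three-quarters, which matches the range cited. A different conversion factor would shift both estimates proportionally.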

2. From Effort to Output: A Structural Shift in Learning

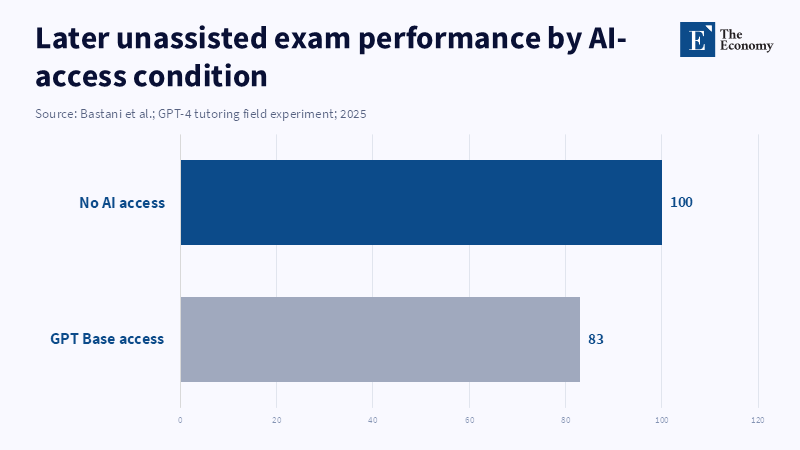

Generative AI has changed how students learn, moving from a path of 'effort, struggle, feedback, mastery' to just 'prompt, output, quick check,' missing learning through mistakes. This is key since learning from mistakes builds skills. In a big experiment with about one thousand high school math students, splitting effort from results was more than a theory. Students used either a basic chat (“GPT Base”) or a more guided tool (“GPT Tutor”). Tech & Learning reports that using GPT-4 AI tutors did not help grades; scores on unassisted tests went down by 17% compared to those not using AI. More studies show that all AI tutors studied hurt learning, and making AI models bigger does not always help. Longer chats make things even worse, with more errors, as Hazra and team found in 2026. Students often use AI answers instead of working out problems for themselves, leading to a focus on short-term results instead of real skills. As a result, students get good at getting answers from imperfect systems, even if the systems are not dependable or match lesson aims (PNAS/PubMed, 2025).

The limits of these AI tools compound the problem. In the same math study, the “GPT Base” system was right only about half the time; 42% of its answers had logic errors, and 8% had math mistakes. This points to a core issue with generative AI: its answers can sound confident and fluent but still be wrong, and, unlike a calculator’s, its mistakes vary by problem type (Zhang and Graf, 2025). This matters for student skills, since fixing errors is how students master material. When students use unreliable AI, they must either check answers closely, which is itself a hard skill, or simply trust answers that may be wrong, which hurts real understanding. Data also show students using the guided tutor spent about 2 more minutes per session (13% longer), suggesting that well-designed rules can get students to think more (Rodríguez et al., 2022). For teachers, merely permitting AI is not enough. Rules that keep students thinking, such as asking them to explain how they check for mistakes or how they use prompts, should be required to protect opportunities for practice, rather than simply letting AI supply answers. School leaders must make sure the tech and programs used fit teaching goals, because tool settings can shape what students learn. Policy should draw a clear line between AI that helps students learn and AI that substitutes for their own effort, to prevent use that does more harm than good (PNAS field experiment, 2025).

When considering retention, the trade-off between immediate benefits and long-term outcomes becomes evident.

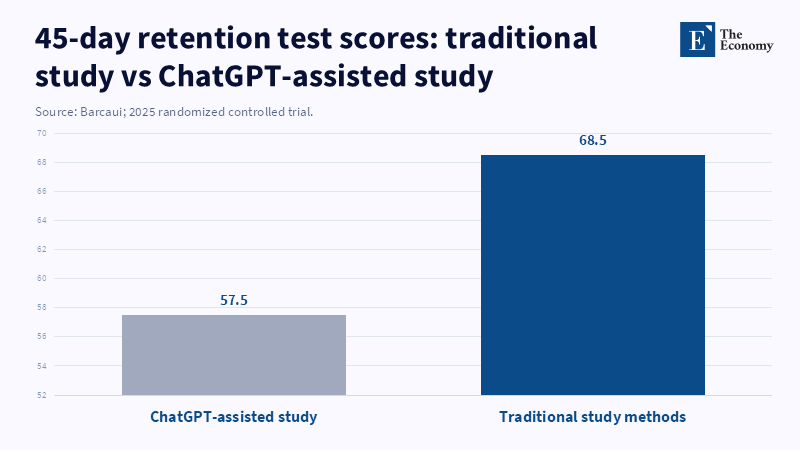

A randomized controlled trial involving undergraduates studying AI-related material found that those who used ChatGPT as a study aid performed significantly worse on a surprise retention test administered 45 days later (57.5% correct) compared to peers using non-AI study methods (68.5%), with a moderate-to-large effect size (Cohen’s d = 0.68) (Barcaui, 2025). The authors attribute this outcome to reduced cognitive effort during study, leading to weaker, more transient memory formation. This finding aligns with the structural shift in learning associated with AI: as the effort required to generate answers decreases, learners engage less during retrieval practice and self-explanation, both of which cognitive science recognizes as essential for durable knowledge. While these retention results should be generalized cautiously across subjects and instructional designs, their significance lies in showing a measurable long-term consequence rather than immediate utility. For educators, this questions the assumption that time saved through AI is inherently beneficial if it replaces cognitive activities vital for retention. Administrators should distinguish between AI use for drafting and for studying, ensuring that deliberate retrieval and explanation tasks are incorporated independently of AI, particularly within foundational courses. At the policy level, national human capital strategies should treat unstructured AI study aids as risks to skill acquisition and prioritize investment in evaluation and the development of effective safeguards, rather than relying solely on market-driven adoption as a pedagogical solution (Barcaui et al., 2025).
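The reported effect size can be related to the raw score gap with a short calculation. Cohen’s d is the difference in group means divided by the pooled standard deviation, so the two means and d = 0.68 quoted above imply a pooled standard deviation. The sketch below backs out that value; the result is an inference from the summary figures in this text, not a statistic reported by the study, and the function name is illustrative.

```python
def implied_pooled_sd(mean_a, mean_b, cohens_d):
    """Back out the pooled SD implied by two group means and a reported Cohen's d.

    Uses the definition d = (mean_a - mean_b) / pooled_sd, rearranged.
    """
    if cohens_d == 0:
        raise ValueError("Cohen's d must be non-zero to recover a pooled SD")
    return abs(mean_a - mean_b) / abs(cohens_d)


# Figures quoted in the text (percent correct on the 45-day retention test).
traditional_mean = 68.5
chatgpt_mean = 57.5
reported_d = 0.68

gap = traditional_mean - chatgpt_mean
implied_sd = implied_pooled_sd(traditional_mean, chatgpt_mean, reported_d)

print(f"Raw gap: {gap:.1f} percentage points")
print(f"Implied pooled SD: {implied_sd:.1f} percentage points")
```

Read this way, the 11-point gap corresponds to roughly two-thirds of a standard deviation, with an implied spread of about 16 percentage points within each group. Rounding in the published figures means the implied value is approximate.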

3. Critical Thinking Under Delegation

As generative AI becomes a default information source, the skill most at risk is not sentence construction but the capacity to assess truth, relevance, and causality under uncertainty. Delegation to AI alters mental processes by changing cost structures: when reasoning is effortful, and outputs are scarce, learners invest in developing internal models; when outputs are abundant and easily accessible, evaluation becomes the primary bottleneck. Learners without sufficient domain expertise to critically assess AI-generated outputs are prone to automation bias, which is the unwarranted reliance on automated information despite potential inaccuracies. This bias is well documented in decision-support contexts, with systematic reviews in medical informatics showing that reliance on automation can introduce new errors. Influencing factors include trust, confidence, workload, and task-specific experience, while interventions such as targeted training, user accountability, and design features that display confidence metrics can mitigate these effects. The educational implications are substantial: students must verify and integrate AI-produced content that may appear credible but be flawed, yet current incentive structures often prioritize the appearance of correctness over rigorous validation. As a result, critical thinking is frequently reduced to superficial selection and minor editing of outputs rather than constructing arguments based on foundational principles. For educators, this necessitates a pedagogical shift in which verification skills—such as source tracing, claim validation, and counterexample formulation—are explicitly taught rather than assumed outcomes of writing practice, given that AI-generated plausibility complicates error detection. Administrators should revise academic integrity protocols to emphasize verification measures, including citation checks, data provenance declarations, and in-person defenses for substantive assignments. 
Policymakers should redefine AI literacy to focus on epistemic competencies, including understanding model restrictions, verifying claims, and developing risk awareness, in line with emerging regulatory frameworks in the European Union (Goddard et al., 2011/2012; EU AI literacy Q&A, 2025).

Empirical research increasingly shows that greater reliance on AI correlates with diminished critical thinking, although much of this evidence rests on self-reports and survey methodologies. A 2025 study involving 580 university students reveals that increased dependence on AI correlates with reduced critical thinking abilities, with mental fatigue partially mediating this relationship. Moreover, information literacy exerts a dual moderating effect by attenuating some adverse impacts on critical thinking while simultaneously intensifying fatigue under high AI reliance (Tian and Zhang, 2025). Similarly, a separate 2025 survey of 319 knowledge workers reports that elevated confidence in generative AI is associated with lower critical thinking, whereas stronger task-specific self-confidence is associated with enhanced critical analysis. The study further notes a shift in critical thinking toward activities such as verification, integration, and task stewardship (Lee et al., 2025). These conclusions do not establish biological cognitive decline caused by AI; rather, their policy relevance resides in illustrating the relationship among reliance, confidence, and fatigue. Where AI is widely accessible, cognitive resources shift from deep reasoning toward triage and synthesis. This redistribution bears consequences for human capital development, since education encompasses not only skill acquisition but also the cultivation of reflective habits: knowing when to question, verify, and persevere. Accordingly, educators should render metacognition observable by assessing not only final answers but also the verification steps taken, alternative perspectives considered, and anticipated failure modes. Administrators should integrate AI literacy into broader computer literacy initiatives, providing a standardized verification framework across departments to prevent fragmented, ad hoc practices.
Policymakers must prioritize regulation focused on “high-stakes cognition,” targeting domains where automation bias entails considerable social costs, and support mechanisms for auditability and mitigation research within instructional settings (Tian et al., 2025; Lee et al., 2025).

The unreliability of generative AI in scholarly contexts offers a clear illustration of the need for explicit verification. An experimental observational study examining ChatGPT 3.5’s responses to medical queries found that over two-thirds of the cited references were fabricated, and domain experts identified major factual inaccuracies in approximately one-quarter of the answers (Gravel et al., 2023). Although this dataset does not derive from an educational setting, it denotes a fundamental concern relevant across academic fields: generative systems can produce authoritative outputs—such as citations, journal titles, and plausible article headings—that function rhetorically though lack a factual basis. In educational contexts where source identification is approached as a mechanical task rather than an epistemic exercise, this feature undermines scholarly integrity by promoting superficial legitimacy without substantive engagement. For educators, this necessitates redesigning research assignments to preclude completion through synthetic bibliographies alone, mandating direct interaction with primary sources, in-class triangulation of evidence, and oral examinations probing comprehension of methodologies and findings. Academic support units and libraries should assume frontline roles in cultivating AI-era competencies and must therefore be adequately resourced, given risks encompassing not only academic misconduct but also a wider decline in research literacy across student cohorts. National AI strategies should incorporate provenance infrastructure—standards for citation validation, transparency in AI tools, and institutional repositories—as integral to the public-good responsibilities of education rather than as ancillary compliance actions (Gravel et al., 2023).

4. Creativity in the Age of Generative Systems

The most prominent yet ambiguous promise of generative AI in education is its potential to reduce the friction in idea generation, thereby enabling more individuals to produce greater volumes of content. Empirical studies in knowledge work, including both rigorous experiments and field research, frequently demonstrate that generative AI accelerates output and enhances measured quality for specific tasks. For instance, a preregistered experiment focusing on professional writing found that exposure to ChatGPT decreased the average time required by approximately 40% and improved output quality by 18%, alongside an increase in self-reported subsequent usage, indicating a clear productivity gain. According to a report by Curtonews, a field experiment found that management consultants using the GPT-4 language model were significantly more productive and delivered much higher-quality results than those who did not use the AI tool (Reeman et al., 2024).

However, evidence of productivity gains and enhanced outputs is accompanied by studies reporting potential drawbacks. Some research suggests that reliance on generative AI for idea generation may lead to more homogeneous work or diminished originality when prompts and outputs are not critically evaluated, while other findings indicate its supportive effects are unevenly distributed, benefiting certain users more than others depending on their prior expertise or the nature of the creative task (Noy & Zhang, 2023; Dell’Acqua et al., 2023). This uneven performance along the boundary of AI capability remains especially relevant for education since creativity, in its economically significant form, cannot be equated simply with content generation. Rather, creativity involves integrating generative fluency with judgment under conditions of uncertainty, adherence to discipline-specific constraints, and accountability. As AI reduces the cost of draft generation, the scarce human skill moves towards curation, critical evaluation, and disciplined refinement. The risk is that curricula may adapt by valuing mere output presence rather than quality judgment, effectively teaching students to approach creativity as a matter of managing interfaces. For educators, this necessitates the design of creative tasks that explicitly require judgment: students might be allowed or even encouraged to use generative systems for ideation, but must justify their choices in terms of constraints, trade-offs, and alternatives, and demonstrate how they tested for failure modes—an approach that reflects the reality of navigating variable AI capabilities rather than assuming uniform performance. Administrators should reconceptualize “creative AI” in curricular review not as a novel addition but as a prompt to clarify the true learning objectives related to creativity—such as divergence, constraint compliance, and critical selection—and adjust assessment rubrics accordingly. 
Policymakers ought to avoid outright bans on creativity-enhancing tools and instead focus on guaranteeing that educational standards emphasize human discernment and accountability, as labor market incentives are likely to favor these skills in an environment with abundant AI-produced content (Noy & Zhang, 2023; Dell’Acqua et al., 2023).

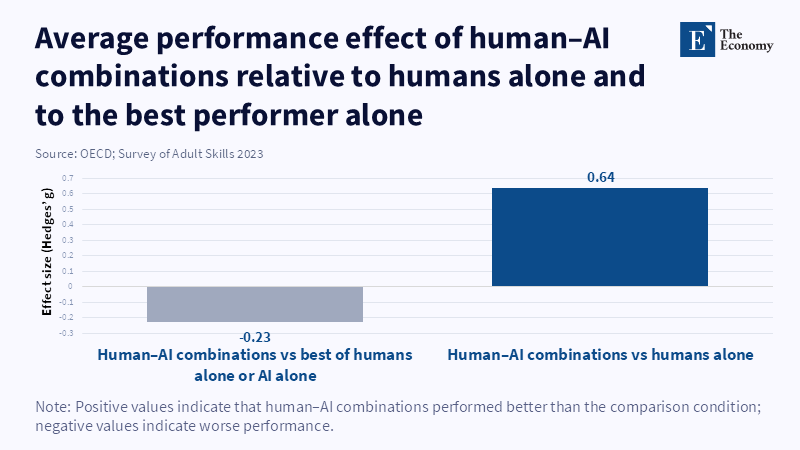

A substantial counterargument is that democratizing content creation benefits human capital development by lowering barriers to drafting, thereby enabling more students to engage in practice, iteration, and expression. While this view has merit, it presupposes that increased use equates to productive practice rather than substitution. Evidence from mathematics tutoring illustrates the substitution effect: when students had access to interfaces that directly produced answers, they frequently depended on these tools as crutches, resulting in deteriorated performance when working unaided later on. Negative effects diminished when the system was designed to provide hints instead of outright answers. The same logic applies to creativity tasks. If generative AI functions as a ready-made “answer” to every blank page, students may engage less in the generative struggle necessary to develop style and refined taste; conversely, if used as a source of prompts or a partner in critique, it may amplify deliberate practice. This outcome depends on design choices and governance rather than being an inherent property of AI. A comprehensive meta-analysis of human–AI collaboration illustrates the complexity of augmentation: on average, human–AI teams performed worse than the best human or AI alone (Hedges’ g = –0.23), with the largest performance declines observed in decision-making tasks, and greater gains in content creation. These findings caution that simply integrating AI into workflows does not guarantee improved results and may undermine performance where judgment is crucial (Vaccaro et al., 2024).

For educators, this implies that acceptance of creative AI is confined to draft generation, while preserving the domain of judgment through assessment methods that require explanation, critique, and revision histories, compelling learners to articulate reasons for the quality of their final outputs. From an administrative perspective, academic regulations should clearly distinguish between “creative generation” and “decisional responsibility,” reflecting professional practices in which AI output is regarded as suggestive rather than authoritative. Finally, policymakers should invest in developing and standardizing assessment models that evaluate judgment and reasoning in creative domains, such as oral defenses or portfolio reviews with process documentation, rather than relying solely on artifacts vulnerable to AI synthesis (PNAS/PubMed, 2025; Vaccaro et al., 2024).

5. Persistence Without Necessity

Historically, the learning economy has relied on a scarce resource: sustained cognitive effort in environments marked by delayed feedback and partial failure. Generative AI alters this dynamic by making many intermediate steps optional. When such tools are available, learners may circumvent frustration rather than converting it into competence; the model generates a plausible continuation, the learner makes minimal edits, and the process concludes without engaging in demanding cognitive activities. This shift has policy implications that extend beyond concerns about reduced attention spans. Specifically, it threatens the development of capacities essential for “deep work” (including extended reasoning, tolerance for delayed rewards, and mistake correction), which remain valuable in labor markets increasingly shaped by AI. Empirical evidence supports this trade-off: in a retention randomized controlled trial, students who used ChatGPT for study performed worse 45 days later than those using traditional methods, consistent with the notion that diminished cognitive effort undermines long-term retention. Similarly, a field experiment in mathematics found that while AI-generated answers improved practice performance, they reduced subsequent unassisted performance, indicating a substitution effect. These findings align with a straightforward mechanism: when learning tasks are perceived as optional because they are easy to complete, persistence is less developed, even though persistence itself has economic value in the labor market.

For educators, this suggests the need to reintroduce “necessity” into curricular structures: requiring independent problem-solving attempts before AI consultation, assessing the quality of reasoning rather than solely the correctness of final answers, and incorporating spaced retrieval tasks that cannot be outsourced in real time. For administrators, the challenge is to frame AI policy within broader strategies for student health and capability development, not simply academic integrity; if students come to expect that difficulty can always be avoided, they may choose avoidance. Policymakers should consider reforms that increase the proportion of assessments conducted under conditions where persistence is evident, such as timed problem-solving, oral exams, and supervised laboratories, since institutional practices shape habits, which in turn influence the accumulation of human capital (Barcaui et al., 2025; PNAS/PubMed, 2025).

A common counterargument draws on the analogy of calculators: tools have historically reduced particular frictions, yet educational systems adapted, and learning persisted. However, this analogy falters precisely where policymakers must focus—namely, on epistemic reliability and the locus of reasoning. Calculators provide correct arithmetic given accurate inputs, whereas generative AI outputs are probabilistic and can confidently produce incorrect responses, including in areas where learners are least equipped to identify errors. For example, the high school math study found that AI-generated answers were correct only about half the time on average and contained significant logical errors. This distinction underscores why “outsourcing” cognitive work differs fundamentally from “assistance”: when learners disengage, they not only save time but also lose the ability to detect and correct recurring errors. Furthermore, calculators were integrated into pedagogy alongside explicit conceptual safeguards such as cultivating number sense, estimation skills, and mental arithmetic. In contrast, generative AI’s rapid diffusion often precedes such measures; UNESCO’s 2023 report noted that fewer than 10% of surveyed institutions had formal guidelines.

From an educational perspective, this calls for reestablishing lessons learned during the calculator era: clearly identifying which cognitive skills must remain actively cultivated and designing tasks accordingly. Just as mental arithmetic has often been protected through “no-calculator” policies, reasoning and retrieval skills may require “no-generation” zones. Administrators should consider instituting AI-free learning intervals and environments not out of nostalgia, but as deliberate settings for practice, analogous to how pilots maintain manual flying skills despite reliance on autopilot. For decision makers, the calculator analogy serves best as an argument in favor of governance rather than against it: past successful tool integration occurred in situations where institutions intentionally safeguarded core competencies rather than assuming tool neutrality (PNAS field experiment, 2025; UNESCO, 2023).

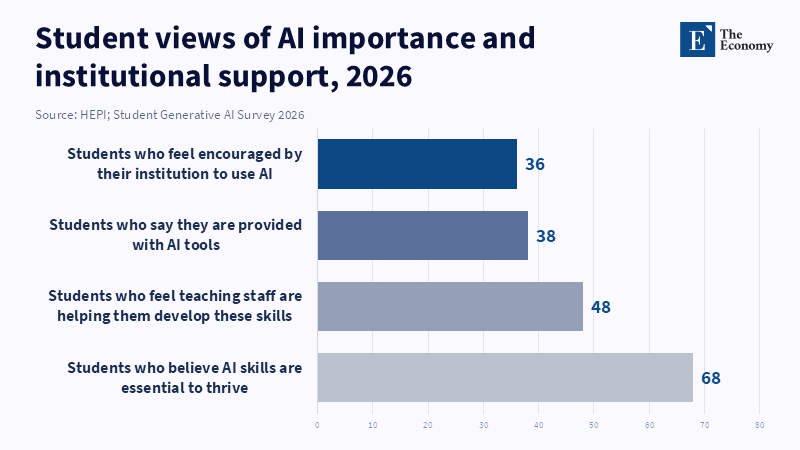

6. Active Engagement vs Passive Optimization

As artificial intelligence becomes more prevalent, student motivation shifts through rational adaptation: students concentrate their efforts on what institutions reward, and institutions primarily reward artifact production. This transformation does not necessarily result in widespread cheating but rather redefines the concept of “studying.” The HEPI/Kortext survey illustrates this shift with notable statistics: in 2025, 88% of surveyed students reported using generative AI for assessments, and 18% indicated that their assessed work included AI-generated or edited text. These data suggest that generative AI has evolved from a peripheral tool to an integral component of assessed work for many learners. As a result, the learning process increasingly centers on managing interfaces—such as prompting, summarizing, and drafting—rather than deeply internalizing subject matter. The survey also highlights a socio-economic digital divide in AI usage, suggesting that this new form of optimization may not democratize learning equally. Instead, it may advantage those with computer literacy and cultural capital, who are better equipped to navigate institutional expectations and use these tools strategically.

A related study by Oxford University Press, referenced by UNESCO, found that 62% of UK students aged 13 to 18 report adverse effects from AI use, including diminished creative thinking and increased dependence on AI tools. This underscores persistent ethical concerns and uncertainty about how AI should be integrated into academic settings (Oxford University Press, 2024). For educators, these findings show a need to redesign pedagogy to emphasize observable student engagement. Strategies might include having students predict outcomes, explain reasoning, and debug errors; requiring the submission of intermediate products such as drafts and rationales; and assigning meaningful weight to these tasks to discourage reliance on AI-generated outputs as substitutes for cognitive effort. Administrators, in turn, should reconsider academic honor codes and move beyond binary, prohibition-based frameworks toward nuanced, role- and task-specific guidelines. These would differentiate acceptable forms of AI support (such as brainstorming, feedback, or language editing) from prohibited practices like using AI to generate answers to core learning objectives, and would be accompanied by transparent disclosure.

From a policy perspective, assessment reform should be viewed as a lever affecting workforce quality. If credentials can be acquired with reduced competence, the signaling function of education diminishes, complicating labor market sorting and possibly worsening inequality (HEPI, 2025; UNESCO, 2025). Institutions might be tempted to rely on detection and punitive measures in response to AI use; however, evidence suggests such strategies address symptoms rather than underlying incentives. UNESCO’s 2025 survey contrasts this “regulatory approach,” centered on detection and sanctioning, with iterative methods that integrate AI literacy into curricula and assessments—approaches that correspond more closely with learning by altering incentives rather than merely raising enforcement barriers. The OECD’s Digital Education Outlook 2023 offers a parallel observation: despite extensive adoption of AI tools, few countries have enacted formal regulations, and existing guidance tends to be non-binding. This indicates that educational systems are adapting more organically through practice than through deliberate policy design.

Adopting a perspective that treats AI as educational infrastructure calls for comprehensive institutional redesign. Given the minimal cost of producing plausible text through AI, the educational value of traditional, text-based take-home assignments diminishes unless accompanied by evidence of the learning process. For educators, this means implementing more authentic assessments, including performance-based tasks, supervised writing, oral assessments, or collaborative work with clearly defined individual contributions. Administrators should incorporate AI policy into quality assurance processes, embedding it within program reviews, learning outcomes, and accreditation standards rather than relying on sporadic enforcement inside classrooms. Officials must recognize the resource implications of these changes and provide funding to cover transition costs, such as effective supervision, oral evaluation, and performance-based assessments, which demand additional time and staffing. Without such investment, institutions may default to inexpensive artifact-based assessments, thereby reinforcing the problematic dynamics associated with AI integration (UNESCO, 2025; OECD, 2023).

7. Stratification of Human Capital

Generative AI is frequently characterized as a potentially equalizing technology due to its capacity to enhance the performance of lower-skilled individuals across various tasks. Empirical evidence partially supports this depiction but also reveals complexities in its implications. For instance, a field study in customer support found an average productivity increase of 14%, with novices and less skilled workers experiencing larger gains (a 34% rise in issues resolved per hour), whereas highly skilled workers showed minimal improvement, and the conversation quality for the most skilled agents may have declined (Brynjolfsson et al., 2023). Similarly, in professional writing, ChatGPT reduced completion time while improving quality; in consulting tasks on the frontier of AI application, quality and speed increased most significantly among lower performers. These results indicate that generative AI can improve short-term task outputs and thus function as an equalizing force in that regard.

However, disparities in human capital do not stem solely from performance on isolated assisted tasks but from the ability to retain, build upon, and transfer skills when assistance is unavailable or unreliable. In a randomized controlled trial, Barcaui (2025) found that students who used ChatGPT while studying scored lower on retention tests than those who studied without AI assistance. This suggests that although such tools may help close performance gaps in the short term, they can impair long-term knowledge retention and potentially widen differences in underlying skills. Learners with stronger prior knowledge might use AI as a tool to enhance judgment, whereas those with weaker backgrounds may rely on it as a substitute for their own comprehension. The resulting stratification risk is thus indirect: AI can mask performance disparities while increasing divergence in critical skills such as verification, deep reasoning, and metacognitive control. These skills determine whether AI is used adaptively or as a crutch.

For educational practitioners, this implies that equity policies should focus on safeguarding skill development by giving all students structured and supervised AI use designed to support learning rather than substitute for it. Administrators should integrate “AI-augmented thinking” into academic support programs, emphasizing disciplined skepticism and verification over mere reliance, because confidence without competence can produce automation bias. Policymakers must prioritize foundational skill acquisition in the AI era because learners with weaker foundations tend to rely more on AI and are less equipped to detect its errors, thereby perpetuating inequality (NBER, 2023; Dell’Acqua et al., 2023; PNAS/PubMed, 2025; Barcaui, 2025).

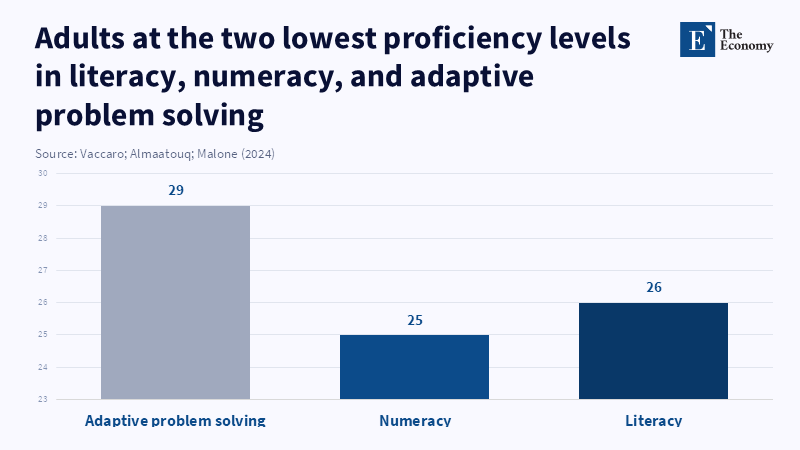

The current distribution of basic skills heightens the political and economic significance of this stratification. OECD data on adult skills reveal that approximately 26% of adults score at Level 1 or below in literacy, 25% in numeracy, and 29% in adaptive problem-solving, indicating a fragile skill base in areas important for verifying AI outputs and dealing with novel tasks (Schleicher and Scarpetta, 2024). According to the OECD’s PISA 2022 results, average student performance across OECD countries fell between 2018 and 2022 by 10 points in reading and nearly 15 points in mathematics; the report equates the mathematics decline to about three-quarters of a year of learning. In this context, generative AI may function as an “ability prosthesis” that masks these skill gaps, helping students complete credentials while leaving fundamental weaknesses unresolved until they are expected to perform without AI support. In economic terms, this constitutes a hidden liability: it remains invisible during AI-assisted evaluations but later surfaces as verification failures, diminished independent performance, and the need for extensive remedial interventions.

From an educational perspective, this underlines the importance of curricular designs that enforce mastery of basic skills without tool substitution, while employing AI to provide feedback and practice that preserve student effort. For administrators, integrating AI into learning analytics requires caution, because rising grades may not reflect genuine competence; low-stakes, supervised diagnostic assessments can estimate unaided proficiency and reduce reliance on potentially inflated performance indicators. Policymakers should recognize that the credibility of credentials may increasingly depend on national or sectoral standards that evaluate demonstrated competence, since the economic value of degrees depends on trust in the signals they convey (OECD, 2024; OECD, 2023; PISA).

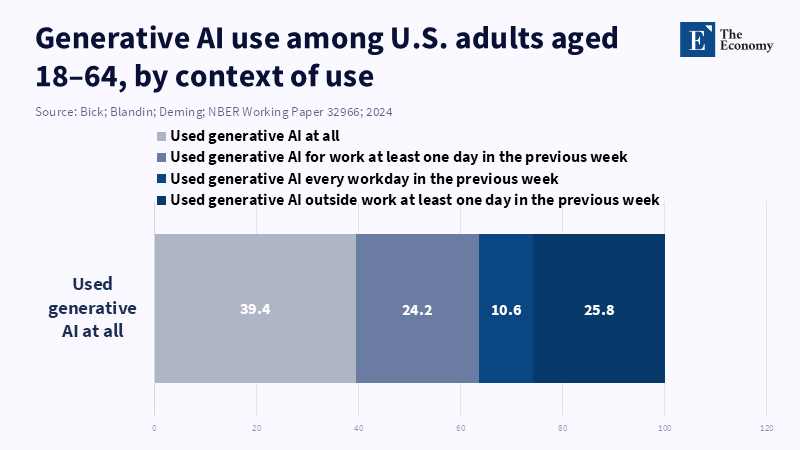

8. Economic Implications: The Future of Labor Supply

The implications of educational deformation for the labor market are further amplified by the rapid and extensive adoption of generative AI in the workplace. Nationally representative survey data from the United States indicate that by late 2024, nearly 40% of the population aged 18 to 64 had used generative AI. Among employed respondents, 23% reported using it for work at least once in the previous week, and 9% used it daily. Estimates suggest that generative AI assisted with 1% to 5% of total work hours, while self-reported time savings amounted to approximately 1.4% of all work hours. These figures imply that even at an early stage of adoption, generative AI may have macroeconomically significant effects (Bick et al., 2024).

However, short-term productivity improvements do not resolve concerns about the future development of human capital. For example, the field experiment in customer support demonstrated a 14% increase in productivity and accelerated learning for novices, but it was not designed to assess impacts on wages or employment at scale. Analogously, education systems face the possibility that early gains in output mask declines in long-term capabilities. If educational institutions produce more credentialed workers whose underlying skills have been weakened by reliance on AI, the labor market may experience a mismatch, with an oversupply of formal credentials and an undersupply of verified competencies. This situation could prompt employers to increase screening, expand on-the-job training, and depend more heavily on a limited pool of highly skilled workers capable of validating and supervising AI-assisted outputs (NBER, 2023; NBER, 2025).

A related concern arises from the interaction between exposure to AI in the labor market and educational capacity. Multiple estimates converge on the broad reach of AI exposure: an IMF analysis projects that about 40% of global employment is exposed to AI, with this figure rising to roughly 60% in advanced economies. Exposure is also notable in emerging markets (40%) and lower-income countries (26%). A complementarity metric suggests that roughly half of the exposed jobs in advanced economies may suffer negative effects, while the remainder could experience productivity enhancements (Pizzinelli et al., 2023). Similarly, the International Labour Organization’s task-based analysis predicts that augmentation, rather than automation, will predominate in many contexts, though it identifies greater exposure to automation in high-income countries (5.5% of employment potentially affected) than in low-income countries (0.4%). The share of employment with augmentation potential is estimated at 13.4% in high-income countries and 10.4% in low-income countries, with considerable gender differences. OpenAI’s task-alignment study for the U.S. workforce suggests that about 80% of workers could have at least 10% of their tasks influenced by large language models, while approximately 19% may see at least half of their tasks affected. Although these estimates differ and should not be aggregated mechanically, their joint implication is clear: skills related to verification, judgment, and adaptive problem solving will become economically critical because AI is transforming tasks extensively rather than only at the margins. This creates the greatest risk of educational deformation: if learners progressively depend on AI outputs rather than engaging in the cognitive work that fosters independent understanding, the labor force may become less adept at scrutinizing AI-generated information and less capable when AI systems malfunction.

The argument that AI improves productivity is thus valid but incomplete. For instance, a field experiment in math tutoring found that while AI-assisted practice improved immediate performance, it adversely affected later unassisted outcomes. Furthermore, a randomized controlled trial found lower long-term retention under AI-supported study conditions, suggesting that short-term productivity gains do not necessarily translate into sustainable skill development. Consequently, policies should treat the education-to-work pipeline as an interconnected system in which the diffusion of AI in the workplace increases the value of resilient cognitive skills, even as unstructured integration of AI within education may diminish their development (IMF, 2024; ILO, 2023; OpenAI/arXiv, 2023; PNAS/PubMed, 2025; Barcaui, 2025).

An effective response requires neither outright prohibition nor unrestricted acceptance of AI, but rather institutional reform designed to preserve human capital as a scarce and crucial resource. The European Union’s emerging regulatory framework on AI literacy offers a useful example, not because it resolves education policy challenges, but because it recognizes literacy as a mandatory aspect of AI deployment. Article 4 of the AI Act requires providers and deployers to take measures ensuring sufficient AI literacy among staff and other individuals who operate or use AI systems on their behalf. According to the European Commission’s clarification, Article 4 took effect on February 2, 2025, while enforcement will commence on August 3, 2026. This approach reflects a broader principle for education: sustaining cognitive capabilities cannot rely solely on informal practices once AI technologies become infrastructural. Within formal education, AI literacy should be narrowly and operationally defined as the capacity to (1) identify the inherent uncertainty of AI model outputs, (2) verify claims and citations, (3) understand the suitability of AI for particular tasks and recognize its limitations, and (4) sustain independent performance when AI assistance is constrained. The accompanying tables translate this analysis into concrete actions and identify a research agenda concentrated on mechanisms and measurable effects rather than abstract objectives (EU AI literacy Q&A, 2025; AI Act service desk FAQ, 2025; OECD Digital Education Outlook, 2023).

Conclusion: The Silent Erosion of Human Capital

The primary policy challenge posed by generative AI in education is not isolated instances of student cheating or machine error, but the risk that institutions accept a system in which performance improves while students’ independent abilities erode. A recent OECD report shows that AI use among students is already widespread, yet most regions lack comprehensive guidelines or enforceable regulations for its application. As a result, learning decisions are largely driven by grade incentives and the default configurations of technological platforms. Experimental research highlights a significant concern: while easy access to AI tools can boost assisted performance, it may lead to poorer outcomes when students are required to perform unaided and may undermine long-term knowledge retention. Labor market forecasts indicate that as AI becomes more pervasive, the economic value of skills such as verification, judgment, and flexible problem-solving will rise. Education systems risk failing to meet these demands if they encourage students to delegate the cognitive processes on which these skills depend. Addressing this challenge requires specific actions: redesigning assessments to capture reasoning processes, implementing AI tools that preserve student effort, and establishing verification literacy as a core competency. Achieving these objectives requires sustained investment and standards that recognize education as critical infrastructure for developing resilient cognitive abilities. Ultimately, an effective response to generative AI in education means not only safeguarding essential skills but also developing new educational models that harness AI’s potential while maintaining the integrity and adaptability of human learning.

References

Barcaui, A., 2025. ChatGPT as a cognitive crutch: evidence from a randomized controlled trial on knowledge retention. Social Sciences & Humanities Open, 12. DOI: 10.1016/j.ssaho.2025.102287.

Bick, A., Blandin, A. and Deming, D.J., 2024. The rapid adoption of generative AI. NBER Working Paper 32966. DOI: 10.3386/w32966.

Brynjolfsson, E., Li, D. and Raymond, L.R., 2023. Generative AI at work. NBER Working Paper 31161. DOI: 10.3386/w31161.

College Board, 2025. New research: majority of high-school students use generative AI for schoolwork.

Evaluating a custom chatbot in undergraduate medical education: randomised crossover mixed-methods evaluation of performance, utility, and perceptions, 2025. Medical Education, 59 (9), 1050-1059. DOI: 10.1111/medu.14956.

Evaluation of task-specific productivity improvements using a generative artificial-intelligence personal assistant tool, 2024. Research report.

Freeman, J., 2025. HEPI/Kortext AI survey shows explosive increase in the use of generative AI tools by students. Higher Education Policy Institute.

Gravel, J., D’Amours-Gravel, M. and Osmanlliu, E., 2023. Learning to fake it: limited responses and fabricated references provided by ChatGPT for medical questions. Mayo Clinic Proceedings: Digital Health, 1 (3), 226-234. DOI: 10.1016/j.mcpdig.2023.05.004.

International Labour Organization, 2024. AI and the future of work: global policy brief.

McDonald, N., Johri, A., Ali, A. and Hingle, A., 2024. Generative artificial intelligence in higher education: evidence from an analysis of institutional policies and guidelines. arXiv 2402.01659. DOI: 10.48550/arXiv.2402.01659.

OECD, 2023a. Digital Education Outlook 2023: Towards an Effective Digital Education Ecosystem. Paris: OECD Publishing.

OECD, 2023b. Employment Outlook 2023: Artificial Intelligence and the Labour Market. Paris: OECD Publishing.

OECD, 2023c. PISA 2022 Results (Volume I): Changes in Performance and Equity in Education between 2018 and 2022. Paris: OECD Publishing.

OECD, 2023d. Survey of Adult Skills 2023. Paris: OECD Publishing.

Ofgang, E., 2024. High-school math students used a GPT-4 AI tutor. They did worse. Tech & Learning, 23 September.

OpenAI, 2023. GPTs are GPTs: labour-market impact potential of large language models.

Oxford University Press, 2024. OUP AI survey.

Pizzinelli, C., Panton, A., Tavares, M.M., Cazzaniga, M. and Li, L., 2023. Labor-market exposure to AI: cross-country differences and distributional implications. IMF Working Paper 2023/216. DOI: 10.5089/9798400254802.001.

Tian, J. and Zhang, R., 2025. Learners’ AI dependence and critical thinking: the psychological mechanism of fatigue and the social buffering role of AI literacy. Acta Psychologica, 260. DOI: 10.1016/j.actpsy.2025.105725.

UNESCO, 2025. UNESCO survey: two-thirds of higher-education institutions have or are developing guidance on AI use.

Using scaffolded feed-forward and peer feedback to improve problem-based learning in large classes, 2022. Computers & Education, 182. DOI: 10.1016/j.compedu.2022.104446.

Vaccaro, M., Almaatouq, A. and Malone, T., 2024. When combinations of humans and AI are useful: a systematic review and meta-analysis. Nature Human Behaviour, 8 (12), 2293-2303. DOI: 10.1038/s41562-024-02024-1.

Zhang, L. and Graf, E.A., 2025. Mathematical computation and reasoning errors by large language models. arXiv 2508.09932. DOI: 10.48550/arXiv.2508.09932.