[AI Labor vs. Human Labor] The AI–Labor Cost Mismatch: Why AI Is Still More Expensive Than Human Labor


1 The Economy Research, 71 Lower Baggot Street, Dublin 2, Co. Dublin, D02 P593, Ireland

2 Swiss Institute of Artificial Intelligence, Chaltenbodenstrasse 26, 8834 Schindellegi, Schwyz, Switzerland

This article examines an emerging dissonance between the economic forecasts made for AI and its present costs at the frontier. Between 2023 and today, public and business conversations have frequently assumed a direct progression from large AI investments to swift adoption to wide-scale labor substitution. That narrative is too simple. The primary argument is that AI does not currently substitute for human labor at a lower cost because it is delivered as capital-intensive infrastructure rather than as commodity software. Large initial investments are still required in compute (processors, GPUs, TPUs), in physical hardware (servers, data centers), in power infrastructure, cooling systems and networking, and in governance structures and integration. The cost of actually running a model is metered and exposed to volatile price and performance risk.

This article defines the current moment as an early phase of short-term adoption and cost discrepancy: firms are purchasing AI capacity before cost, pricing and reliability assumptions have stabilized, and before sufficient scale exists to support broad labor substitution. These are not necessarily signs of AI's failure but markers of an early industrial phase characterized by real, though uneven, productivity gains and by augmentation rather than replacement. Labor substitution will proceed piecemeal and in a differentiated manner, starting with standard tasks and moving to more complex ones as AI's cost and implementation advantages increase. Disciplined investment is required. Clear cost accounting, strong validation methods and attention to power requirements, the labor market and inequality are the necessary policy and business responses.

1. Introduction - The AI–Labor Cost Paradox

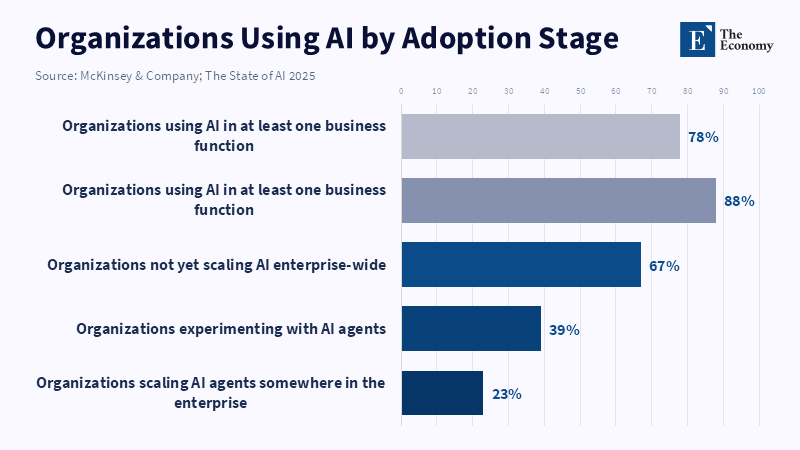

A fairly simple public narrative has dominated the discourse on AI since 2023. Enormous capital expenditures were to be matched by enormous rates of adoption, which were, in turn, to translate into dramatic rates of labor displacement. This narrative captured one phenomenon and skipped two others. The captured phenomenon was enormous pressure on deployment. In 2024, 78 percent of organizations used AI in at least one business function, and 65 percent of organizations reported in a global 2024 survey that they used generative AI on an ongoing basis, double the share of ten months prior.[1] The two skipped phenomena were the staggering size of private AI investment ($109.1B in 2024 in the United States alone, with $33.9B going specifically to generative AI globally in 2024)[2] and the fact that, rather than the economy adopting abundant cheap AI, enormous capital is being invested before the technology's cost has come down to human levels, before the infrastructure is widely available and before reliable performance has been demonstrated at scale.[3]

The missed step in the prevailing narrative is not a technological step but an economic one. AI does not currently enter the firm in the same cost form as the labor it is supposed to replace. Salaried labor is a recurrent operating expenditure whose variability is bounded by existing contracts, headcount decisions and routines. Frontier AI, by contrast, arrives first as an infrastructure cost. It requires hardware, data center infrastructure, high-speed networking, ample cooling, electricity purchases, software integration, control and coordination layers, a new range of compliance and governance considerations and model validation routines. Even before questions of model error and the resulting productivity gains and losses arise, the firm faces a fundamentally different balance-sheet proposition. The question the firm faces is not simply, "Can the AI perform X part of a job?" but, "Can it perform X part of a job more cheaply, more reliably and more predictably than humans can once the full infrastructure cost is included?" The evidence to date answers that question far less favorably for immediate labor displacement than it does for adoption.

This article attempts to correct the common narrative about the path of AI in the United States between 2023 and 2026. The current phase of enormous investment, deployment and task automation does not signal that AI has become an abundant, cheap substitute for human labor. Rather, it is a phase in which frontier AI is spreading far faster than its cost is coming down or its reliability can be established. The result is a short but potent period in which firms have a strong incentive to buy AI before costs have stabilized and before performance is sufficient for automation. Consequently, a firm simultaneously faces enormous pressure to acquire the technology and a variety of situations in which human staff must remain to review or confirm AI-generated output, re-engaging with tasks the firm thought had been automated away by this latest phase of investment.

These corrections matter because a policy framework is emerging that rests on assumptions that do not match the evidence. It is true that the cost of running models, though still high as of 2025, is falling rapidly and that AI applications will soon be widespread. But capital commitments are outpacing revenues in many parts of the frontier market;[4] electricity demand is spiking, capacity is limited and governance burdens are mounting.[5] The risk is not only that investments will be miscalculated. It is also that institutions will structure labor market relations, budgeting processes and workforce planning around the assumption of cheap, imminent substitution, when the available technology is very costly, non-uniform and suited to highly specific tasks, and cannot yet stand in for the vast range of capabilities that human beings collectively possess.

2. The economic structure of AI today

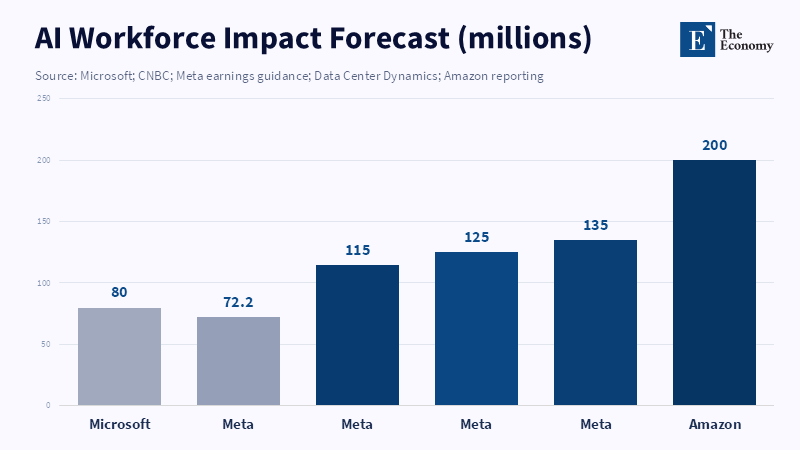

The economics of AI today rest on an asymmetry relative to labor: for organizations building or deploying AI at the highest level, the cost arrives first as capital expenditure. Recent corporate guidance shows the scale of the capital-expenditure problem. Microsoft committed $80 billion in FY2025 for AI-enabled data-center construction,[6] while Meta Platforms reported capital expenditures of $72.2 billion in 2025 and guided to $115 billion to $135 billion in 2026, with infrastructure identified as the main driver of expense growth.[7] Amazon has also committed to approximately $200 billion in capital expenditures in 2026,[8] attributing sharply falling free cash flow to increased purchases of property and equipment for AI. These are not simply software payments of the kind associated with buying an ERP system. They are building blocks for utility-scale infrastructure that is digital at the interface layer and physical at the infrastructure layer.[9]

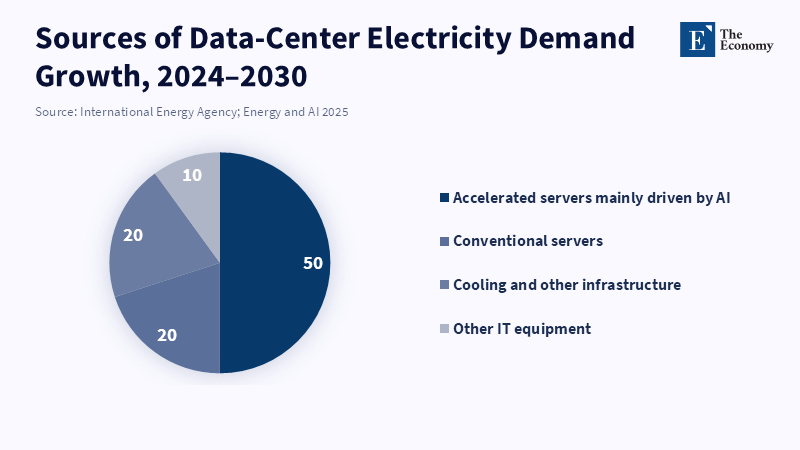

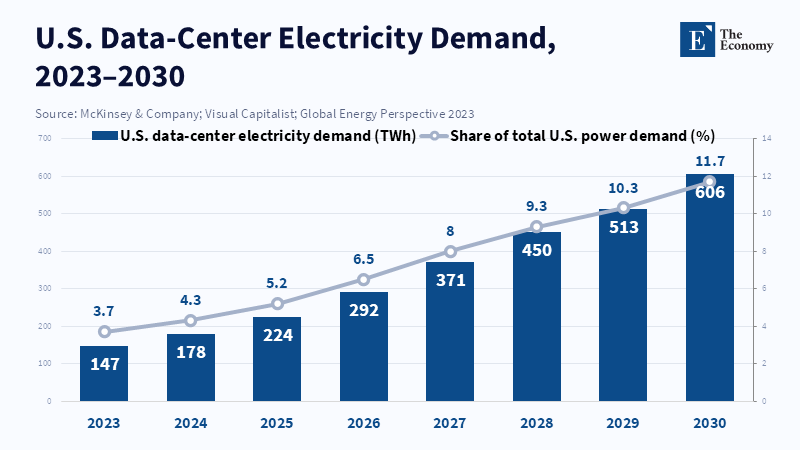

This heavy upfront infrastructure expenditure is merely the first element of the cost stack for today's most advanced AI systems. The second layer consists of operating costs, predominantly related to physical computing resources. Electricity consumption by data centers and their associated infrastructure is projected to more than double: in the IEA's base-case scenario, demand grows from 460 TWh per year in 2024 to more than 1,000 TWh per year in 2030.[10] Although a data center can in principle be built in a year or two, planning grid infrastructure takes much longer. Accelerated hardware for AI is the dominant driver of the increase, with its electricity demand growing roughly 30 percent per year,[11] and cooling and complementary infrastructure add further significant loads.
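The growth rate implied by these two endpoints can be checked with a short calculation. The sketch below is illustrative only: it assumes smooth compound growth between the 2024 and 2030 figures quoted above, and the function name `implied_annual_growth` is introduced here rather than taken from the IEA report. Because the 2030 figure is stated as "more than 1,000", the result is a lower bound.

```python
def implied_annual_growth(start_value: float, end_value: float, years: int) -> float:
    """Constant annual growth rate that takes start_value to end_value in `years` years."""
    return (end_value / start_value) ** (1 / years) - 1


# Figures quoted in the text: 460 TWh per year (2024) rising to
# at least 1,000 TWh per year (2030) in the base-case scenario.
START_TWH = 460.0
END_TWH = 1000.0
YEARS = 2030 - 2024

rate = implied_annual_growth(START_TWH, END_TWH, YEARS)
print(f"Implied average growth: {rate:.1%} per year")
```

Under these assumptions the average comes to a little under 14 percent per year for data centers as a whole, well below the roughly 30 percent attributed to accelerated hardware, which is consistent with that hardware being a fast-growing part of a larger total.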

Though renewable energy accounts for roughly half of the new electricity supply in this scenario, natural gas and coal also make up large parts of it, providing flexible and rapid generation for spiking demand.[12] So, while part of an AI system can be reduced to model weights and parameters, the physical layer has proven remarkably persistent.

In stark contrast, the costs associated with labor have not inflated in the same way. The OECD reports that as of Q1 2025, real wages were still below their early 2021 levels in fully half of OECD countries.[13] And though annual real wage growth had turned positive in 33 of 37 OECD countries (averaging 2.5 percent), a March 2025 wage update indicated that about two-thirds of countries were still below 2021 levels at the close of 2024.[14] The point is not that labor is cheap in an absolute sense. The point is that it has not faced anything resembling an infrastructure price shock. Firms comparing modest year-on-year nominal wage increases to enormous spike-shaped capital-expenditure curves and rapidly increasing recurrent utility costs are not comparing apples to apples. They are comparing the stable recurrent cost of labor with a highly volatile, upfront, capex-intensive model operating under conditions of acute shortage for basic infrastructural inputs. That is the root cause of the overshooting in the substitution story.

This is where the system's oddity comes in. On the surface, using AI seems to involve no cost beyond the price of the tokens consumed. For the firms building the underlying systems, however, conditions are analogous to those in other industrial construction and procurement sectors. The short-run problem for firms looking to deploy this technology at scale is obtaining the machines and the electrical infrastructure required, both of which are severely supply-constrained. Until this gap is closed, managers cannot realistically treat the system as a cheap labor replacement; it is a vast and very expensive infrastructure buildout.

3. The short-term mismatch

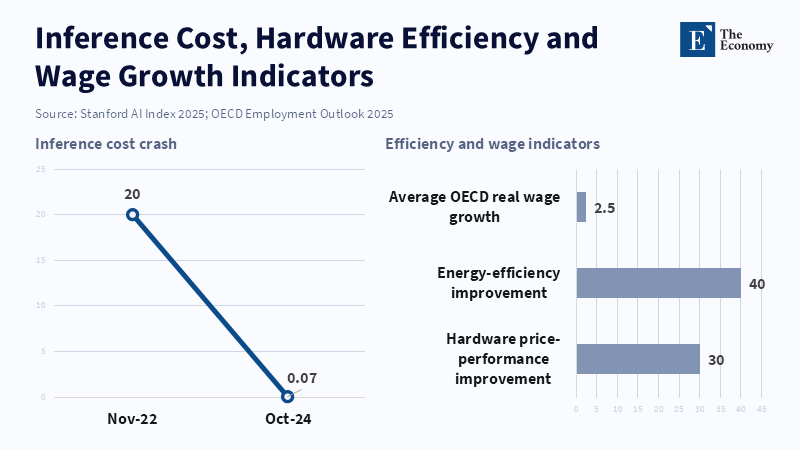

The first short-term mismatch is a temporal one. Corporations are adopting AI while infrastructure is still being built ahead of optimization. The telltale sign is the coexistence of two facts that point in opposite directions. On one side, the cost of inference at GPT-3.5-level performance fell from $20 per million tokens to $0.07 between November 2022 and October 2024,[15] hardware price-performance improved about 30% annually and energy efficiency grew about 40% annually.[16] On the other, the infrastructure (energy and facilities) for running at scale is hobbled by long build times, grid constraints and substantial upfront capital outlays. The crucial inference is not that costs won't get lower. It is that firms are buying infrastructure while the cost curve is still falling steeply. They are paying an infrastructure premium before the system has matured enough for the economics of labor substitution to stabilize.
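To put the inference price decline in perspective, the following sketch converts the two price points quoted above into a fold reduction and an implied halving time. It assumes a constant exponential decline over the 23 months between November 2022 and October 2024, which is a simplification, and the function names are hypothetical.

```python
import math


def fold_reduction(start_price: float, end_price: float) -> float:
    """How many times cheaper the end price is than the start price."""
    return start_price / end_price


def halving_time_months(start_price: float, end_price: float, months: int) -> float:
    """Months needed for the price to halve under constant exponential decline."""
    return months * math.log(2) / math.log(start_price / end_price)


# Price points quoted in the text, in USD per million tokens.
START_PRICE = 20.00   # November 2022
END_PRICE = 0.07      # October 2024
MONTHS = 23           # assumed elapsed time between the two observations

fold = fold_reduction(START_PRICE, END_PRICE)
halving = halving_time_months(START_PRICE, END_PRICE, MONTHS)

print(f"Price fell roughly {fold:.0f}-fold")
print(f"Implied halving time: {halving:.1f} months")
```

A price that halves roughly every three months illustrates the timing risk described above: capacity committed to today may look expensive within a year.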

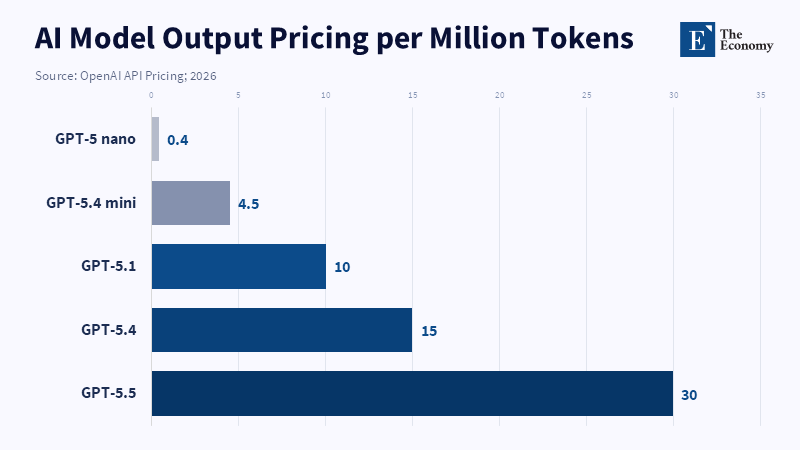

The second is a mismatch of pricing. Early adoption and prosumer usage benefited from flat or nearly flat subscription tiers, while the underlying economics of intensive use have always been variable. Anthropic's tier system (Pro/Max) and its documentation make clear that usage limits are enforced (for chat and coding alike) and that reaching a limit leads to the option of purchasing additional usage or migrating to a pay-as-you-go plan;[17] OpenAI's business-tier Codex product is marketed without a fixed seat cost on a pay-as-you-go model, with API costs varying dramatically by model and by input, cached-input and output tokens.[18] These patterns are significant. Flat fees work for initial penetration and adoption. However, as individual power users or agentic systems reach high and bursty token throughput, flat fees become economically unsustainable. Added pressure comes from the fact that many leading model producers are still running large losses: Reuters reported in 2024 that OpenAI would likely lose $5 billion that year,[19] in large part because of compute spend, and internal concerns about whether revenue growth can support data center costs were reportedly still present in 2026.

The third is a mismatch of expectations. Companies began this cycle hoping for replacement and have instead found augmentation. The International Labour Organization concluded in 2023 that the greatest impact of generative AI on jobs will be augmentation. Its 2025 report reinforced this, stating that while a quarter of global employment is subject to some potential risk, few jobs will be wholly automatable.[20] The constraints on full automation include infrastructure (and consequently the cost and time of access), digital skills and ongoing operational friction. Usage data from a model provider corroborates this, surprising as it may seem from outside: Anthropic reports that Claude usage is again predominantly augmentation rather than automation[21] and remains heavily concentrated in a few tasks such as coding, writing and a select number of back-office functions; it has not diffused broadly across the economy. Labor is affected, but not by the simplistic one-for-one replacement many executives envisioned.

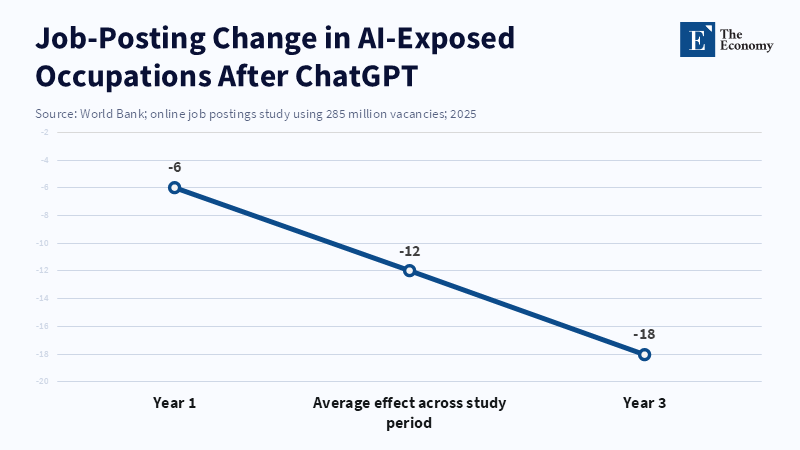

Labor-market evidence supports this interpretation. A 2025 World Bank working paper analyzing 285 million online job posts found that occupations more exposed to AI substitution were less likely to be posted after the launch of ChatGPT. Specifically, postings fell 12% more among more exposed than among less exposed occupations, with the gap growing from 6% after one year to 18% by year three.[22] The jobs most affected were entry-level and administrative support functions. This is not a story of system-wide replacement. Rather, the impact is selective and margin-specific, falling on standardized, entry-level cognitive tasks. Labor demand has thus already shifted, through a combination of substitution for some narrowly defined clusters of tasks and augmentation of much of the workforce, all while considerable human oversight is still required to produce stable output.

4. Why AI can still be more expensive than labor

AI can still be more expensive than labor, despite appearing more productive, because the comparison is usually framed the wrong way. Human labor is normally purchased as a bundle of predictable availability, tacit judgment, responsibility and boundable error in exchange for a wage-and-benefits package. Frontier AI, however, is purchased as a system of high fixed costs and non-trivial marginal costs. The pricing for the Anthropic API makes this clear: list prices currently run from $1 per million input tokens and $5 per million output tokens for Claude Haiku 4.5 to $5 per million input tokens and $25 per million output tokens for Claude Opus 4.7 (in addition to caching rates).[23] The Anthropic API also sets spend limits by usage tier, along with request and token rate limits.[24] Similarly, the pricing for flagship OpenAI models is tiered for input, cached input and output tokens,[25] with additional charges for tool use. This is more than an accounting quirk; it means "AI labor" is not bought in a single lump sum but is metered, capacity-constrained and sensitive to workflow design. While an employee may be underutilized at certain times of day, an agentic system's cost may actually increase when demand is at its highest.
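The metering described above can be made concrete with a small cost calculation. This sketch uses only the list prices quoted in the text; the model keys, the workload figures (requests per month and tokens per request) and the function names are hypothetical, and caching and tool-use charges are ignored.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TokenPrice:
    """List price in USD per million tokens."""
    input_per_million: float
    output_per_million: float


# Prices as quoted in the text; the dictionary keys are illustrative labels only.
PRICES = {
    "haiku-4.5": TokenPrice(input_per_million=1.0, output_per_million=5.0),
    "opus-4.7": TokenPrice(input_per_million=5.0, output_per_million=25.0),
}


def request_cost(price: TokenPrice, input_tokens: int, output_tokens: int) -> float:
    """Metered cost in USD of a single request."""
    total = input_tokens * price.input_per_million + output_tokens * price.output_per_million
    return total / 1_000_000


# Hypothetical workload: 50,000 requests per month,
# each with 4,000 input tokens and 1,000 output tokens.
REQUESTS_PER_MONTH = 50_000
INPUT_TOKENS = 4_000
OUTPUT_TOKENS = 1_000

monthly_costs = {
    name: REQUESTS_PER_MONTH * request_cost(price, INPUT_TOKENS, OUTPUT_TOKENS)
    for name, price in PRICES.items()
}

for name, cost in monthly_costs.items():
    print(f"{name}: ${cost:,.2f} per month")
```

With these illustrative numbers the same workload costs five times as much on the larger model, and the bill scales linearly with request volume, which a salary does not.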

This leads to the problem of overuse and token economies. While a flat monthly fee creates the illusion of a fixed price for the end user, the underlying costs borne by the provider fluctuate wildly. Indeed, Anthropic's support pages now encourage power users to enable higher usage, upgrade, or use pay-as-you-go plans[26] when they hit included caps. OpenAI's business line also separates subscription plans for seats (intended for collaboration) from the more granular, token-priced plans of developer workflows. The logic is simple: once usage becomes more intensive, agentic, or constant, the metaphor of a subscription falls away and the economic realities of metered infrastructure return. This contributes to the continued pressure on margins in the frontier-model sector: while revenue may scale with usage, the demand for computing resources scales with both usage and intensity. When the two are not tightly aligned, perceived growth in users fails to translate into stable profits.

A deeper concern, however, lies in reliability and oversight. A model may be cheap to operate on a per-token basis but expensive to verify, which can make it more costly than human labor. Stanford HAI research found that even specialized legal research tools produce errors on between 17% and 34% of queries[27] (compared to 58%-82% for general chatbots on legal queries). A 2026 Nature study found that, across generations, AI systems continue to exhibit overconfidence regardless of question difficulty,[28] indicating that confident language is an unreliable proxy for what a model actually knows. Even more critically, a 2025 Nature Communications study showed that out-of-the-box language models deviate from Bayesian standards in probability updating,[29] though they can be taught to do better with targeted instruction. In finance, the Alan Turing Institute reported in 2024 and 2025 that current experimentation is largely limited to low-risk applications such as retrieval and internal drafting rather than the automation of business decisions, and that explainability, accountability and stress-testing remain the dominant concerns for high-stakes use cases.[30] This is why there is a hidden human layer in much of what people believe is being accomplished entirely by AI: fact-checking, error correction, escalation, auditability and risk management.
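One way to see why verification can dominate is to write the cost of an AI-assisted task as the model charge plus human review plus expected rework. The sketch below is a simplified model under stated assumptions: only the 17% and 34% error rates come from the text, while the wage, review time, rework time and per-task model charge are hypothetical placeholders.

```python
def assisted_task_cost(
    model_cost: float,
    error_rate: float,
    review_minutes: float,
    rework_minutes: float,
    hourly_wage: float,
) -> float:
    """Expected cost in USD of one AI-assisted task when a human reviews every output."""
    review_cost = review_minutes / 60 * hourly_wage
    expected_rework_cost = error_rate * rework_minutes / 60 * hourly_wage
    return model_cost + review_cost + expected_rework_cost


# Hypothetical parameters, chosen only for illustration.
HOURLY_WAGE = 60.0      # USD per hour for the reviewing professional
MODEL_COST = 0.05       # USD of metered usage per task
REVIEW_MINUTES = 10     # time to check one output
REWORK_MINUTES = 30     # time to redo an output found to be wrong

results = {}
for error_rate in (0.17, 0.34):  # error-rate range quoted in the text
    total = assisted_task_cost(
        MODEL_COST, error_rate, REVIEW_MINUTES, REWORK_MINUTES, HOURLY_WAGE
    )
    results[error_rate] = total
    human_share = 1 - MODEL_COST / total
    print(f"error rate {error_rate:.0%}: ${total:.2f} per task, "
          f"{human_share:.1%} of it human time")
```

In this illustration the model charge is a few cents while oversight costs many dollars, so the error rate and review burden, not the token price, drive the total.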

It's important to acknowledge the strongest counterarguments; they are not incorrect but merely more circumscribed than they appear. Experimental studies have demonstrated significant benefits for completion time (40% less) and output quality (18% higher)[31] on mid-level professional writing tasks when users had access to ChatGPT (published in Science). Furthermore, nearly all organizations pursuing leading scaled generative-AI initiatives were reporting tangible ROI in early 2025, with approximately 20% achieving returns of 31% or higher (Deloitte).[32] Late 2025 survey data also indicated that 88% of respondents were already using AI in at least one business function,[33] although enterprise-wide adoption was still in its early stages. These figures confirm that AI offers substantial value, but they do not suggest that AI is already a universally cheaper substitute for human labor. Instead, they indicate that AI performs well in tasks that are bounded, high-volume and language-intensive, particularly when workflows are redesigned around its use. Thus, the calculator or search engine analogy has limitations: calculators are deterministic and cheap and search engine queries retrieve indexed information. Generative AI, in contrast, produces probabilistic outputs with variable quality depending on domain, prompting, context and verification protocols, all of which are powered by scarce computational resources. The issue is not whether AI is useful, but whether it has reached a point where it reliably and affordably replaces human labor on a large scale, which, in most areas, it has not.

5. Conditions for the turning point

This cost disadvantage won't be permanent, since most of the factors behind high AI costs are symptoms of an early industrial phase rather than a mature services phase. The most straightforward condition for a turning point is falling costs of computation. The 2025 Stanford AI Index documents the spectacular fall in inference costs for GPT-3.5-level performance from late 2022 to late 2024, along with annual gains in hardware price-performance and energy efficiency. Model and systems optimization has reinforced this trajectory and continues to do so. In 2025, DeepSeek intensified the price war through off-peak API price cuts of as much as 75%,[34] in the context of an ongoing cost war ignited by low-cost model launches. In 2026, Google Research released TurboQuant,[35] a technique for compressing models and achieving efficient key-value cache compression without accuracy loss. The broader point is that there are many mathematical avenues toward lowering effective compute costs (better routing, quantization, compression, caching, distillation and workload scheduling), not simply more hardware.

A second condition is the evolution of pricing. The fiction that all usage can be bundled profitably into flat monthly subscription rates is already ending. Anthropic has launched large additional usage tiers and has formalized a path for heavy usage. OpenAI has separated its collaboration plans from its pay-as-you-go coding infrastructure product. A new segmented pricing structure is emerging: subscriptions for light and medium use, metered consumption for agentic and development loads and enterprise arrangements for reserved capacity or guaranteed availability. These pricing structures are more economically efficient than universal flat rates and fundamentally change how firms make adoption decisions. For vendors, they allow for greater monetization and less long-term subsidization of heavy users; for enterprises, they increase price transparency and force explicit forecasting, but also reduce certainty regarding spending. The effect will be more targeted, rather than broadly diffused, adoption.

The third condition is a reliability threshold. AI doesn't replace labor at scale until it is not only less expensive but also reliable across many repetitions, auditable and proven under real-world conditions. Crossing that threshold requires significant reductions in hallucination rates, better uncertainty handling, stronger complex reasoning and a reduced need for human review. There are demonstrably real advances (benchmark performance grew sharply in 2024 and frontier capabilities continued their steady rise), but the AI Index notes persistent difficulty with complex reasoning,[36] and financial industry researchers continue to call for accountability, explainability and robustness under distributional shift. Even as infrastructure matures, this reliability barrier will remain an economic dividing line. Highly standardized linguistic contexts and templated, routine processes with stable feedback loops will cross it sooner than specialized domains with unstable data, such as financial markets, corporate accounting judgments or legal interpretations that require nuanced understanding. In these domains the limiting factor will be not just price but control.

The maturation of infrastructure points in the same direction. IEA scenarios show that efficiency advances in hardware, software and system design have a substantial effect on electricity use[37] relative to a trajectory of simple scaling, but these scenarios also project that fossil fuels will be crucial in the interim because demand is outstripping the ability of clean and reliable capacity to keep up. The path to lower costs will therefore be a gradual accumulation of more conventional hardware, data center operating experience, model efficiency gains and expanded generation capacity. The turning point will not be a moment of uniform price undercutting across labor markets but a steady process of convergence, driven by cost trends, pricing architectures and reliability benchmarks that differ by industry.

6. Firm-level decision framework

Firms will derive the most value where labor costs are high, tasks are routine and results can be scaled across many identical applications with little customization. This has driven the proliferation of AI tools in software engineering, routine document creation, customer-service routing and back-office processing. Anthropic's Economic Index found coding to be the largest usage cluster in late 2025,[38] comprising about one-third of Claude.ai use and almost half of first-party API use, with office and administrative tasks showing increasing use in managing email, documents, scheduling and other workflows. Controlled experiments have confirmed that performance on professional writing tasks can be substantially improved. Klarna's 2024 launch exemplifies the promise to management:[39] its AI assistant is said to have handled two-thirds of customer service requests, cut resolution times from 11 minutes to under 2 and done work equivalent to that of 700 full-time workers.

The conditions under which firms will step back are the opposite of these. Highly regulated industries such as finance have focused their experiments on low-risk information gathering and internal outputs rather than business process automation; accountability, regulatory oversight and the need for robust stress testing under shifting conditions became central concerns in that industry's 2025 follow-up report. Law firms have faced frequent, non-trivial hallucination in specialized applications. Unique internal functions, such as firm-specific accounting processes that blend complex rules with expert judgment, also resist automation. In these areas, AI is not just another employee but a source of significant technical, legal and reputational risk. Klarna's 2025 correction, in which the company's CEO suggested that it may have "over-indexed" and needed to readjust[40] while simultaneously rehiring, demonstrates that initial efficiency gains do not cancel out the economic value of service quality.

A mixed systems paradigm currently represents a realistic state: companies are not choosing purely human or purely AI production but operating hybrid models where AI performs preliminary drafts, classification, data retrieval, sorting, summarization, coding support and workflow enhancement and humans execute final review, manage exceptions, exercise client judgment and provide overall accountability. This aligns with broad surveys, which show extensive organizational use of AI with limited scaling and with labor statistics that show decreased demand for vulnerable roles while many jobs are transformed rather than automated, as indicated by World Bank and ILO reports.[41] Mixed, rather than full, integration is the order of the day.

7. Implications for the AI industry

What does this mean? For AI providers, it means monetizing in line with industrial reality. They cannot continue operating in a fantasy world where infrastructure-scale inputs can be priced like consumer applications. Frontier developers are pulled in three different directions at once: huge fixed costs, volatile and often bursty inference demand and a market increasingly sensitive to cost as cheaper alternatives emerge. This can already be observed in massive capex, metered API pricing, spend ceilings, more higher-tier subscriptions for heavy users and recurring instances of revenue not keeping pace with computational ambition.[42] Providers' strategic task, therefore, is not simply to attract more users. They must align their pricing with actual cost structures, mitigate exposure to hardware and energy costs and avoid developing business models that are overly reliant on the subsidized use of compute-intensive applications.

What this means for businesses is continuous, selective reassessment rather than strict adherence to or rejection of rapid automation. The relevant question isn't "how much will we use AI?" It's "how much will it cost to implement AI relative to labor costs once validation, security checks, redesign of workflows and variable usage are factored in?" This requires a far greater level of discipline in corporate accounting than businesses are accustomed to; pricing changes favoring metered use will force this discipline. Consumers' reliance on fixed-fee subscriptions will decline as their actual usage becomes more transparent. Even if businesses are resistant to this change as their variable costs increase, this resistance could be healthy if it spurs a more rigorous examination of return on investment and more stringent governance. Businesses most likely to succeed will separate automation tasks with high levels of confidence from those with high levels of uncertainty, rather than applying a uniform approach to both.
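The question posed above can be framed as a break-even calculation: fixed implementation costs are recovered only if each AI-assisted task is cheaper than the human-only equivalent, and only after enough tasks have been processed. The sketch below is a minimal illustration; every number in it is hypothetical, and `break_even_tasks` is a name introduced here.

```python
from typing import Optional


def break_even_tasks(
    fixed_cost: float,
    ai_cost_per_task: float,
    human_cost_per_task: float,
) -> Optional[float]:
    """Number of tasks needed to recover fixed_cost, or None if AI never breaks even.

    ai_cost_per_task should already include metered usage, validation and review.
    """
    saving_per_task = human_cost_per_task - ai_cost_per_task
    if saving_per_task <= 0:
        return None  # each task costs at least as much as the human-only baseline
    return fixed_cost / saving_per_task


# Hypothetical inputs: workflow redesign, security review and validation setup.
FIXED_COST = 250_000.0
HUMAN_COST_PER_TASK = 30.0

favourable = break_even_tasks(FIXED_COST, ai_cost_per_task=18.0,
                              human_cost_per_task=HUMAN_COST_PER_TASK)
unfavourable = break_even_tasks(FIXED_COST, ai_cost_per_task=32.0,
                                human_cost_per_task=HUMAN_COST_PER_TASK)

print(f"Favourable case: break-even after about {favourable:,.0f} tasks")
print("Unfavourable case:",
      "never breaks even" if unfavourable is None else f"{unfavourable:,.0f} tasks")
```

The design choice worth noting is that the per-task AI cost is an all-in figure: if validation and variable usage push it above the human baseline, no task volume recovers the fixed outlay.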

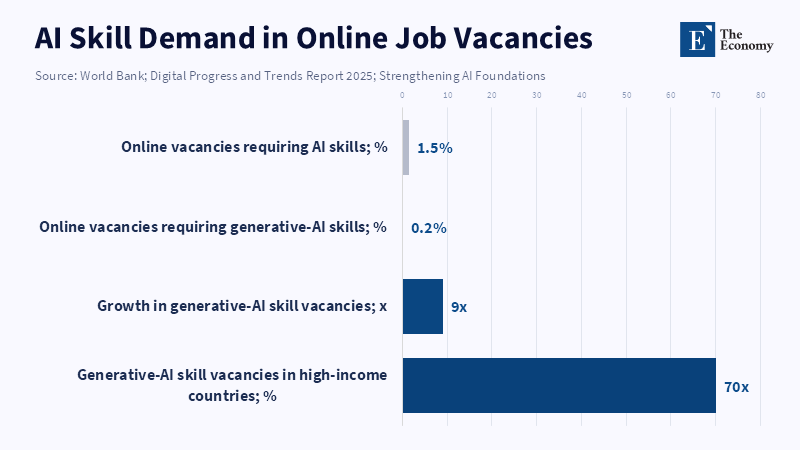

Labor-market implications of this shift are more subtle than both techno-optimistic and techno-pessimistic views allow. Automation has not been forgone; rather, it is deferred, selective and structurally asymmetric. The observed trend in job postings clearly shows the earliest declines in administrative-support and entry-level professional roles. At the same time, demand for skilled labor is increasing, especially for workers able to combine subject-matter expertise with AI-powered task execution. However, this demand still represents a fraction of the overall labor market; the World Bank's 2025 digital skills report found that just 1.5 percent of all online jobs require AI skills.[43] Job postings specifying generative AI increased ninefold from 2021 to 2024 but still reached only 0.2 percent of all job postings, of which 70 percent were in high-income countries.[44] Additionally, the World Bank reports that consumer use of generative AI is also sharply skewed towards high-income countries.[45] The likely long-term effect on society, therefore, will not be the immediate loss of work; instead, we are likely to see increased stratification of jobs, a shrinking of entry-level administrative roles, greater rewards for those who can both oversee and integrate AI and widening gaps between the wealthy and the poor internationally, especially as computing infrastructure, technical skills and institutional support remain scarce.

These trends suggest several critical policy interventions. Labor-market policy must prioritize pathways into high-skilled work, ensuring that entry routes remain open as entry-level, clerical and administrative roles dwindle. Regulatory policy needs to demand greater clarity in enterprise accounting practices regarding the costs of AI systems, the time and money spent on validation and where the locus of decision-making lies for AI systems deployed in high-stakes environments such as financial services, law or healthcare. Infrastructure policy must shift its emphasis from the software side of AI towards ensuring the availability of power and sufficient capacity in the form of updated grid systems, faster permitting processes and adequate competition to avoid creating unnecessarily concentrated computing markets. Finally, inequality policy must acknowledge that the development and deployment of AI are not occurring on a level playing field. Without concrete measures, the gains resulting from decreased computing costs will primarily benefit the companies and nations that already possess greater advantages in capital, energy and specialized human talent.

8. Conclusion - The Hybrid Phase Before AI Becomes Predictable at Scale

This is neither a point of AI failure nor definitive evidence that the substitution of labor is bound to occur. It is rather an intervening phase that shows a gap between where the technology is expected to be and the economic form in which it exists. AI has spread rapidly, but it has spread first as expensive infrastructure, whose productivity is real but intermittent, whose reliability is improved but not perfect and whose organizational worth is deeply determined by where in the process it is located. What the evidence from 2023 to 2026 suggests in striking unison is that compute-heavy systems are already affecting labor demand in predictable entry-level and back-office work, but have not yet become cheaper and more predictable than human workers once full capital cost, energy burden, metering and human validation are taken into account.

The proper policy response is therefore neither laissez-faire nor an immediate call to arms. Firms need to stop treating adoption as a proxy for value and assess the full economics of substitution. Providers need to account for actual usage and reduce their vulnerability to infrastructure bottlenecks. Policymakers must focus on targeted responses to displacement, on protecting entry-level work, and on building the foundations of energy, competition and skills that will determine who benefits when the cost curve finally falls. The question is one of timing, not direction. Costs will continue to fall, models will continue to improve, and at some point certain tasks will cross the substitution threshold. But the threshold will be crossed unevenly, and the institutions that prepare for a protracted hybrid phase rather than an immediate revolution will be the ones that manage the shift well.

References

[1] McKinsey & Company. The State of AI in 2024; Stanford Institute for Human-Centered Artificial Intelligence. AI Index Report 2025.

[2] Stanford Institute for Human-Centered Artificial Intelligence. AI Index Report 2025.

[3] Stanford Institute for Human-Centered Artificial Intelligence. AI Index Report 2025.

[4] Trefis Team. ‘OpenAI’s Big Spending’. Forbes. 5 November 2025.

[5] International Energy Agency. Electricity 2026.

[6] Microsoft. Statement on FY2025 AI-enabled data-center investment. 2025.

[7] Meta Platforms. 2025 results and 2026 capital-expenditure guidance.

[8] Amazon. 2026 capital-expenditure guidance and property-and-equipment spending disclosures.

[9] McKinsey & Company. ‘AI Data Center Growth: Meeting the Demand’. 2024.

[10] International Energy Agency. Energy and AI: Energy Demand from AI. 2025.

[11] International Energy Agency. Energy and AI: Energy Demand from AI. 2025.

[12] International Energy Agency. Electricity 2026; U.S. Department of Energy. ‘FOTW #1304, August 21, 2023: In 2023, Non-Fossil Fuel Sources Will Account for 86% of New Electric Utility Generation Capacity in the United States’. 21 August 2023.

[13] OECD. OECD Employment Outlook 2025. 2025.

[14] OECD. Real Wages Continue to Recover. 2025.

[15] Stanford Institute for Human-Centered Artificial Intelligence. AI Index Report 2025; Techopedia. ‘Is AI Cheap Now? Inside the AI Inference Cost Crash’. 2025.

[16] Stanford Institute for Human-Centered Artificial Intelligence. AI Index Report 2025.

[17] Anthropic. ‘Understanding Usage and Length Limits’. Anthropic Help Center. 2025.

[18] OpenAI. API Pricing. 2026.

[19] Reuters. Reporting on OpenAI losses and compute spending. 2024; Trefis Team. ‘OpenAI’s Big Spending’. Forbes. 5 November 2025.

[20] International Labour Organization. Generative AI and Jobs: A Global Analysis of Potential Effects on Job Quantity and Quality. 2023; International Labour Organization. Generative AI and Jobs: A 2025 Update. 2025.

[21] Anthropic. Anthropic Economic Index. 2025/2026.

[22] World Bank. Working paper on AI exposure and online job postings. 2025.

[23] Anthropic. Claude API Pricing. 2026.

[24] Anthropic. Claude API Rate Limits. 2026.

[25] OpenAI. API Pricing. 2026.

[26] Anthropic. ‘Understanding Usage and Length Limits’. Anthropic Help Center. 2025.

[27] Stanford Institute for Human-Centered Artificial Intelligence. Research on hallucination in legal AI tools.

[28] Nature. Study on AI overconfidence. 2026.

[29] Nature Communications. Study on language models and Bayesian updating. 2025.

[30] Alan Turing Institute. The Impact of Large Language Models in Finance. 2024; ESMA and Alan Turing Institute. LLMs in Finance. 2025.

[31] Noy, S. and Zhang, W. ‘Experimental Evidence on the Productivity Effects of Generative Artificial Intelligence’. Science. 2023.

[32] Deloitte. State of Generative AI in the Enterprise. 2025.

[33] McKinsey & Company. The State of AI. 2025.

[34] Reuters. ‘China’s DeepSeek Cuts Off-Peak Pricing by up to 75%’. 2025.

[35] Google Research. ‘TurboQuant: Redefining AI Efficiency with Extreme Compression’. 2026.

[36] Stanford Institute for Human-Centered Artificial Intelligence. AI Index Report 2025.

[37] International Energy Agency. Energy and AI: Energy Demand from AI. 2025; International Energy Agency. Electricity 2026.

[38] Anthropic. Anthropic Economic Index. 2025/2026.

[39] Klarna. Company press release on AI assistant and customer-service automation. 2024.

[40] Reuters. Reporting on Klarna’s AI course correction and renewed hiring. 2025.

[41] World Bank. AI and labor-market evidence. 2025; International Labour Organization. Generative AI and Jobs: A 2025 Update. 2025.

[42] Trefis Team. ‘OpenAI’s Big Spending’. Forbes. 5 November 2025; OpenAI. API Pricing. 2026; Anthropic. ‘Understanding Usage and Length Limits’. 2025.

[43] World Bank. Digital Progress and Trends Report 2025: Strengthening AI Foundations. 2025.

[44] World Bank. Digital Progress and Trends Report 2025: Strengthening AI Foundations. 2025.

[45] World Bank. Digital Progress and Trends Report 2025: Strengthening AI Foundations. 2025.