[AI Labor vs. Human Labor] The AI Productivity Illusion Is Not a Labor Strategy

AI shifts costs more than it cuts them. Speed gains often hide rework and risk. Firms should use AI to support expertise, not replace it.

In the AI economy, it is easy to conflate quicker output with increased productivity. A worker writes a report in five minutes, a chatbot responds to a query in seconds, and a coding assistant fills a screen with seemingly functional code. On paper, the value seems clear. But in reality, the invoice often comes a little later: a senior worker checks the logic, a lawyer considers the legal risk, a manager edits the client communication, an engineer rewrites the code that looks good but doesn't quite work within an actual system. That is the productivity illusion of AI. This doesn't mean AI has no value. It does mean that many companies count the speed of initial drafts but ignore the cost of the final output. This explains why, in many firms, employment can fall while AI investment rises, without clear proof that total costs are falling. Usually, the work isn't going away. It is being shifted from payroll into computing, management, rework, and capital.

The AI productivity illusion begins with the wrong cost model

The current discussion frames AI as a replacement for human work, but that is the least insightful way to interpret the evidence. A better vantage point is that AI makes firms reallocate their spending, and makes the question of who bears the burden of proof more salient. It can make some procedures quicker, support junior workers, or automate certain repetitive actions. But a task isn't necessarily a job, and increased speed isn't synonymous with increased value. The unit that matters is not the token of output (the gigabyte of sound, the page of text, the block of code, the formulation of a question), but the verified outcome (a decision, a case or project resolved, a matter filed or closed, a code version deployed, a system released, a client outcome realized). When firms mistake one for the other, they create a cost model that appears optimized on a presentation slide but not in practice. Salaries are a tangible budget item; token bills, cloud contracts, model upgrades, data cleaning, prompt-security tools, and review queues are often scattered across less visible budgets. That lets managers kick the can down the road and call the new system cheaper before the full bill arrives.

This is why the AI productivity illusion is so seductive. It counts the seconds a worker spends cranking out a draft, but often neglects the minutes another worker spends reviewing it, testing it, correcting the logic, and explaining the problem. It makes the first handoff look like the whole job. It treats output as "evidence" of productivity. More code, more emails, more reports, more tickets all count as progress. But many companies are now learning that machine speed can cause human drag. The real unit of work should be the finished decision, the settled customer case, the bug-free release, or the adopted professional opinion. By that measure, AI is often an effective tool. It is not a cheap stand-in for expertise.

Time and delay remain hidden in the cost model. Fifteen minutes to create a report may seem acceptable until you realize that such a report is never just a report. It may also require an accuracy check by a subject matter expert, a legal review of any associated risks, validation of the data's origin with the data owner, and sign-off from a superior who judges whether the final output is relevant. This is hardly an exception. It is everyday work in any knowledge environment where the stakes are real and errors have a measurable cost. The illusion of AI productivity persists because the machine finishes the easiest, most visible layer fastest. Everything else is still human; the output just looks better and gets there quicker.
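The gap between draft speed and finished work can be made concrete with a small calculation. The sketch below is illustrative only: the `TaskTiming` fields, the single-rework assumption, and every number are hypothetical, chosen to show the shape of the problem rather than to report measured results.

```python
from dataclasses import dataclass


@dataclass
class TaskTiming:
    """Minutes spent on one deliverable. All figures are hypothetical."""
    draft_minutes: float    # time to produce the first draft
    review_minutes: float   # expert check, legal review, sign-off
    rework_minutes: float   # time to fix one rejected draft
    rework_rate: float      # share of drafts needing one rework pass (0 to 1)


def minutes_per_verified_outcome(t: TaskTiming) -> float:
    """Expected minutes until the deliverable is accepted.

    Simplifying assumption: a rejected draft is fixed once and then accepted.
    """
    if not 0.0 <= t.rework_rate <= 1.0:
        raise ValueError("rework_rate must be between 0 and 1")
    return t.draft_minutes + t.review_minutes + t.rework_rate * t.rework_minutes


# Hypothetical scenario: the assisted draft is much faster to produce,
# but needs heavier review and is reworked more often.
manual = TaskTiming(draft_minutes=120, review_minutes=30, rework_minutes=40, rework_rate=0.10)
assisted = TaskTiming(draft_minutes=15, review_minutes=60, rework_minutes=90, rework_rate=0.50)

draft_speedup = manual.draft_minutes / assisted.draft_minutes
outcome_speedup = minutes_per_verified_outcome(manual) / minutes_per_verified_outcome(assisted)

print(f"Draft speedup:   {draft_speedup:.1f}x")
print(f"Outcome speedup: {outcome_speedup:.2f}x")
```

Under these made-up inputs the draft is produced eight times faster, yet the verified outcome arrives only about 1.3 times faster. A firm that measures the first number and budgets on it would overstate the gain.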

Layoffs and AI spending are not proof of savings

The paradox of layoffs alongside rising spending is now central. Leading tech companies have laid off people or slowed headcount growth even as they increased capital expenditure on AI infrastructure, often within the same reporting period. That does not necessarily mean AI is displacing workers more cheaply, nor even that the overall cost is falling. It might mean something less glamorous: firms are pulling money out of their labor force before the performance of the new system is known. The market rewards this because AI spending signals a bet on the future. Boards prefer the language of efficiency; investors prefer a story of scale. But it is entirely possible to cut headcount and increase total costs at the same time, if the new model relies on costly computing, scarce chips and power, expensive data center capacity, rare engineers, and models that are expensive to run and maintain.

It's an accounting issue. Layoffs are easy to measure: the pain is obvious and swift. The cost of AI is spread across many lines: capex, cloud contracts, supplier fees, data-center investments, software and cybersecurity resources, legal reviews, and expert teams. A company might claim to have trimmed its headcount while moving its operating base to a more expensive one. That may prove to be a good long-term investment, but it isn't the same thing as demonstrating savings. Until the business can show that AI has cut the total cost of completed work, a headcount reduction is nothing more than a cost shift.

The reality is more nuanced than the hype suggests. In formal experiments, generative AI has produced gains in specific tasks, and those benefits shouldn’t be overlooked. In a professional-writing task, work was completed more quickly and at higher quality. Customer support agents were able to close more tickets per hour, with the largest improvement among those with less experience. Consultants also performed better with GPT-4 on tasks well suited to the technology. These results are significant. They demonstrate how AI can improve productivity when workers operate within the limits of their tools, with clear feedback and a quality standard to optimize toward. However, the same body of research notes drops in performance when work strays beyond the model's capabilities. That is where most meaningful, skilled work lies: in nuance, discontinuity, feedback, and responsibility.

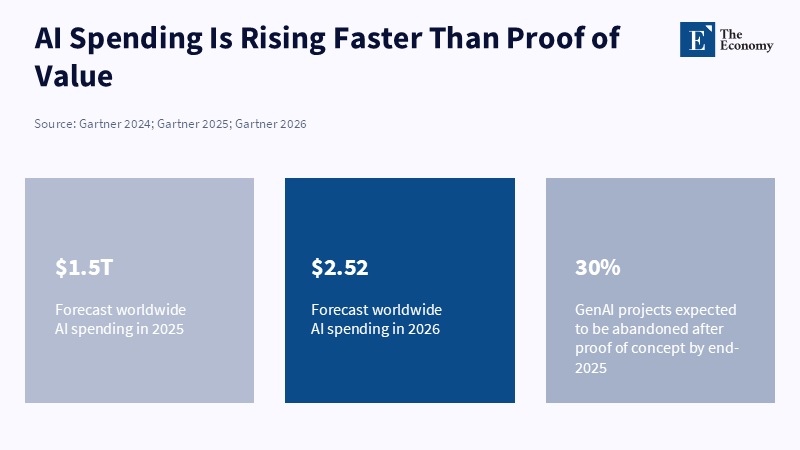

The paradox deepens when capital expenditure is set side by side with project failure. Rapid growth in global AI and IT investment has been accompanied by high failure rates among generative AI projects, many of which are abandoned after proof of concept because of poor data, inadequate risk controls, spiraling costs, or the inability to justify a business case. This isn't a small detail. For many businesses, it amounts to buying a production system before they have designed the production process. In a traditional information technology project that would be called poor implementation, but in AI it is often dressed up as transformation. The key point is that capital expenditure is sticky. A worker can be removed from payroll quickly, but a data center, cloud contract, or model dependency can lock a firm into years of spending.

Expertise is where the illusion breaks

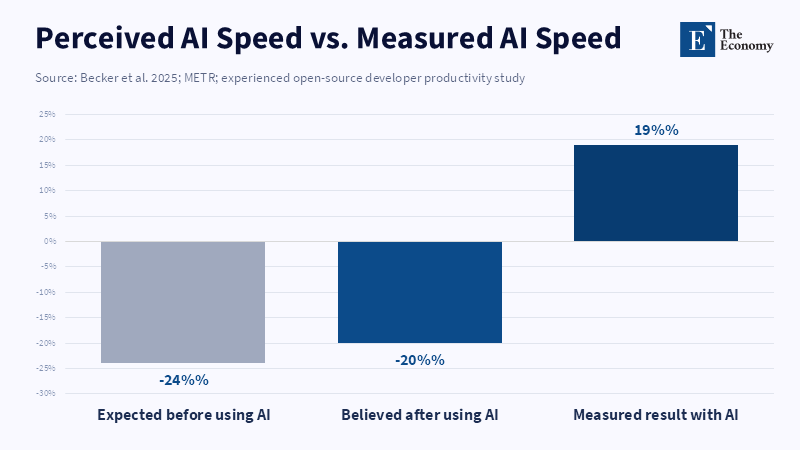

The strongest argument against simple replacement is not sentimental: it's operational. LLMs are fluent systems, not accountable workers, and that distinction shapes every serious application. They can summarize, draft, search, categorize, translate, and recommend. They do not bear the responsibility of knowing why a rule applies or who is harmed by a mistaken decision. In law, research has documented high hallucination rates on legal questions, even when the questions are concrete and verifiable. In software, a 2025 randomized trial found that experienced open-source programmers working in familiar repositories took longer with AI tools, even though they thought the tools made them faster. That discrepancy between felt and actual speed is the AI productivity illusion in its purest form.

The same problem occurs in tax, compliance, finance, health, engineering, insurance, procurement, and public administration. The hardest part is almost never the first draft. It is the multilayered logic. It is the bridge from a regulation to a pattern of facts. It is the lesson learned from prior failures, the awareness of edge cases, and the resolve to say that a quick answer is dangerous. Companies that dismiss the people who carry this knowledge may see a temporary reduction in cost. But then the control layer thins out, perhaps without everyone involved understanding the risk. Mistakes travel further before they are caught. Senior professionals have to audit more with less context, while less experienced employees receive less coaching. The firm gets quicker at producing artifacts, but slower at making judgments.

The old lesson about professionals and "just Googling" still applies. Search can help a lawyer, accountant, engineer, analyst, or doctor locate material more quickly. It does not provide the professional judgment needed to determine which material is relevant, which rule governs, and which exception changes the result. AI magnifies this pattern. It can shorten the search, drafting, and trial-and-error stages. For some workers, this is a substantial improvement. But it does not eliminate the need for trained judgment in layered cases. A tax answer may depend on the year, location, income category, exemption, filing status, and interaction with other rules. A statistical model may depend on its assumptions, sampling design, error model, and domain-specific knowledge. A model may find patterns, but it cannot inherently understand that the data may require an alternative estimator, weighting scheme, or causal hypothesis. A program may produce a result and still be dangerous. In these instances, AI labor relies on human labor to be effective.

A better policy would put prices on rework, risk, and learning

The policy lesson isn't to give up on AI. That would be lazy and would miss real improvements in routine knowledge work. Instead, the lesson is to stop treating AI as a labor saving until the organization can measure the full cost of the system around it. Every realistic AI business case should account for the cost of compute, subscriptions, integration, security, data preparation, energy use, model evaluation, legal review, human validation, error correction, and lost skills. It should also account for the cost of work that looks finished but simply raises the effort required of somebody else. Recent research on "workslop" gives this problem a name: slick AI output that lacks the substance to advance a task. Workslop can be perilous because it turns one worker's time savings into someone else's hidden workload.
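A business case built along these lines can be reduced to one comparison: total cost divided by verified outcomes, before and after adoption. The sketch below is a minimal illustration under stated assumptions. The function name, the line-item labels, and all amounts are hypothetical and are not drawn from any firm's accounts.

```python
from typing import Mapping


def cost_per_verified_outcome(line_items: Mapping[str, float], verified_outcomes: int) -> float:
    """Total annual cost across all line items, divided by verified outcomes."""
    if verified_outcomes <= 0:
        raise ValueError("verified_outcomes must be positive")
    return sum(line_items.values()) / verified_outcomes


# Hypothetical annual figures for one team, before and after AI adoption.
before = {"salaries": 600_000}
after = {
    "salaries": 350_000,
    "compute_and_subscriptions": 90_000,
    "integration_and_security": 60_000,
    "data_preparation": 40_000,
    "model_evaluation": 20_000,
    "legal_review": 30_000,
    "human_validation": 70_000,
    "error_correction": 50_000,
}

# The payroll-only view shows a saving; the full view may not.
payroll_saving = before["salaries"] - after["salaries"]
total_change = sum(after.values()) - sum(before.values())

unit_cost_before = cost_per_verified_outcome(before, verified_outcomes=1_000)
unit_cost_after = cost_per_verified_outcome(after, verified_outcomes=1_100)

print(f"Payroll saving:        {payroll_saving:,.0f}")
print(f"Change in total cost:  {total_change:+,.0f}")
print(f"Unit cost before:      {unit_cost_before:,.2f}")
print(f"Unit cost after:       {unit_cost_after:,.2f}")
```

With these invented inputs, payroll falls by 250,000 while total cost rises by 110,000, and the cost per verified outcome goes up even though more outcomes are delivered. Different inputs could just as easily show a real saving; the point is that only the full ledger can tell the two cases apart.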

A better approach would be to separate augmentation from substitution before the budget is approved. Use AI where the problem scope is bounded, data is stable, the cost of error is low, and the audit trail is comprehensive. Reserve human agency for tasks that depend on it: judgment, accountability, tacit expertise, and external conditions that change. This is not a soft compromise. It is hard economics. Change the metrics, too. Don't value teams by their volume. Value them by fewer defects, faster verified decisions, less rework, improved customer value, better audit trails, and clearer lessons learned. Don't accept the platitude that job cuts funded by AI spending prove a public benefit. They may be nothing more than a sign of a capital shift with inadequate measurement.

The test is simple. If AI really is cheaper than humans, its cost advantage should hold up in the first audit after the pilot. It needs to stay cheap even after cloud costs rise, after staff review the outputs, after bugs get fixed, after data are protected, after legal protocols are followed, and after the next upgrade changes the workflow. Until then, the AI productivity illusion will continue to promote the wrong story. Firms will be praised for replacing visible salaries with invisible systems. The next wave of AI policy has to be less star-struck by speed and tougher on value. Companies should not just ask whether AI produced faster results; they should ask whether the results were correct, useful, safe, cheaper after review, and trustworthy. That's the difference between automation that boosts productivity and automation that just shuffles work into a harder-to-spot corner.

The views expressed in this article are those of the author(s) and do not necessarily reflect the official position of The Economy or its affiliates.

References

Becker, J., Rush, N., Barnes, E. and Rein, D. (2025) ‘Measuring the impact of early-2025 AI on experienced open-source developer productivity’, METR, 10 July.

Brynjolfsson, E., Li, D. and Raymond, L.R. (2023) ‘Generative AI at work’, NBER Working Paper Series, No. 31161. Cambridge, MA: National Bureau of Economic Research.

Dahl, M., Magesh, V., Suzgun, M. and Ho, D.E. (2024) ‘Large legal fictions: profiling legal hallucinations in large language models’, Journal of Legal Analysis, 16(1), pp. 64–93.

Dell’Acqua, F., McFowland III, E., Mollick, E.R., Lifshitz-Assaf, H., Kellogg, K.C., Rajendran, S., Krayer, L., Candelon, F. and Lakhani, K.R. (2023) ‘Navigating the jagged technological frontier: field experimental evidence of the effects of AI on knowledge worker productivity and quality’, Harvard Business School Working Paper, No. 24-013.

Gartner, Inc. (2024) ‘Gartner predicts 30% of generative AI projects will be abandoned after proof of concept by end of 2025’, Gartner Press Release, 29 July.

Gartner, Inc. (2025) ‘Gartner says worldwide AI spending will total $1.5 trillion in 2025’, Gartner Press Release, 17 September.

Gartner, Inc. (2026) ‘Gartner says worldwide AI spending will total $2.5 trillion in 2026’, Gartner Press Release, 15 January.

Golan, Y. (2026) ‘The AI productivity illusion: when speed masks cognitive cost’, Forbes Technology Council, 16 March.

Mann, H. (2026) ‘The AI productivity illusion: fixing the blind spots to make genuine gains’, I by IMD, 26 January.

Maslej, N., Fattorini, L., Perrault, R., Gil, Y., Parli, V., Kariuki, N., Capstick, E., Reuel, A., Brynjolfsson, E., Etchemendy, J., Ligett, K., Lyons, T., Manyika, J., Niebles, J.C., Shoham, Y., Wald, R. and Walsh, T. (2025) Artificial Intelligence Index Report 2025. Stanford, CA: Stanford Institute for Human-Centered Artificial Intelligence.

McKinsey & Company (2025) ‘The cost of compute: a $7 trillion race to scale data centers’, McKinsey & Company, 28 April.

Niederhoffer, K., Rosen Kellerman, G., Lee, A., Liebscher, A., Rapuano, K. and Hancock, J.T. (2025) ‘AI-generated “workslop” is destroying productivity’, Harvard Business Review, 22 September.

Noy, S. and Zhang, W. (2023) ‘Experimental evidence on the productivity effects of generative artificial intelligence’, Science, 381(6654), pp. 187–192.

Rogelberg, S. (2026) ‘Nvidia executive: the cost of AI tools is “far beyond” the cost of human workers’, Fortune, 28 April.

Svanberg, M., Li, W., Fleming, M., Goehring, B. and Thompson, N. (2024) ‘Beyond AI exposure: which tasks are cost-effective to automate with computer vision?’, SSRN Electronic Journal, 19 January.