[AI Labor vs. Human Labor] The Four Tests Before AI Replaces Human Labor

AI will replace human labor only when it becomes cheaper, more reliable, and easier to manage than people. The next 3-4 years will bring selective task automation, not mass job replacement. The main risk is not total unemployment, but weaker entry-level career paths and greater pressure on workers.

Sixty-four percent of U.S. adults now believe artificial intelligence will lead to fewer jobs over the next 20 years. That is not an irrational fear, even though the technology remains in its early days. The big question is no longer whether AI can write, code, classify, summarize, or follow customer instructions. It can do all of that now. The harder question is where and when AI labor substitution will be low-cost, dependable, and simple to control at scale. That is not yet true for most human jobs. It will happen first in tasks where mistakes cost little, feedback is instant, and the work can be repeated until the quality is high enough. Work based on judgment, trust, risk, and messy context will lag behind. The next round of AI labor substitution will not be a single wave but a series of tests: a cost test, a reliability test, and a management test. The next 3-4 years will probably not bring rapid wholesale substitution. More likely are deliberate automation of small, well-bounded tasks, deeper use of AI in professional assignments, and a wait-and-see approach to jobs that depend on trust, judgment, and accountability.

AI Labor Replacement Starts at a Threshold, Not a Demo

Public discourse tends to treat AI labor replacement as a contest between human skills and machine skills. This is the wrong framing. A tool can be very impressive and still be too costly to displace human labor. The better question is when the cost of oversight becomes low enough for replacement to make sense. A chatbot can get a demo right and still fail on the job, where a tiny flaw can create legal, safety, or customer risk. That is why the crucial metric in the AI labor debate is not the benchmark score. It is the cost of a fully trusted task.

This is important because so many firms are buying AI before they know its true price. The visible price, such as a subscription or usage fee, is only part of the total. The less visible costs, such as oversight and verification, matter because they determine whether AI can move from assistance to substitution. They also include the cost of failure. When a human makes an error, a manager can coach, discipline, or fire that person. When an AI system errs at scale, the firm must fix a workflow, trace a model output, explain a decision, and regain trust. That is not cheap labor. That is a new operating system for work.
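The idea of a "trusted task" cost can be made concrete with a rough expected-cost comparison. The sketch below is illustrative only: the `TaskCosts` fields, the function names, and every number are assumptions chosen for the example, not figures from any source cited in this article.

```python
from dataclasses import dataclass


@dataclass
class TaskCosts:
    """Hypothetical per-task cost inputs (every value is an assumption)."""
    inference: float        # compute or API cost of one AI attempt
    review_minutes: float   # human oversight time spent per task
    reviewer_hourly: float  # loaded hourly cost of the reviewer
    error_rate: float       # share of AI outputs that are wrong and slip through
    failure_cost: float     # average cost of one undetected error


def trusted_task_cost(c: TaskCosts) -> float:
    """Visible price plus oversight plus the expected cost of failure."""
    oversight = c.review_minutes / 60 * c.reviewer_hourly
    expected_failure = c.error_rate * c.failure_cost
    return c.inference + oversight + expected_failure


def substitution_makes_sense(c: TaskCosts, human_cost: float) -> bool:
    """True when the fully loaded AI cost undercuts the human cost per task."""
    return trusted_task_cost(c) < human_cost


# Same model price and error rate, two very different risk classes.
routine_reply = TaskCosts(0.05, 1, 60, 0.02, 10)
contract_review = TaskCosts(0.05, 10, 120, 0.02, 5000)

print(trusted_task_cost(routine_reply), substitution_makes_sense(routine_reply, 5.0))
print(trusted_task_cost(contract_review), substitution_makes_sense(contract_review, 40.0))
```

Under these made-up inputs the model price is identical in both cases; what separates them is the oversight time and the cost of an undetected error.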

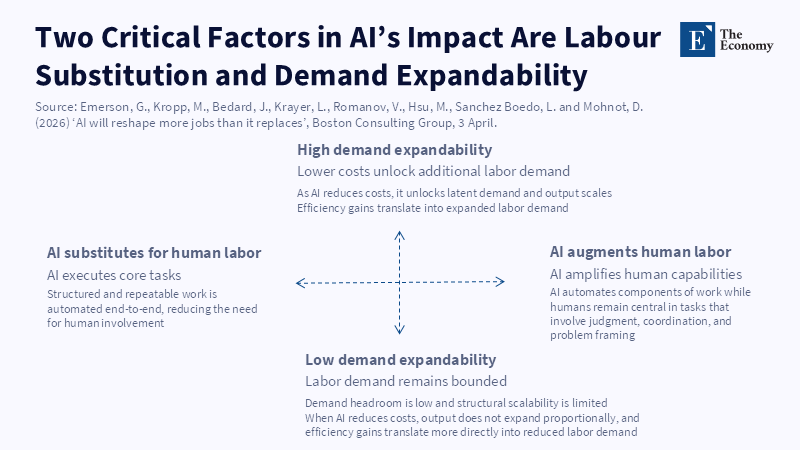

The strongest evidence points to a split. AI labor replacement will move quickly for structured, routine, and easy-to-verify work, and slowly for open-ended tasks or tasks where the right answer depends on understanding why a problem exists. Moving from ordinary least squares to generalized least squares has little to do with trying answers until one works. It requires knowing that some observations should carry different weights, what the problem in the data is, and why the estimator should change. Even when many options are tested, human knowledge constrains the problem before the machine begins calculating. AI will move into trial-and-error work faster than into diagnosis, assumption-testing, and judgment-based work.
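The least-squares example can be sketched in a few lines. This is a minimal illustration in plain Python, assuming the simplest form of generalized least squares: a diagonal error covariance, which reduces to weighted least squares. The data, the noise pattern, and the function names are all invented for the example.

```python
import random


def weighted_line_fit(xs, ys, weights):
    """Fit y = a + b*x by minimizing sum(w_i * (y_i - a - b*x_i)**2)."""
    sw = sum(weights)
    swx = sum(w * x for w, x in zip(weights, xs))
    swy = sum(w * y for w, y in zip(weights, ys))
    swxx = sum(w * x * x for w, x in zip(weights, xs))
    swxy = sum(w * x * y for w, x, y in zip(weights, xs, ys))
    slope = (sw * swxy - swx * swy) / (sw * swxx - swx ** 2)
    intercept = (swy - slope * swx) / sw
    return intercept, slope


def ols_fit(xs, ys):
    """Ordinary least squares: every observation gets the same weight."""
    return weighted_line_fit(xs, ys, [1.0] * len(xs))


def gls_fit(xs, ys, variances):
    """GLS with a diagonal covariance: weight each point by 1 / variance."""
    return weighted_line_fit(xs, ys, [1.0 / v for v in variances])


# Synthetic data (an assumption): true line y = 2 + 3x, noise grows with x.
rng = random.Random(0)
xs = [1.0 + 9.0 * i / 199 for i in range(200)]
sigmas = [0.5 * x for x in xs]
ys = [2.0 + 3.0 * x + rng.gauss(0.0, s) for x, s in zip(xs, sigmas)]

print("OLS (intercept, slope):", ols_fit(xs, ys))
print("GLS (intercept, slope):", gls_fit(xs, ys, [s * s for s in sigmas]))
```

The arithmetic is the easy part. The step the code cannot take by itself is deciding that the noise variance grows with `x` and passing the right `variances`; that is the diagnosis the paragraph above describes.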

Falling Inference Cost Will Decide the First Wave

The labor substitution effect will grow as the cost of inference declines. Inference cost is the cost of running a model in use, after it has been trained. It is the day-to-day price of using AI. When inference is expensive, AI is used to assist workers; when it is cheap, firms start to consider redesigning jobs around AI. That shift is already visible in planning forecasts. Gartner estimates that by 2030 the cost of inference for a one-trillion-parameter model will have fallen by over 90 percent relative to 2025. Compared with the early models of similar size that were popular in 2022, models in this class could be 100 times more cost-effective. That is an enormous shift in relative cost. Some AI labor that is prohibitively expensive today may then be only "just software" expensive.

Cheaper tokens do not necessarily imply cheaper labor. At a given token price, more capable AI agents may require longer prompts, larger context windows, repeated internal checks, tool use, and multi-step reasoning. A simple chatbot reply may be cheap. A workplace agent that handles scheduling, searching, validation, writing, editing, record keeping, and reporting may be expensive. And that is the trap. In digital markets, as machines become cheaper, firms simply use more of them. Storage became cheaper, so firms kept more. Bandwidth got cheaper, so firms streamed more. And as AI gets cheaper, firms will ask it to do more. The labor effect will be determined by whether the extra utilization replaces paid work (making that work, relatively, more expensive) or just provides more product (making that product, relatively, cheaper).
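A back-of-the-envelope calculation shows why a lower token price and a lower task price are not the same thing. The sketch below is hypothetical throughout: the per-million-token prices, the token counts, and the number of agent steps are assumptions picked only to illustrate the arithmetic.

```python
def task_cost(steps, input_tokens, output_tokens, price_in, price_out):
    """Cost of one task when each step sends and receives a fixed token count.

    Prices are in currency units per one million tokens.
    """
    per_step = (input_tokens * price_in + output_tokens * price_out) / 1_000_000
    return steps * per_step


# Assumed prices per million tokens (not taken from any vendor's price list).
PRICE_IN, PRICE_OUT = 1.0, 4.0

# One short chatbot reply versus a 40-step agent that re-reads a long context.
chat_reply = task_cost(1, 500, 300, PRICE_IN, PRICE_OUT)
agent_today = task_cost(40, 20_000, 1_500, PRICE_IN, PRICE_OUT)

# The same agent after an assumed 90 percent drop in token prices.
agent_cheaper = task_cost(40, 20_000, 1_500, PRICE_IN * 0.1, PRICE_OUT * 0.1)

print(f"chat reply:           {chat_reply:.4f}")
print(f"agent, today's price: {agent_today:.4f}")
print(f"agent, 90% cheaper:   {agent_cheaper:.4f}")
```

With these inputs, the agent task still costs about 60 times as much as today's single chat reply even after the price cut, because the number of steps and the context size dominate the bill.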

This is why the hardware bottleneck is at the heart of the labor question. A business cannot replace workers with AI when chips, memory, power, and data center bandwidth are tight or expensive. The next 3 to 4 years will thus likely deliver a peculiar combination. At the unit level, AI will improve and become cheaper. But the system as a whole may still be costly because resource demand keeps rising. Memory shortages and other bottlenecks will likely steer the cost gains mostly to the upper end of the market, keeping costs high for ordinary business users. The outcome will thus not be mass replacement but selective replacement. Businesses will focus automation on narrow tasks that offer measurable gains and hold back from replacing whole jobs.

The Pricing Model Will Change the Job Model

The monthly subscription conceals the true economics of replacing labor with AI. Most workers pay a fixed monthly price for a tool that, once paid for, is almost free at the margin. But companies operating large AI systems get no such magic. They have to contend with servers, chips, cloud agreements, usage limits, and vendor markups. As AI use expands, pricing is likely to shift from per-seat licenses to pay-as-you-go, tiered usage rates, pay-for-results, and bundled packages. This will matter because it will let managers see the true cost of each AI task.

As pricing becomes more granular, firms will sort work into three types. The first will be automation, where AI is cheap enough, fast enough, and good enough. Basic classification, first drafts, simple checks and redrafts, routine customer replies, and low-stakes coding will fall into this category. The second will be augmentation, where AI increases output without removing the human worker. Analytics, design, finance, legal review, health care, research, and management will sit in this category. The third will stay human, where work is based on trust, presence, negotiation, care, or judgment under uncertainty.

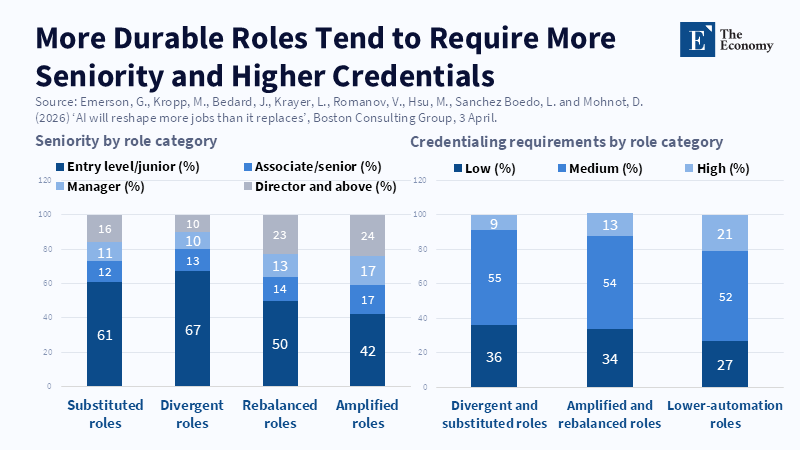

Current labor data already points in this direction. Postings for routine, repetitive roles have declined, whereas writing, data analysis, and design jobs are still on the rise. This does not suggest that AI does no harm. It suggests that the first harm may fall on the career pyramid before it shows up in headline unemployment. Junior workers are often trained through simple tasks. Remove those tasks with AI, and employers are left with their higher-paid, judgment-heavy roles but no "next wave" of graduate workers to hand skills down to. That is a silent AI labor substitution risk.

For firms, the answer is not simply to automate and eliminate every old task; that would be self-defeating. The solution is to protect learning, responsibility, and career flow. Firms should be clear about which tasks they automate, which tasks remain supervised, and which tasks they use to train judgment. A junior analyst should not spend the day copying files and spreadsheets around. But if first-draft memos, model checks, and client memos are all automated, young workers may never see how good work is built. The simple policy should be to automate low-value repetition but not to automate away the career ladder.

Reliability Is the Last Barrier to Mass AI Labor Replacement

Reliability marks the difference between AI providing support and AI providing labor. A system that is right most of the time may be extremely helpful, but any replacement system must be right often enough for the risk class of the task. In low-risk applications, the required margin of safety may be quite modest. In legal, financial, medical, engineering, or otherwise regulated work, the required margin is much larger, because errors are harder to spot and much more costly to correct.

This is not speculation; it reflects the best currently available forecasts. One U.S. labor-market forecast indicates that perhaps 50 to 55 percent of jobs could be altered by AI in the next two to three years, and perhaps 10 to 15 percent could be vulnerable to complete removal in the longer term. That is still huge. But it is not the same as standing on a street corner and announcing, "Half of all workers are about to be replaced." The reshaped job, rather than the eliminated job, is likely to be the main unit of change for the coming decade. In many cases, the job title will remain, but the workflow underneath it will change. The skill premium will shift from manually performing each step of a process to judging, directing, and validating machine output.

That also sheds light on why broad labor data has not yet shown signs of mass disruption. Firm adoption is growing, and many workers are already using generative AI on the job. Still, current economy-wide indicators provide limited evidence of a large employment disruption. This can be consistent with some sectors experiencing disruption. Labor markets, of course, adjust through hiring first. A firm could stop hiring junior workers, slow back-office expansion, or eliminate duplicated job responsibilities without resorting to mass layoffs any time soon. The early signal, then, could be thinner entry pipelines and higher output demands on workers who remain employed.

Policy needs to start there. Policymakers, employers, and training leaders have to stop treating AI literacy as "prompt-learning" and start offering more systematic courses on verification, domain logic, data boundaries, and the judgment needed to know when not to use a model at all. Employers need to redesign entry-level work for the benefit of the youngest workers, helping them learn alongside AI instead of competing with it from the start. Policymakers need to require better AI cost accounting in public bids and regulated markets, and to collect better labor data, because exposure scores alone will not show whether a task is being automated, augmented, or left to humans.

The most likely objection is that this view is too pessimistic about how quickly AI will advance. AI systems are evolving rapidly. Some companies have also already begun to lay off workers while spending billions of dollars on AI. That is true, but layoffs during an AI bubble do not necessarily indicate AI labor substitution on any scale. Firms lay off workers for all sorts of reasons: overstaffing, weak demand, high interest rates, tariffs, shareholder pressure, and so on. The real question is whether AI can do the work at a lower overall cost at steady quality. For many jobs, the answer is still no. For others, it is beginning to be yes. The answer will keep changing as inference costs decline, pricing changes, and reliability improves.

The second critique is that AI labor substitution is mostly hype. That one is far too easy. Technology need not fully displace entire jobs in order to undercut workers. It can shift wages, speed, hiring, and bargaining power; it can turn one individual into a boss of many AI agents; it can drag down average labor costs and increase the premium on rare judgment. It can exacerbate bad management if firms use technology to cut before they understand their own operations. The policy question is not whether AI replaces humans once and for all; it is whether institutions can shape the replacement that does occur.

Thus, the next three to four years are a window, not a pause. The labor-replacing aspects of AI will be limited in many domains until costs fall further, hardware availability loosens, prices become more realistic, and reliability reaches an acceptable level. But waiting until the onset of a crisis would be a mistake. The arrangements governing substitution should be put in place now. Firms need a plan that states which tasks they will automate, which tasks will be augmented, and which tasks will be kept human because failure would be too risky. Every school and training ecosystem needs to provide a path from novice to expert. Every policymaker needs to understand the real change in tasks, not just the change in jobs. The labor outcome will not be determined when AI gets clever, but when it gets cheap, dependable, and verifiable enough to clear the labor bar.

The views expressed in this article are those of the author(s) and do not necessarily reflect the official position of The Economy or its affiliates.

References

Allen, J.S. (2026) ‘Monitoring AI Adoption in the US Economy’, FEDS Notes, Board of Governors of the Federal Reserve System, 3 April.

Azpúrua, A.E. (2026) ‘Enhance or eliminate? How AI will likely change these jobs’, Harvard Business School Working Knowledge, 20 February.

Buchler, E.G. (2026) ‘Will artificial intelligence make human workers obsolete?’, Johns Hopkins Hub, 23 February.

Emerson, G., Kropp, M., Bedard, J., Krayer, L., Romanov, V., Hsu, M., Sanchez Boedo, L. and Mohnot, D. (2026) ‘AI will reshape more jobs than it replaces’, Boston Consulting Group, 3 April.

Gartner (2026) ‘Gartner predicts that by 2030, performing inference on an LLM with 1 trillion parameters will cost GenAI providers over 90% less than in 2025’, Gartner Newsroom, 25 March.

Hi-Tech.ua (2026) ‘DRAM shortage set to last through the end of the decade: Memory market covers only 60% of demand amid AI boom’, Hi-Tech.ua, 23 April.

McClain, C., Kennedy, B., Gottfried, J., Anderson, M. and Pasquini, G. (2025) ‘Public and expert predictions for AI’s next 20 years’, Pew Research Center, 3 April.

Rogelberg, S. (2026) ‘The cost of compute is far beyond the costs of the employees: Nvidia executive says right now AI is more expensive than paying human workers’, Fortune, 28 April.

Sourceability (2026) ‘AI costs will fall despite growing semiconductor supply chain risks’, Sourceability, 10 April.

Svanberg, M., Li, W., Fleming, M., Goehring, B.C. and Thompson, N. (2024) ‘Beyond AI exposure: Which tasks are cost-effective to automate with computer vision?’, MIT FutureTech Working Paper, January.

The Budget Lab at Yale (2026) ‘Tracking the impact of AI on the labor market’, Yale University, 16 April.