Reassessing AI Strategy: Adoption, Sovereignty, and the EU–US Perspective


1 The Economy Research, 71 Lower Baggot Street, Dublin 2, Co. Dublin, D02 P593, Ireland

2 Swiss Institute of Artificial Intelligence, Chaltenbodenstrasse 26, 8834 Schindellegi, Schwyz, Switzerland

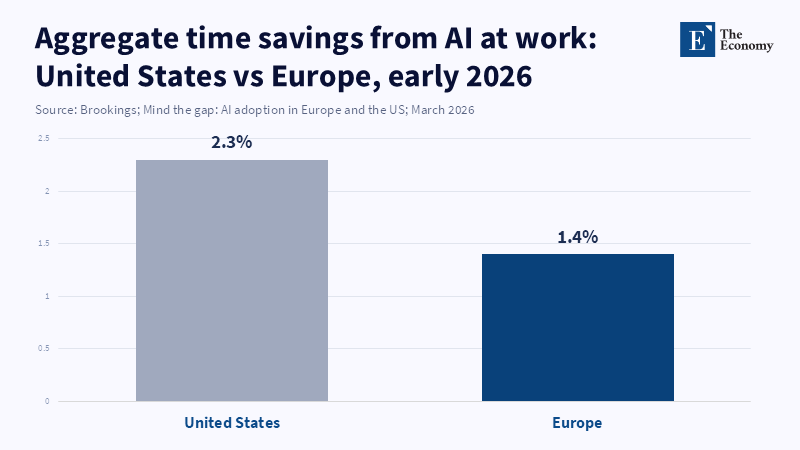

This report argues that Europe’s key policy question is not whether to emulate the US and China by building self-contained, sovereign frontier AI systems, but how to enable the swift, widespread and institutionally robust adoption of AI across firms, labor markets and public systems. While the US still leads Europe on worker-level use of generative AI, new evidence indicates that the gap is smaller and more varied than sovereignty-centric arguments suggest: EU firm adoption has grown quickly, and in some frontier European countries penetration levels rival those of the US. At the same time, firm-level evidence from Europe shows that AI adoption increases labor productivity, with the most pronounced effects among companies able to combine AI with the necessary software, data infrastructure and human skills, and with no evident negative employment impact in the near term. The report therefore argues that Europe's challenge is fundamentally one of diffusion, absorption and adaptation rather than ownership of frontier technology. It makes this case by contrasting US and EU policies and approaches, questioning the need for the concept of sovereign AI and showing why the economics of frontier infrastructure inherently limit the ability of follower nations to replicate it. The report concludes that Europe (and almost all other followers) could gain more from investing in skills, governance and digital and organizational capabilities than from a costly and ineffectual replication of the entire AI stack.

1. Introduction - From Sovereignty to Diffusion: Reframing Europe’s AI Challenge

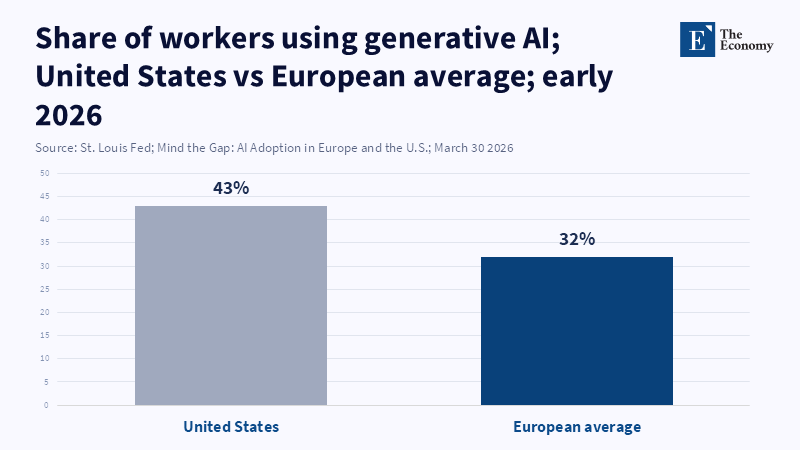

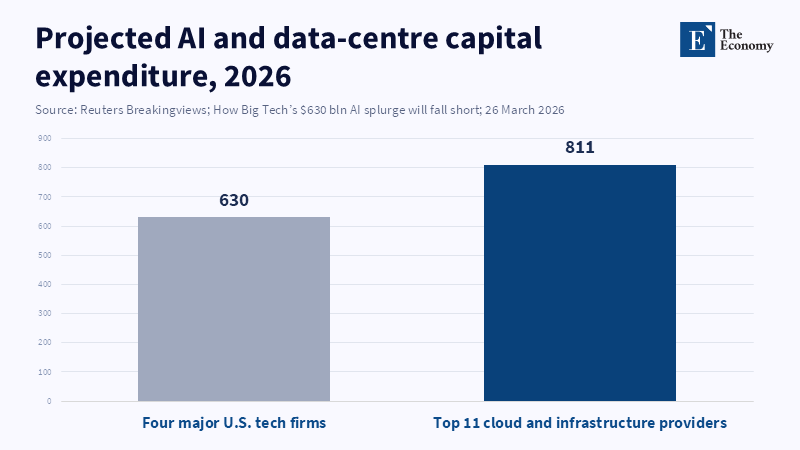

Until recently, the conventional wisdom was that the US would deploy new technologies faster than Europe and that generative AI would be no different. According to a report from Axios, European policymakers are emphasizing the importance of clear regulatory "guardrails" through the EU's AI Act to foster trust and stability in the region's technology sector, aiming to provide a unified approach for all member states in contrast to the more state-specific regulations of the United States.[1] Recent data shows that the gap in AI deployment between Europe and the US remains significant. According to a 2026 article from the Federal Reserve Bank of St. Louis, 43% of US workers reported using generative AI in their jobs, while the figure for surveyed European countries ranged from 26% to 36%.[2] This suggests that the question of AI deployment is still closely tied to differences in adoption rates across regions. The central argument of this report is that Europe should shift from the costly race for technological sovereignty to the pursuit of widespread adoption. It shows that efforts centered on regulatory rules and national models fail to acknowledge the second-order consequences for learning, work and inequality that follow from increased AI deployment, and that an "adoption-centric" approach may be more congruent with Europe's strengths in skills and governance. It is critical to make this shift now: between 2023 and 2026, generative AI has been diffusing globally at breakneck speed, US and Chinese investors are already channeling trillions into AI infrastructure and, in 2026, major US tech firms are projected to invest nearly 2.2% of US GDP in data centers and hardware.[3] Facing this reality, Europe has to ask: should it join the arms race, or achieve AI success through alternative strategies?

2. The Difference between the US and the EU

To understand why the conventional EU-versus-US framing no longer works, it helps to review what has been different: the US and Europe generally differ in how they weigh market intervention against precaution. The US has been, and still is, characterized by a lighter-touch, market-based approach. Rather than preemptively forbidding potential uses of technology, US regulators tend to protect innovation through IP rules and encourage the market to develop new products, even at some risk. Quick funding for cutting-edge research has indeed followed, including through entities like DARPA, NSF grants and billions in private capital investment for new AI initiatives. In Europe, more emphasis has been put on ethics and trust: the 2020 White Paper on AI and the 2023 AI Act envision AI as a technology that will have to comply with human-centric values[4] and impose early and strict risk-based regulations (for example, classifying high-risk applications that require more stringent measures). Europe has also focused on public infrastructure: under the Digital Europe program, it is already building a number of "AI Factories" (shared high-performance computing facilities for universities and SMEs) and is committing billions to the EuroHPC supercomputing project.[5] Thus, as reported in one analysis, the two blocs "have very different models of AI regulation",[6] with the US trusting innovation powered by the private sector and Europe relying on a public and more conservative approach to AI development. These models predictably led to a consensus that the US would surpass Europe in the implementation of AI, and the newest surveys indeed report higher US adoption by firms and employees alike.

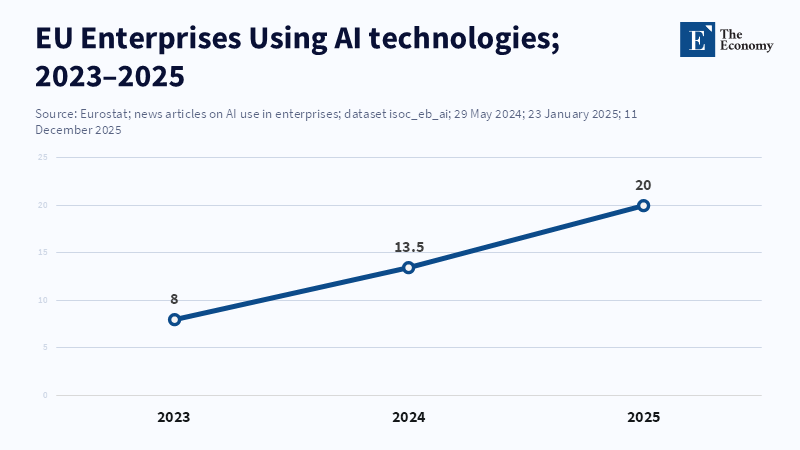

According to the Federal Reserve Bank of St. Louis, as of August 2024, 28% of U.S. workers reported using generative AI at work.[7] In comparison, Eurostat and private surveys indicate that around 20 percent of firms in Europe are using at least one AI tool, with slightly higher adoption rates in Northern European countries.[8]

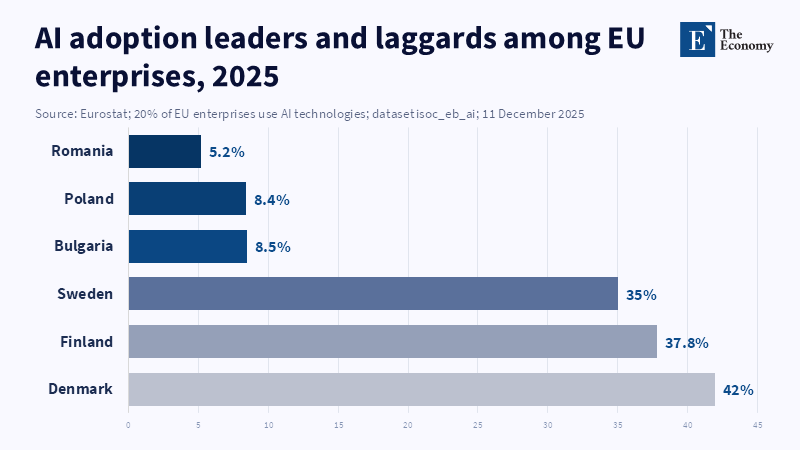

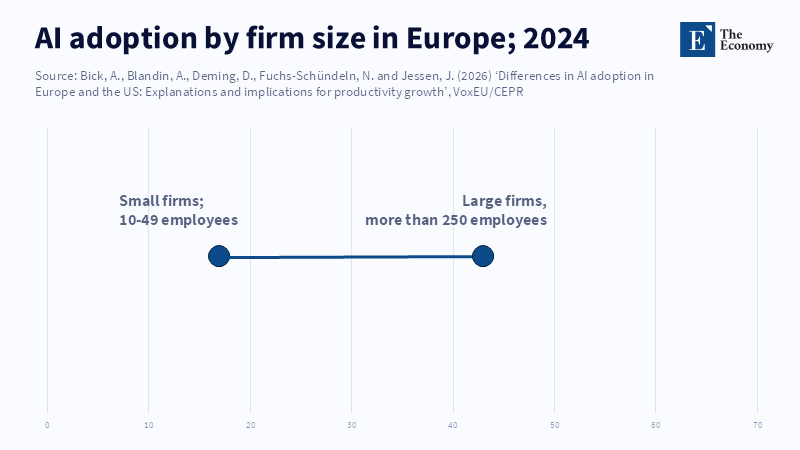

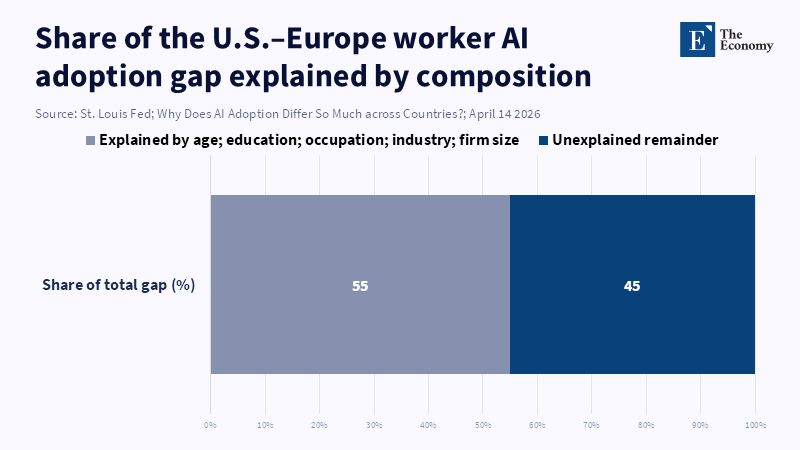

On closer examination, however, adoption in Europe appears to be growing fast enough to catch up quickly, and its key feature is heterogeneity. Rich EU countries and regions seem comparable to the US on many dimensions; according to CEPR research, for example, "on average AI adoption levels in the EU and the U.S are similar once you allow for cross-country heterogeneity". In Sweden and the Netherlands, around 36% of firms used AI tools in 2024 on average, roughly the same magnitude as top performers in the US, whereas poorer EU member countries like Romania or Bulgaria see only around 28%. Worker surveys likewise show that the UK (36%) and Germany (just above 30%) are close to US adoption rates, whereas Italy sits at around 26%. It has been argued that much of the observed raw gap is compositional, reflecting Europe's industrial structure and business practices rather than some fundamental delay. According to a report by Alexander Bick and colleagues, differences in worker demographics and firm composition explain a significant part of the gap in AI adoption between the US and Europe. There is no structural barrier that would prevent the EU from reaching US levels.[9]
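The compositional argument above can be made concrete with a simple Kitagawa-style decomposition, which splits a gap in average adoption into a part due to differing group shares and a part due to differing within-group adoption rates. The sketch below is purely illustrative: the firm-size groups, shares and rates are invented for the example and are not taken from Bick and colleagues or from any survey cited in this report, and the function name `decompose_gap` is hypothetical.

```python
# Illustrative only: all shares and adoption rates below are invented.

def decompose_gap(groups):
    """Split an adoption gap (region A minus region B) into two parts.

    groups maps a group name to (share_a, rate_a, share_b, rate_b), where
    shares sum to 1 within each region and rates are adoption rates.
    Returns (total_gap, composition_part, within_group_part).
    """
    total = composition = within = 0.0
    for share_a, rate_a, share_b, rate_b in groups.values():
        total += share_a * rate_a - share_b * rate_b
        # Effect of different group shares, valued at the average rate.
        composition += (share_a - share_b) * (rate_a + rate_b) / 2
        # Effect of different adoption rates, weighted by the average share.
        within += (rate_a - rate_b) * (share_a + share_b) / 2
    return total, composition, within


# Hypothetical economies: A has more large firms, rates differ only slightly.
hypothetical_groups = {
    "large firms": (0.50, 0.50, 0.30, 0.48),
    "small firms": (0.50, 0.30, 0.70, 0.28),
}

total, composition, within = decompose_gap(hypothetical_groups)
print(f"Total gap:         {total * 100:.1f} percentage points")
print(f"Due to composition: {composition * 100:.1f} percentage points")
print(f"Due to group rates: {within * 100:.1f} percentage points")
```

With these invented inputs, two thirds of the 6-point gap comes from the different mix of firms rather than from lower adoption within comparable firms, which is the pattern the compositional argument describes.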

The takeaway is that the initial gap was determined by how each side developed the technology: bottom-up, flexible innovation in America versus publicly coordinated, regulated development in Europe. However, the factors that initially constrained European application could in fact contribute to adoption later. While stronger worker rights or data protection can hinder initial experiments, they can also improve public trust and limit further exacerbation of inequality. Signs of convergence are already present, with AI use growing faster in European countries across their respective sectors. Thus, while American tech companies were an early driver, the gap was never one that Europeans could not close. One conclusion, then, is that assumptions about the path to AI dominance (e.g., 'Europe must be able to spend as much as America') may need to be challenged. The reality is more complex: the EU and its Member States have incentives to adopt AI similar to those of the US, despite their different institutions.

This raises the policy question: if the philosophies differ, how has policy actually been conducted, and what should have been done (or not done) given these developments? The following sections discuss the actual evolution of EU policy, explain why so many are now skeptical of the sovereign-AI perspective and illustrate why moving more quickly, at least with regard to education and the labor market, offers the more pragmatic and beneficial way forward.

3. The EU’s Strategy So Far

From the outset, the European AI strategy was ambitious but also hesitant. From the earliest days, the European Commission stated its intention to have the EU become "a world-class hub for AI" while insisting that AI remain "human-centric".[10] The Commission followed this up with public investment in infrastructure and with regulation. The EU invested "approximately 10-11 billion" in the digital transition through its 2021-2027 Multiannual Financial Framework (MFF) and used the framework to build dozens of new "AI Factories", setting up supercomputing capabilities across the continent. These public data centers were intended to equip European companies and researchers with the processing power needed to build and run large models. To achieve human-centricity, the EU adopted the AI Act, which establishes rules and processes for risk categorization and compliance, with additional obligations for high-risk applications of AI (such as detailed documentation requirements, testing and human review). The AI Act, which was agreed in 2024 and is entering into force, demonstrates Europe's consistent preference for ethical and responsible innovation, with its restrictions on biased scoring and prohibitions on certain types of psychological manipulation.[11]

Official European policy rests on three intertwined aims: sovereignty, trust and opportunity. These translate into sovereign control of AI through the capacity to sustain an autonomous EU tech base (AI Factories), trust established through the AI Act, and opportunity arising from both through new start-ups and industry. The debate also included scholars who supported an EU-level AI model. For example, think tanks commented on pooling EU resources into a shared AI fund and model[12] and on the concept of AI as a digital public good. The motivation was partly security, partly ensuring that EU data remained in Europe and partly capturing value for Europe by hosting the next ChatGPT. Underlying all of this was the assumption that Europe was failing to “bridge the innovation gap” with the United States and needed to do so to lift its sluggish productivity growth.

Nevertheless, the EU's strategy had a few uncoordinated elements. As some authors noted, while Europe was paying attention to the hardware of supercomputers, it was neglecting the markets and skills that an AI enterprise would require. One such critique is the Bruegel Policy Brief entitled "Catch-up with the US or prosper below the tech frontier?". According to the European Commission, recent amendments to the EuroHPC regulation aim to strengthen Europe's capacity for AI and quantum technologies by expanding the mandate of the EuroHPC Joint Undertaking to support advanced computing infrastructures, including AI Gigafactories. In practical terms, the Bruegel brief called for Europe to focus instead on "below-frontier" AI[13], meaning domain-specific AI technologies that do not require large investments (e.g., AI for improving factory operations or for healthcare diagnostics based on existing AI models), and urged the EU to speed up deployment of AI by clarifying regulation in the AI Act.

This line of criticism reflected growing discomfort with a narrative built around the single-minded pursuit of sovereignty. Indeed, by 2024 the notion that Europe would create a trillion-parameter model on its own had already become contested within the EU. Policymakers worried that the money might be better spent elsewhere: on AI literacy among the workforce, the digitization of small businesses or changes to educational systems. The think tank Economy.ac argued that the EU did not pursue a 'coordinated closed' policy of the Fortress Europe kind, but rather followed a 'coordinated open' approach similar to the Swiss one, in which Swiss supercomputers were used to develop an open-weight model (Apertus) with public data, setting a new example. By the end of 2025, EU actions were more balanced: the first batch of AI Factories had to serve universities and SMEs jointly, EU funding streams looked into 'adapters' and the fine-tuning of open models for local languages (e.g. Latam-GPT for Spanish and Portuguese) and into support for private initiatives through the InvestEU fund, and the AI Act included a regulatory sandbox that would allow safe testing of AI models.[14]

In sum, Europe's first AI strategy married lofty dreams of sovereignty with practical steps to enhance capability. It treated AI as a massive public undertaking, with extensive spending on research and development and on infrastructure, and imposed strict governance on it. This strategy made sense up to a point: it acknowledged that Europe was struggling with raw research and scale and sought to make up for it. But it risked being misguided: by focusing on building capability, it could neglect precisely the human and organizational elements that make technology work. The following sections look at other options. What would Europe's first AI strategy have looked like if the EU could have done it differently? And what makes the 'sovereign' road the wrong one in retrospect?

4. What the EU Could Have Done Differently

In theory, Europe could take one of two diverging paths. The first is to place heavy emphasis on developing core models and the advanced frontier of AI in the vein of the US or China, harnessing an entire ecosystem of major tech players and research labs. The second, and probably much more feasible for the vast majority of states, is to concentrate on a few particular areas of expertise and achieve quick adoption. Which path would the EU adopt? For the moment, the EU's rhetoric hints at the first path, but Europe has no actual equivalent to the tech clusters found in Silicon Valley or Shenzhen. It is useful to briefly examine two other nations that have tried to become leading players in AI.

Japan is one of them. Facing an impending demographic crisis and drawing on its rich history in robotics, the Japanese government is going all out on what it calls "physical AI", essentially AI integrated with robotics and manufacturing. Last April, a consortium of Japan’s giants, including SoftBank, Honda, Sony and many others, declared its intention to build a 1 trillion-parameter Japanese foundation model. Underpinned by government subsidies estimated at around US$6.3 billion over five years, the initiative's stated aim is to develop and deploy an AI platform for Japanese industries.[15] On paper, this makes sense: Japan's strength is its hardware and some core R&D. Analysts, however, are quick to point out that AI-driven robotics today is not just about hardware; the real battlefront is the full-stack integration of software, data and machine learning, which Japan's historically protected house system has failed to nurture. According to The Washington Post, Japanese companies are increasingly forming partnerships, such as the recent collaboration between Fujitsu and Nvidia, to advance smart robots and AI innovations, reflecting a shift in the industry as global competitors introduce sophisticated AI technology.[16] In a nutshell, even Japan's high-profile sovereignty push is a tremendous wager that one national model, combined with domestic manufacturing prowess, will allow it to maintain its competitive edge.

Korea is another, with the “AI for All” policy promised under President Lee Jae-myung, which aims to make Seoul one of the world's top three AI ecosystems by 2025. Investments in domestic data centers are huge, and there is talk of building the first Korean-language large model tailored to local culture, justified on grounds of both demographics (an aging population must automate) and security (advanced cyber-defenses). The initiative is substantial, even prompting the elevation of an AI czar within the presidential staff and greater US-Korean defense-tech collaboration. Korea's position nevertheless demonstrates the diplomatic tightrope that the pursuit of sovereignty entails amid global power struggles: it needs US hardware but still wants Chinese investment. This indicates the sheer size of the game and the way Lee's program makes Korea an unfortunate participant on the front line of the US-China contest, at a diplomatic cost.

What is the lesson for the EU? First, only a few countries (the US, China, perhaps Japan and Korea) have the resources, scope and context to attempt national AI stacks, and it is uncertain whether even they will succeed. For the vast majority of EU countries (Germany, France or the smaller states), the only way forward is the second path: strive for both high and fair usage of AI technologies, using the existing global stack where possible. This could mean that some countries invest in a particular layer of the AI stack in which they have a competitive advantage (hardware in the case of Germany, or AI-based business services for small but service-oriented Luxembourg). But replicating an entire AI pipeline (hardware design, model training and mass deployment) would be too costly and redundant for the EU.

Therefore, a smarter strategy for Europe would have been to focus on selected sectors instead of taking an "all or nothing" approach. In fact, part of EU research policy is moving in this direction, as Horizon Europe projects increasingly subsidize AI tools for health care, climate modeling and data sovereignty rather than pushing Europe to come up with the next GPT. States could have joined forces based on their industrial competencies: Finland could have focused more on AI applications for forestry and gaming (given its high level of human capital and English use), and Italy might have invested in AI applications for cultural heritage and tourism. In this way, niches can be carved out and resources pooled effectively. It is important to note, however, that this does not imply that every country in the EU should attempt to build its own LLM or supercomputer.

In conclusion, Europe (or Asia) in 2026 should not replicate the US or Chinese models of investment in the AI model race. The few advanced economies that do try are throwing money away and hitting constraints. Most other advanced countries might do better to ride the wave: educating people and investing in software and networks so that anyone can access any available model and benefit from it, instead of developing it themselves. In other words, the EU should ask itself "how do we benefit from AI quickly throughout our society?" rather than "how do we win the AI model race?".

5. Why Sovereign AI No Longer Makes Sense

It is becoming increasingly apparent, even in Europe, that a national "build it all" stance does not make much sense. Just look at the numbers. Today's frontier AI systems are prohibitively expensive in compute terms. Training a single state-of-the-art LLM might require tens of millions of dollars and vast amounts of electricity. For example, an independent analysis noted that even for big economies like Germany or France, data center capacity would have to increase by at least a factor of 10 just to train models like GPT-4. In the meantime, the US and China are apparently engaged in a "digital infrastructure arms race": US cloud providers and chip makers have declared that in 2026 they will spend about $630 billion on data centers and chips for AI alone, about 2.2% of US GDP.[17]
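As a rough consistency check on the two spending figures quoted above ($630 billion and 2.2% of GDP), the size of the economy they jointly imply can be backed out with a single division. The variable names are illustrative, and the result is only what the two quoted numbers imply together, not an official GDP statistic.

```python
# Figures as quoted in the text; everything derived from them is approximate.
ai_capex_usd_bn = 630.0   # planned 2026 AI data center and chip spending
share_of_gdp = 0.022      # the same spending expressed as a share of GDP

# If spending = share * GDP, then GDP = spending / share.
implied_gdp_usd_bn = ai_capex_usd_bn / share_of_gdp

print(f"Implied GDP: about ${implied_gdp_usd_bn / 1000:.1f} trillion")
```

The two figures are mutually consistent only if the underlying economy is on the order of $28 to $29 trillion, which gives a sense of the scale a follower economy would have to match.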

Yet even with these huge numbers, constraints bite. As Reuters pointed out, the real bottlenecks for the US are now less semiconductors and more permits and power. Connecting a data center to the electrical grid might take over ten years in some places; as a result, companies are being forced either to look for obscure locations or to install on-site power generators.[18] According to a report from Power magazine, long wait times for grid connections are leading some new US data centers to opt for on-site natural gas generators to ensure reliable power for operations like artificial intelligence rather than wait for traditional utility connections. This shift reflects persistent physical and regulatory obstacles that can blunt the effect of additional funding for national projects as their scale grows.

China is a related but distinct case. Beijing enjoys abundant electricity and manufacturing scale: China's installed generating capacity (2,907 GW) is over twice that of the US (1,334 GW), and it is already investing $590bn through to 2030 to expand its grid (not only for AI, though data centers are one of the main drivers), which essentially allows it to out-compete its Western rivals on expenditure and scale of infrastructure.[19] This places Europe in a difficult position: even if it wanted to match the US and China on sheer scale of resources, doing so would require either breaking the European budget or neglecting more fundamental, long-term priorities.

As many economists argue, such duplication is itself a strategic blunder. Broad criticism has been aimed at the "autonomous node" approach, which treats each country as a self-contained unit for the purpose of AI system design and so produces a mix of duplication and fragmentation. If states focus only on observable technology (national chips, national models), they 'fund visible computer projects' at the expense of essential requirements like education and training. Ironically, it is precisely the "sovereign" rhetoric that may skew incentives in the wrong direction, by leading the media and politicians in almost every country to demand large technology projects and thereby starving the subtler policy changes that can be far more effective. The EU and Asian countries, for example, are already introducing protectionist digital policies (data localization requirements, domestic sourcing laws) under the banner of digital sovereignty, which risk producing not sovereignty but less innovative economies.

Simply put, AI nationalism can be a pitfall. The Economy report on strategy is stark: reproducing entire stacks "is expensive and the expensive push for sovereign compute usually means foregoing more practical avenues of sovereignty-diversified purchasing, secure supply chains and focus on local capabilities".[20] Sovereignty should therefore mean choice and resilience, not building every chip and every model from scratch. For any country outside the US and China, the furthest it can go in model-building is to 'blend', combining public money with international cooperation. This is essentially the route Europe is taking: its AI Factories program is basically a grant for shared infrastructure, and European companies such as Mistral and Aleph Alpha are pushing open models. These developments implicitly recognize that Europe will not be able to out-train OpenAI or Google, but that it can help create an ecosystem for local model adaptation.

The evidence that the build-it-all approach does not work is plain to see. Tech giants are feeling the strain even with backing at the national level in the US. Microsoft's cloud gross margins dropped from 72% to 69%, largely because of the ramp-up of AI infrastructure (even as revenue kept growing). Amazon plans to spend $200 billion a year on technology (largely data centers for AI infrastructure), yet its stock dipped on the realization that these expenses are not translating into profit quickly.[21] One strategist warned that the race to build the biggest AI is essentially inflating a giant AI bubble. In short, if billionaires and Wall Street struggle with such a massive build-out, there is no reason for smaller economies to attempt it.

In contrast, an adoption-based approach (using AI flexibly without owning it) seems to be the practical solution. Companies in Europe have started to apply generative AI to daily tasks such as customer support, programming assistance and office management. Unlike machines and equipment, such AI is available at low cost via cloud services or open-source software. The main concern is not whether the technology is available, but whether people know how to use it and are allowed to do so. EU governments need to understand this and recognize that, after a decade of waiting for "secure" AI, the restrictions they are creating only slow the process down.

In summary, the idea of individual "sovereign AI models" appears increasingly untenable. The financial and material costs are vast, the strategic value questionable and the opportunity cost great. Rather than every nation pursuing a replica of GPT or Claude, a more sensible approach is to develop shared models and devote national resources to the utilization of AI in society.[5] In the new landscape of AI, no nation, not even the most powerful, will be the author of every element in the stack. Collaboration and specialization, not separate self-sufficiency, will lead the way. Policy in Europe should reflect this reality.

6. Fast Adoption as the New Strategy

If sovereign AI is off the table, then what? The only plausible alternative strategy follows: Europe must push for wider, responsible AI use. Northern European countries are already seeing their investment pay off. Oxford Economics shows that the share of European firms using AI rose from ~8 percent to ~20 percent in the space of two years.[22] Crucially, this growth has been strongest in regions where a foundation for AI use had already been built: areas with high digital skills, concentrated ICT industries and excellent infrastructure can yield "AI premiums." In contrast, Southern and Eastern Europe have low overall adoption rates, reflecting weaker infrastructure and skill readiness. Here too, the north-south gap is one of readiness rather than sovereignty, which suggests that resources should be directed at the former.
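To put the pace of diffusion in perspective, the quoted rise from roughly 8 percent to roughly 20 percent of firms over two years can be restated as a percentage-point change, a multiple and an implied annual growth rate. This is a minimal sketch using only the two approximate figures from the text; the variable names are illustrative and the constant-growth assumption is a simplification.

```python
# Approximate adoption shares quoted in the text.
share_start = 0.08   # ~8% of European firms using AI
share_end = 0.20     # ~20% two years later
years = 2

# Absolute change in percentage points.
point_change = (share_end - share_start) * 100

# Relative change: how many times larger the share became.
multiple = share_end / share_start

# Implied compound annual growth rate, assuming steady growth.
annual_growth = multiple ** (1 / years) - 1

print(f"Change: {point_change:.0f} percentage points")
print(f"Multiple: {multiple:.1f}x")
print(f"Implied annual growth: {annual_growth:.0%}")
```

A 12-point rise may look modest, but it corresponds to the adopting share growing by well over half each year, which is why the gap with the US can plausibly close quickly.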

What does this look like concretely? It means investing in human capital and developing institutions that allow AI to proliferate and gain leverage. It is revealing that, according to a recent study, the most important determinant of rapid AI adoption is nothing more than basic computer literacy and a good command of the English language. The Netherlands, Finland and Denmark have reported that more than 80% of their adult populations are highly digitally literate, and adopting the new models (which were written and mainly discussed in English) proved easy there.[23] Within weeks, workers were using systems like ChatGPT in schools and workplaces and teaching each other how to leverage them. In other words, the models arrived ready-made and society had an education system ready to absorb them. This implies that no country is inherently unable to become a rapid adopter, as long as it focuses on the foundational elements of training and language.

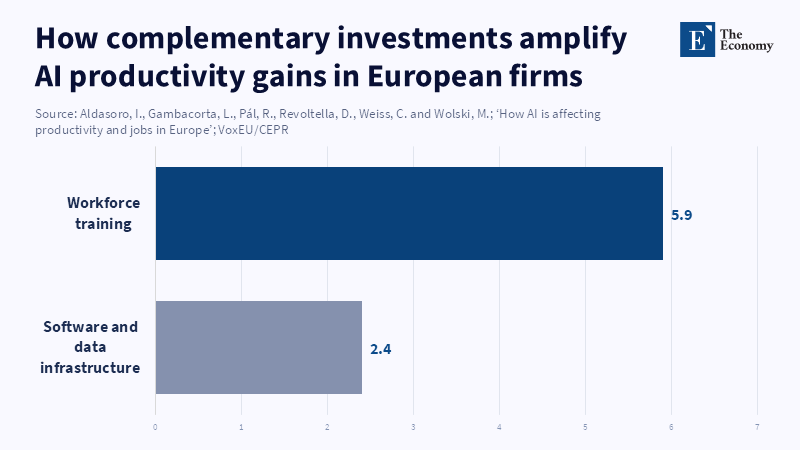

The productivity effect can already be observed. According to a European Investment Bank working paper, companies that adopt AI experience a 4% increase in labor productivity, with this boost coming mainly from capital deepening rather than job reductions. Crucially, no signs of mass unemployment have yet appeared in any of the surveys, in any country. The main effect, at this point, is that of a capital-deepening, labor-augmenting technology that allows workers to do their jobs more quickly and imaginatively. Indeed, European firms that use AI pay higher-than-average wages per worker, presumably because the technology enables them to generate higher-value products.[25] That is not to say that more dramatic effects will not occur; the future mix of jobs may very well change (AI does pose some risk of eliminating mid-skilled jobs). But the initial evidence points to productivity gains with no noticeable decline in overall employment.

These results support a change in strategy: Europe and the other followers could gain more from using AI widely than from building models of their own. How can this be applied in policy and institutional practice?

For teachers, the response should be one of inclusion, not resistance. The curriculum should focus on the skills that computers and machines struggle with (analysis, problem-solving, creativity) and train students to use AI as an assistant. This could involve adding algorithmic thinking to school curricula, teaching data literacy as part of the core curriculum and providing teachers with training on how to integrate AI tools into their teaching (nearly one third of teachers already report using AI in their profession, according to the OECD).[26] Tests and examinations should be reconsidered, as essays and computer code are easily generated by AI. Rote knowledge testing must give way to assessment that requires novel analysis or teamwork. Just as calculators and other tools came to be allowed in mathematics, AI should not be banned, but student work with these tools should involve reflecting on the AI-generated output (such as "use ChatGPT to write a short draft of an essay on topic X, then assess its validity and strengths"). Government provision of professional development should not be overlooked, since research has shown that many teachers feel overwhelmed by AI and could be supported with comprehensive training programs and AI-fluent pedagogical materials.[27]

For administrators and educational institutions, the same logic applies. Universities and colleges must rewrite academic integrity policies to accommodate AI. Rather than banning all AI-produced content, institutions should create honor codes for the use of AI (for example, students must acknowledge and cite their use of AI, or must produce successive drafts to document their thought process). Universities can model appropriate AI usage: institutions should have at least some computer labs with secure AI available and encourage students to use AI in their projects. Professional schools (e.g., law, medicine) need to integrate AI literacy so that the professionals of the future understand the limitations of AI advice. Ultimately, administrators will need to stop viewing AI as an institutional threat and instead treat it as an addition to the curriculum to be governed and supported.

The labor market needs to cope with evolving skill requirements. Adoption is already uneven (faster in large corporations than in SMEs, and faster in urban than in rural areas), and policymakers could compensate for this disparity by subsidizing skill acquisition among workers in late-adopting segments (such as SME employees and the rural labor force). This would be framed in terms of matching the demand for and supply of skills in labor markets. Grants could be offered to SMEs to support their adoption of AI technologies, tied to a plan for workforce training. Mid-career workers could also acquire new skills through dedicated adult education courses on using AI technologies (as in the experience of Denmark and Canada). For other workers, vocational schools and universities need to upgrade their curricula in fields like computer science, engineering and finance with practical AI implementation components and open up to other disciplines like data science and the humanities (because all fields of study will inevitably be affected).

For administrators in government and education ministries, there is an immediate task: building governance frameworks for AI. These should cover data (preserving its privacy and ensuring its quality when the public sector uses it to train AI) and security (protecting AI systems). At the same time, government institutions should be model adopters: using low-risk AI, such as scheduling assistants, automatic translators or tools that forecast needs for planning, could kick-start a snowball effect of experience. Of course, even low-risk AI will need safeguards: any AI in a school or a court will need to be transparent and explainable and will need human beings checking it. This speaks to the long-term dilemma: without credible checks and balances, AI adoption may fail. Governments should therefore invest in public-interest auditing and certification of AI, rather than in bans. EU approaches to standards (the AI Act) may contribute, but the checks should focus on enabling fair use rather than limiting it.

More generally, governments should shift funding away from building big-stack infrastructure and toward the conditions under which that infrastructure can be widely deployed. This means developing complementary infrastructure such as affordable, ubiquitous high-speed broadband and up-to-date data centers (which may well be cloud-based, but on terms that allow SMEs to rent capacity affordably), increasing the investment funds that finance AI services, infrastructure and tools, and offering tax incentives, such as R&D tax credits, that reward deployment over research. Regulation should enable interoperability and cross-border data exchange; for example, the EU should push for the harmonization of data regulation so that a French AI startup can use Estonian data.

The long-term human capital strategy involves the pervasive implementation of an "AI layer" throughout education and labor policy. This is conceptually equivalent to how electricity or the internet are conceived as an underlying input or utility that touches all parts of the economy. Governments should analyze the likely impact of AI on labor market demands and adjust educational curricula accordingly. If, for instance, an analysis indicates that generative AI will significantly affect the demand for administrative tasks, schools may then want to shift focus away from these skills and emphasize management and supervision skills instead. Retraining programs for the workers who lose jobs should include specific AI training modules. The labor market information and forecasting system should be systematically reviewed to track trends in the development and application of AI. Social safety nets need to accommodate any transitional hardships workers experience (for example, through support for career transition for those most impacted).

Critically, these recommendations are grounded in quantitative evidence, not just suggestions or hand-waving. According to a study from the European Investment Bank, AI adoption boosts labor productivity by about 4 percent in European firms, primarily due to increased capital investment rather than reductions in employment.[28] This all points to a policy stance focused on creating conditions whereby European individuals and firms can exploit AI, rather than trying to control the AI industry itself, with training and investment enabling them to capitalize on these advances.

To summarize the "fast adoption" strategy: rather than treating AI as a "sector" to gain control of, it views AI as an "all-purpose technology" whose potential is released by a focus on skills, regulation and coordination. Policies are not one-off or sectoral, but systemic: from retraining programs and updated curricula to enhancing the digital infrastructure and measuring social effects. It is these measures, implemented consistently through the phase of rapid diffusion seen between 2023 and 2025, that can deliver inclusive long-term growth.

7. Conclusion - Europe’s AI Future Lies in Adoption, Not Replication

The path Europe takes to catching up on AI can be different from what the U.S. and China are doing. In fact, the idea that Europe needs its own ChatGPT should be challenged. Recent data offer a far more encouraging and feasible picture: Europe is already catching up in AI use through human capital and organization. Meanwhile, the "AI infrastructure race" of the superpowers has become too expensive and limiting to be worth the effort for followers. From 2023 to 2026, as multi-billion-dollar funds flooded overseas, Europe's quickest gains were achieved by letting AI enter and then adjusting accordingly, not by trying to build its own technology stack.

The fundamental insight, then, is a shift in emphasis. Instead of asking "How do we reproduce frontier AI?", our policymakers need to be asking "How do we secure AI's broad, deep and long-term societal good?" This translates to: educating our children in thinking and digital competencies, so that AI can enhance rather than automate their learning; reskilling and caring for our workforce, so that AI can augment rather than substitute their work; developing exams and qualifications that evaluate what AI can't easily replicate; and establishing rules and safeguards that prevent harm to societal values without inhibiting technological progress. In short, the policy priority is absorptive capacity rather than computational capacity.

We have described how the EU approach may develop in that direction. The economic data, from surveys on business investment (e.g., OECD, EU-wide surveys, or individual company surveys) to firm-level case studies, indicate that investment in training, data and an ecosystem produces higher productivity gains than the simple purchase of hardware.[29] Therefore, we urge teachers, managers and policymakers to embrace this approach. Teachers should ensure the inclusion of AI in their courses and practice, with special attention to competencies such as problem solving and AI literacy; managers should review their integrity regulations and facilitate access to tools; policymakers must devote funds to digital infrastructure and training programs, and the new EU AI Act should be adapted to facilitate new application development.

The "zero-sum game" notion of AI is already over; we need to move to the stage of a common platform for human capacity. If Europe pursues rapid, inclusive adoption, anchored in robust social and institutional scaffolding, it could leverage generative AI as a driver of growth and innovation. This strategy, a careful calculation rather than a simple race to acquire the technology, locates the technology's worth in its effective integration with education, the economy and governance. If the EU pursues this approach, it can address both the immediate challenge of productivity gaps and long-term social robustness. The only other option is a continent that will be even more hindered by its failure to adopt a landmark technology. The conclusion is unequivocal: Europe's prosperity depends on the wise and widespread deployment of AI.

References

[1] Axios (2026) ‘Inside Europe’s AI playbook: Guardrails first, flexibility later’, Axios, 10 April.

[2] Bick, A., Blandin, A., Deming, D., Fuchs-Schündeln, N. and Jessen, J. (2026) ‘Mind the Gap: AI Adoption in Europe and the U.S.’, On the Economy, Federal Reserve Bank of St. Louis, 30 March.

[3, 17, 18] Reuters Breakingviews (2026) ‘How Big Tech’s $630 bln AI splurge will fall short’, Reuters, 26 March.

[4, 10] European Commission (n.d.) ‘European approach to artificial intelligence’, Shaping Europe’s Digital Future.

[5, 14] EuroHPC Joint Undertaking (2024) ‘Selection of the First Seven AI Factories to Drive Europe’s Leadership in AI’, 10 December.

[6] Dimitriadis, D. (2025) ‘US vs EU AI Plans – A Comparative Analysis of the US and European Approaches’, DCN Global, 31 July.

[7] Bick, A., Blandin, A. and Deming, D.J. (2024) The Rapid Adoption of Generative AI. NBER Working Paper No. 32966.

[8] Eurostat (2025) ‘20% of EU enterprises use AI technologies’, 11 December.

[9] Bick, A., Blandin, A., Deming, D., Fuchs-Schündeln, N. and Jessen, J. (2026) ‘Differences in AI adoption in Europe and the US: Explanations and implications for productivity growth’, VoxEU/CEPR, 9 April.

[11] European Commission (2024) ‘AI Act enters into force’, 1 August.

[12] Tournesac, A., Hjartar, K., Krawina, M., Hillenbrand, P. and Olanrewaju, T. (2025) ‘Accelerating Europe’s AI adoption: The role of sovereign AI’, McKinsey, 19 December.

[13, 20, 29] Martens, B. (2024) ‘Catch-up with the US or prosper below the tech frontier? An EU artificial intelligence strategy’, Policy Brief 25/2024, Bruegel, 21 October.

[14] European Commission (2025) ‘Commission launches AI Act Service Desk and Single Information Platform to support AI Act implementation’, 8 October.

[15] Kim, J.-w. (2026) ‘SoftBank, NEC, Honda, Sony Form “Japan AI Alliance”’, The Seoul Economic Daily, 12 April.

[16] Kageyama, Y. (2025) ‘Nvidia and Fujitsu agree to work together on AI robots and other technology’, The Washington Post, 3 October.

[19] State Council of the People’s Republic of China / Xinhua (2023) ‘China’s renewable energy capacity overtakes thermal power’, 22 December; U.S. Energy Information Administration (2025) ‘Electricity generation, capacity, and sales in the United States’.

[21] Reuters (2026) ‘Amazon shares slide as $200 billion outlay fans fears over AI returns’, 6 February.

[22] Oxford Economics (2026) ‘The North–South regional divide in AI adoption’, 9 March.

[23] Eurostat (2023) ‘56% of EU people have basic digital skills’, 15 December.

[24, 25, 28, 29] Aldasoro, I., Gambacorta, L., Pál, R., Revoltella, D., Weiss, C. and Wolski, M. (2026) ‘How AI is affecting productivity and jobs in Europe’, VoxEU/CEPR, 17 February.

[26] OECD (2025) Results from TALIS 2024: The State of Teaching. TALIS, OECD Publishing, Paris.

[27] Daher, R. (2025) ‘Integrating AI literacy into teacher education: a critical perspective paper’, Discover Artificial Intelligence, 5, 217.