“Designing With a Single Prompt”: From a Technology Race to an Applications Race, AI Reshapes the Design Tools Market

The axis of AI competition is shifting from algorithm-centric rivalry to application-ecosystem dominance.
Canva, Adobe, and Figma are expanding design AI through integrated workflow-based systems.
A full-scale reshaping of the design market is underway as big tech enters the space and agentization accelerates.

The artificial intelligence (AI) industry is shifting away from an algorithm race aimed at securing foundational technologies and toward a contest to dominate the “application ecosystem” that maximizes real-world workplace efficiency. Next-generation design models, unveiled in rapid succession by design software companies and big tech AI firms alike, are driving change in the market by establishing integrated workflows that understand user intent and existing work context.

Canva Unveils ‘AI 2.0,’ Letting AI Handle Design Once an Idea Is Entered

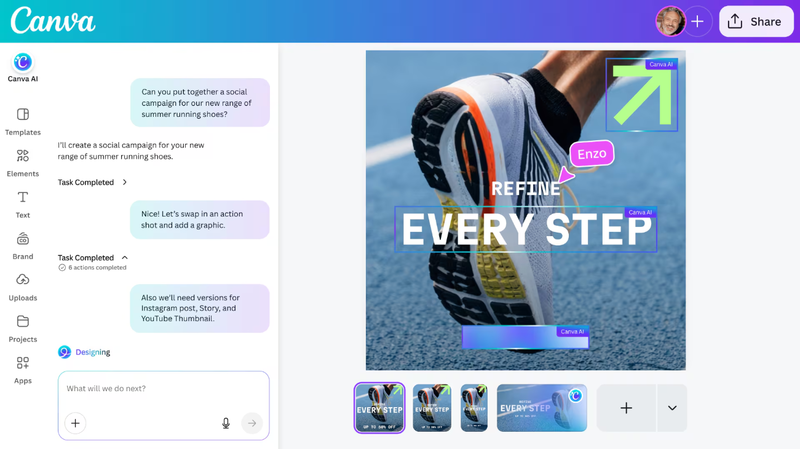

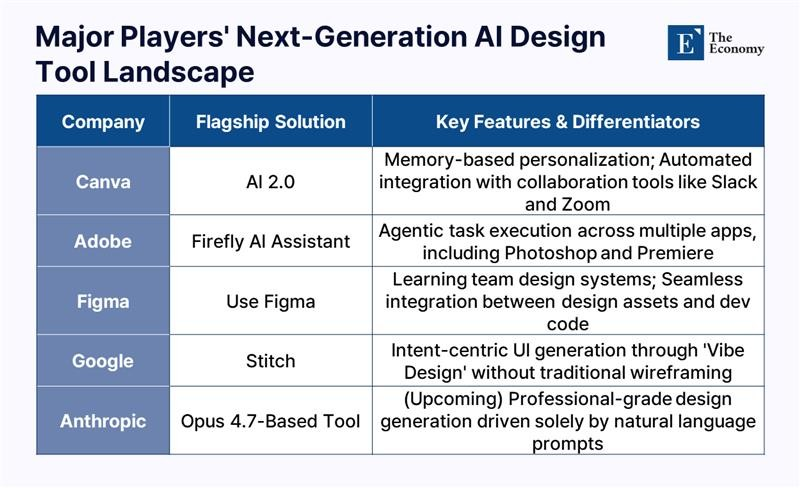

According to IT industry sources on April 17, design platform Canva recently declared its transition into an AI-centered company. Its strategy is to move beyond simply providing functions for easily creating visual content and become a platform that supports the entire creative workflow, from design conception to final output. Melanie Perkins, Canva’s co-founder and chief executive officer (CEO), introduced the AI-powered design model “Canva AI 2.0” at an online press briefing held on April 10, describing it as “an integrated design AI platform that dramatically boosts productivity in visual content creation.”

Powered by a design-specific AI model developed by Canva’s AI lab, Canva AI 2.0 supports users in completing the entire process, from ideation and material search to actual design work, in one place. It adopts a conversational structure similar to widely used AI chatbots such as OpenAI’s ChatGPT and Google’s Gemini. When users enter requests in text or voice through the chat window, the AI carries out content creation and revisions by referencing their past work history. Canva AI 2.0 is equipped with a memory function, enabling it to learn from users’ previous design outputs and generate more personalized, tailored content.

It also infers the purpose and intended use of the content ahead of time from conversations exchanged through workplace communication tools such as email and Slack, and applies that information to the task. For example, it can generate a customized presentation within one minute based on meeting information uploaded to Zoom or Google Calendar, or automatically create weekly team announcements by referring to the latest information posted on Slack.

Canva, headquartered in Sydney, Australia, ranked third in monthly global visits among generative AI apps in a recent tally by U.S. venture capital firm Andreessen Horowitz (a16z), trailing only ChatGPT and Gemini. a16z described Canva as “a representative case of a software company successfully transforming into an AI company.” While the rise of AI-based image-generation tools, including Google’s “Nano Banana,” had raised concerns that design software companies would come under pressure, Canva has held its ground. After rapidly integrating AI across its platform, Canva posted annual recurring revenue of $4 billion last year, up nearly 50% from a year earlier. Its monthly user base stands at 265 million, of whom 31 million are paid subscribers. As enterprise clients have also increased, related revenue has exceeded $500 million.

Adobe and Figma Also Roll Out AI Design Tools

Adobe, best known for Photoshop, has also signaled its entry into the AI market with the AI agent “Firefly AI Assistant.” The feature was first unveiled last October under the name “Project Moonlight” and is set to launch this month under its official name. When users enter a desired task in text, the assistant completes the work by moving directly across multiple apps, including Firefly, Photoshop, Premiere, Lightroom, Express, and Illustrator.

As a result, users no longer need to learn how to use individual apps and can obtain the desired output simply by instructing the AI directly. For instance, when creating a social media advertisement to promote a coffee shop opening, a user only needs to drag in several photos, and the AI assistant automatically processes them through multiple steps, such as adjusting composition and blurring backgrounds, to produce a suitable final format. Outputs can be refined not only through text input but also through buttons and sliders, and the system is designed so users can intervene at any point during the workflow.

Design software company Figma has also moved to expand its portfolio by launching new AI-based products. Last month, Figma released in beta the “Use Figma” tool and the “Skills” feature, which allow AI agents to directly generate and modify designs on the Figma canvas. The functions are provided through Figma’s Model Context Protocol (MCP) server and can be used with major MCP clients such as Claude Code and OpenAI Codex.

The core of the new features lies in enabling AI agents to directly understand and use team design systems. Because assets can be generated and revised based on existing design resources, inconsistencies between design and development can be reduced. In previous workflows, discrepancies repeatedly emerged between AI-generated user interfaces (UI) and actual design systems. The latest features reflect naming conventions and structural library composition, enabling agents to operate within the same context and thereby allowing smoother movement between code and canvas.
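For readers unfamiliar with MCP, the following is a minimal sketch of the kind of message an MCP client sends to a server when an agent invokes a tool. MCP is built on JSON-RPC 2.0, and tool invocations use the `tools/call` method. The tool name `use_figma` and the `instruction` argument below are hypothetical placeholders chosen for illustration; they are not taken from Figma's documented schema.

```python
import json


def build_tool_call(request_id, tool_name, arguments):
    """Build an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# Hypothetical tool name and arguments, for illustration only.
request = build_tool_call(
    1,
    "use_figma",
    {"instruction": "Create a login screen using the team's button component"},
)

# An MCP client would serialize this and send it over its transport
# (for example stdio or HTTP) to the MCP server.
print(json.dumps(request, indent=2))
```

In practice, a client such as Claude Code or OpenAI Codex would construct and send these messages on the agent's behalf, so users would not normally write them by hand.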

Big Tech Players Including Google and Anthropic Join the Fray

What stands out is big tech’s entry into the design market. On March 18, Google unveiled upgraded functions for “Stitch,” an AI-based design canvas that instantly turns ideas into actual software design. The tool’s core innovation is the introduction of the “Vibe Design” concept, which develops design based on user intent and feel rather than requiring a complex wireframe production process. In other words, this marks more than an evolution of simple design tools; it represents a new method of generating UI centered on human “intent.”

Users can begin designing simply by describing in natural language their business goals, the emotions they want users to feel, or examples they find inspiring. The canvas directly accommodates multiple forms of context, including images, text, and code, supporting the entire process from early ideation to the production of functioning prototypes. In particular, the newly introduced “design agent” reasons across a project's entire evolution, while the “agent manager” function helps users systematically manage multiple design options in parallel.

Anthropic is also preparing to launch a design AI tool. U.S. IT outlet The Information reported on April 14, citing industry sources, that Anthropic plans to release the tool alongside its next-generation model “Opus 4.7.” The core of the tool likewise lies in generating expert-level designs simply by entering commands. Following the report, the share prices of Adobe, Wix, and Figma each fell more than 2%, reflecting concerns over intensifying AI competition. Because the tool is designed to be usable by non-developers as well as developers, it is seen as likely to affect the broader design and productivity tools market.