“AI Competition Backlash” Exposes Limits of U.S. Power Grid as Next-Generation Data Center Strategies Accelerate

Explosive growth in AI data centers intensifies grid bottlenecks

Aging transmission infrastructure and shortages of power equipment expose supply constraints

Unconventional infrastructure from subsea to orbital data centers gains traction

The rapid proliferation of artificial intelligence (AI) data centers is placing enormous strain on the U.S. power grid. As competition in generative AI intensifies, hyperscale data center construction is surging across the country, while the grid is already confronting both supply limitations and bottlenecks tied to aging infrastructure. The situation is being exacerbated by shortages of transformers, transmission lines, and grid interconnection equipment, raising concerns over weakening industrial competitiveness and steep increases in household electricity costs.

PJM Warns of Power Shortages Beginning Next Year

According to Bloomberg on the 7th, David Mills, chief executive officer of PJM Interconnection — the largest grid operator in the United States — said in a letter released the previous day that unprecedented growth in electricity demand requires a sweeping overhaul of the U.S. power system. Mills stated that ensuring sufficient electricity supply while simultaneously preventing sharp increases in residential power bills has become impossible.

“The current situation is not tenable,” Mills said, adding that “the pressures reflected in prices, reserve margins, and investment pipelines indicate a more fundamental problem rather than a design issue requiring marginal adjustments.” The crisis confronting PJM includes potential electricity shortages beginning as early as next year, alongside moves by American Electric Power, one of the largest U.S. utilities, to withdraw from the organization.

The primary driver is AI data centers, which account for 94% of incremental electricity demand growth within PJM’s service territory. Big Tech firms including Amazon, Microsoft, Alphabet, and Meta are racing to construct hyperscale facilities as part of the generative AI infrastructure war. While data centers are requesting 5–7 gigawatts (GW) of new grid connections annually, newly connected generation capacity remains limited to roughly 2–3 GW per year, so new supply is failing to keep pace with the acceleration in demand.
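The mismatch between requests and additions can be put in rough numbers. The sketch below is a back-of-the-envelope illustration only: it treats requested connection capacity and new generation capacity as directly comparable, which is a simplification, and the function and variable names are hypothetical.

```python
# Figures quoted above for PJM, in GW per year (low, high).
requested_gw = (5.0, 7.0)  # new data center grid connection requests
added_gw = (2.0, 3.0)      # newly connected generation capacity

def annual_gap_range(requested, added):
    """Return the (smallest, largest) yearly shortfall in GW.

    Assumption: requests and additions are compared one-for-one,
    ignoring load factors, retirements, and project cancellations.
    """
    smallest = requested[0] - added[1]
    largest = requested[1] - added[0]
    return smallest, largest

gap_low, gap_high = annual_gap_range(requested_gw, added_gw)
print(f"Annual shortfall: {gap_low:.0f} to {gap_high:.0f} GW")
```

Under these assumptions, the gap between what is requested and what is added widens by roughly 2 to 5 GW each year.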

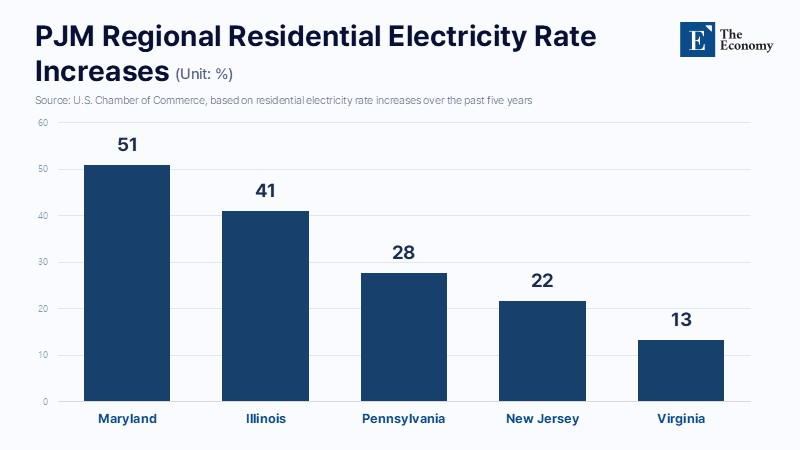

According to a report released on the 5th by the U.S. Chamber of Commerce, electricity prices within PJM’s jurisdiction have risen steeply over the past five years. Maryland and Illinois in particular saw rates surge 51% and 41%, respectively. Wholesale power prices have also skyrocketed. Clearing prices in PJM capacity auctions jumped from $29 per megawatt-day (MW-day) for the 2024–2025 delivery year to $329 for 2026–2027 and $333 for 2027–2028, a more than elevenfold increase within three years. The surge is expected to translate directly into higher household electricity bills, shifting the burden onto consumers.
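The "elevenfold" figure follows directly from the auction prices quoted above. The short calculation below is a minimal check of that arithmetic; the variable names are illustrative and the prices are the rounded values from the article, not exact auction results.

```python
# Capacity auction clearing prices quoted above, in $/MW-day (rounded).
baseline_price = 29.0  # earliest auction price cited in the article
later_prices = {"2026-2027": 329.0, "2027-2028": 333.0}

def price_multiple(old_price, new_price):
    """Return how many times larger new_price is than old_price."""
    return new_price / old_price

for period, price in later_prices.items():
    multiple = price_multiple(baseline_price, price)
    print(f"{period}: {multiple:.1f}x the baseline price")
```

Both later auctions come out at a little over eleven times the baseline, consistent with the multiple cited in the text.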

PJM consequently warned that power shortages totaling 6.6GW could emerge as early as next summer. The deficit would be equivalent to multiple large-scale nuclear reactors going offline simultaneously. A policy document released alongside the letter outlined three measures aimed at narrowing the “credibility gap” between the need for elevated electricity prices to incentivize new power plant construction and the parallel objective of containing consumer utility costs. Mills emphasized that “power producers, utilities, investors, and consumers must at least believe that the rules are fair, stable, and the product of a trustworthy process.” He added that “there are only years remaining to make these choices carefully, not decades.”

The Next AI Bottleneck Is the Power Grid

Within the U.S. power industry, assessments are rapidly spreading that “the next bottleneck for AI is not semiconductors, but the power grid.” Goldman Sachs estimates that AI data center electricity demand could account for 11% of total U.S. power consumption by 2030. Yet reserve power margins across the United States continue to decline, while the pace of new grid expansion is falling far behind the rate at which aging power plants are being retired. Insufficient grid capacity is already emerging as a major factor slowing data center development across the country. The U.S. power network is fragmented by states and municipalities rather than operating as a unified national grid. Large-scale investment is therefore becoming unavoidable to resolve regional transmission bottlenecks.

An even more serious issue is the shortage of critical electrical equipment such as transformers and switchgear. Shortages of ultra-high-voltage transformers in particular are being identified as one of the most severe constraints, and lead times have become the industry’s most immediate concern. For large power transformers, waits of two to three years from order to installation have become common, while some high-capacity units now require as much as five years. AI data centers are generally built on cycles of roughly 18 months, so the power equipment can take longer to arrive than the facility takes to construct.

America’s 110-volt electrical system is also being cited as a key source of inefficiency. Delivering the same amount of power at a lower voltage requires a higher current, and because heat loss from wire resistance rises with the square of the current, a 110V circuit loses significantly more energy than a 220V circuit while placing a heavier burden on wiring infrastructure. Data centers typically bring in power at a higher voltage such as 480V to improve efficiency and then convert it again internally, though the conversion process itself introduces additional energy losses. Experts estimate that the United States will require more than $1 trillion in investment over the next decade to modernize its power grid.
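The voltage argument can be illustrated with the standard resistive loss relation, in which current equals power divided by voltage and wire loss equals current squared times resistance. The sketch below assumes a simple single-phase circuit with a purely resistive load and the same wire in both cases; the 1,100 W load and 0.1 ohm wire resistance are hypothetical values chosen only for illustration.

```python
def wire_loss_watts(load_w, voltage_v, wire_resistance_ohm):
    """Heat dissipated in the wiring for a given load and supply voltage.

    Assumptions: single-phase circuit, resistive load (power factor 1),
    and identical wire resistance at both voltages.
    """
    current_a = load_w / voltage_v            # I = P / V
    return current_a ** 2 * wire_resistance_ohm  # P_loss = I^2 * R

LOAD_W = 1100.0    # hypothetical load
WIRE_OHM = 0.1     # hypothetical wire resistance

loss_110 = wire_loss_watts(LOAD_W, 110.0, WIRE_OHM)
loss_220 = wire_loss_watts(LOAD_W, 220.0, WIRE_OHM)

print(f"Loss at 110 V: {loss_110:.1f} W")
print(f"Loss at 220 V: {loss_220:.1f} W")
print(f"Ratio: {loss_110 / loss_220:.1f}x")
```

Under these assumptions, halving the voltage doubles the current and quadruples the heat lost in the wire, whatever load and resistance values are chosen.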

From Underwater to Space, Unconventional Alternatives Gain Momentum

Some observers, however, argue that a full-scale replacement of America’s aging power grid is unlikely to be either practically feasible or economically viable. Even with enormous capital expenditures, AI data center demand is expected to rise far faster than nationwide transmission and distribution upgrades can be completed. For that reason, momentum is building behind strategies that concentrate data centers in specific regions to establish self-contained energy ecosystems, or that deploy unconventional infrastructure capable of resolving power and cooling challenges at the same time, rather than attempting to comprehensively rebuild the existing grid architecture.

At present, the most closely watched alternative is the orbital data center. Google is reportedly advancing a pilot project to operate its next-generation AI model Gemini within space-based data centers. Orbital facilities can receive uninterrupted solar energy regardless of day-night cycles, enabling power generation utilization rates exceeding 95%, while the vacuum of space also allows for natural cooling. Significant obstacles nevertheless remain, including radiation shielding, debris collisions, and transmission latency. Chris Hayes, chief technology officer at Starcloud, said that “power efficiency can be ten times greater than on Earth, but costs are one hundred times higher,” adding that “space data centers remain at the laboratory stage.”

Subsea data centers have also emerged as a major alternative. Microsoft’s “Project Natick,” conducted off the coast of Scotland in 2018, achieved a Power Usage Effectiveness (PUE) ratio of 1.07, demonstrating world-leading efficiency. PUE — a key metric for data center energy efficiency — measures total facility power consumption divided by the electricity consumed directly by IT equipment such as servers, storage systems, and networking hardware. A PUE of 1.07 indicates that energy used for ancillary functions such as cooling amounted to only 7% of the electricity consumed by core IT operations. Chinese firm Hailanyun later began construction of a subsea data center off the coast of Shanghai integrated with offshore wind power generation. The facility is expected to source 97% of its electricity from renewable energy.
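The PUE definition above reduces to a single division. The sketch below works through it with hypothetical energy figures chosen to reproduce the 1.07 ratio reported for Project Natick; the kWh values and function names are illustrative, not measurements from the project.

```python
def pue(total_facility_kwh, it_equipment_kwh):
    """Power Usage Effectiveness: total facility energy / IT energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    return total_facility_kwh / it_equipment_kwh

def overhead_share(pue_value):
    """Non-IT energy (cooling, power conversion, etc.) as a fraction of IT energy."""
    return pue_value - 1.0

# Hypothetical figures: 1,000 kWh used by IT equipment, 1,070 kWh in total.
it_kwh = 1000.0
total_kwh = 1070.0

ratio = pue(total_kwh, it_kwh)
print(f"PUE: {ratio:.2f}")
print(f"Overhead relative to IT load: {overhead_share(ratio):.0%}")
```

A perfectly efficient facility would score 1.0, so the closer the ratio is to that floor, the less energy is spent on anything other than computing.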

Floating data centers have recently begun attracting attention as well. The concept is literal: building data centers on the ocean surface. While no large-scale commercial examples have yet materialized, countries and corporations seeking to expand AI data center infrastructure have already begun conducting feasibility assessments, with both coastal docking models and fully offshore facilities considered technically viable. One of the biggest advantages is the relative ease of securing space. Constructing both power generation facilities and data centers offshore could substantially reduce the land acquisition costs typically required for terrestrial developments. The approach also helps with managing thermal loads, given the abundant seawater available for cooling. Further reductions in electricity costs may be achievable if floating facilities incorporate their own green energy generation systems or draw on nearby offshore wind farms.