AI Regulation Is Not Too Uncertain, It Is Too Heavy

AI regulation is no longer just uncertain; it is becoming too heavy. Layered rules now protect large firms and weaken smaller AI challengers. The next AI leaders will be those that balance safety with room to build.

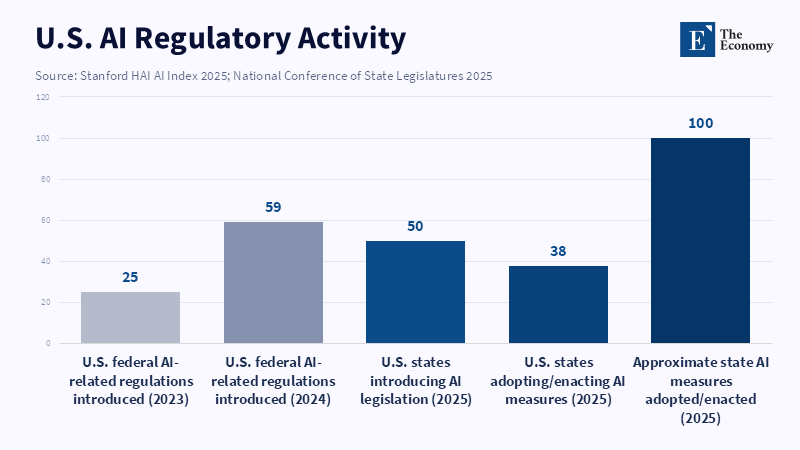

Every one of the 50 U.S. states introduced AI legislation in 2025, and 38 states enacted around 100 AI-related measures. That's not a policy desert. It's the outline of a dense legal wall. The popular narrative that regulation hinders innovation mainly through uncertainty underestimates what businesses, investors, and researchers already know: AI regulation is not a far-future concern for mature economies. It's a rising pile of actual mandates, overlapping legal authority and climbing compliance risk. The key question is not whether firms understand the rules but whether the rules are becoming too onerous for everyone other than the largest firms. This matters because the global AI race is moving faster than the legal tools states have to hand. If the United States and Europe conflate the weight of regulation with the soundness of policy, they may simply choke off competition and limit who can build and lead the next wave of AI.

AI regulation has become a real cost

The argument that far-off uncertainty is the biggest obstacle to AI innovation sounds logical, but it's incorrect in today's environment. The US government, even as it pushes for deregulation under the 2025 executive order on removing barriers to American AI leadership, faces a more complicated legal environment. Stanford's 2025 AI Index reported that US federal agencies issued 59 AI-related regulations in 2024, more than double the number in 2023. The pace at the state level is even faster, with every state introducing AI legislation in 2025 and 38 states passing close to 100 measures. California’s SB 53, for example, requires frontier AI developers to publish safety frameworks and report critical incidents, and it exposes them to civil penalties for noncompliance. That's not a dormant market in a haze of unclear legislation; it's a market operating under an expanding rulebook from many sources.

The political climate points to the same conclusion. The White House's push for Congress to preempt what it views as onerous state AI rules was welcomed by many in the AI industry, who saw it as a way to escape a complex patchwork of state statutes. But the appeal of this promised simplification makes the point: companies are not reacting to vague future rules; they are already hitting a concrete and visible regulatory wall shaped by conflicts between federal and state authority. When regulation is this active, talk of "uncertainty" obscures a clearer picture. Firms are more worried about how to manage a tightly constraining, though shifting, legal environment. The weight of regulation keeps growing even while lawmakers are still debating final policy principles, and that growing weight forces companies to plan across several legal environments at once.

The same is true in Europe, even granting that some regulation is a good thing. The European Commission states that the AI Act came into force in August 2024, with prohibited practices banned from February 2025, obligations for general-purpose models applying from August 2025, enforcement starting from August 2026 and high-risk use enforcement from May 2026. Moreover, fines of up to €35 million or 7% of global annual turnover can be levied for the most serious infringements. The OECD has also warned that Europe’s AI framework needs less cumulative burden and clearer sector-specific guidance. The same fundamental point applies: while I support the overall direction of AI policy, the big worry is not lack of clarity but huge compliance costs spread over many frameworks.

AI regulation now prefers scale to velocity

Few regulations hit all businesses uniformly. New rules demand resources: lawyers, documentation, risk teams, filing systems, monitoring protocols, model testing and staff training. Large companies can bear these fixed costs. Smaller competitors can't. That's the structural burden of AI mandates. Venture funding for AI remains healthy: $222 bn in US venture transactions, 65% of all deal value, and $109 bn invested in US private AI businesses in 2024. But a concentration trend is defining the sector: heavier regulation entrenches the large players that can build an integrated compliance apparatus well before they earn any revenue, rather than rewarding those with superior innovation. The added legal friction acts as an enforced "scale premium", rewarding rich enterprises and deterring future challengers.

This trend is intensified by existing barriers to adopting AI. According to the OECD, maintenance costs, lack of time and lack of skills continue to be major obstacles to adopting digital tools and AI. Additional administrative layers also slow applications to public programs. The policy burden therefore falls hardest on SMEs: they are hampered not only by the old problems but also by several overlapping mandates.

In this light, the discussion of "clarity" can be dangerous: even a startup that fully understands the separate regimes of Europe, California and other jurisdictions may still find the combined tangle unmanageable. Make no mistake, this is not a theoretical uncertainty; it is an obstacle to running a firm. If policymakers overlook this, incumbents will win and newcomers will be starved.

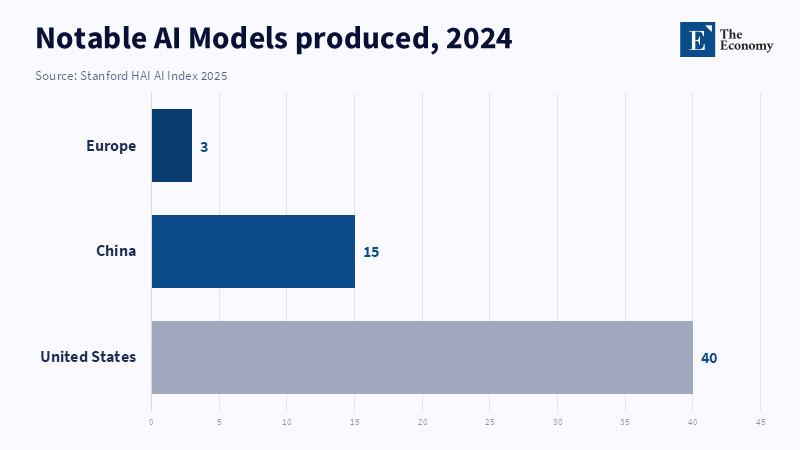

This issue is bigger than domestic policy. It's a matter of industrial strategy. While the US leads in funding and model output, its competitive edge is narrowing where it really makes a difference: the performance of its frontier models. In 2024, for instance, the US produced 40 notable new models, versus 15 in China and 3 in Europe. Yet the 2026 AI Index shows that the frontier performance gap between the US and China is shrinking.

By March 2026, the leading U.S. model was ahead of the leading Chinese model by only 2.7% on the benchmark reported by Stanford HAI. China already holds advantages in papers, citations, patents and machinery. While the developed economies debate among themselves, I can see the most compelling launchpads becoming the places with the most money, the most resources and the fewest rules, at least in the minds of established players in academia and industry. We should therefore ask not only who is best at designing the most trustworthy AI, but also who is not strangling innovation behind regulated borders.

This is not an abstraction for firms, public agencies, hospitals, banks or research institutions. When domestic AI capability declines, these entities do not stop using AI. They purchase the best tools available, even when those tools are built under foreign rules, incentives and strategic goals. This is the larger price of excessive regulation: it not only holds back local innovators but also makes economies reliant on tools they did not create and do not control.

AI regulation should be narrow in scope and build state capacity

Advanced AI will exacerbate fraud, increase danger to essential services, create biosafety concerns, harm children, increase discrimination, and contribute to the circulation of lies. Our ability to commercialize it may also depend on public trust. But there is a world of difference between building such trust with well-specified laws and imposing a vast generalist framework in the expectation that all else will follow. A framework like California's should be drafted in the spirit of guiding the best talent and letting the smallest startups launch, as well as encouraging other states and jurisdictions to align. The OECD offers similar advice: less generalism and more specificity, meaning sector rules, less accumulation of mandates and more practical guidance on interpreting regulations. Good regulation means guidance, not laxity. It's the opposite of vague. It should make AI compliance achievable, not just a box to tick. Any new directive should therefore rest on narrow limits, supported by cross-cutting tools such as clear positive or negative lists, common templates, safe harbors and stable long-term interpretive guidance, so that the path from directive to implementation stays smooth.

The other aspect can't be overstated: narrow rules must be matched with state capacity. I am talking about capable AI regulators, shared testing facilities and hardware, clean procurement policies and open R&D programs, available to more than a limited set of players and states. Without that, a specific regulatory mandate is just a dead letter open to selective enforcement. No one is truly served if dependability, restraint in the use of mandates, public safety and market predictability are achieved only in headlines. That is regulation that looks robust in the headlines but fails in execution.

Another equally relevant factor is the false assumption that speed trumps harmony in regulation. Without a federal law that binds the entire industry, the threat of preemption just opens a different front, with federal and state officials, affected parties and industry rushing ahead and scrambling over jurisdiction. The likely outcome is legal wrangling over whose regulation applies, and consensus on streamlined mandates can come only once that fight is settled. The priority should be reducing duplicated, overlapping regimes, improving audit rules and helping small firms and researchers meet technical standards and government requirements. We should set stable rules that limit real hazards without erecting excessive entry barriers. Only if Washington, London and the European capitals excel at that can the marketplace thrive under clean regulation. Otherwise, it will belong to the richest and fiercest.

The fact that all 50 U.S. states introduced AI legislation does not show an empty policy field. It shows a crowded one. With every American state now working on some form of AI rule, each market risks being overregulated and underinvested, which makes it hard for anyone to operate easily. In an industry that develops as quickly as AI, states should recognize that they do not need regimes so elaborate that someone will eventually find a way around them. The problem is not excessive caution or concern for good behavior. The real concern is that policymakers seem to equate a high volume of regulation with mastery over a technology. With any other technology, that could be just a blunder. But in AI, it might dictate the whole story. The coming decade will not be defined by the jurisdiction most eager to certify that every firm provides reliable AI, but by the jurisdictions that can guarantee safety, open market entry and long-term reliability of infrastructure.

The views expressed in this article are those of the author(s) and do not necessarily reflect the official position of The Economy or its affiliates.

References

Babwah Brennen, S. and Viñals Musquera, A. (2026) ‘Regulatory uncertainty is what actually holds back innovation’, Brookings, 20 April.

Bengio, Y., Clare, S., Prunkl, C., Andriushchenko, M., Bucknall, B., Murray, M., Bommasani, R., Casper, S., Davidson, T., Douglas, R., Duvenaud, D., Fox, P., Gohar, U., Hadshar, R., Ho, A., Hu, T., Jones, C., Kapoor, S., Kasirzadeh, A., Manning, S., Maslej, N., Mavroudis, V., McGlynn, C., Moulange, R., Newman, J., Ng, K.Y., Paskov, P., Rismani, S., Sastry, G., Seger, E., Singer, S., Stix, C., Velasco, L., Wheeler, N., Acemoglu, D., Conitzer, V., Dietterich, T.G., Hinton, G., Jennings, N., Leavy, S., Ludermir, T., Marda, V., Margetts, H., McDermid, J., Munga, J., Narayanan, A., Nelson, A., Neppel, C., Ramchurn, S.D., Russell, S., Schaake, M., Schölkopf, B., Soto, A., Tiedrich, L., Varoquaux, G., Yao, A. and Zhang, Y.-Q. (2026) International AI Safety Report 2026. London: Department for Science, Innovation and Technology.

California State Legislature (2025) Senate Bill No. 53: Artificial intelligence models: large developers. Sacramento, CA: California Legislative Information.

European Commission (2024) ‘AI Act enters into force’, European Commission, 1 August.

European Commission (2026) ‘AI Act’, Shaping Europe’s Digital Future. Brussels: European Commission.

European Commission (2026) ‘Guidelines for providers of general-purpose AI models’, Shaping Europe’s Digital Future. Brussels: European Commission.

European Parliament (2025) ‘EU AI Act: first regulation on artificial intelligence’, European Parliament, 19 February.

Federal Register (2025) ‘Removing Barriers to American Leadership in Artificial Intelligence’, Federal Register, 90(20), 31 January.

Governor of California (2025) ‘Governor Newsom signs SB 53, advancing California’s world-leading artificial intelligence industry’, Office of Governor Gavin Newsom, 29 September.

Kim, S.M. and O’Brien, M. (2026) ‘White House moves to strip California and other states of AI regulation power’, Los Angeles Times, 20 March.

Maslej, N., Fattorini, L., Perrault, R., Gil, Y., Parli, V., Kariuki, N., Capstick, E., Reuel, A., Brynjolfsson, E., Etchemendy, J., Ligett, K., Lyons, T., Manyika, J., Niebles, J.C., Shoham, Y., Wald, R., Walsh, T., Hamrah, A., Santarlasci, L., Betts Lotufo, J., Rome, A., Shi, A. and Oak, S. (2025) The AI Index 2025 Annual Report. Stanford, CA: AI Index Steering Committee, Stanford Institute for Human-Centered Artificial Intelligence.

National Conference of State Legislatures (2025) ‘Artificial Intelligence 2025 legislation’. Denver, CO: National Conference of State Legislatures.

National Venture Capital Association (2026) 2026 NVCA Yearbook. Washington, DC: National Venture Capital Association.

Organisation for Economic Co-operation and Development (2026) Empowering SMEs in the Age of AI: The 2026 OECD D4SME Survey. Paris: OECD Publishing.

Organisation for Economic Co-operation and Development (2026) Progress in Implementing the European Union Coordinated Plan on Artificial Intelligence, Volume 2: Uptake in High-Impact Sectors. Paris: OECD Publishing.

Stanford Institute for Human-Centered Artificial Intelligence (2026) The AI Index 2026 Annual Report. Stanford, CA: Stanford University.

The White House (2025) ‘Removing Barriers to American Leadership in Artificial Intelligence’, The White House, 23 January.

The White House (2025) ‘Ensuring a National Policy Framework for Artificial Intelligence’, The White House, 11 December.