Thin-File Borrowers Need Data Rights, Not Data Silence

Thin-file borrowers need fair data visibility

Privacy should protect, not erase proof

Better data rules can expand credit access

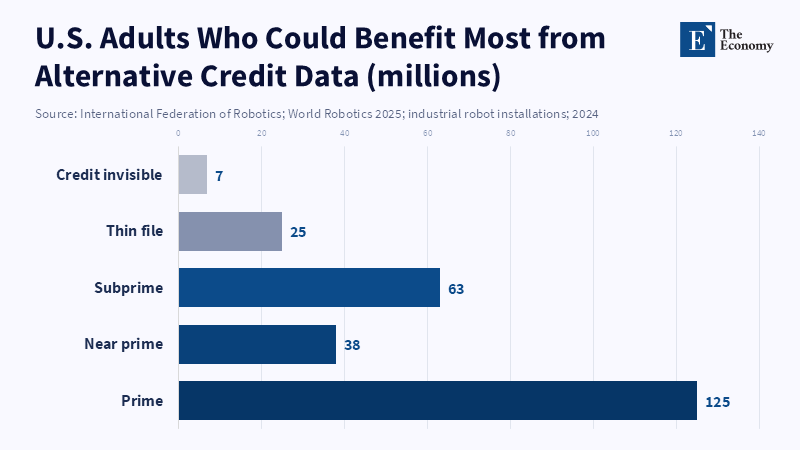

A credit system can be digital and still be blind. In the US, a recent Federal Reserve estimate put the number of "unscorable" Americans at around 32 million: 7 million with no credit history and 25 million with files too thin to generate a traditional score. That is not a trivial margin of error at the edge. It is an invisible population the size of a large country. Thin-file borrowers rarely lead lives without financial activity: they pay the rent, collect a paycheck, send cash, top up a phone, pay the bills and steer their way through shifting jobs and income streams. The problem is that the formal credit file, supposedly all-seeing, sees almost none of it. Privacy is now likely to sit at the heart of the debate. The question is no longer whether privacy should be used to shield thin-file borrowers. It is whether it can shield them without obliterating the data that might show they can repay.

The privacy trade-offs that thin-file borrowers face

The credit market was already closed to thin-file borrowers long before fintech. Data was already closely mapped to the existing distribution of risk. Scores were based on borrowing, credit card usage and repayment of debts reported to bureaus: in other words, on anything that fit existing risk assumptions. This suited people who already participated in mainstream credit perfectly, but it did not suit young workers, new migrants, gig workers, small businesses, cash-in-hand earners, or families desperately trying to avoid debt. The thin file is read as risk, not merely as silence. The discussion about data protection should therefore shift. Data privacy rules are not a simple form of self-defense. They are also rules about who can be seen and how. Badly drawn provisions can end up playing a resurgent gatekeeper role, restoring default exclusion from credit for those who do not have the right data.

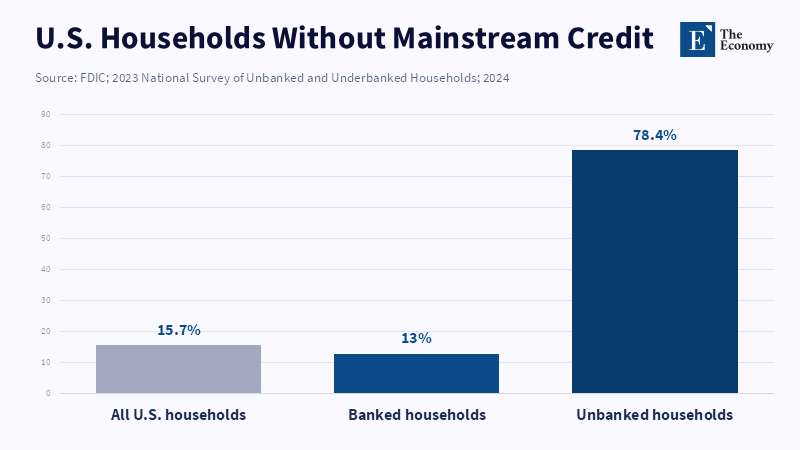

"Borrowers without traditional credit histories experience greater affordability constraints and reduced access to credit, perpetuating financial exclusion," said the FDIC and fellow agencies. "Significantly, the use of alternative data in credit decisions allows more consumers to access credit by enabling lenders to evaluate the creditworthiness of individuals who might not otherwise qualify." As recently as 2023, the FDIC found that 15.7 percent of US households lacked access to mainstream credit (down from 20 percent in 2017), which leaves a significant portion of the population vulnerable to credit access problems. The FDIC also found that in 2023 approximately 4.2 percent of US households, around 5.6 million households, lacked a bank or credit union account, leaving their payment activity outside the scope of traditional credit bureaus. A 2023 study of India's largest digital lender found that tighter data regulations increased demand while forcing lenders to undertake more thorough screening, disproportionately affecting low-income borrowers as well as young and first-time applicants. A study by Adam Bouyamourn and Alexander Williams Tolbert shows that risk management caps, like value at risk (and its derivatives), can cause systemic risk to percolate behind the scenes, enabling creditors to exclude certain applicants from the formal credit process altogether and depriving them of early opportunities to build experience with formal credit.

This is not to say that privacy rules will always disadvantage thin-file borrowers. Those most vulnerable to uncapped consent provisions, broadly phrased data-sharing notices, or insidious profiling are often those least able to combat them. A lender that requests access to one's Facebook page, browser history, contacts list, health indicators, or even geolocation history should not automatically be granted the complimentary status of "innovator." It may be something more like "personal life as collateral." But then again, a blanket ban on potentially relevant financial data would disadvantage precisely those people. Income and expenditure records, rent payments, utility bills, employer payroll records and verified bank account performance may not be perfect, but they are better indicators of repayment capacity than an empty profile. The purpose of the policy is to distinguish the kind of data that reveals one's capacity to pay from the kind of data that exploits one's vulnerabilities.

Linking loans to savings: Borrowers often need to have a means of repaying loans

The primary value proposition for alternative credit data is not that it makes lending more exciting, but that it makes the "unknown" knowable. An otherwise thin-file borrower with no credit card history may have two years of perfect rent payments, stable cash flows, no excessive overdrafts and steady utility bill payments. On a standard bureau report, this borrower registers as a blank. On a permissioned cash flow model, this borrower may be low risk. Both the World Bank and the International Committee on Credit Reporting have chronicled examples over the past ten years of using utility, telecom, rent and cash flow data to assess thin-file and no-file clients, and alternative data may boost model accuracy by anywhere from 5 to 20 percent beyond bureau-only scores. The goal is not to replace the guesswork of bureaus with digital guesswork, but rather to produce "quasi-files" that populate thin records with highly relevant, well-proven signals.

More broadly, it is also clear that privacy and data use work best together when controls are user-centric rather than built on data elimination. Research by Trong Nguyen studied the implementation of the California Consumer Privacy Act in several industries, including mortgages, though the study does not report conclusions on changes in fintech mortgage rates. A paper published by the Bank for International Settlements indicates that privacy controls may be a barrier to the growth of fintech firms and may diminish competition in the financial sector, rather than supporting the view that these controls leave data-driven lending unaffected. A carefully thought-out rule fosters trust and willingness to share information and makes it easier for lenders to price risk, while a badly designed one takes away the data point and drives lenders away.

Part of the problem is that the debate is still frequently centered on fairness rather than on the macro market design consequences of exclusion. Fintech companies, banks and credit unions all have an incentive to grow in this segment: prime borrowers are already fought over by every lender mining the same bureau data. The ones being mispriced are thin-file borrowers, because the market is unable to distinguish bad repayment behavior from too little information. This is precisely the opportunity, which is why lenders are relying on open banking, rent reporting, payroll analysis, device-based fraud detection and cash flow underwriting. Protecting the profit motive should not be the main concern of public rules. Rules should allow lenders to score risk consistently without adding information about private lives, and to recognize good borrowers whose records are scattered across multiple separate, siloed data sources.

Improved credit rules for thin-file borrowers

First, any new rules should ensure that the data used passes a simple test: is the source directly, clearly and verifiably related to the indicators that determine a person's willingness and ability to pay? Only that type of data should be used, and it should be subject to strong regulation. Payroll, recurring direct deposits, utility payments, rent history, steady telecom bill payments, employment verification, regulated lender loan payments and the like would be acceptable; Facebook content, search engine history, health records, private letters, political preferences, hyperlocal satellite tracking, or location data would not be. These other sources may well have some power to predict credit risk, but predictive power alone does not justify using data that is not actually relevant to the decision at hand. In the credit market, data should be relevant, fair, interpretable and proportionate, not merely predictive. Otherwise, models that claim to open the gates will only deliver a more detailed form of banishment.

Second, rules should address the other significant objection to alternative data: that it may simply be biased in a different way. The danger is genuine. Thin-file borrowers tend to come from already marginalized populations, and faulty data may treat these bleak realities as if they were sources of risk. Regulations must require lenders to test models as they develop them, monitor how they perform once they are implemented, compare results on acceptance, pricing and default across protected or disadvantaged groups and explain, in plain terms, why a would-be borrower was refused credit. The lender should not be able to reply, "The algorithm said no." The borrower should be able to understand the broad categories that led to her rejection and how to adjust her profile accordingly. That is a particularly significant consideration for thin-file borrowers. If the purpose of new data is to provide access to credit, the consumer must be able to understand how to attain credit.
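The comparison of acceptance results across groups can be illustrated with a minimal sketch. It is only an illustration under stated assumptions: the group labels, the function names and the decision records are all hypothetical, and a real monitoring program would also cover pricing and default outcomes.

```python
from collections import defaultdict

def approval_rates(decisions):
    """Map each group to its approval rate.

    `decisions` is an iterable of (group, approved) pairs, where
    `approved` is True or False. Group names are hypothetical labels.
    """
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        if ok:
            approved[group] += 1
    return {g: approved[g] / totals[g] for g in totals}

def approval_ratio(rates, group, reference):
    """Ratio of one group's approval rate to a reference group's rate."""
    if rates[reference] == 0:
        raise ValueError("reference group has no approvals")
    return rates[group] / rates[reference]

# Made-up decisions for illustration only: 80 of 100 approved in
# group_a, 30 of 100 approved in group_b.
sample = (
    [("group_a", True)] * 80 + [("group_a", False)] * 20
    + [("group_b", True)] * 30 + [("group_b", False)] * 70
)
rates = approval_rates(sample)
ratio = approval_ratio(rates, "group_b", "group_a")
print(rates, round(ratio, 3))
```

A ratio well below 1 does not prove discrimination on its own, but it is the kind of gap a lender could be required to investigate and explain.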

Third, we need to revisit consent. In too many instances of digital lending, consent is not a real choice: it is a dense web page, a point of leverage, the precondition for being considered. Regulations need to push for granular permissions that are revocable and limited to a stated purpose. The borrower might give consent for six months of bank transaction history to be used to underwrite her loan, but not for cross-selling or resale, and not for marketing or other third-party purposes. She might give consent to data on her rental history, but not her contacts, the content of her text messages, or her location. Data should expire unless its continued retention is justified and consented to. The era of blanket consent, granted before finding out what it is for, needs to be over. This is where privacy really can work for inclusion, allowing thin-file borrowers to open the window onto the fields of information they wish to show.
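One way to picture granular, revocable, purpose-limited consent is as a small record that is checked before any data is used. The sketch below is an assumption-laden illustration, not a description of any existing system: the `ConsentGrant` class, its field names, the category and purpose strings and the 180-day validity period are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date, timedelta

@dataclass
class ConsentGrant:
    """A single, narrowly scoped permission (hypothetical structure)."""
    data_category: str     # e.g. "bank_transactions" or "rent_history"
    purpose: str           # e.g. "underwriting"
    granted_on: date
    valid_days: int        # consent lapses after this many days
    revoked: bool = False  # the borrower can withdraw at any time

    def permits(self, category: str, purpose: str, on: date) -> bool:
        """True only for the named category and purpose, while unexpired."""
        if self.revoked:
            return False
        if category != self.data_category or purpose != self.purpose:
            return False
        expires = self.granted_on + timedelta(days=self.valid_days)
        return self.granted_on <= on < expires

# Illustrative grant: bank transactions, for underwriting only.
grant = ConsentGrant("bank_transactions", "underwriting", date(2025, 1, 1), 180)

print(grant.permits("bank_transactions", "underwriting", date(2025, 3, 1)))  # permitted use
print(grant.permits("bank_transactions", "marketing", date(2025, 3, 1)))     # different purpose
print(grant.permits("contacts", "underwriting", date(2025, 3, 1)))           # different category
print(grant.permits("bank_transactions", "underwriting", date(2025, 8, 1)))  # after expiry
```

The design choice is that anything not explicitly granted is refused: a different purpose, a different data category, an expired grant or a revoked one all fail the check.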

The next hurdle for thin-file borrowers

Critics will caution that additional data means increased surveillance, opacity and digital redlining. The warning is well taken. The answer is not to give all lenders a free hand to carve out their own private scoring kingdoms, but rather to build a regulated data marketplace with boundaries that are clearly defined. Responsible data providers should be certified, either by the industry or by regulators, in a way that can be independently audited. Unsavory categories of sensitive data should be excluded. Algorithm results should be rigorously measured against specific performance and fairness outcomes. Safe pilots would be crucial. Sandboxes should be restricted and controlled environments, not a safe haven for weaker players to test new sources. They should be proving grounds that show whether new sources result in more credit with no increased risk of default, cost, or discrimination, and the sources should be found wanting when they do not.

The more unsettling criticism of new data is that it will make it too easy to push high-cost credit to the desperate. This is not an abstract concern. A Fed study estimates that in 2023, 3.9 percent of US households used BNPL and approximately 1 in 8 of them had delinquencies. The study further comments that people who do not hold bank accounts are more likely to use nonbank credit if their credit records are not recognized by large lenders. That suggests the key is to connect alternative data to the quality of the product. If nothing changes but the speed at which an individual is shuttled into an expensive product, then new data will have failed. If what changes is that the pricing is fair, the experience is easy and the information a consumer shares earns him or her fresh credit, then market architecture can be transformed.

That is where the solution lies: regulators should not bar thin-file borrowers from access, but safeguard it. The safeguard runs through a core of finite, permissioned data able to generate quasi-files, firmly shielded from the kind of data intrusion that reduces individuals to numbers in a credit rating. It should rest on a decision-making process that considers not just average approval rates, but also how many opportunities to access the economy have been lost or gained. A marketplace capable of tracking millions for advertising purposes should not be blind when it comes to credit access. The future of fintech regulation should be concerned with the entire spectrum of personal finance. Protect the individual, bring forward the evidence and require that valuable data propel us toward inclusive, not hostile, growth.

The views expressed in this article are those of the author(s) and do not necessarily reflect the official position of The Economy or its affiliates.

References

Agarwal, S., Ghosh, P., Jin, P., Kundu, S., Vats, N., Wang, X. and Xu, Y. (2026) ‘When privacy protects but excludes: The hidden costs of data restrictions in digital lending’, VoxEU/CEPR, 25 April.

Board of Governors of the Federal Reserve System, Consumer Financial Protection Bureau, Federal Deposit Insurance Corporation, National Credit Union Administration and Office of the Comptroller of the Currency (2019) Interagency Statement on the Use of Alternative Data in Credit Underwriting. Washington, DC: Federal Financial Institutions Examination Council agencies.

Bradford, T. (2023) ‘“Give Me Some Credit!”: Using Alternative Data to Expand Credit Access’, Payments System Research Briefing. Kansas City: Federal Reserve Bank of Kansas City.

Christensen, G., Presler, J. and Weinstein, J. (2024) 2023 FDIC National Survey of Unbanked and Underbanked Households. Washington, DC: Federal Deposit Insurance Corporation.

Creehan, S., Gorin, D. and Noland, K. (2025) ‘Alternative Data: Expanding Access to Credit’, Consumer & Community Context. Washington, DC: Board of Governors of the Federal Reserve System.

Doerr, S., Gambacorta, L., Guiso, L. and Sanchez del Villar, M. (2026) ‘Privacy regulation and fintech lending’, Management Science. doi: 10.1287/mnsc.2025.02874.

Masunda, C. and Jabri, K. (2025) The Use of Alternative Data in Credit Risk Assessment: Opportunities, Risks, and Challenges. Washington, DC: World Bank Group and International Committee on Credit Reporting. doi: 10.1596/42970.