When One Worker Equals Hundreds: The Era of AI Super-Worker Productivity

AI tools let a handful of workers match whole teams’ output. Job-loss forecasts overlook the widening productivity gulf inside occupations. Spreading agentic-design skills and sharing gains can turn the windfall into broad prosperity.

According to a recent article, researchers tested a prototype AI system for inventory management in a mid-sized retail setting, comparing its performance with established approaches on both artificial and conventional data. On a Monday morning, each analyst faced a backlog of 3,200 shipping discrepancies (Nieves, 2025). According to a Danish survey cited by the OECD, workers in roles exposed to text-based generative AI saved an average of 2.8 percent of their work hours, a measurable efficiency gain from AI adoption. This story is more than a curiosity; it signals a fundamental change underway. As generative AI systems become able to chain tasks together and interact like modular functions, a small group of workers moves beyond simple efficiency improvements and reaches exponentially higher levels of productivity (Tomaz et al., 2026). The common debate over whether AI destroys jobs or creates new roles misses the point. The rise of these AI-empowered “super workers” is changing how value is created and shared within companies long before workforce numbers show any change.

From Incremental Gains to Exponential Gaps

Most current economic research treats AI as a straightforward shock to overall labor demand. These studies ask how many jobs will be lost as machines master defined sets of tasks. This perspective underestimates how unevenly the gains are distributed. Instead of spreading evenly, productivity gains are clustering around a select group that can effectively manage fleets of AI agents (Farach, 2026). According to a PwC report, productivity growth has nearly quadrupled in the industries most exposed to AI, such as financial services and software publishing, rising from 7 percent between 2018 and 2022 to 27 percent between 2018 and 2024. The workers in that select group redesign workflows, eliminate bottlenecks, and deploy AI agents that simultaneously generate code, run simulations, and draft regulatory reports (Chen et al., 2026).
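The "nearly quadrupled" description can be checked with simple arithmetic on the two PwC figures quoted above. The short sketch below uses only those two numbers; the variable names are illustrative, and the calculation says nothing about how PwC defines or measures productivity growth.

```python
# Figures quoted in the text (PwC): cumulative productivity growth in the
# industries most exposed to AI, in percent.
early_growth_pct = 7.0   # 2018 to 2022
late_growth_pct = 27.0   # 2018 to 2024

# Ratio of the two growth figures: how many times larger the later one is.
ratio = late_growth_pct / early_growth_pct

# Difference in percentage points between the two figures.
point_gain = late_growth_pct - early_growth_pct

print(f"{ratio:.2f}x, +{point_gain:.0f} points")  # prints "3.86x, +20 points"
```

A ratio of about 3.86 is what "nearly quadrupled" summarizes; the gap is 20 percentage points.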

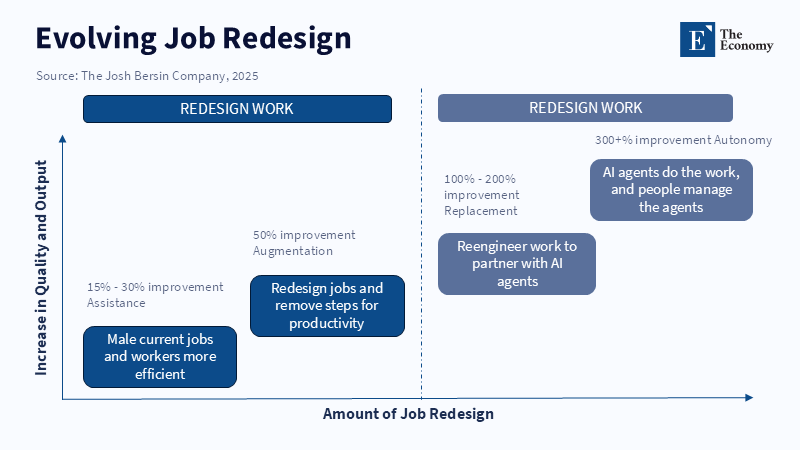

Measured gains follow a clear pattern. Initially, AI tools support human decision processes, delivering moderate improvements of around fifteen to thirty percent (Górka et al., 2025). According to the OECD, the adoption of generative AI-based tools in workplaces, such as conversational assistants for customer support agents, has led to notable productivity gains, with support agents resolving on average 14 percent more issues due to features like reduced handle time and the ability to manage multiple chats at once. For example, in pharmaceutical research, this approach has compressed molecule-screening cycles from several months down to just a few hours (Lopes et al., 2026). While standard labor forecasts interpret this as a gradual productivity rise, in reality, it represents a phase change: one chemist using synthetic data generators and docking simulations can outperform an entire lab team working in wet environments (Khiari et al., 2025).

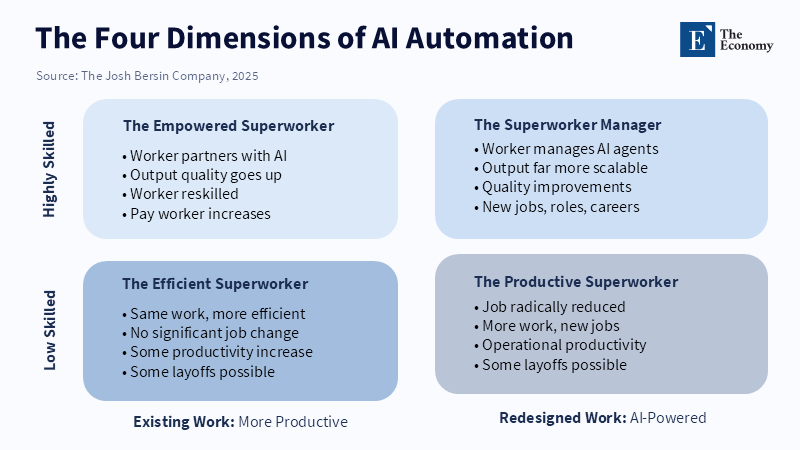

Mapping the Four Faces of Automation

Averages across entire sectors hide important differences. According to a 2023 OECD report, automation is displacing jobs in certain sectors, particularly routine and non-creative tasks in manufacturing, and workers in these roles face an increased risk of automation. According to a 2025 PwC report, the introduction of AI has made workers more productive and able to command significant wage premiums, with job numbers continuing to grow even in roles that are highly susceptible to automation.

These growing disparities are more significant than simple job statistics suggest. According to research published in the Journal of Professions and Organization, auditors view AI as a tool that can markedly increase their productivity by automating previously inefficient tasks, thereby enabling people with similar job titles to achieve very different levels of output due to their AI skills. Traditional collective bargaining, which assumes uniformity among workers within a role, struggles to address the new productivity differences introduced by AI adoption. Pay structures, bonuses, and even professional identities are breaking down as super workers capture increasing shares of value.

Blind Spots in Current Policy Models

Current policy models overlook these shifts. Standard economic models rely on averages and assume that workers in the same occupation remain interchangeable after adjusting for experience or education. Yet the variation within job categories has expanded sharply (Artificial intelligence and intra-firm pay dispersion: Evidence from China, 2025). A World Bank simulation from 2025 modeling AI adoption in six developing countries found that ignoring this variation led to a 37% underestimation of 5-year unemployment insurance costs. Job losses do not spread evenly across workers; they cluster heavily.

Macroeconomic policies also miss a new kind of market power. Traditional antitrust efforts concentrate on firm mergers, but a different issue arises when a small number of super workers control key productivity frontiers. According to Brookings, ensuring that workers benefit meaningfully from the gains of AI while preventing possible harms is a key challenge for policymakers and employers. Social support systems and wage structures may not keep pace with the rapid changes brought by AI, which could leave some workers insufficiently protected.

Education systems are also challenged by how quickly skill requirements change. Government-funded programs that teach digital skills often last around twelve weeks (Federal Cyber Defense Skilling Academy Micro-Courses, 2021). But AI models, prompting techniques, and orchestration methods shift so quickly that graduates may find their training outdated by the time they finish. To keep pace, curricula need to focus on “meta-design” abilities: breaking problems into assessable tasks, setting up evaluation methods, and managing knowledge so learners can adapt continually (The 2026 Educational Paradigm: Learning Agility, 2026).

Governing the Super-Worker Economy

Addressing the rise of AI super workers calls for a new set of policies that shift the focus from raw job numbers to the widening gaps in workers' capabilities. Open public libraries of AI agents can lower barriers for smaller firms. According to The Business Times, Singapore’s efforts to support AI adoption among small businesses include the GenAI Playbook, which is designed to make it easier for enterprises at various levels of digital maturity to integrate AI solutions.

Certification systems also need updating. Denmark has started piloting continuous micro-badging, in which workers earn renewable digital badges that show their current ability to manage AI agents, rather than one-time course completion badges. Employers receive wage subsidies if 70% or more of their project team holds active badges, encouraging broader adoption beyond just enthusiasts (H., 2024).
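The eligibility rule described above reduces to a share-of-team check. The sketch below is a minimal illustration under stated assumptions: only the 70% threshold comes from the text, while `TeamMember`, `active_badge_share`, and `qualifies_for_subsidy` are hypothetical names, and the handling of an empty team is a guess rather than anything the pilot is known to specify.

```python
from dataclasses import dataclass

# The 70% threshold is taken from the text; everything else is hypothetical.
SUBSIDY_THRESHOLD = 0.70


@dataclass
class TeamMember:
    name: str
    has_active_badge: bool  # True if the renewable badge is currently valid


def active_badge_share(team: list[TeamMember]) -> float:
    """Return the fraction of team members holding an active badge."""
    if not team:
        return 0.0  # assumption: an empty team has no badge coverage
    return sum(member.has_active_badge for member in team) / len(team)


def qualifies_for_subsidy(team: list[TeamMember],
                          threshold: float = SUBSIDY_THRESHOLD) -> bool:
    """Check whether the share of active badge holders meets the threshold."""
    return active_badge_share(team) >= threshold


# Example: a ten-person project team in which seven members hold active badges.
team = [TeamMember(f"worker-{i}", has_active_badge=(i < 7)) for i in range(10)]
print(qualifies_for_subsidy(team))
```

Because the rule counts the whole project team rather than individuals, one lapsed badge can move a team just above the line below it, which is the mechanism that pushes employers to keep skills current across the team rather than among a few enthusiasts.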

Fiscal measures can help address the concentration of surplus value. The OECD’s Economic Survey of Australia 2023 does not mention any proposal by Australia’s Productivity Commission for a digital super-profit tax aimed at highly productive individuals. According to the AI Governance International Evaluation Index (AGILE Index) 2025, as with resource royalties, super workers who benefit from shared public infrastructure, such as language models, are expected to contribute a fair share of their additional earnings back to society. The report also indicates that corporate governance practices will need to change to accommodate these developments.

Risk management historically focused on top executives, but now even a mid-level analyst equipped with custom AI agents can pose a continuity risk if they leave (Poinski & CIO, 2026). According to a 2026 article by Varun Pratap Bhardwaj, organizations should consider implementing formal Agent Behavioral Contracts to ensure the reliability and accountability of independent AI agents, which could involve redundancy plans and strict audit controls. The article also suggests that regulatory systems may need to distinguish between an individual's prompting skills and a company's intellectual property, similar to current approaches in the biotech industry regarding non-compete agreements.

Economists once framed technology shifts as simple trade-offs between saving jobs and creating jobs. That view is being upended by the rise of AI super workers. When one person with AI tools can outperform entire teams, debates over total employment become less relevant. The real challenge is to spread skills in AI design literacy, monitor how value is captured within firms, and find ways to share the gains across the workforce. Ignoring these issues risks increasing power imbalances, straining social insurance systems, and weakening trust in institutions that still rely on averages. On the other hand, acting proactively could unlock unprecedented wealth while maintaining social cohesion. The future depends on our willingness to recognize and adapt to this new reality—not by watching overall employment numbers, but by understanding how a few are reshaping the very rules of work.

References

Abraham, L. & Li, Q. (2024) Generative AI Pulse: Wage Dispersion in Early Adoption. McKinsey Global Institute.

Artificial intelligence and intra-firm pay dispersion: Evidence from China (2025) China Economic Review, 93.

Australian Productivity Commission (2025) Super-Profit Sharing in a Digital Economy. Canberra: Australian Productivity Commission.

Bersin, J. (2025) The Rise of the Super-Worker: Delivering on the Promise of AI. The Josh Bersin Company.

Brookings Institution (2024) AI, Labour-Market Power and Micro-Monopsonies. Washington DC: Brookings Institution.

Chen, W., Peng, Z., Yin, X., Ni, C., Ying, C., Xie, B. & Luo, Y. (2026) ‘SolAgent: A Specialized Multi-Agent Framework for Solidity Code Generation’. arXiv preprint.

Duarte, P. & Kim, S. (2025) ‘Agentic AI Copilots in Logistics’, Journal of Applied Operations Research, 18 (2), pp. 45–62.

Farach, A. (2026) ‘AI as Coordination-Compressing Capital: Task Reallocation, Organisational Redesign, and the Regime Fork’. arXiv preprint.

Federal Cyber Defense Skilling Academy Micro-Courses (2021) Cybersecurity and Infrastructure Security Agency.

Górka, E., Baran, D., Wojak, G., Ćwiąkała, M., Zupok, S., Starkowski, D., Reśko, D. & Okrasa, O. (2025) ‘The Impact of Artificial Intelligence on Enterprise Decision-Making Process’. arXiv preprint.

H., B. (2024) ‘Denmark’s AI Investment Has Minimal Impact on Productivity’. LinkedIn post, 15 November.

Khiari, S., Masters, M. R., Mahmoud, A. H. & Lill, M. A. (2025) ‘Synthetic Protein-Ligand Complex Generation for Deep Molecular Docking’. arXiv preprint.

Lopes, A. B., Rodrigues, C. F. & Silva, F. A. (2026) ‘From Algorithm to Medicine: AI in the Discovery and Development of New Drugs’, AI, 7 (1).

McGrath, R., Singh, V. & Patel, A. (2024) AI Adoption Cycle Times and Organisational Learning. London: Institute for the Future of Work.

Ministry of Trade and Industry Singapore (2025) OpenAgents Initiative: Progress Report Year One. Singapore: MTI.

Nieves, C. (2025) ‘Container Shipping’s Recycling Backlog Reaches 1.8 Million TEU as Industry Faces Fleet Age Crisis’. World Ports Organization.

OECD (2023) Employment Outlook 2023: Automation, Productivity and Jobs. Paris: OECD Publishing.

OECD (2025) AI Skills Index 2025: Early Evidence from Employer Data. Paris: OECD Publishing.

PwC (2023) Global Workforce Hopes and Fears Survey: AI Exposure and Wage Premiums. London: PwC.

PwC (2025) Sector Deep Dive: AI Adoption and Productivity Growth 2018-2024. London: PwC.

Poinski, M. & CIO (2026) ‘AI Agents Are Employees Now—Here’s How to Manage Them’. Forbes, 22 January.

Sloan Review (2025) ‘Agentic AI at Scale: Redefining Management for a Superhuman Workforce’, MIT Sloan Management Review, 66 (3).

The 2026 Educational Paradigm: Learning Agility (2026) Research Square working paper.

World Bank (2025) Agentic Automation and Labour-Market Transitions in Emerging Economies. Washington DC: World Bank.