Value-Maxxing: The AI Metric That Puts Judgment Back in Charge

- AI adoption is high, but value is still unclear.
- Value-Maxxing judges AI by outcomes, not usage.
- Good AI use needs context, friction, and human judgment.

The most significant figure to emerge from the AI discussion isn’t the size of the models, the number of tokens used, or the price tag of the vendor. It’s 88 percent: the share of surveyed organizations that reported using AI in at least one business function in 2025. AI has moved from novelty to routine. But routine use is not the same as routine value. More prompts, more tokens and more drafts, even more finished projects pushed through AI, can still produce flimsy ideas, weak decisions and little real productivity gain. The suffix "-maxxing" began as slang for maximizing something; applied to AI, it now exposes a serious management question. That is why Value-Maxxing should become the next big AI question: how can we focus on the lasting value of AI rather than on the level of adoption? In this paradigm, token maxxing, context maxxing and friction maxxing are not enemies; they are means to an end. Regulators and policymakers should decide when these practices support innovation and when they only create unproductive noise.

Value-Maxxing Starts Where Token Counts End

The first phase of generative AI usage was full of concrete use cases. Teams counted prompts and companies tracked usage on dashboards. Employees gradually brought generative AI into their writing, programming, research, sales, and service workflows. This phase was necessary: a tool nobody uses cannot change routines. It also addressed the risk many organizations feared most, namely slow adoption of AI. By 2024, 75 percent of knowledge workers were using generative AI at work, many with their own tools before their organizations had clear rules. The message was simple: workers wanted speed and were not waiting for guardrails. Token maxxing thus became a way to overcome fear, procrastination and entrenched workflows; it brought AI into everyday work, which was a tangible step forward.

A high token count should nonetheless not be a policy goal. It conflates input with output, rewarding the employee who generates ten poor drafts as much as the one who writes a single, accurate query. It also conceals hidden costs. AI use has shifted from a niche software activity to a sizeable budget line, with costs for computing power, data exposure, labor and validation. Value-Maxxing keeps the useful insight of token maxxing, that adoption matters, but rejects its superficial measure. Higher usage is justified only when it can be shown to improve the quality, speed, scope, or reliability of outcomes. Organizations should ask whether their employees' AI output is actually useful, rather than how to maximize it.

Context Is Useful Only When It Creates Value

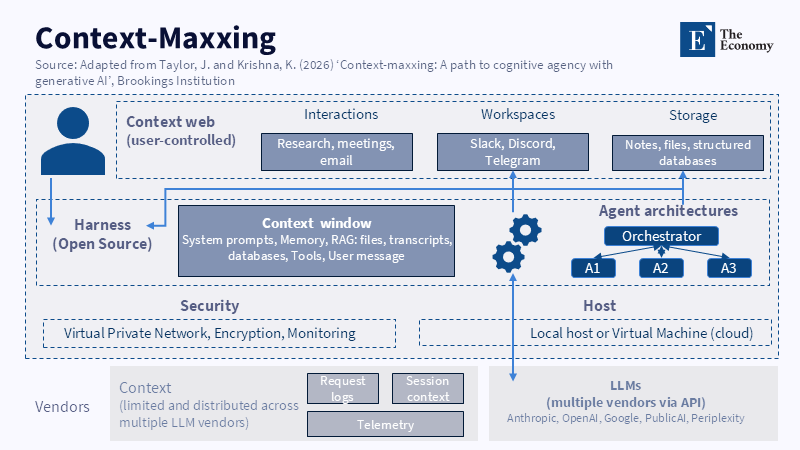

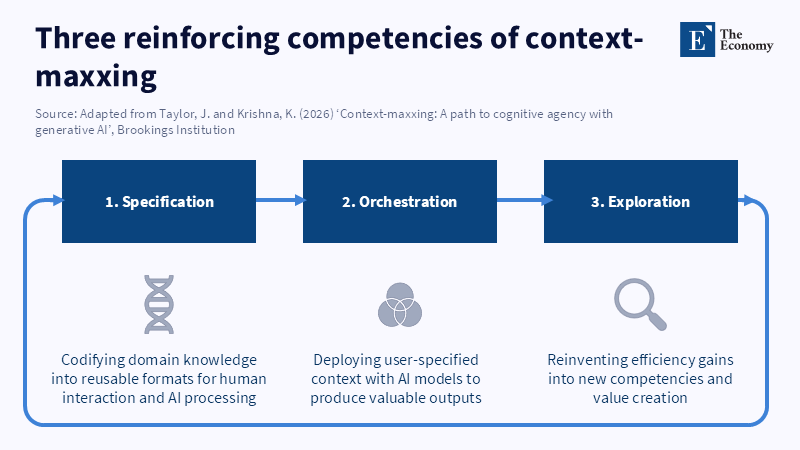

Context maxxing adds a "human in the loop" layer to the argument. Vague prompts tend to give vague answers. Sources, objectives, examples, data and style rules can turn a generic answer into a useful one. Context is not an adornment or a luxury; it is an essential step. In education, for example, it carries a teacher's memory, standards, tests and local expertise into the machine. Depending on the context it receives, the same AI can be a generator of boring lesson plans or a fair assessor of student progress.

Of course, context isn't an end in itself. It is easy to overload a prompt with context before defining the real task. Overloading can introduce framing errors, expose private data, and bring in stale evidence and pointless examples. It can also give the user the false impression that a lengthy prompt has done all the thinking. In that case, context maxxing and token maxxing become two versions of the same mistake: feeding the machine more input at the risk of getting less usable output. Value-Maxxing asks the hard question: did the extra context pay for itself? If not, the context becomes another form of waste. The test of value should be applied after the context is supplied, not assumed in advance.

Recent evidence supports this cautious stance. An enterprise study found that with AI assistance, customer service representatives resolved about 14 percent more issues per hour, with the biggest gains going to the least experienced employees. An experiment on professional writing tasks found that participants with access to ChatGPT finished faster and produced higher-quality work. A field experiment with consultants measured higher productivity, speed and quality among AI users on tasks within the AI's capabilities. But it also exposed an important pitfall: on a task outside the AI's reach, AI users were less likely to arrive at the correct answer. The lesson is that AI is not inherently good or bad; its value depends on context: on task suitability, human oversight and the delicate balance between support and dependence.

Why Friction Can Raise AI's Value

It might seem odd to talk of "friction maxxing" if AI is supposed to reduce effort and save time. But not every step should be sped up; some steps are where judgment happens. A writer who sets out an argument before consulting an AI tool is more likely to ask pertinent questions. A manager who writes a short briefing before consulting an AI is less likely to lose sight of the bottom line. A researcher who checks facts firsthand can spot errors an AI system may miss. The right kind of friction is not a throwback to the age before the computer; it is a conscious design decision to keep humans in the loop at points of high value, putting the brakes on steps that should not go too fast.

The underlying problem is that fluency breeds overconfidence: a 2025 survey of knowledge workers found that greater confidence in generative AI was associated with less critical thinking, while greater self-confidence was associated with more. That finding should matter to policymakers. If AI makes poor work look finished, speed becomes a trap. Friction maxxing is valuable when it forces people back into defining the problem, articulating criteria, evaluating outputs and owning the choice; it is destructive when it is mere ritual. An unread form is not friction; it is just a more complicated delay.

For education and training, the principle is clear: don’t ban AI as if the pre-AI workplace could be restored, and don’t flood every assignment with it. Instead, build assignments, staff workflows and public policies around deliberate points of productive friction. Require students to write down a thesis before asking for AI advice. Require staff to mark where they accept, discard or change AI-generated work. Require vendors to provide audit trails rather than speed buttons. These small measures protect human agency by forcing comparison, explanation and choice, which are the backbone of skill. Value-Maxxing needs this friction because it is not a race for speed; it is about responsibility.

Govern AI by Value, Not Volume

Policymakers should adopt Value-Maxxing as a straightforward rule. First, define value types around human tasks. Some tasks call for a particular kind of value: speed, as in summarizing routine dashboard data; trust, as in public messaging; or sound judgment in knowledge-critical decisions, as in curriculum design. Others call for quality: accuracy, as in high-stakes data validation, or novelty, as in new research ideas or strategy options. The minimum rules for AI should differ by value type: supporting or low-stakes tasks can allow broad automation with spot checks; high-stakes decisions require human oversight, careful source control and incremental deployment; and some tasks require a combination of both. Any one-size-fits-all AI policy will be either too permissive or too restrictive; Value-Maxxing is a better standard.

Second, measure impact after adoption. Usage dashboards need to be paired with impact dashboards. Has the error rate fallen? Has employee time shifted from indiscriminate drafting to valuable advice? Are students thinking more deeply, or just writing neater sentences? Are customers getting faster, more accurate responses? Is context being reused securely, or has sensitive data leaked into systems no one controls? These are practical questions, and therefore harder ones, but they treat AI as a monitored work process rather than a shiny emblem of the latest fad. The goal is not to spread AI but to find its return.

Critics might say this will slow adoption, and they would be partly right. For some uses, Value-Maxxing will slow things down, and it should. It slows processes where mistakes are costly, where seamless drafts hide gaps in thinking and where human accountability is warranted; it speeds up processes where the goal and data are well defined and where AI's track record is good and well understood. It is not anti-AI; it is about making sure AI does not become a mirage of work. Those who do it best will be those who can judge when to apply friction and keep it in place, when to add context and which outcomes deserve trust.

The 88 percent figure shows that adoption is no longer the bottleneck; the scarce resource is now judgment. The next wave will not be determined by the loudest pronouncements of companies, schools, or governments about their transformational ambitions; it will be determined by which of them can draw a clear causal link between AI adoption and the value it creates. Value-Maxxing makes that link plain by treating tokens as the fuel, context as the navigation and friction as the brakes. A value-driven system requires all three: no fuel, no trip; no navigation, no direction; no brakes, no safe arrival. The real question is whether AI is making work more valuable, more reliable and more firmly owned by human judgment.

The views expressed in this article are those of the author(s) and do not necessarily reflect the official position of The Economy or its affiliates.

References

Brynjolfsson, E., Li, D. and Raymond, L.R. (2023) Generative AI at Work. NBER Working Paper No. 31161. Cambridge, MA: National Bureau of Economic Research. DOI: 10.3386/w31161.

Dell’Acqua, F., McFowland III, E., Mollick, E.R., Lifshitz-Assaf, H., Kellogg, K.C., Rajendran, S., Krayer, L., Candelon, F. and Lakhani, K.R. (2023) Navigating the Jagged Technological Frontier: Field Experimental Evidence of the Effects of AI on Knowledge Worker Productivity and Quality. Harvard Business School Working Paper No. 24-013.

Jacobi, J. (2026) ‘Why Friction Maxxing changes product strategy in 2026’, Mind the Product, 17 February.

Lee, H-P., Sarkar, A., Tankelevitch, L., Drosos, I., Rintel, S., Banks, R. and Wilson, N. (2025) ‘The Impact of Generative AI on Critical Thinking: Self-Reported Reductions in Cognitive Effort and Confidence Effects From a Survey of Knowledge Workers’, Proceedings of the 2025 CHI Conference on Human Factors in Computing Systems, pp. 1–22. DOI: 10.1145/3706598.3713778.

Maslej, N., Fattorini, L., Perrault, R., Gil, Y., Parli, V., Kariuki, N., Capstick, E., Reuel, A., Brynjolfsson, E., Etchemendy, J., Ligett, K., Lyons, T., Manyika, J., Niebles, J.C., Shoham, Y., Wald, R., Walsh, T. and Oak, S. (2025) The AI Index 2025 Annual Report. Stanford, CA: Stanford Institute for Human-Centered Artificial Intelligence.

McKinsey & Company (2025) The State of AI: Global Survey 2025. New York: McKinsey & Company.

Merriam-Webster (2025) ‘-maxxing’, Merriam-Webster Slang Dictionary. Springfield, MA: Merriam-Webster.

Microsoft and LinkedIn (2024) 2024 Work Trend Index Annual Report: AI at Work Is Here. Now Comes the Hard Part. Redmond, WA: Microsoft.

Noy, S. and Zhang, W. (2023) ‘Experimental Evidence on the Productivity Effects of Generative Artificial Intelligence’, Science, 381(6654), pp. 187–192. DOI: 10.1126/science.adh2586.

Sanchez, C. (2026) ‘Token Maxxing vs Outcome Maxxing Is the Wrong Question’, dC/dt: The Rate of Change of Competitive Advantage, 20 April.

Singla, A., Sukharevsky, A., Hall, B., Yee, L. and Chui, M. (2025) ‘The State of AI: Global Survey 2025’, McKinsey & Company, 5 November.

Taylor, J. and Krishna, K. (2026) ‘Context-maxxing: A path to cognitive agency with generative AI’, Brookings Institution, 6 May.