AI Energy Regulation Is the Missing Rulebook for the Compute Boom

AI growth is becoming a major test for energy systems. Regulation must track power use, grid pressure, and clean-energy claims. AI can expand responsibly only if its energy costs are transparent and fairly managed.

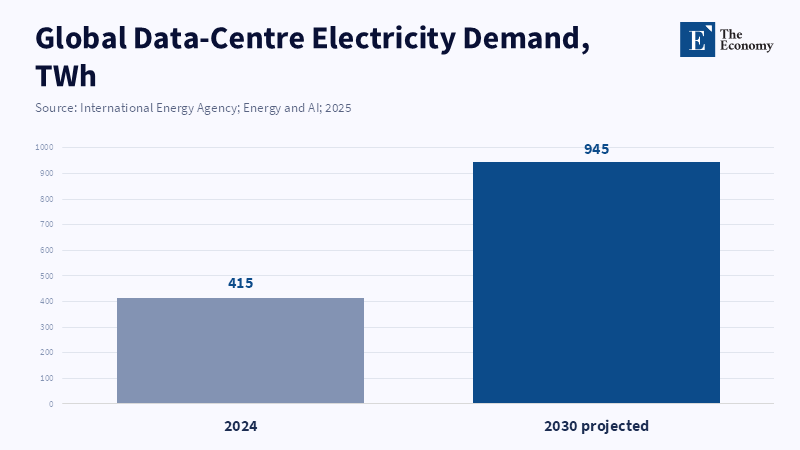

AI energy regulation can no longer be treated as a side issue, because the numbers have moved out of the footnotes of the global discussion. Data centers used about 415 TWh of electricity in 2024 and could reach 945 TWh by 2030. That is not the whole of electricity demand, but it is a new, sharp load on systems that were not planned for clusters drawing tens or hundreds of megawatts around the clock. The real question is no longer whether AI can be made clean in theory, but whether power demand can be governed before private compute demand becomes public cost. AI policy in the future is not only about model safety, but also about who controls power flow, who funds grid expansion, who sees the data, and who pays for the localized impact when the cloud hits the earth.
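To put the 2024 and 2030 figures on a common footing, the implied average annual growth rate can be worked out directly. The short Python sketch below assumes smooth compound growth between the two endpoints, which is a simplification for illustration rather than a forecast method; the function name `cagr` is introduced here and is not from any cited source.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values, assuming smooth growth."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Figures quoted in the text: about 415 TWh in 2024, possibly 945 TWh by 2030.
growth = cagr(415, 945, 2030 - 2024)
print(f"Implied average growth: {growth:.1%} per year")
```

On these figures the implied rate is a little under 15% a year, sustained for six years.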

AI energy regulation must start with physical constraints

AI energy regulation must be grounded in physical constraints. Public discourse still treats AI as immaterial, yet it depends on hardware: chips, cooling, backup power, land, water, grid connections and the people needed to operate them. That makes AI not only software but a large power consumer, both in training (an intermittent but large and intense demand) and in day-to-day inference (a steadier demand that is small per request). Policy has yet to account for either in any ledger. The initial policy answer should be simple: no large AI model should be labelled clean, safe, or strategic until grid planners can predict its power demand accurately.

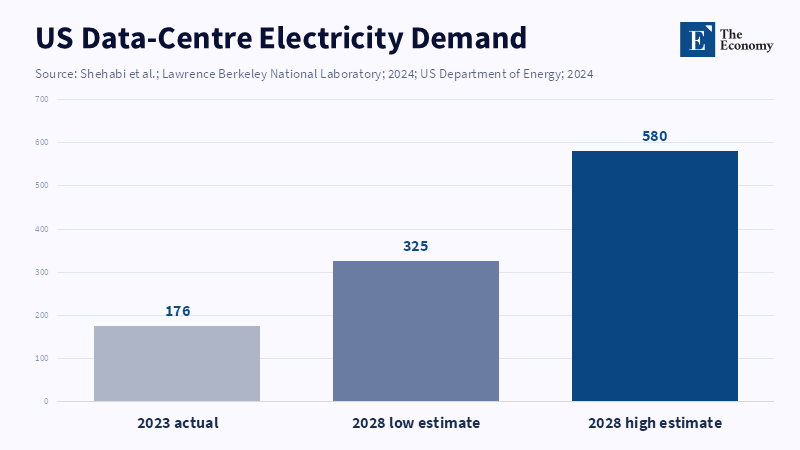

The speed of growth makes this task urgent. Global electricity demand from data centers is growing faster than overall power consumption, and the IEA expects the next growth spurt to come from accelerated servers used for AI. In the United States, data centers consumed about 176 TWh in 2023, equal to 4.4% of total US electricity use, and could reach 325–580 TWh by 2028. The numbers cannot be stated precisely, but the trend is clear. AI energy regulation needs to reflect ranges of this kind rather than false precision. Private firms should have to submit enough information for public planners to model high-, middle- and low-demand scenarios, and what is known should be distinguished from what is speculative. A facility with confirmed power purchase agreements is fundamentally different from a project still pending in a queue, and training power measured a few months ago is not the same as a rough chip-hour estimate.
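One way to keep the known separate from the speculative is to tag each load request with its status and weight it differently in each scenario. The Python sketch below is a minimal illustration of that idea. The status labels, the scenario weights, and the example facilities are all hypothetical assumptions chosen for readability; a real planner would calibrate them against interconnection data.

```python
from dataclasses import dataclass


@dataclass
class LoadRequest:
    name: str
    peak_mw: float
    status: str  # "operating", "contracted", or "queued" (hypothetical labels)


# Hypothetical share of each status assumed to materialise in each scenario.
SCENARIO_WEIGHTS = {
    "low": {"operating": 1.0, "contracted": 0.8, "queued": 0.1},
    "mid": {"operating": 1.0, "contracted": 0.9, "queued": 0.4},
    "high": {"operating": 1.0, "contracted": 1.0, "queued": 0.8},
}


def scenario_peaks(requests: list[LoadRequest]) -> dict[str, float]:
    """Expected peak demand in MW under each scenario."""
    return {
        scenario: sum(r.peak_mw * weights[r.status] for r in requests)
        for scenario, weights in SCENARIO_WEIGHTS.items()
    }


# Illustrative facilities only; none of these numbers come from the article.
requests = [
    LoadRequest("existing campus", 200, "operating"),
    LoadRequest("site with signed PPA", 300, "contracted"),
    LoadRequest("speculative queue entries", 1000, "queued"),
]

peaks = scenario_peaks(requests)
for scenario, mw in peaks.items():
    print(f"{scenario:>4}: {mw:,.0f} MW")
```

In this toy case most of the spread between the low and high scenarios comes from the queued requests, which is why disclosure of contract status matters as much as disclosure of headline megawatts.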

Demand is not the only issue. The second deficiency of the current system is a lack of visibility. Most companies offer general remarks about their sustainability efforts, but few have detailed the energy consumption profile of their AI services, which prevents apples-to-apples comparison of specific models, data centers, locations and use cases. A low per-prompt energy figure can still mean massive power consumption once the service scales globally. Similarly, efficiency may be quoted for a median prompt, while the whole fleet still needs its servers, cooling and power management. Instead of relying on vague statements of environmental virtue, AI energy regulation should demand information on training and inference power consumption, cooling and water use, greenhouse gas implications based on grid location, the substance behind clean energy claims, and peak load.
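The scaling point is simple arithmetic, sketched below in Python. Every input is a hypothetical placeholder rather than a measured value for any real service: the per-prompt figure, the daily prompt volume, and the facility overhead multiplier (written here as `pue`, for power usage effectiveness) are assumptions chosen only to show how a small unit figure compounds.

```python
def annual_energy_gwh(wh_per_prompt: float, prompts_per_day: float, pue: float = 1.0) -> float:
    """Annual facility energy in GWh for a service answering prompts all year.

    pue scales IT energy up to include cooling and power-delivery overhead.
    """
    wh_per_year = wh_per_prompt * prompts_per_day * 365 * pue
    return wh_per_year / 1e9  # 1 GWh = 1e9 Wh


# Hypothetical inputs: 0.3 Wh per prompt, one billion prompts a day, PUE of 1.2.
it_only = annual_energy_gwh(0.3, 1e9)
with_overhead = annual_energy_gwh(0.3, 1e9, pue=1.2)
print(f"IT load only:   {it_only:.1f} GWh/year")
print(f"With overhead:  {with_overhead:.1f} GWh/year")
```

Under these assumptions a fraction of a watt-hour per prompt becomes more than a hundred gigawatt-hours a year, which is why a per-prompt number alone says little without volume and overhead alongside it.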

But there are signals. Under the EU AI Act, providers of general-purpose AI are required to keep known or estimated energy consumption information. The revised EU Energy Efficiency Directive requires large data centers to report systematically on their power and water use. The OECD AI Principles have been updated to include sustainability as a component of trustworthy AI, and the G7 Hiroshima reporting framework asks large AI companies to identify and describe their practices for managing environmental risks. For now, these signals are weak and, in several cases, voluntary. What needs to emerge is a strong public framework with inspection rights, model-level reporting where appropriate, and facility-level reporting for large data centers.

The grid should not subsidise private speed

The power system should not be asked to pay for private haste. Another policy deficiency relates to cost. The constraint is simple but severe: data centers can be approved and built faster than new transmission lines, substations, and generation capacity. That gap creates a mismatch between when companies are willing to bear costs and when power can actually be delivered to their sites. If the grid is pushed to speed up deployment to close it, the result is an implicit subsidy for private energy use at public expense. AI energy regulation must place the cost of connection and grid upgrades squarely on large data center developers. It should also encourage power purchase agreements and, where appropriate, demand response capabilities, and require local consent informed by anticipated power use, water use, local tax revenue, jobs created, and the effect on consumer power costs.

This is not an argument against building the technology; it is an argument against letting speed create a subsidy. AI data centers can be a resource for the grid when they are run as flexible loads. Certain training runs can be deferred to other hours, inference can be shifted from locations at peak demand to locations with surplus supply, and there is empirical evidence that even a small curtailment during peak times on just a few days a year can free up substantial grid capacity. Flexibility is not a cure-all, but the potential should not be wasted. It should become a binding part of the permit process rather than a voluntary gesture that invites greenwashing.
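The logic behind the curtailment claim can be shown with a toy calculation. The Python sketch below asks how large a constant new load fits under a fixed system capacity, first if it must run in every hour and then if it may switch off entirely during a limited number of the highest-load hours. The load profile, the capacity, and the all-or-nothing curtailment rule are hypothetical simplifications, not a model of any real grid.

```python
def max_flat_load_mw(hourly_system_load_mw: list[float], capacity_mw: float,
                     curtailable_hours: int = 0) -> float:
    """Largest constant load that fits under capacity if it can switch off
    completely during the `curtailable_hours` highest-load hours."""
    if not 0 <= curtailable_hours < len(hourly_system_load_mw):
        raise ValueError("curtailable_hours must be between 0 and the number of hours minus one")
    ranked = sorted(hourly_system_load_mw, reverse=True)
    # After skipping the curtailed hours, the next-highest hour is the binding one.
    binding_load = ranked[curtailable_hours]
    return max(0.0, capacity_mw - binding_load)


# Hypothetical year: 8,740 ordinary hours at 700 MW and 20 peak hours at 950 MW.
system_load = [700.0] * 8740 + [950.0] * 20
capacity = 1000.0

firm = max_flat_load_mw(system_load, capacity)
flexible = max_flat_load_mw(system_load, capacity, curtailable_hours=20)
print(f"Always-on load that fits:            {firm:.0f} MW")
print(f"Load that fits if it skips 20 hours: {flexible:.0f} MW")
```

In this stylised profile, agreeing to stand down for 20 hours out of 8,760 raises the load the existing system can host from 50 MW to 300 MW. Real profiles are less clean, but the shape of the trade-off is the same.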

Clean power claims need stricter tests

Clean power claims need real substance. A further deficiency is the gap between renewable procurement and actual, real-time electricity use. Many operators buy offsets that match their consumption only on an annual basis, so total renewable purchases equal total consumption over the year while the facility may still draw heavily on fossil generation at peak times. Regulation should require hourly matching where technically possible, full transparency on the grid origin of the power drawn, and evidence that a PPA adds new supply rather than reallocating supply that already exists.
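The difference between annual and hourly matching is easy to see in a small example. The Python sketch below compares the two scores for a single day with a flat load and a solar-shaped clean supply. The profile is hypothetical, and the two function names are introduced here for illustration; they are not drawn from any certification standard.

```python
def volumetric_match(load_mwh: list[float], clean_mwh: list[float]) -> float:
    """Share of total consumption covered when only period totals are compared."""
    return min(1.0, sum(clean_mwh) / sum(load_mwh))


def hourly_match(load_mwh: list[float], clean_mwh: list[float]) -> float:
    """Share of consumption covered when clean supply must arrive in the same hour."""
    matched = sum(min(load, clean) for load, clean in zip(load_mwh, clean_mwh))
    return matched / sum(load_mwh)


# Hypothetical day: a flat 100 MWh per hour load, and a solar contract that
# delivers 300 MWh per hour for eight daytime hours and nothing otherwise.
load = [100.0] * 24
clean = [0.0] * 8 + [300.0] * 8 + [0.0] * 8

total_score = volumetric_match(load, clean)
hourly_score = hourly_match(load, clean)
print(f"Matched on totals: {total_score:.0%}")
print(f"Matched by hour:   {hourly_score:.0%}")
```

The same contract scores 100% when totals are compared and about 33% when each hour must be covered, because two thirds of the load falls in hours when the contracted supply produces nothing.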

The same test should apply to nuclear, gas and coal. Nuclear power provides predictable, low-carbon energy, but new plants take significant time and capital to build. Gas plants are faster to build but risk locking in emissions unless they are strictly regulated, including for capture and offsetting. Coal could return indirectly if demand rises faster than clean supply and facilities fall back on whatever the grid has left. Rather than pick a favorite technology, policy should require each project to demonstrate its actual effect on the system: whether it strains resources, how it affects consumer costs, whether it impedes residential electrification, how it affects grid reliability, and whether it adds to or reduces local emissions.

AI energy regulation should reward useful intelligence

The real test is not whether AI uses electricity. Every major industrial shift does. The test is whose balance sheets carry the costs. The real question is whether the public can account for those costs before they are locked into grid infrastructure, electricity bills, land consumption, water use, and postponed climate goals. A rise from 415 TWh in 2024 to 945 TWh in 2030 does not warrant halting every AI system. It signals an urgent need to decouple productive intelligence from uncontrolled infrastructure sprawl. AI systems that directly improve peak load management, grid planning, waste reduction, and clean-energy adoption should be rewarded. AI systems that generate substantial loads without demonstrable public benefit should be subject to increased disclosure and full cost recovery. AI energy regulation is not Luddism. It is simply the rules of the road for scaling private compute without turning it into public liabilities.

The views expressed in this article are those of the author(s) and do not necessarily reflect the official position of The Economy or its affiliates.

References

Cozzi, L., Spencer, T. and Singh, S. (2025) Energy and AI. Paris: International Energy Agency.

European Commission (2025) Energy Performance of Data Centres. Brussels: European Commission.

European Parliament and Council of the European Union (2023) Directive (EU) 2023/1791 on Energy Efficiency and Amending Regulation (EU) 2023/955. Brussels: Official Journal of the European Union.

European Parliament and Council of the European Union (2024) Regulation (EU) 2024/1689 Laying Down Harmonised Rules on Artificial Intelligence. Brussels: Official Journal of the European Union.

OECD (2024) Recommendation of the Council on Artificial Intelligence. Paris: OECD.

OECD (2025) OECD Launches Global Framework to Monitor Application of G7 Hiroshima AI Code of Conduct. Paris: OECD.

Shehabi, A., Smith, S.J., Hubbard, A., Newkirk, A., Lei, N., Siddik, M.A.B., Holecek, B., Koomey, J., Masanet, E. and Sartor, D. (2024) 2024 United States Data Center Energy Usage Report. Berkeley, CA: Lawrence Berkeley National Laboratory.

Tanner, B., Belle, D., Kerry, C.F., Kyosovska, N., Renda, A., Tabassi, E. and Wyckoff, A.W. (2026) ‘Global energy demands within the AI regulatory landscape’, Brookings Institution, 10 April.