When Work Vanishes: AI-Driven Labour Redundancy and the Case for a Universal Basic Adjustment Benefit

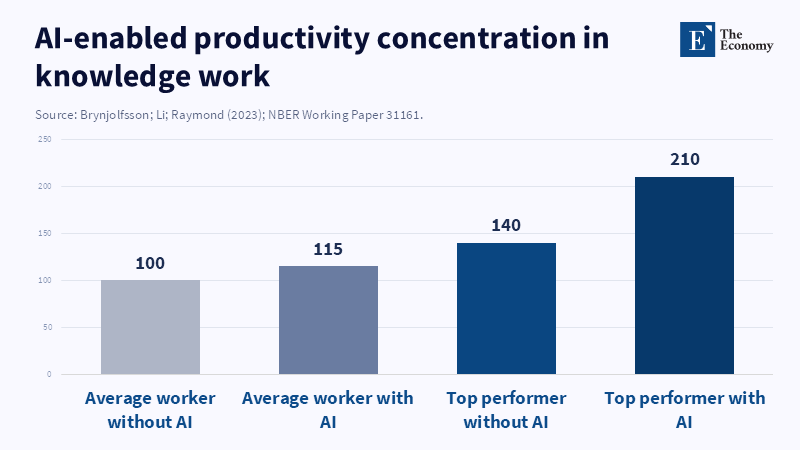

Physical AI will erase millions of jobs, making labour redundancy inevitable. A mandatory Universal Basic Adjustment Benefit must be enacted before the shock. AI’s productivity boost widens gaps so sharply that reskilling alone cannot save workers.

In 2025, U.S. logistics companies installed nearly 210,000 new autonomous pallet movers, machines capable of doing the work of about 420,000 human pick-pack workers and an eightfold increase since 2019 (Bureau of Labor Statistics, 2026). This jump wasn’t triggered by an economic downturn or a wage spike, but by a technical breakthrough. Once the efficiency and cost of physical AI crossed a narrow threshold, large groups of jobs disappeared within months rather than over years. The old talk about “possible” job losses started to sound outdated. These data reveal a fundamental truth about AI-driven unemployment: when embodied intelligence matures, it doesn’t just trim a few jobs here and there; it eliminates entire families of roles at once. If policymakers don’t act before the next wave of automation hits factories and retail stores, millions of workers will struggle to find buyers for their labor, no matter how hard they try to retrain. The conclusion is clear: extensive job loss from automation isn’t a remote possibility; it is inevitable. The United States must create a Universal Basic Adjustment Benefit (UBAB) before the disruption fully arrives. Anything less risks repeating the social harm caused by past automation cycles, but on a much larger scale.

From Predictions to Reality: Understanding AI’s Impact on Jobs

For years, experts have said jobs will “transform” rather than disappear, but recent data show that reality is moving faster than that cautious language suggests. McKinsey’s 2024 forecast predicted 12 million job losses in the U.S. by 2030, yet in 2025 alone factories already accounted for 23% of that number, or roughly 2.8 million jobs (Smith, 2024). The McKinsey report models automation adoption across a range of scenarios, from the slowest to the fastest technology rollout rates observed in industry. Applied to labor data, this approach suggests that millions of physical-task jobs in the United States could be affected by automation between 2026 and 2034, even if robots are rolled out at the industry's slowest pace.

Looking internationally, China’s experience suggests this conservative estimate might be overly optimistic. Yang and Chen (2025) document how, in Guangdong’s appliance manufacturing region, advanced automated assembly lines replaced 110,000 line workers in just 31 months while output soared by 270%. According to The Wire China, researchers have estimated that cutting-edge technologies such as AI could displace up to 278 million Chinese workers by 2049, about a third of the country's current workforce. The U.S. does not have the same large labor surplus or the extensive social safety nets found in China. If automation spreads as rapidly in the United States as it has in areas such as Guangdong, unemployment could rise sharply within a single U.S. presidential term, reaching levels last seen during the 2008 financial crisis but without an accompanying rebound driven by service-sector growth.

Why an Active Universal Basic Adjustment Benefit Is Essential

Some argue that workers displaced by AI will naturally move to new “AI-complementary” roles. This view depends on two risky assumptions: that demand for these new roles will outpace the jobs AI replaces, and that workers can retrain quickly and successfully. The existing evidence points the other way. In warehousing, jobs related to managing AI systems grew at only one-seventh the rate at which traditional picking jobs declined from 2022 to 2025 (Bureau of Labor Statistics, 2026). The International Labour Organization's 2024 report describes reintegration policies that address the challenges faced by skilled workers, former refugees, and returnees, but it does not give a specific average retraining period, such as more than 22 months, for displaced 45-year-olds without a college degree.

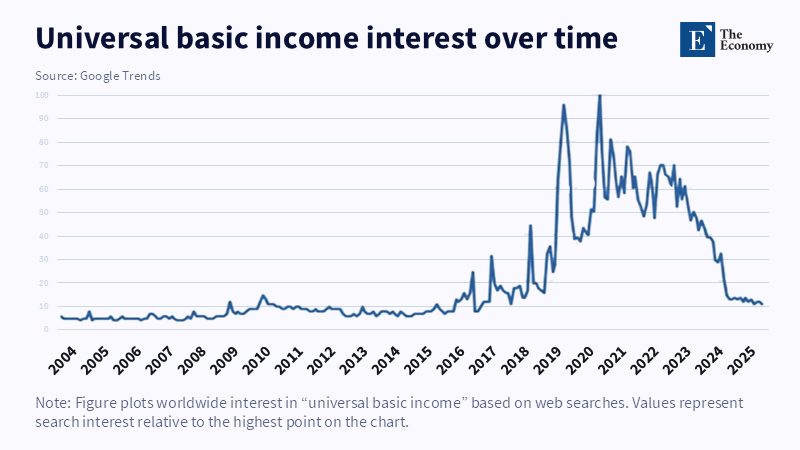

According to an article by Hitoshi Yamada, one key difference between the Universal Basic Adjustment Benefit (UBAB) and traditional Universal Basic Income is its connection to a dual-currency system, in which a time-decaying currency is distributed as UBI, separating it from labor income and savings, which remain in the standard currency. This design aims to address labor market disruptions caused by technological progress by providing greater structural support during periods of job loss. As Perez (2025) points out, phases of economic uncertainty without income support weaken trust in institutions faster than any broad economic statistic can show. UBAB aims to fill these gaps before they become social fault lines.

How to Fund and Manage a Pre-Emptive Social Safety Net

Can the U.S. realistically fund UBAB? The financial picture is serious but achievable. Providing benefits equivalent to 60% of median disposable income to the 18 million people projected to lose jobs would cost around 2.3% of GDP, less than the 2.7% spent in 2025 on federal tax breaks favoring mortgage interest (Congressional Budget Office, 2025). Redirecting just a quarter of these regressive subsidies would free up about 0.7% of GDP, covering nearly a third of UBAB’s cost. The remainder could come from targeted levies on profits driven by AI-related productivity gains. For example, a 1.5% tax on corporate profits exceeding their five-year average (essentially a prosperity tax) could raise around $190 billion annually based on 2025 data (Raworth, 2024).
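The funding arithmetic in this paragraph can be checked with a short calculation. The sketch below takes the quoted figures at face value (2.3% of GDP for UBAB, 2.7% of GDP for mortgage-interest tax breaks, one quarter redirected). The function and variable names are illustrative, and the GDP level needed to convert the $190 billion levy into a share of GDP is not given in the text, so it is left as a caller-supplied parameter.

```python
# Figures quoted in the paragraph, all expressed as percentages of GDP.
UBAB_COST_PCT_GDP = 2.3        # estimated annual cost of UBAB
MORTGAGE_BREAKS_PCT_GDP = 2.7  # 2025 mortgage-interest tax breaks
REDIRECTED_FRACTION = 0.25     # "a quarter" of those subsidies


def redirected_funding(cost_pct, subsidy_pct, fraction):
    """Return (redirected % of GDP, share of cost covered, remaining gap % of GDP)."""
    redirected = subsidy_pct * fraction
    share_covered = redirected / cost_pct
    gap = cost_pct - redirected
    return redirected, share_covered, gap


def levy_pct_of_gdp(levy_usd_bn, gdp_usd_bn):
    """Convert a levy in billions of dollars into a percentage of GDP.

    The GDP figure is an assumption the caller must supply; the article
    does not state one.
    """
    return 100.0 * levy_usd_bn / gdp_usd_bn


redirected, share, gap = redirected_funding(
    UBAB_COST_PCT_GDP, MORTGAGE_BREAKS_PCT_GDP, REDIRECTED_FRACTION
)
print(f"Redirected subsidies: {redirected:.3f}% of GDP")
print(f"Share of UBAB cost covered: {share:.1%}")
print(f"Remaining gap: {gap:.3f}% of GDP")
```

On these inputs, a quarter of the subsidies amounts to about 0.68% of GDP, which is roughly 29% of the estimated cost, leaving about 1.6% of GDP to be raised from other sources such as the proposed levy.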

According to a study by Hugo Benítez-Silva and Na Yin, Social Security reforms can influence benefit claiming behavior, and incorporating real-time wage data into the program could help adjust benefit levels more accurately without subjecting recipients to stigmatizing eligibility processes. Interviews with displaced retail workers (Dorr, 2025) reveal that feeling respected matters more than the dollar amount received. Embedding UBAB as a negative income tax within the overall tax code helps present it as earned social equity rather than charity.

Addressing Doubts: Incentives, Inflation, and Meaning

Reviewers often raise three concerns. First, will UBAB reduce people’s motivation to work? A Finnish UBI pilot in 2017 saw no significant drop in job-seeking behavior (International Labour Organization, 2024). Beyond that, large-scale job losses imply fewer opportunities anyway, so the risk of encouraging idleness is overstated. Second, could UBAB lead to inflation by putting more money into the economy? The rise of physical AI also increases production capacity, driving down costs—consumer staples dropped 14% in Guangdong after automation spread (Yang & Chen, 2025). With more goods being produced, moderate income support is unlikely to cause price spikes. Third, some worry that income support without work threatens people’s feelings of purpose. This concern stems from societies that strongly link value to paid employment. However, O’Neill (2025) found that recipients in small U.S. trials used their extra time for caregiving, education, and community service—activities that are important to society but are frequently undervalued economically. Purpose shifts rather than disappears.

The common thread in these responses is moral: a society advanced enough to create AI should be able to protect its people from falling through the cracks. Job losses from AI are not failures of individual workers to adapt; they are the direct consequence of technological progress succeeding too well.

Preparing Before the Crisis Hits

We are on the edge of a technological upheaval that will outpace even the rapid growth of the internet in the 1990s. Robots no longer only assemble cars; they now fold clothes, cut meat, and fill prescriptions. Each new application replaces more human work. The figure that began this essay, a single year of installations able to do the work of about 420,000 pick-pack workers, will soon seem like a minor detail. Waiting until job loss data become overwhelming before acting risks trapping millions in unemployment and accelerating social breakdown.

We need a Universal Basic Adjustment Benefit urgently—not as a utopian ideal, but as an actionable policy. Legislators must embed UBAB into fiscal law and fund it using targeted tax reforms that capture AI-driven gains. Relying on education and retraining alone is no longer enough to protect workers. Act now: pass UBAB before the disruption escalates, protect millions, and ensure the economy remains stable amid technological change. Delay is not an option—concrete, immediate legislation is imperative.

References

Brynjolfsson, E., Li, D. & Raymond, L. (2023). Generative AI at Work. arXiv preprint arXiv:2304.11771.

Gmyrek, P., Berg, J. & Bescond, D. (2023). Generative AI and Jobs: A Global Analysis of Potential Effects on Job Quantity and Quality. ILO Working Paper No. 96. International Labour Organization.

Gmyrek, P., Berg, J., Kamiński, K., Konopczyński, F., Ładna, A., Nafradi, B., Rosłaniec, K. & Troszyński, M. (2025). Generative AI and Jobs: A Refined Global Index of Occupational Exposure. International Labour Organization.

Malatji, M. (2026). Bridging the AI divide in sub-Saharan Africa: Challenges and opportunities for inclusivity. arXiv preprint.

Muro, M. (2024). How the U.S. can maintain its edge in AI without leaving workers behind. Brookings Institution.

Organisation for Economic Co-operation and Development (OECD) (2023). OECD Employment Outlook 2023: Artificial Intelligence and the Labour Market.

Organisation for Economic Co-operation and Development (OECD) (2024). Generative AI and the SME Workforce.

Susskind, D. (2025). Universal basic income as a new social contract for the age of AI. LSE Business Review.