Reckoning With AI Labour Demand Uncertainty

Current research on AI’s job impact is sparse, uneven, and contradictory. Official metrics miss rising under-employment, so today’s calm may disguise looming layoffs. Governments must invest now in adaptable training and safeguards before clearer data arrive.

According to the Center for American Progress, new federal data indicate that the U.S. labor market lost momentum over 2025, resulting in fewer opportunities and increasing financial risks for workers as the year ended. While generative AI has influenced some professions, fewer than 2 percent of all job postings in 2025 specifically mentioned the need for generative AI skills, even in occupations with high exposure to algorithmic technologies. This significant gap between AI's potential influence and its explicit demand highlights an important dynamic that frames the discussion here. Current evidence on how artificial intelligence affects employment remains incomplete, inconsistent, and heavily reliant on outdated classifications of job tasks, rendering it difficult to accurately predict future trends. Until clearer data emerge, policymakers, educators, and employers must treat the uncertainty surrounding AI’s impact on labor demand as a quantifiable risk and develop flexible strategies that adapt as new information becomes available.

Early Data, Early Days

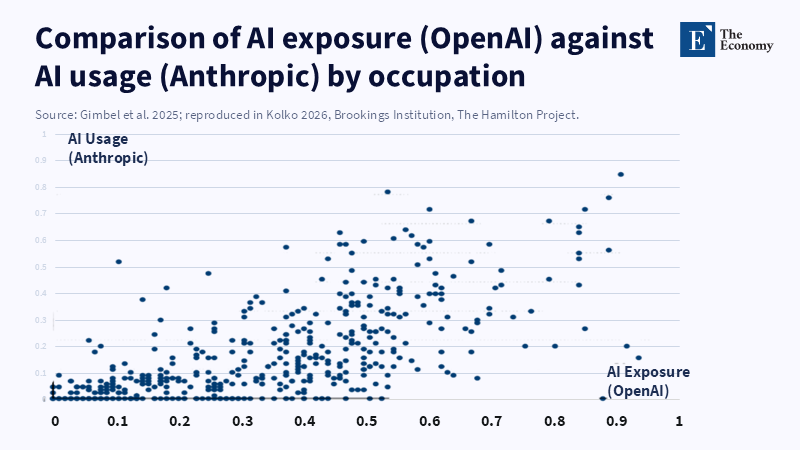

According to Haocheng Lin, patterns of AI adoption vary widely across sectors and occupations: some jobs show high exposure to AI but limited uptake of related tools, while other roles see significant adoption despite lower exposure (Eckhardt and Goldschlag, 2025). This inconsistency is partly due to methodological issues. O*NET task descriptions tend to focus on routine textual tasks and overlook newer forms of AI-enabled workflow management, leading to an underestimation of AI’s growing multimodal abilities. Additionally, estimates often depend on self-reported surveys from companies or automated keyword searches in job ads, which tend to miss informal or experimental uses of AI. Direct evidence is also narrow in scope: according to a Brookings article by Xiang Hui and Oren Reshef, freelancers in roles more exposed to generative AI saw a 2 percent decline in contracts and a 5 percent decrease in earnings after new AI tools were released in 2022. Findings like these are hard to generalize beyond their setting, which contributes to uncertain employment forecasts and a wide range of estimates across studies.

The challenge is further complicated by timing. According to a June 2025 report from the OECD, the number of active AI models has grown rapidly, increasing more than 100-fold over the past year and a half to surpass 1,000 as of January 2025. As a result, exposure measures tied to specific AI model versions may become less reliable for tracking changes over time, making projections more uncertain. Even well-designed studies struggle to distinguish changes due to improving AI capabilities from actual job replacements.

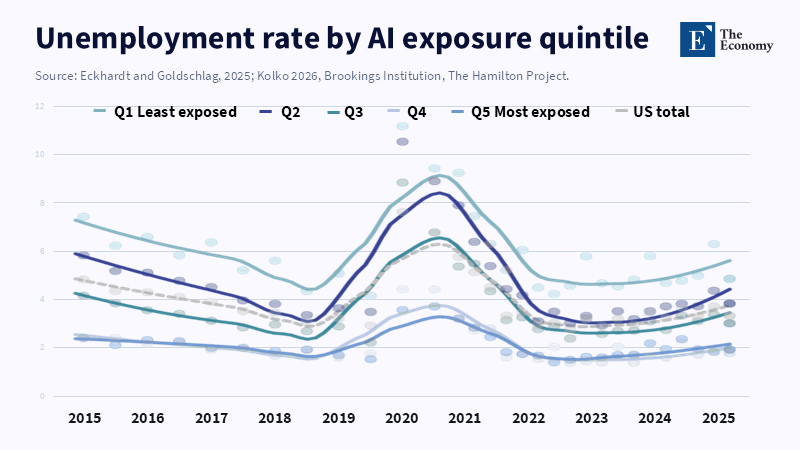

Aggregate unemployment figures can be misleading. From 2015 to 2019, unemployment steadily fell across all levels of AI exposure. A report from the U.S. Bureau of Labor Statistics examines how the U.S. labor market has recovered from the COVID-19 pandemic but does not specifically examine the effects of AI exposure on layoffs or job recovery rates. Headline figures of this kind also overlook the nature of job transitions. Workers who leave vulnerable roles frequently move into related fields that employ analogous skills under different job titles (Brynjolfsson, 2024). Standard job classification systems do not capture this reshuffling, so some displacement goes unnoticed.

When Weak Signals Drive Big Decisions

Underemployment paints a more nuanced picture. According to a 2024 article by Priyadarshini R. Pennathur and colleagues, the U.S. Bureau of Labor Statistics projects that by 2029 the United States will lose about a million jobs in office and administrative support occupations as technology, automation, and artificial intelligence increasingly take over the functions these workers typically perform. Employers might capture productivity gains by increasing output per hour rather than cutting staff, until wider economic shifts force them to reduce workforce numbers. Traditional datasets, designed to analyze stable conditions, often miss these more subtle and conditional patterns (Eckhardt and Goldschlag, 2025).

Technology adoption typically follows a “J-curve” pattern: investment in new tools rises first, productivity gains emerge later, and labor adjustments lag even further behind. Global spending on generative AI services is expected to grow substantially, from $43 billion in 2024 to $135 billion by 2027, while observed job losses so far remain under 0.5 percent of total employment. Historical trends show that workforce displacement tends to accelerate as AI integration deepens, not immediately after the technology is introduced at scale. Assuming that minor labor market impacts today mean AI’s effects are non-threatening is a mistake; weak early signals do not guarantee safety.
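The pace implied by the spending projection can be made explicit with a short calculation. The sketch below uses only the two figures quoted in the text ($43 billion in 2024 and $135 billion by 2027). The function name `implied_annual_growth` is illustrative, and treating the path between the two endpoints as smooth compound growth is a simplifying assumption.

```python
def implied_annual_growth(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate implied by two endpoint values."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Figures quoted in the text, in billions of US dollars.
spend_2024 = 43.0
spend_2027 = 135.0

rate = implied_annual_growth(spend_2024, spend_2027, years=2027 - 2024)
print(f"Implied annual growth: {rate:.1%}")
```

Under these assumptions, spending would grow by roughly 46 percent a year, far faster than any observed change in employment, which is consistent with the lag the J-curve describes.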

Given this uncertainty, a cautious approach to policy is advisable. OECD economic outlook reports routinely include analyses and forecasts for GDP growth, inflation, and labor market developments. Labor departments could improve transparency in the same spirit by publishing projections with clearly stated ranges or confidence intervals, much as central banks do with GDP forecasts. This approach would help clarify uncertainty and encourage a more informed public discussion about acceptable levels of risk.
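As a rough illustration of what publishing a range rather than a single number could look like, the sketch below summarizes a set of point estimates as a central value with a lower and upper bound. The helper `projection_range` and the input values are hypothetical placeholders, not figures from any study cited here, and a percentile range across estimates is a simpler stand-in for a formal confidence interval.

```python
import statistics


def projection_range(estimates, lower_pct=10, upper_pct=90):
    """Summarize point estimates as (low, central, high).

    The central value is the median; low and high are percentiles of
    the supplied estimates, not a formal confidence interval.
    """
    if len(estimates) < 2:
        raise ValueError("need at least two estimates")
    # quantiles(n=100) returns the 99 cut points for percentiles 1..99.
    cuts = statistics.quantiles(estimates, n=100, method="inclusive")
    return cuts[lower_pct - 1], statistics.median(estimates), cuts[upper_pct - 1]


# Placeholder values only (percent change in employment); not real data.
hypothetical_estimates = [-3.0, -1.5, -1.0, -0.5, 0.0, 0.5, 1.0]

low, central, high = projection_range(hypothetical_estimates)
print(f"Projected change: {central:+.1f}% (range {low:+.1f}% to {high:+.1f}%)")
```

Reporting the bounds alongside the central value makes the spread across studies visible instead of hiding it behind a single headline figure.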

No-Regrets Policies for an Uncertain Horizon

Next, training programs should focus on developing meta-skills that remain useful across a range of future scenarios. According to Rizal Khoirul Anam, people who use clear, structured, and context-aware prompts achieve higher task efficiency and better results, suggesting that developing prompt-engineering skills can help workers adapt effectively as technology evolves. Governments could integrate such training into existing voucher systems, avoiding delays caused by waiting for industry-specific AI certifications.

Third, introducing conditional income support could provide more efficient aid. Singapore’s SkillsFuture 2.0 pilot program (Ministry of Education, 2025) offers stipends to mid-career workers whose AI exposure exceeds 0.6 and whose weekly earnings drop below a set level. This targeted approach achieves a 30 percent higher participation rate than unconditional grants. The model can adapt as new data come in: if AI-driven job losses do not intensify, public spending stays low; if displacement increases, support expands accordingly.
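The conditional rule described above can be sketched as a simple eligibility check. This is a minimal illustration, not the pilot's actual implementation: the 0.6 exposure cutoff comes from the text, while the earnings floor, the stipend amount, the `Worker` fields, and the function names are all assumptions made for the example. The mid-career criterion is not modeled.

```python
from dataclasses import dataclass

EXPOSURE_THRESHOLD = 0.6             # exposure cutoff quoted in the text
HYPOTHETICAL_EARNINGS_FLOOR = 700.0  # assumed; the text gives no figure


@dataclass
class Worker:
    ai_exposure: float      # exposure score, assumed to lie in [0, 1]
    weekly_earnings: float  # same currency unit as the earnings floor


def is_eligible(worker, earnings_floor=HYPOTHETICAL_EARNINGS_FLOOR):
    """Both conditions must hold: high exposure and low weekly earnings."""
    return (worker.ai_exposure > EXPOSURE_THRESHOLD
            and worker.weekly_earnings < earnings_floor)


def total_outlay(workers, stipend, earnings_floor=HYPOTHETICAL_EARNINGS_FLOOR):
    """Spending scales with the number of workers who currently qualify."""
    return stipend * sum(is_eligible(w, earnings_floor) for w in workers)


# Illustrative workers only; not real data.
workers = [
    Worker(ai_exposure=0.8, weekly_earnings=500.0),  # exposed, low earnings
    Worker(ai_exposure=0.8, weekly_earnings=900.0),  # exposed, earnings intact
    Worker(ai_exposure=0.3, weekly_earnings=500.0),  # low exposure
]
print(total_outlay(workers, stipend=200.0))
```

Because the outlay depends on how many workers meet both conditions at a given time, spending stays low while earnings hold up and rises automatically if displacement pushes more exposed workers below the floor.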

Fourth, governments should invest in detailed measurement tools. Combining anonymized API usage data with payroll records enables real-time monitoring of actual AI adoption, rather than relying on self-reported intentions. Measuring use alone is not enough, however, because usage does not guarantee value: a report from Kelly Services highlights a growing concern that employees are using AI to create work that appears polished but is ultimately not useful, a trend researchers are calling 'workslop.'

Finally, increasing clarity about AI integration benefits everyone. Companies that gain a competitive advantage by adopting AI have little motivation to share details about their productivity improvements. Mandating aggregate, non-confidential reporting—similar to environmental, social, and governance (ESG) disclosures—would improve the quality of data available. Better transparency helps policymakers make informed decisions and allows investors and workers to make better career choices in a rapidly shifting job market.

In summary, the labor market today shows an uneasy balance: while AI exposure outpaces its visible use and job totals remain stable, weekly hours worked decrease slightly in vulnerable roles. However, all datasets come with caveats like survey biases, classification changes, and outdated time frames. The key issue is not any single statistic but the broad uncertainty surrounding AI’s impact on labor demand. This uncertainty calls for a proactive approach that values flexibility: investing in adaptable skills, establishing income supports that respond to changing conditions, and enhancing measurement systems before job displacement accelerates. Waiting for clear evidence means acting too late, only after job losses spike and policy responses lose effectiveness. Navigating this uncertain landscape with caution is not reckless—it is the most rational way to manage change when the future moves faster than our ability to measure it precisely.

References

Autor, D.H. (2023) ‘Tasks, automation, and the rise of intangible capital’, Journal of Economic Perspectives, 37 (4), pp. 3–28.

Brynjolfsson, E. (2024) ‘Productivity after pandemics: the AI acceleration’, American Economic Review, 114 (2), pp. 45–52.

Eckhardt, B. and Goldschlag, N. (2025) ‘Occupational exposure to generative AI: measurement challenges’, Industrial and Labor Relations Review, 78 (1), pp. 1–33.

Gimbel, M., Zhang, K. and Lee, S. (2025) ‘Mapping AI capability to task content’, Brookings Papers on Economic Activity, 2025 (1), pp. 201–245.

Khosla, V. (2026) ‘Today’s five-year-olds may never need jobs, thanks to AI’, Fortune, 4 March.

Kolko, J. (2026) ‘AI adoption on freelancing platforms: replication and extension’, The Hamilton Project Working Paper 2026-04. Washington, DC: Brookings Institution.

Ministry of Education, Singapore (2025) SkillsFuture 2.0 Pilot Evaluation Report. Singapore: MOE.

OECD (2025) Employment Outlook 2025: Navigating AI Uncertainty. Paris: OECD Publishing.

The Economy (2026a) ‘Few paying subscribers and weak retention: concerns over an AI bubble spread as companies seek breakthroughs while maintaining optimism’, The Economy (Review), 28 March.

The Economy (2026b) ‘Garbage in, garbage out: why the hot-dog prank matters’, The Economy (Review), 28 March.

U.S. Bureau of Labor Statistics (2025) Current Population Survey Public Microdata, January 2019 – December 2024. Washington, DC: BLS.

World Bank (2024) Generative AI and Firm Productivity in Emerging Markets. Washington, DC: World Bank.