AI Infrastructure in Education Is the Next Digital Divide

AI in education now depends on physical compute, not just digital tools.

Data center location, grid pressure, and cloud access will shape who gets reliable AI support.

Education policy must treat AI infrastructure as a new layer of inequality.

In 2025, a HEPI survey of UK undergraduates found that 92% were already using AI in some form. That one number should end a stale argument. We still talk about AI in education as if it were mainly a question of plagiarism rules, prompt skills, or staff guidance. It is not. It is now a systems question. When almost every student touches AI, education is no longer dealing with a niche tool. It is leaning on a physical network of data centers, fiber, chips, cooling, and power lines. That network has limits. It has busy zones and weak zones. It has places with spare grid capacity and places with long queues. It has regions close to users and regions too far away to feel smooth in real time. The key policy error is to treat AI as a matter of classroom content when it is also a matter of industrial location. The next education divide will not only be about who has a device or a login. It will be about who has steady access to fast, reliable, lawful, and affordable computing. Once AI becomes part of routine study, the back end becomes part of educational justice.

AI infrastructure in education starts with geography, not software

The first point to grasp is simple. AI services do not behave the same way everywhere. User-facing systems work better when the compute behind them is close enough to users to keep latency low. That matters for live captioning, instant translation, search, tutoring, proctoring, and any assistant that is meant to feel responsive. Distance does not add seconds in ordinary cases, but it does add real delay, and enough small delays can ruin a classroom workflow. Cloud firms know this. That is why they build regions around demand centers and fiber routes. That is why they place availability zones close enough to back each other up. And that is why they separate heavy training jobs from the day-to-day inference that people actually feel. Training can often move to places with more land and power. Inference is harder to push away because it serves real users in real time. Once schools and universities build teaching and support around these tools, geography stops being a technical side note. It becomes part of access. It also becomes part of trust, because people judge a system by how it feels when they need it.
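To put rough numbers on the distance point, the sketch below estimates the minimum round-trip delay that fiber distance alone adds to a request. It assumes light travels through optical fiber at roughly 200,000 km per second, about two thirds of its speed in a vacuum. The three distances are hypothetical examples, not measurements of any real cloud region, and the function name is illustrative.

```python
# Rough floor on network delay from distance alone.
# Assumption: light in optical fiber covers about 200,000 km per second.
# Routing detours, queuing, and model processing time are ignored, so
# real-world delays will be higher than these figures.

FIBER_SPEED_KM_PER_S = 200_000


def round_trip_ms(distance_km: float) -> float:
    """Return the minimum round-trip propagation delay in milliseconds."""
    one_way_seconds = distance_km / FIBER_SPEED_KM_PER_S
    return 2 * one_way_seconds * 1000


# Hypothetical distances between a campus and the compute that serves it.
examples = [
    ("same metro area", 50),
    ("neighboring region", 1_000),
    ("another continent", 8_000),
]

for label, km in examples:
    print(f"{label:>20}: {round_trip_ms(km):.1f} ms per round trip")
```

Under these assumptions, a single round trip stays well under a second even across a continent. The cost shows up when one interaction needs many round trips, which is when small delays start to break a live classroom workflow.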

This business logic also explains why data center growth does not spread neatly. Capacity first clusters near major cities because those places combine demand, network density, labor, and redundancy. When the core gets too costly, firms push outward to the suburban ring or to nearby secondary hubs with strong links back to the main market. The path is not random. It follows a map of return on investment. A manager building for cloud or AI does not ask only where land is cheap. That manager also asks where users are, where fiber is densest, and where a single site can fail without the whole service going down. The result is a familiar pattern. More capacity grows near people, not far from them. Education inherits that pattern. A university near a strong cloud corridor may get better rollout, stronger support, and better contract terms. A school system outside those corridors may buy the same service name yet receive a weaker version in practice. That weaker version may mean slower response, tighter usage caps, fewer privacy options, or longer waits for support. That is why AI infrastructure in education should be treated as a real access issue, not as a neutral background layer.

AI infrastructure in education is becoming an energy allocation problem

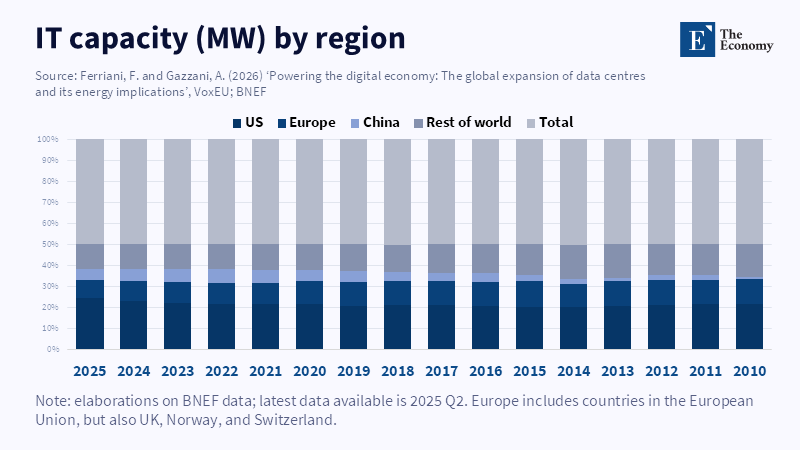

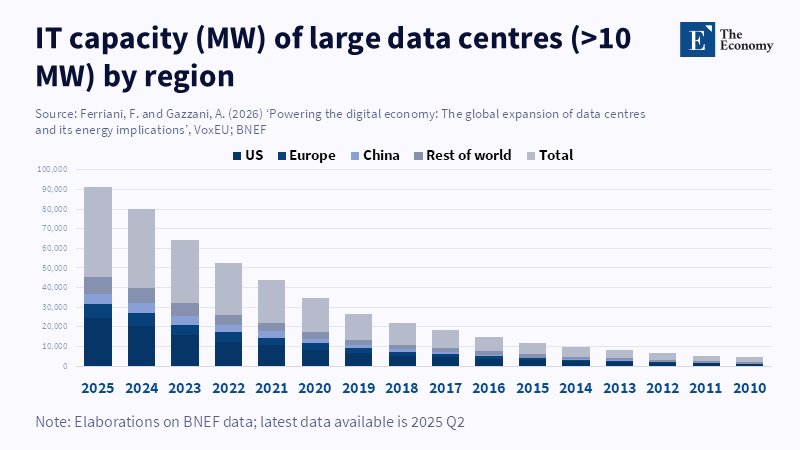

The second point is harder and more urgent. The constraint is no longer only network design. It is energy. In the United States, federal estimates indicate that data centers consumed about 176 terawatt-hours of electricity in 2023, accounting for 4.4% of national demand. The same analysis projects a rise to between 325 and 580 terawatt-hours by 2028. The IEA puts global data-center use at about 415 terawatt-hours in 2024 and projects about 945 terawatt-hours by 2030. Those figures can still sound modest when stated at the national or global level. But the local picture is far tighter. Data centers are highly concentrated. The IEA notes that nearly half of U.S. capacity sits in five regional clusters. That means the pressure lands on a few grids, a few substations, a few labor markets, and a few planning systems. For education, the lesson is clear. When a school buys AI, it is not just buying software. It is buying access to a scarce package of power, land, cooling, and network capacity located elsewhere. The price of that package is now moving upward, and the queues around it are getting longer.
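The scale of these projections is easier to judge as yearly growth rates. The sketch below is a back-of-envelope calculation that uses only the figures quoted above. The helper names are illustrative, and the compound-growth formula assumes smooth year-on-year growth, which the underlying reports do not claim.

```python
# Back-of-envelope arithmetic on the electricity figures quoted in the text.
# All inputs come from the article; the helper names are illustrative.
# Assumption: growth compounds evenly each year between the two endpoints.


def implied_total_twh(part_twh: float, share: float) -> float:
    """Total demand implied by one component and its share of the whole."""
    return part_twh / share


def annual_growth(start_twh: float, end_twh: float, years: int) -> float:
    """Compound annual growth rate between two values, as a fraction."""
    return (end_twh / start_twh) ** (1 / years) - 1


us_total = implied_total_twh(176, 0.044)   # U.S. demand implied by the 4.4% share
us_low = annual_growth(176, 325, 5)        # 2023 to 2028, low end of the range
us_high = annual_growth(176, 580, 5)       # 2023 to 2028, high end of the range
global_rate = annual_growth(415, 945, 6)   # 2024 to 2030, IEA figures

print(f"Implied U.S. electricity demand in 2023: {us_total:.0f} TWh")
print(f"U.S. data-center growth per year: {us_low:.0%} to {us_high:.0%}")
print(f"Global data-center growth per year: {global_rate:.0%}")
```

On these inputs, the U.S. range works out to compound growth of roughly 13% to 27% a year, against roughly 15% a year for the global projection. Those rates are far faster than electricity demand usually grows, which is why a national share that looks small can still strain the few grids where capacity concentrates.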

That scarcity will matter more as education moves from casual AI use to routine AI use. A static archive can handle delay. A simple chatbot can survive a pause. But live classroom help, campus-wide AI search, instant writing support, multilingual guidance, and always-on student services need low delay and high uptime. That pushes providers toward the same dense markets that other firms also want to build in. In October 2025, S&P Global reported that U.S. data-center grid power demand was set to rise by 22% in 2025 and to nearly triple by 2030. Europe is not exempt. The IEA has warned that data centers could drive 10% of electricity demand growth in the European Union through 2030, while the main FLAP-D hubs (Frankfurt, London, Amsterdam, Paris, and Dublin) already face connection queues of 7 to 10 years. This is why the energy question matters for education policy now. If AI becomes part of normal teaching and administration, then the cost and reliability of AI infrastructure in education will depend on who wins those local power races and who is pushed to the back of the line. In practical terms, that can shape prices, service quality, and the speed at which new educational uses move from trial to full rollout.

AI infrastructure in education will sort institutions before it sorts students

The third point is that this pressure will not hit all institutions in the same way. We already have signs of that. The same 2025 HEPI survey that found near-universal student use also found sharp differences in how students used AI, how confident they felt, and how much support they received. UNESCO’s 2025 survey of higher education institutions showed a similar split. Nearly two-thirds of institutions had AI guidance in place or under development. Yet confidence was uneven; one in four respondents reported ethical problems, and investment in AI tools was rising fast. In other words, adoption is outpacing shared capacity. That is the real policy risk. It is easy to look at mass use and assume the access problem has been solved. It has not. Many students now have some form of AI. Far fewer have access to the same quality of AI-backed learning conditions. A well-funded institution can buy protected enterprise tools, better models, more reliable support, and deeper integration into teaching systems. A weaker institution may rely on free public tools and hope for the best. Over time, those different starting points can lead to different student outcomes.

That gap will widen because computing itself is unevenly distributed. The OECD now treats public cloud AI compute as a physical resource with a real geography. That is an important shift. Cloud regions are not metaphors. They are physical hubs with hardware, laws, grid ties, and network paths. Their location shapes performance, security, and room for policy choice. For education, this means procurement has become strategic. Which region stores student data? Which region handles inference at peak times? Which vendor can meet campus needs when thousands of users log in at once? Which institution can pay for private capacity or local hosting when rules tighten? These are not narrow IT questions anymore. They shape what kinds of teaching, assessment, support, and research an institution can run with confidence. The richest universities will ask these questions early. Many other institutions will meet them late, after the tool is already embedded. AI infrastructure in education may therefore deepen an old divide. Schools and universities will look equally modern on the surface while running on very unequal back-end systems underneath. The old digital divide was about front-end access. The new one is about hidden capacity.

AI infrastructure in education needs a public strategy, not campus improvisation

The answer is not to wait for a perfect technical fix. Better chips will help. Better cooling will help. Better software will help. Efficiency is real, and it matters. An IEA high-efficiency case shows that stronger gains in hardware and model efficiency could cut future data-center demand well below its base path. But efficiency alone will not solve a siting problem, a grid problem, or a fairness problem. Education systems need a public strategy. Light and frequent student-facing tasks should, where possible, move toward smaller, auditable forms of inference that run closer to users. Heavy training jobs should be shared at the regional or national level, not repeated campus by campus. Public buyers should ask vendors where education workloads run, what delay they can guarantee, how energy risk is handled, and what happens when a preferred region is constrained. Governments should also treat AI infrastructure in education as part of digital public infrastructure. Right now, too much of the sector is acting as if this were just a bundle of software subscriptions that each campus can sort out on its own. That view ignores how one campus decision now depends on broader bottlenecks in power and compute.

That view is too small for the moment. Educators need to ask what kinds of systems their students are being asked to trust every day. Administrators need to treat cloud geography, resilience, and power exposure as part of academic planning. Policymakers need to connect AI policy to grid policy, competition policy, data governance, and shared compute capacity for public education. The opening statistic should be read as a warning, not a victory lap. If 92% of students already use AI, then AI infrastructure in education is not a future concern. It is a live systems concern. The institutions that see this early will build calmer and fairer forms of adoption. They will buy more wisely. They will design better rules. They will protect access when the market tightens. They will also be better placed to insist that vendors serve public goals, not only premium clients. The institutions that miss it may learn a harsher lesson. In the AI era, useful intelligence still depends on place, power, and public choices. Education policy should start there, before scarcity hardens into hierarchy. That is a public duty, not a vendor option.

The views expressed in this article are those of the author(s) and do not necessarily reflect the official position of The Economy or its affiliates.

References

Borgonovi, F., Bastagli, F., Ochojska, M. and Piumatti, G. (2025) AI adoption in the education system: International insights and policy considerations for Italy. OECD Artificial Intelligence Papers, No. 52. Paris/Turin: OECD Publishing and Fondazione Agnelli.

Ferriani, F. and Gazzani, A. (2026) ‘Powering the digital economy: The global expansion of data centres and its energy implications’, VoxEU, 28 March.

Freeman, J. (2025) Student Generative AI Survey 2025. HEPI Policy Note 61. Oxford: Higher Education Policy Institute.

Grand View Research (2025) Hyperscale Data Center Market (2025–2030): Size, Share & Trends Analysis Report by Component (Hardware, Software, Services), by Power Capacity (20 MW to 50 MW, 50 MW to 100 MW), by Enterprise Size, by End-use, by Region, and Segment Forecasts. San Francisco, CA: Grand View Research.

Grundin, G., Frade, M., Cubela, S., Lajous, T., Belda, B. and Salazar, L. (2026) ‘AI infrastructure’, McKinsey, 27 February.

Hering, G. and Dlin, S. (2025) ‘Data center grid-power demand to rise 22% in 2025, nearly triple by 2030’, S&P Global Market Intelligence, 14 October.

House of Commons Library (2025) Data centres: planning policy, sustainability, and resilience. Commons Library Research Briefing CBP-10315. London: House of Commons Library.

International Energy Agency (2025a) Energy and AI. Paris: International Energy Agency.

International Energy Agency (2025b) ‘Overcoming energy constraints is key to delivering on Europe’s data centre goals’, IEA Commentary, 16 November. Paris: International Energy Agency.

Khemani, K. (2026) ‘Inference Energy and Latency in AI-Mediated Education: A Learning-per-Watt Analysis of Edge and Cloud Models’, arXiv preprint arXiv:2603.20223.

Lehdonvirta, V., Wu, B., Hawkins, Z.J., Caira, C. and Russo, L. (2025) ‘Measuring domestic public cloud compute availability for artificial intelligence’, OECD Artificial Intelligence Papers, No. 49. Paris: OECD Publishing.

Leppert, R. (2025) ‘What we know about energy use at U.S. data centers amid the AI boom’, Pew Research Center, 24 October.

MarketsandMarkets (2024) AI Infrastructure Industry worth $394.46 billion by 2030. Northbrook, IL: MarketsandMarkets.

OECD (n.d.) Digital divide in education. Paris: OECD.

OECD and Education International (2023) ‘Opportunities, guidelines and guardrails for effective and equitable use of AI in education’, in OECD Digital Education Outlook 2023: Towards an Effective Digital Education Ecosystem. Paris: OECD Publishing.

Robert, J. and McCormack, M. (2025) 2025 EDUCAUSE AI Landscape Study: Into the Digital AI Divide. Louisville, CO: EDUCAUSE.

Shehabi, A., Smith, S.J., Hubbard, A., Newkirk, A., Lei, N., Siddik, M.A.B., Holecek, B., Koomey, J.G., Masanet, E.R. and Sartor, D.A. (2024) 2024 United States Data Center Energy Usage Report. Berkeley, CA: Lawrence Berkeley National Laboratory.

Srivathsan, B., Sorel, M. and Sachdeva, P. (2024) ‘AI power: Expanding data center capacity to meet growing demand’, McKinsey, 29 October.

Trucano, M. (2023) ‘AI and the next digital divide in education’, Brookings, 10 July.

UNESCO (2025) ‘UNESCO survey: Two-thirds of higher education institutions have or are developing guidance on AI use’, UNESCO, 2 September.