Stop Deleting Politics: A Better Content Moderation Policy for Education


Broad toxicity rules can distort lawful political speech and narrow debate. Platforms should remove illegal and clearly harmful content, not ideological disagreement. User-controlled filtering and ranking offer a better balance between safety and free expression.

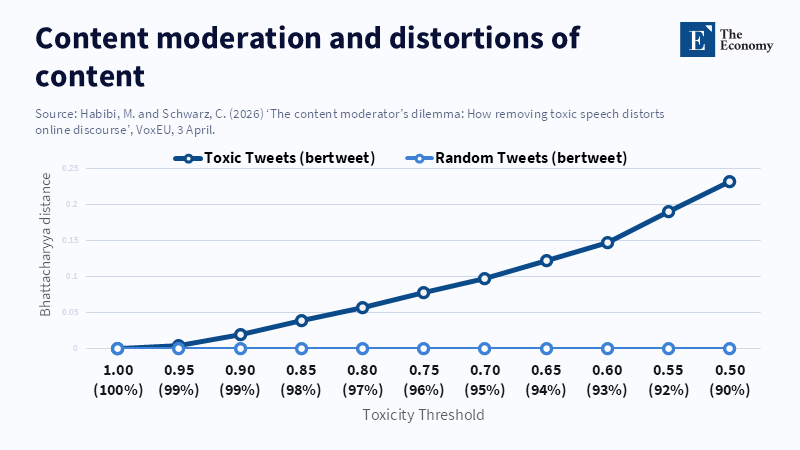

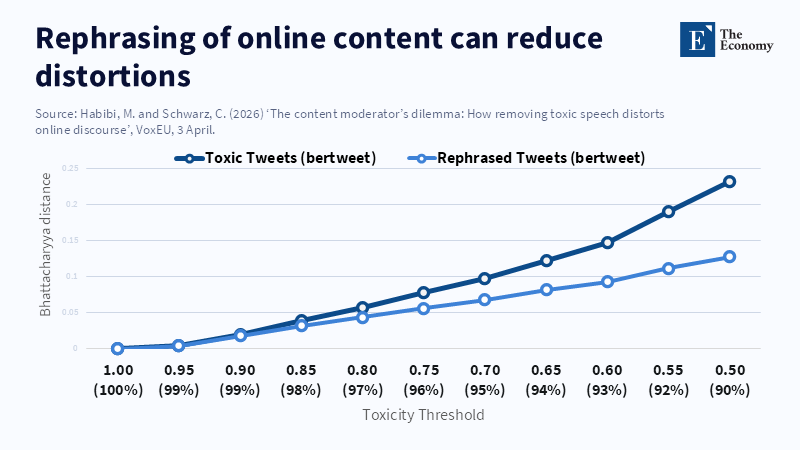

In a sample of 5 million US political posts, researchers found that detoxifying speech did not simply calm the feed. It changed it. That is the central fact about content moderation. We often pretend that there is only chaos to clean up. There is not. The real debate is between deletion and direction: do platforms delete content, or do they control its reach? This matters now in education because kids develop civic habits online long before they vote, start college, or hold power. If we teach them that fiery speech is a safety failure, we flatten public debate. If we ignore real harm, we expose them to abuse. Good content moderation should take illegal, predatory, and clearly dangerous content down. It should not wipe out legal political speech because it can be rude, partisan, or hard for classifiers to read.

Content moderation involves distribution, not merely deletion

The way we debate content moderation is often framed incorrectly. We speak as if the only options were to delete everything or, conversely, do nothing. That's not true. The truth is, nearly every platform already influences what users see through ranking algorithms, recommendation systems, hashtag limits, and spam filtering. The real question isn't just what we'll delete. It's what we'll display, how frequently, and to whom. When moderation overfocuses on removal, it overlooks the effect that distribution has on speech as well.

This matters for more than curbing outright misconduct. It can alter the very shape of public dialogue. When some opinions are more likely to be expressed in angry or aggressive language, broad toxicity rules and blocks suppress more than tone; they can keep the subject matter itself from coming through. The result is a quieter but also narrower feed, and in an educational context that can have enduring consequences. Students are not just passively absorbing information; they are absorbing a sense of which arguments are permissible. When a system arbitrarily filters out legitimate yet confrontational discussion, it teaches users to mistake discomfort for danger. A better strategy is to keep all legal content accessible in principle and to determine its reach through transparent, user-managed controls.

Good content moderation should distinguish illegal harm from political offense

The first lesson of good content moderation is straightforward. We must separate content that is illegal or clearly dangerous from content that is merely offensive, polarizing, or highly political. These are not the same thing. Child sexual abuse imagery, sexual coercion, fraud, targeted threats, criminal sales, doxxing, and blatant incitement should be removed. They have clear victims or significant criminal effects. In 2024, the Internet Watch Foundation analyzed 424,047 reports of suspected child sexual abuse imagery and confirmed 291,273 as criminal. It also said that 62% of the child sexual abuse webpages it processed were traced to hosting services in the EU. The FTC said that in 2024, Americans lost $12.5 billion to fraud, and $1.9 billion of that started with social media scams. That is where the bulk of moderation effort should go. A system that leaves large volumes of sexual violence, scams, or abuse in circulation is not a good free speech system.

Legal political speech, on the other hand, can be deeply offensive without being illegal. A far-right screed, a far-left attack, or a savage piece of partisan snark may make a platform uncomfortable. But bad tone is not the same as illegality, and the spectrum of offense is not the same as the spectrum of legal risk. When platforms blur those lines, they cut too much. Newly published evidence shows that the problem is not merely that moderators remove profanity. Removal can also silence the topics that are often discussed in profane terms. That means broad toxicity rules can quietly narrow the political world. The tools themselves are also inconsistent. A 2025 study comparing more than a dozen hate speech classifiers found that, for nearly 50% of comments, one model assigned more than three times the hate score that another model gave the very same comment, with results differing for women and men and across racial groups and ages. When moderation tools disagree this much, vague toxicity rules are too coarse an instrument for lawful political speech.

This is especially consequential in educational contexts, where students are still learning how to distinguish aggression from discourse. A system that summarily suppresses heated but legal political content unwittingly teaches the wrong answer. It teaches that conflict itself is the problem, and that institutions should remove discomfort before anyone has had time to evaluate it. The right lesson for civic development, by contrast, is that students should be exposed to unwelcome but lawful opinions and asked to judge for themselves whether those words belong in the debate or in the trash. We are not arguing for unlimited, absolute tolerance. We are proposing that systems make clear that an intemperate post may be read as dangerous or as merely closed-minded, and that the two are not synonyms.

Content moderation should rely on user choice, not deletion

Once hard speech is no longer automatically linked to deletion, an alternative approach becomes available. Good content moderation can be achieved by strengthening the signals and reach limits that already exist. This is not a fringe idea. It is, in part, already a required one. The European Union’s Digital Services Act supports this. Larger platforms must explain the main reasons behind each recommendation; at least one non-profiled feed must be available; recommendations should come with contextual signals; and default protections for minors can be stronger than those for everyone else. According to an article by Lindsay Blackwell, major platforms and startups began reforming their content moderation systems in 2025, opening the possibility that similar approaches become standard practice for students and teachers. Feed moderation should be user-responsive rather than deletion-responsive.

Just as we accept today that what a channel shows can change without our switching channels, we can also demand that platforms learn from repeated gestures. A user can mute, unfollow, hide, or stop seeing certain types of content. Platforms can observe that a user frequently hits "hide politics" or "skip sensational" and learn to offer those options more often. This is not censorship. It is choice learning. The takeaway for schools and campuses is that student digital citizens should not merely be told that they can complain about bad content; they should be taught how to curate it. The workplace does not design every meeting to get people to agree. Why should the feed? A mature feed offers broad access and many feedback paths.
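To make the idea of choice learning concrete, here is a minimal sketch of a feed that reorders posts based on a user's own "hide" gestures without deleting anything. Every name and number in it (`Post`, `topic_weights`, `rank_feed`, the `penalty` and `floor` values) is a hypothetical assumption for illustration, not a description of how any real platform ranks content.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Post:
    post_id: str
    topic: str         # assumed topic label, e.g. "politics"
    base_score: float  # assumed relevance score before user preferences


def topic_weights(hide_events, penalty=0.5, floor=0.1):
    """Turn a user's repeated "hide <topic>" gestures into ranking weights.

    Each hide halves the topic's weight (hypothetical penalty), but the
    weight never drops below `floor`, so the topic stays reachable.
    """
    counts = Counter(hide_events)
    return {topic: max(floor, penalty ** n) for topic, n in counts.items()}


def rank_feed(posts, hide_events):
    """Reorder posts by preference-weighted score. No post is removed."""
    weights = topic_weights(hide_events)
    return sorted(
        posts,
        key=lambda p: p.base_score * weights.get(p.topic, 1.0),
        reverse=True,
    )


feed = [
    Post("a", "politics", 0.9),
    Post("b", "sports", 0.6),
    Post("c", "science", 0.5),
]

# The user has hit "hide politics" twice: politics sinks but stays in the feed.
ranked = rank_feed(feed, ["politics", "politics"])
print([p.post_id for p in ranked])
```

The design choice worth noticing is the floor: the user's preference lowers a topic's reach but never erases it, and clearing the hide history restores the original order.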

This model, at the very least, should inspire more confidence. Systems that immediately delete huge swaths of opinion produce endless blame games in which neither users nor regulators are ever satisfied. User controls, by contrast, can at least reveal what is really going on: show less of this; restore chronology; clarify why this post got recommended; let me switch; let me appeal; let me report. Simple, visible safety controls make every platform more trustworthy and reduce suspicion. For educational institutions, that is crucial. Only when students see what everyday, unpolished disagreement looks like can they understand that institutional moderation need not withhold every contentious claim. Content moderation should defend safety with force; it should defend debate with confidence.

Content moderation should be tougher on crime and softer on permissible politics

A narrowed removal standard is not a softer internet. It is a more focused one. The scale of the task is already obvious. According to a report by CBS News, the Internet Watch Foundation found a staggering increase in AI-generated child sexual abuse material in 2025, identifying 3,440 such videos, a 26,362% surge from the previous year. These numbers highlight the severe and urgent challenges that platforms and regulators face in managing harmful online content. If platforms spend too much of their teams’ time fighting indignant political commenters, they will underinvest in the hardest problems: sexual abuse, blackmail, fraud, coercion, and violence. Good content moderation is a just system, and a just system is one that prioritizes.

The counterargument is easy to state. If platforms fail to remove the most extreme political content, they may push users toward polarization, segregation, and radicalization, while others leave in disgust. Those fears are well-placed. But deletion is not always the solution. It can be complemented by downranking, delayed publishing, warning messages, content labels, reply friction, age controls, heightened defaults for minors, and appealable reach limits. A paper informing this debate also proposes rephrasing toxic posts so that the meaning is retained but the toxic wording drops out. Admittedly, that is impractical in every case and is obviously useless for blatantly illegal material. But it shows that the choice is not always between deletion and nothing; sometimes the answer is slower and narrower distribution.
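The graduated responses listed above can be sketched as a small decision rule: removal is reserved for the illegal categories named earlier in this article, and everything else receives a softer, reversible treatment. This is a minimal sketch under stated assumptions. The category labels, the toxicity score, the thresholds, and the function name `decide` are all hypothetical and are not drawn from any platform's policy or from the studies cited here.

```python
from enum import Enum


class Action(Enum):
    REMOVE = "remove"
    REACH_LIMIT = "appealable reach limit"
    LABEL = "warning label"
    ALLOW = "allow"


# Categories the article says should be taken down (labels are hypothetical).
ILLEGAL_CATEGORIES = {
    "csam", "sexual_coercion", "fraud", "targeted_threat",
    "criminal_sales", "doxxing", "incitement",
}

# Hypothetical (label_threshold, reach_limit_threshold) pairs.
# Minors get stricter defaults, as the article suggests.
ADULT_THRESHOLDS = (0.5, 0.8)
MINOR_THRESHOLDS = (0.3, 0.6)


def decide(category, toxicity, viewer_is_minor=False):
    """Pick a moderation action for one post and one viewer.

    `toxicity` is an assumed classifier score in [0, 1]. Because such
    scores vary across models, it only ever triggers reversible actions,
    never removal.
    """
    if category in ILLEGAL_CATEGORIES:
        return Action.REMOVE
    label_at, limit_at = MINOR_THRESHOLDS if viewer_is_minor else ADULT_THRESHOLDS
    if toxicity >= limit_at:
        return Action.REACH_LIMIT
    if toxicity >= label_at:
        return Action.LABEL
    return Action.ALLOW


print(decide("fraud", 0.0))                            # illegal: removed regardless of tone
print(decide("politics", 0.9))                         # heated but legal: reach limited
print(decide("politics", 0.7))                         # labeled for adults
print(decide("politics", 0.7, viewer_is_minor=True))   # stricter default for minors
```

The point of the sketch is structural: tone alone never leads to deletion, and every non-removal outcome is something a user could see, contest, or override.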

More fundamentally, we should be cautious about conflating limited visibility with suppression. A platform that leaves legal political content online but declines to push it into every feed is far less heavy-handed than one that deletes it. That approach leaves a record trail; it makes room for counterspeech; it keeps to a minimum the perception that Facebook and Twitter act as Western editors of public debate. All of that matters for schools and colleges. Students need to see what political disagreement really looks like. They need to understand that institutions should not claim ownership of every opinion. And doing basic civic moderation well may give them the confidence to face a more contentious online world. The answer is not always full removal.

The answer is good moderation that promotes discernment. The common thread in all these issues is that they should test judgment, not just obedience. Content moderation should be tough on harm, soft on permissible politics, and backed by visibility controls and appeal processes. For the education community, that means shifting from a focus on removal to an emphasis on choice and informed filtering. Guided by that goal, we can improve the safety of political dialogue in schools and universities without making it more polarized or more sluggish. The key point is that the subtle guardrails, signals, and preferences we ask for today in commercial moderation are equally consequential on campus. If we want kids to learn political judgment, their feed should respect it.

The views expressed in this article are those of the author(s) and do not necessarily reflect the official position of The Economy or its affiliates.

References

Cunningham, M. (2026) ‘AI videos of child sexual abuse surged to record highs in 2025, new report finds’, CBS News, 16 January.

European Commission (2025) ‘Digital Services Act: keeping us safe online’, European Commission, 22 September.

Federal Trade Commission (2025) ‘New FTC data show a big jump in reported losses to fraud to $12.5 billion in 2024’, Federal Trade Commission, March.

Fasching, N. and Lelkes, Y. (2025) ‘Model-dependent moderation: inconsistencies in hate speech detection across LLM-based systems’, Findings of the Association for Computational Linguistics: ACL 2025, pp. 22271–22285.

Google (2025) Transparency Report: Content moderation and removal statistics, Google.

Habibi, M. and Schwarz, C. (2026) ‘The content moderator’s dilemma: how removing toxic speech distorts online discourse’, VoxEU, 3 April.

Habibi, M., Hovy, D. and Schwarz, C. (2024) The Content Moderator’s Dilemma: Removal of Toxic Content and Distortions to Online Discourse, arXiv preprint. doi:10.48550/arXiv.2412.16114.

Internet Watch Foundation (2025) Annual Data and Insights Report 2024, Internet Watch Foundation.

National Center for Missing and Exploited Children (2025) ‘Online enticement reports and trends’, National Center for Missing and Exploited Children.

Yoder, M. M., Ng, L. H., Brown, D. W. and Carley, K. M. (2022) How hate speech varies by target identity: a computational analysis, arXiv preprint. doi:10.48550/arXiv.2210.10839.