The AI Productivity Paradox: Why Faster Tools Often Mean Longer Work

AI speeds up routine work, but complex tasks still need expert judgment.

The AI productivity paradox shows that faster outputs can create more review work.

Sustainable AI use requires strong human oversight and better workflows.

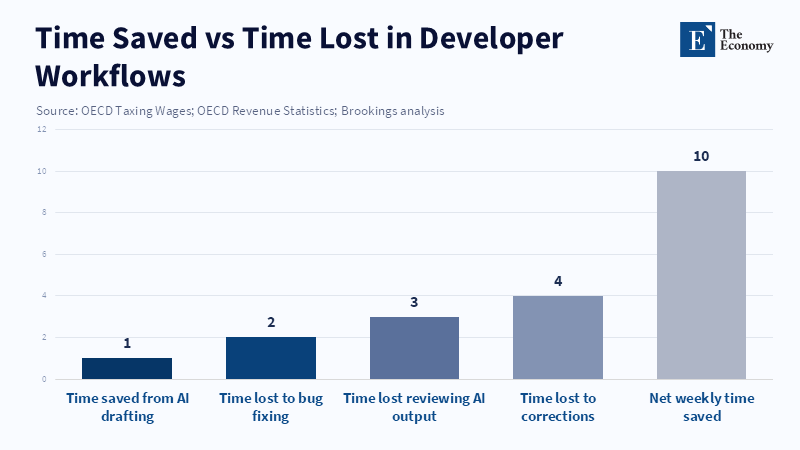

AI was initially presented as a way to free up time. However, there is a gap between what is promised and what people actually experience. For example, according to a 2025 report by Pelayo Arbues, 68 percent of developers said generative tools saved them more than ten hours each week. In contrast, other studies and observations indicate that challenging coding tasks often still take longer to finish, with additional time required for reviewing and correcting the work. Taken together, these findings illustrate the AI productivity paradox: generative systems make drafts easy to create, but they do not lower the cost of ensuring the final result is good. Fast drafts lead to more reviews; quick prototypes mean extra integration work later; and the ability to produce more often raises expectations for how much work is delivered and how complex it should be. Ultimately, the change is not simply a gain in efficiency. The kind of work changes: effort shifts from creating the initial draft to the human work of checking, adapting, and taking responsibility when the AI's output isn't perfect.

The AI productivity paradox in practice

At a large scale, the paradox persists. According to Forbes, many employees report that generative AI tools save them at least an hour per task, as these tools typically complete a task in 30 minutes compared to 90 minutes manually. Yet when researchers examine complex tasks, the results differ: experienced developers sometimes take longer with AI assistants than without. Research across workplaces shows a similar pattern: AI makes starting easier, so people try to do more, but this broader scope increases the need for checking, coordination, and fixing, which is work AI can't eliminate. AI changes both how quickly we create drafts and what we're expected to deliver.
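The arithmetic behind that claim can be sketched in a few lines. The 90-minute and 30-minute figures come from the paragraph above; the review times are hypothetical values chosen only to show where the saving disappears, not measurements from any study.

```python
def net_minutes_saved(manual_minutes: float,
                      ai_draft_minutes: float,
                      review_minutes: float) -> float:
    """Time saved per task once human review of the AI draft is counted."""
    return manual_minutes - (ai_draft_minutes + review_minutes)


MANUAL_MINUTES = 90    # manual completion time cited in the text
AI_DRAFT_MINUTES = 30  # AI-assisted drafting time cited in the text

# Hypothetical review times (assumptions, not study data).
for review_minutes in (0, 30, 60, 75):
    saved = net_minutes_saved(MANUAL_MINUTES, AI_DRAFT_MINUTES, review_minutes)
    print(f"review={review_minutes:>2} min -> net saved={saved:>4} min")
```

Under these assumptions the headline saving of an hour holds only when review costs nothing. It reaches zero at 60 minutes of review and turns negative beyond that.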

According to Claes et al., organizations that view the drafting stage as the endpoint may see increased working hours, whereas practices such as rapid releases can improve engineers' work-life balance. These trends are due to human factors and workflow management. Generative outputs are probabilistic; they create plausible text, code, and summaries, but can fabricate, misuse rules, or omit key information. If a draft appears sound, managers might treat it as correct, when in fact each draft is a decision point that requires time to verify its origins and fit. Research by Anand Kumar et al. shows that teams improved productivity, including a 31.8% reduction in pull request review time, when they clearly defined AI and human roles and treated AI output as provisional, with time reserved for review.

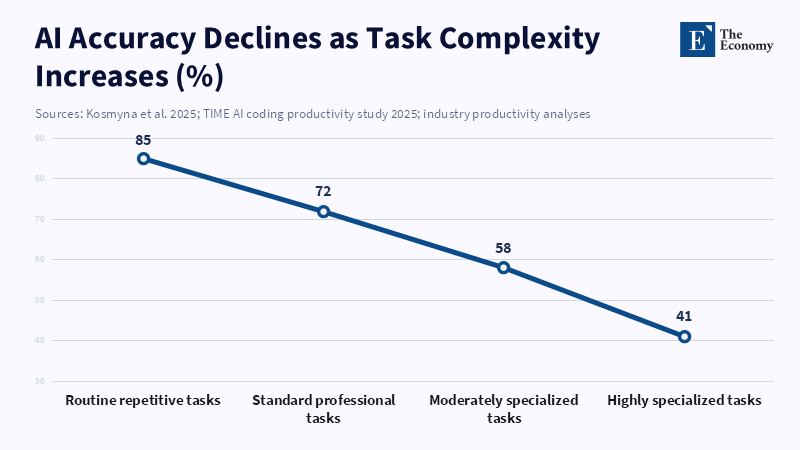

Why the AI productivity paradox hits specialists hardest

This paradox is even more pronounced when expert knowledge and judgment are needed. For instance, in fields such as medical practice, advanced engineering, custom software, and specialized teaching, outputs must be tailored to specific situations. Generative models give a general answer that's often a good starting point, but specialists are needed to turn that starting point into a reliable solution that fits the context. Here, human review isn't just proofreading; it's translating the information, finding errors, and reducing risks. Expecting machines to replace these skills is a mistake. When organizations use AI to speed up specialist work without providing time for the specialist review that ensures results are safe and lasting, they create systems that are easily broken, accumulate hidden errors, and become harder to maintain over time.

There is additional evidence supporting this idea. According to a recent article by James Bono and Alec Xu, Copilot users saw marked improvements in both speed and accuracy, with a 29.79 percent reduction in task completion time and a 34.53 percent increase in overall accuracy across all scenarios and tasks. Even so, strict review processes remain crucial. Research also shows the cost of unchecked output: a recent report found that 7 in 10 U.S. managers say employees have made mistakes using artificial intelligence tools in the past year, with some mistakes costing employers over $50,000. Overall, AI is helpful for routine tasks, but for specialized judgment, it's like an accelerator that still needs an expert driver.

Policy fixes to resolve the AI productivity paradox

To fix this paradox, organizations need a clear approach to using and measuring AI. First, they should explicitly plan for the time needed to check AI's work. Instead of assuming speed gains will cut total work, organizations must expect that faster drafts require review and allocate time and staff for these reviews. This involves adding review stages, assigning responsibility for checking, and formally tracking AI-caused errors to prevent them from becoming invisible extra work. Making review an official workflow step ensures that time savings are real, not just apparent.

Second, organizations should change the rewards and measurements they use. Productivity measures that reward volume or first drafts encourage the misuse of AI. Instead, they should implement better measures that evaluate quality: how many verified, appropriate outputs does a team deliver in a week, not just how many drafts were produced. In education, for example, learning outcomes and the reproducibility of materials should matter most; in software, time to release a stable version and post-release error rates matter more than lines of code or the number of merged pull requests. Changing the way we measure success reshapes behavior and protects time for the judgment work that machines can't do.
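One way to make this shift concrete is to report verified output alongside draft volume. The sketch below is illustrative only: the `WeeklyOutput` fields, the two teams, and all of their numbers are hypothetical, and a real organization would need its own definition of "verified".

```python
from dataclasses import dataclass


@dataclass
class WeeklyOutput:
    """Hypothetical weekly tally for one team (all fields are assumptions)."""
    drafts_produced: int
    outputs_verified: int
    post_release_defects: int


def verified_rate(week: WeeklyOutput) -> float:
    """Share of drafts that passed review and shipped."""
    if week.drafts_produced == 0:
        return 0.0
    return week.outputs_verified / week.drafts_produced


def defects_per_verified(week: WeeklyOutput) -> float:
    """Post-release defects per verified output."""
    if week.outputs_verified == 0:
        return 0.0
    return week.post_release_defects / week.outputs_verified


# Made-up numbers: team A drafts far more, team B verifies more of what it drafts.
team_a = WeeklyOutput(drafts_produced=40, outputs_verified=10, post_release_defects=5)
team_b = WeeklyOutput(drafts_produced=15, outputs_verified=12, post_release_defects=1)

for name, week in (("A", team_a), ("B", team_b)):
    print(f"team {name}: drafts={week.drafts_produced}, "
          f"verified={week.outputs_verified}, "
          f"verified_rate={verified_rate(week):.2f}, "
          f"defects_per_verified={defects_per_verified(week):.2f}")
```

A volume-based measure would rank team A first on these made-up figures. Counting verified outputs and post-release defects ranks team B first, which is the behavior the quality-based measures are meant to reward.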

Third, organizations should improve how humans and machines interact, especially by making AI's uncertainty clear and its sources easy to see. Tools that flag uncertain passages, show source information, and explain the AI's reasoning make verification easier and less mentally demanding. These interface improvements help reviewers spot errors and reduce the mental effort required to decide which parts of an output need attention. Along with training, these informative interfaces let experts focus on adapting the AI's output rather than searching for mistakes. Finally, education and training should explicitly teach verification skills. Knowing how to question an AI-generated claim, run quick tests to disprove it, or adapt a general solution to a specific situation should be essential skills for anyone who uses AI.

Buy time by paying for judgment

The AI productivity paradox is a practical problem, not a philosophical one. Tools that make drafting easy are valuable, but their value is only fully realized when organizations accept the true cost of reliable results. That cost is human judgment, and it must be visible, supported, and measured. If organizations want AI to save time, they must decide in advance who will spend the time on verification, and they must reward that work. Otherwise, the same thing will keep happening: faster drafts, higher expectations, and more time spent fixing mistakes. To break this cycle, organizations should design workflows where the machine's output is seen as provisional, set goals that reward quality and reproducibility, and teach people how to verify AI work. These aren't complicated ideas; they are simply the basics of responsible adoption. When organizations follow these principles, the AI productivity paradox becomes a manageable problem instead of a hidden tax on attention and expertise.

Editorial and methodological note (brief): Key claims in this article are based on large developer surveys and studies conducted in 2025, and on research that followed employees over several months. The large survey reports how many developers said they saved a lot of time each week; the study that measured coding tasks found slower completion times when certain AI assistants were used; the workplace research followed about 200 employees over eight months and found increased workloads. These studies differ in scope and method: surveys capture perceived time savings, controlled studies measure task completion times, and longitudinal workplace research shows how expectations change in practice. They all help explain why apparent speed can lead to more work.

The views expressed in this article are those of the author(s) and do not necessarily reflect the official position of The Economy or its affiliates.

References

Atlassian (2025) State of Developer Experience 2025. Atlassian.

Counts, L. (2026) ‘AI promised to free up workers’ time. UC Berkeley Haas researchers found the opposite’, Berkeley Haas Newsroom.

Kosmyna, N., Hauptmann, E., Yuan, Y.T., Situ, J., Liao, X.-H., Beresnitzky, A.V., Braunstein, I. and Maes, P. (2025) ‘Your Brain on ChatGPT: Accumulation of Cognitive Debt when Using an AI Assistant for Essay Writing Task’, arXiv preprint.

Lee, T.B. (2026) ‘Sorry skeptics, AI really is changing the programming profession’, Understanding AI.

Markman, J. (2026) ‘Workplace impact of AI: Evidence from an 8-month study of 200 workers’, Forbes, 12 February.

Melendez, S. (2026) ‘Why developers using AI are working longer hours’, Scientific American.

Perrigo, B. (2025) ‘AI promised faster coding. This study disagrees’, TIME.

Ranganathan, A. and Ye, X.M. (2026) ‘AI doesn’t reduce work—It intensifies it’, Harvard Business Review.

Resume.org (2026) AI Slop Crisis: 7 in 10 Managers Report Recurring, Costly Errors from Direct Reports’ AI Use. Resume.org.

Sheridan, L. (2026) ‘AI promised to save time. Researchers find it’s doing the opposite’, Inc.

TechRadar (2025) ‘Using AI might actually slow down experienced devs’, TechRadar, 11 July.

Becker, J., Rush, N., Barnes, E. and Rein, D. (2025) Measuring the Impact of Early-2025 AI on Experienced Open-Source Developer Productivity. arXiv preprint 2507.09089.
