When Safety Meets the War Machine: Rethinking AI Safety and National Security

AI safety and national security now shape how institutions teach and build technology.
Procurement incentives quietly determine what engineers learn to design and value.
Education reform is the only durable way to align AI safety with national security.

The Pentagon's recent consideration of suspending a $200 million agreement with an artificial intelligence company is more than a business dispute; it illustrates broader lessons about incentives in the technology sector. At issue is the tension between the government's desire for advanced AI models to be available for all lawful applications and some developers' moral boundaries, specifically around mass surveillance and autonomous weapons. The conflict tests whether market forces, government contracts, and national defense priorities will shape the principles AI engineers prioritize, or whether changes to education and procurement can preserve human oversight and public transparency in AI development. If government purchasing prioritizes speed, widespread adoption, and broad application over auditability and enforceable restrictions, future AI systems, and the students who build them, will learn the wrong lesson. Teaching and buying AI differently can make safety a reliable industry standard rather than a superficial promise.

AI Safety and National Security: A Source of Contention

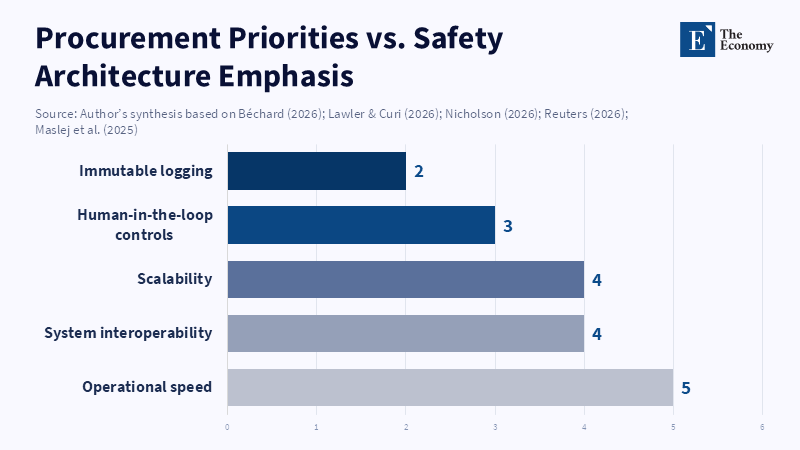

The ongoing disagreement between a prominent AI lab and the Department of Defense underscores a growing policy divide. One side emphasizes AI that can quickly improve military capabilities, be deployed across operational domains, and run on secure, classified networks. The other holds that deploying AI without limits could endanger civil liberties and civilian lives. This is not merely a theoretical debate. The specific language of procurement documents, certification standards, and contract provisions pushes vendors toward particular technical paths. A buyer who values smooth operation, compatibility, and simple integration will shape how vendors plan their products. Over time, those product plans determine hiring, training programs, and what counts as good engineering. Procurement therefore has a substantial educational impact.

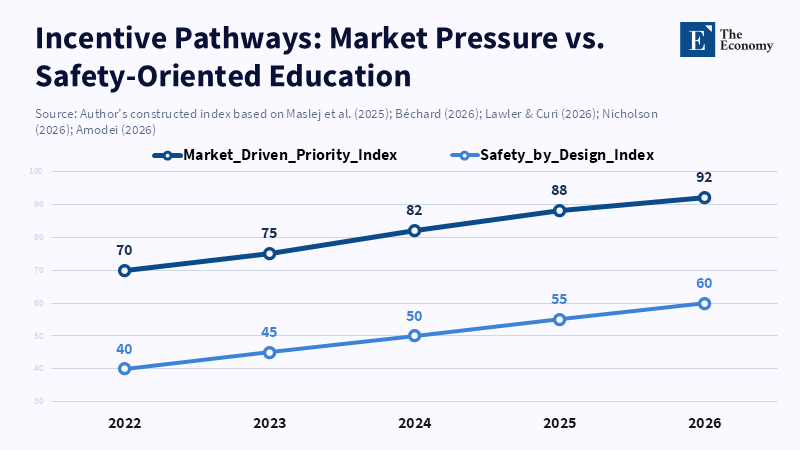

This link from procurement to education matters for curriculum design. Ethics workshops alone are not enough: if students see the market and major clients rewarding flexibility over built-in limits, they will favor designs that maximize practical application. Changing how AI is developed requires changing what education emphasizes. That means hands-on, competitive exercises in which teams must build AI systems that meet performance targets and also demonstrate, with verifiable evidence, that their safety requirements cannot be circumvented. Student work should be graded not only on overall accuracy but also on auditability and resistance to tampering. Equally important is teaching students to express safety measures as contract terms that procurement officials can enforce. These are concrete, teachable skills that will shape future behavior.
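
A grading rule for exercises of this kind can be sketched in a few lines. This is a hypothetical rubric, not an established standard: the weights, the field names, and the choice to score any successful bypass as zero are assumptions made for illustration. What it encodes is the paragraph's point that accuracy alone cannot carry a submission whose safeguards were circumvented.

```python
from dataclasses import dataclass


@dataclass
class Submission:
    """One team's entry in a hypothetical red-team exercise."""
    team: str
    accuracy: float        # share of benchmark tasks solved, 0.0 to 1.0
    audit_coverage: float  # share of model actions with a complete audit record, 0.0 to 1.0
    bypass_attempts: int   # red-team attempts to circumvent the team's safeguards
    bypass_successes: int  # attempts that got past those safeguards


# Illustrative weights; a course would set its own.
WEIGHTS = {"accuracy": 0.5, "auditability": 0.25, "tamper_resistance": 0.25}


def score(sub: Submission) -> float:
    """Return a 0-100 score in which a circumvented safeguard is disqualifying."""
    if sub.bypass_successes > 0:
        # A safety requirement that can be bypassed counts as failed, not as a small deduction.
        return 0.0
    # Safeguards that were never attacked are unproven, so they earn no tamper-resistance credit.
    tamper_resistance = 1.0 if sub.bypass_attempts > 0 else 0.0
    total = (
        WEIGHTS["accuracy"] * sub.accuracy
        + WEIGHTS["auditability"] * sub.audit_coverage
        + WEIGHTS["tamper_resistance"] * tamper_resistance
    )
    return round(100 * total, 1)


careful = Submission("A", accuracy=0.92, audit_coverage=0.5, bypass_attempts=40, bypass_successes=0)
fast = Submission("B", accuracy=0.97, audit_coverage=0.9, bypass_attempts=40, bypass_successes=1)
print(score(careful), score(fast))
```

Team B has the higher raw accuracy but scores zero because one red-team attempt succeeded; that ordering is the incentive the paragraph argues curricula should create.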

The stakes are raised by legal and operational ambiguity. Terms such as "surveillance" and "autonomy" look straightforward in policy papers but become complex when lawyers, operators, and engineers must interpret records and implement approval processes. An AI model that can process large volumes of data and manage multiple tasks could be used to support analysis, compile detailed files, and flag individuals of interest. Whether that support remains purely analytical or crosses into active targeting depends on design decisions: what data the model is permitted to access, how its outputs are acted upon, and how humans check the reasoning behind its suggestions. Students who can distinguish analysis from action and quantify the uncertainty in a model's outputs are the workforce needed to protect both national security and civil liberties.
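
One common way to quantify the uncertainty in a classifier's output is the Shannon entropy of its predicted probability distribution. The sketch below applies it to the analysis-versus-action distinction: every output is labeled advisory, and high-entropy outputs are flagged for closer human scrutiny. The 0.5-bit threshold and the function names are assumptions for illustration, and entropy is only one of several uncertainty measures (ensembles and calibrated probabilities are others).

```python
import math


def predictive_entropy(probs: list[float]) -> float:
    """Shannon entropy, in bits, of a model's predicted class probabilities.

    0.0 means the model put all its probability on one answer; higher values mean
    the probability is spread out, i.e. the model is less certain.
    """
    return -sum(p * math.log2(p) for p in probs if p > 0)


def route_output(item_id: str, probs: list[float], max_entropy_bits: float = 0.5) -> dict:
    """Package a model output for a human analyst (hypothetical interface).

    The function never returns an instruction to act: outputs stay analytical,
    and uncertain ones are marked so reviewers examine them more closely.
    """
    h = predictive_entropy(probs)
    return {
        "item": item_id,
        "mode": "advisory",  # informs an analyst; triggers nothing on its own
        "entropy_bits": round(h, 3),
        "low_confidence": h > max_entropy_bits,
    }


print(route_output("record-1", [0.97, 0.03]))
print(route_output("record-2", [0.55, 0.45]))
```

Keeping the `mode` fixed at "advisory" in code, rather than leaving it to operator discretion, is one way a design decision can hold the line between analysis and action.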

AI Safety and National Security: Balancing Costs and Design Realities

Financial considerations weigh heavily on these decisions. The Department of Defense's offers, including large-scale contracts, integration funding, and long-term agreements, create strong incentives. When a program offers millions for improvements, vendors must decide how to allocate limited engineering resources. Will engineers prioritize continuous operation, low latency, and integration with legacy classified systems? Or will they invest in specialized safeguards that may complicate deployment and raise costs? When money rewards the first choice, the default technical approach shifts. National security decisions then begin to shape the capabilities of general-purpose technology and the education of engineers.

A concrete example clarifies the trade-off. Consider an intelligence unit whose analysts spend 2,000 hours a year on a task that an AI model could cut in half, freeing staff to focus on faster decision-making. If that reduction saves around $1 million per similar unit each year, expanding AI use across multiple units and operational theaters becomes more attractive. That creates pressure on procurement, which in turn pushes vendors toward compromises that shape what students are taught to build. The progression is not mysterious; it follows from economics and how organizations function. Teaching students to analyze these incentives makes them better contributors to policy, not just more competent coders.
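
The arithmetic in the example is simple enough to write down, which also makes its assumptions visible. The inputs (2,000 hours, a halving, $1 million per unit) come from the example above; the fleet size of 20 units in the usage line is hypothetical. Note that $1 million for 1,000 freed hours implies $1,000 per hour, far above typical analyst labor costs, so the figure presumably also counts the value of faster decisions; the code simply takes it as given.

```python
def hours_freed(hours_per_year: float, reduction: float) -> float:
    """Analyst hours freed per unit per year when a task shrinks by `reduction` (0 to 1)."""
    return hours_per_year * reduction


def implied_value_per_hour(savings_usd: float, hours: float) -> float:
    """Dollar value the savings figure implicitly assigns to each freed hour."""
    return savings_usd / hours


def scaled_savings(units: int, savings_per_unit_usd: float) -> float:
    """Annual savings if every similar unit sees the same result (a linear, optimistic assumption)."""
    return units * savings_per_unit_usd


freed = hours_freed(2_000, 0.5)                      # the model "could halve" a 2,000-hour task
per_hour = implied_value_per_hour(1_000_000, freed)  # $1 million per unit, as in the example
print(freed, per_hour, scaled_savings(20, 1_000_000))  # 20 units is a hypothetical fleet size
```

The linear scaling in `scaled_savings` is exactly the kind of extrapolation that turns one promising unit into pressure for broad deployment.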

From a design standpoint, features that look purely technical carry normative implications. A handful of settings determine whether a system merely advises or effectively acts: the scope of context it considers, the external tools it can use, whether its outputs can trigger actions automatically, and how logs are kept and reviewed. Small choices, such as weak approval processes, excessive tool access, and insufficient logging, can turn a decision-support model into one that shapes critical, even lethal, decisions. Procurement terms need to set out measurable technical requirements: which records must be kept, how long they are retained, what qualifies as human approval, and which actions are prohibited. Engineers need to be trained to build to those requirements. Without that training, safety measures become optional and inadequate.
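
The settings listed above can be written as a machine-checkable configuration and compared against measurable contract terms. This sketch is hypothetical throughout: the field names, the example data sources and tool names, and the thresholds are illustrative and are not drawn from any real contract or system. It shows how "advise versus act" can become a property an auditor tests rather than a promise.

```python
from dataclasses import dataclass


@dataclass
class DeploymentConfig:
    """The settings that decide whether a system advises or acts (hypothetical fields)."""
    data_sources: set[str]   # scope of context the model may read
    tools: set[str]          # external tools the model may call
    auto_execute: bool       # can outputs trigger actions with no person in the loop?
    log_retention_days: int  # how long interaction logs are stored
    approvals_required: int  # distinct human sign-offs needed before any action


@dataclass
class ProcurementTerms:
    """Measurable requirements a contract could state (illustrative values)."""
    allowed_data_sources: set[str]
    prohibited_tools: set[str]
    min_log_retention_days: int
    min_approvals: int


def violations(cfg: DeploymentConfig, terms: ProcurementTerms) -> list[str]:
    """List every way the configuration breaks the contract terms; empty means compliant."""
    problems = []
    extra = cfg.data_sources - terms.allowed_data_sources
    if extra:
        problems.append(f"data sources outside contract scope: {sorted(extra)}")
    banned = cfg.tools & terms.prohibited_tools
    if banned:
        problems.append(f"prohibited tools enabled: {sorted(banned)}")
    if cfg.auto_execute:
        problems.append("outputs can trigger actions without human approval")
    if cfg.log_retention_days < terms.min_log_retention_days:
        problems.append(f"logs kept {cfg.log_retention_days} days; contract requires {terms.min_log_retention_days}")
    if cfg.approvals_required < terms.min_approvals:
        problems.append(f"{cfg.approvals_required} human approvals; contract requires {terms.min_approvals}")
    return problems


def mode(cfg: DeploymentConfig) -> str:
    """Advisory only if nothing runs automatically and at least one human must sign off."""
    return "advisory" if not cfg.auto_execute and cfg.approvals_required >= 1 else "acting"


terms = ProcurementTerms(
    allowed_data_sources={"case_files", "open_source_reports"},
    prohibited_tools={"dispatch_order", "bulk_contact_lookup"},
    min_log_retention_days=365,
    min_approvals=1,
)
risky = DeploymentConfig({"case_files", "bulk_location_data"}, {"search", "dispatch_order"},
                         auto_execute=True, log_retention_days=30, approvals_required=0)
for problem in violations(risky, terms):
    print(problem)
print(mode(risky))
```

Because each check compares a configuration value to a contract number, a third party can rerun it without relying on the vendor's description of the system.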

AI Safety and National Security: Steps for Educators, Administrators, and Decision Makers

First, build misuse-prevention expertise into core technical curricula. Students should build systems with human-control checkpoints embedded at the API level. They should learn to implement immutable logging and reproducible audit trails, and to simulate attempts to evade safeguards in red-team exercises. Most importantly, they should learn to translate technical safeguards into contract clauses. A student who can write an audit requirement that a procurement officer can actually use has moved safety from an abstract idea to a practical tool. These skills should be assessed and certified.
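
Two of the skills named here, human-control checkpoints at the API level and tamper-evident audit trails, fit in one short sketch. It assumes a hypothetical `execute_action` entry point and uses a SHA-256 hash chain, a standard technique in which each log entry commits to the previous one, so editing or removing an earlier record breaks verification. Strictly, a hash chain is tamper-evident rather than immutable, and it cannot by itself detect truncation of the newest entries; a production system would also publish or escrow the latest hash somewhere the operator cannot rewrite.

```python
import hashlib
import json
from typing import Optional

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev: str, record: dict) -> str:
    """Hash the previous entry's hash together with this record, in canonical JSON form."""
    body = json.dumps({"prev": prev, "record": record}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


class AuditLog:
    """Append-only log in which each entry commits to the one before it."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, record: dict) -> str:
        record = json.loads(json.dumps(record))  # private copy, so later edits by the caller cannot leak in
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        digest = _digest(prev, record)
        self.entries.append({"prev": prev, "record": record, "hash": digest})
        return digest

    def verify(self) -> bool:
        """Recompute the chain: editing any entry, or removing or reordering any but the last, fails."""
        prev = GENESIS
        for entry in self.entries:
            if entry["prev"] != prev or _digest(prev, entry["record"]) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


class ApprovalRequired(Exception):
    """Raised when an action is requested without a named human approver."""


def execute_action(action: str, params: dict, log: AuditLog, approved_by: Optional[str] = None) -> dict:
    """Hypothetical API-level checkpoint: every request is logged, and nothing runs unapproved."""
    log.append({"event": "requested", "action": action, "params": params})
    if not approved_by:
        log.append({"event": "blocked", "action": action, "reason": "no human approval"})
        raise ApprovalRequired(action)
    log.append({"event": "approved", "action": action, "approved_by": approved_by})
    # The real side effect would run here; this sketch only reports success.
    return {"status": "executed", "action": action}


log = AuditLog()
try:
    execute_action("export_case_file", {"case": 7}, log)
except ApprovalRequired:
    print("blocked and logged")
execute_action("export_case_file", {"case": 7}, log, approved_by="analyst_2")
print(log.verify())
```

Blocked requests are logged as well as approved ones, so an auditor can see attempted actions, not just completed ones.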

Second, change the incentives within institutions. Hiring committees should give preference to candidates with a demonstrated record of safety work. Student projects should be assessed not only on peak performance but also on thorough documentation of safety measures and analysis of potential failures. Grant-awarding bodies should require dual-use risk statements and monitor compliance after the award. Accreditation organizations should emphasize governance and oversight skills so that graduates possess not only technical ability but also the capacity to design auditable systems. When certifications reward safety, market attitudes shift, making safe practice a viable career path rather than merely a moral consideration.

Third, reform procurement and policy to reward demonstrable safety. Contracts should make independent safety audits formal milestones. Payment schedules can be tied to verifiable audit results and to transparency that continues after deployment. Tender documents should specify technical markers that third parties can test, such as immutable logging, defined human-approval workflows, and forbidden tool calls. Liability clauses can make misuse costly. International coordination is also vital: aligning standards with allies reduces the temptation to weaken safeguards in the name of competition. Clear procurement rules also support public oversight and help universities defend their partnerships publicly.
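
Linking payments to verifiable audit results can be expressed as a simple release rule. In this hypothetical sketch, each milestone pays out only if an independent auditor has passed it, and a final tranche is also held until a post-deployment transparency report is published. The milestone names and amounts are invented for illustration and do not describe any actual contract.

```python
from dataclasses import dataclass


@dataclass
class Milestone:
    name: str
    amount_usd: int
    audit_passed: bool                    # result reported by an independent auditor
    requires_public_report: bool = False  # e.g. post-deployment transparency
    report_published: bool = False


def releasable(m: Milestone) -> bool:
    """Pay only for milestones with a passed audit and, where required, a published report."""
    if not m.audit_passed:
        return False
    return m.report_published or not m.requires_public_report


def settle(milestones: list[Milestone]) -> tuple[int, int]:
    """Return (amount released, amount withheld) for the schedule."""
    released = sum(m.amount_usd for m in milestones if releasable(m))
    withheld = sum(m.amount_usd for m in milestones if not releasable(m))
    return released, withheld


schedule = [
    Milestone("design review", 20_000_000, audit_passed=True),
    Milestone("independent safety audit", 30_000_000, audit_passed=True),
    Milestone("deployment", 30_000_000, audit_passed=False),
    Milestone("post-deployment transparency", 20_000_000, audit_passed=True,
              requires_public_report=True, report_published=False),
]
released, withheld = settle(schedule)
print(f"released ${released:,}; withheld ${withheld:,}")
```

Withheld funds give the vendor a direct financial reason to fix a failed audit or publish the required report, which is the incentive the paragraph describes.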

Finally, run pilots, measure the results, and scale what works. Start with a small number of engineering programs and procurement offices. Tie a handful of government contracts to safety milestones and publish the results. Use the pilots to develop metrics, credibility standards, and third-party auditing procedures. If the evidence shows safer deployments, extend the approach through accreditation and procurement standards. Education is not only a supply route for talent; it is a tool of governance. Used that way, it can ensure that the forces shaping future systems promote moderation and responsibility.

A procurement dispute over $200 million offers valuable lessons. It shows companies, investors, and students what institutions actually value. We can treat it as just another procurement challenge and let short-term operational logic prevail. Alternatively, we can use this opportunity to build durable institutions: curriculum standards that teach competence in misuse prevention, procurement rules that reward auditability, accreditation standards that certify governance skills, and pilot programs that generate verifiable results. These are practical measures, not idealistic sentiments. They require collaboration across many fields, funding for pilot projects, and political commitment. But they are achievable. If democratic societies want both safety and national security, they must design incentives that encourage the industry to respect both.

The views expressed in this article are those of the author(s) and do not necessarily reflect the official position of The Economy or its affiliates.

References

Amodei, D. (2026) ‘The Adolescence of Technology’ [essay], DarioAmodei.com.

Béchard, D.E. (2026) ‘How Anthropic’s safety-first ethos collided with the Pentagon’, Scientific American, 21 February 2026.

Lawler, D. and Curi, M. (2026) ‘Exclusive: Pentagon threatens to cut off Anthropic in AI safeguards dispute’, Axios, 14 February 2026.

Maslej, N., Fattorini, L., Perrault, R., Gil, Y., Parli, V., Kariuki, N., Capstick, E., Reuel, A., Brynjolfsson, E., Etchemendy, J., Ligett, K., Lyons, T., Manyika, J., Niebles, J.C., Shoham, Y., Wald, R., Walsh, T., Hamrah, A., Santarlasci, L., Lotufo, J.B., Rome, A., Shi, A. and Oak, S. (2025) Artificial Intelligence Index Report 2025, Stanford HAI.

Nicholson, A.-M. (2026) ‘U.S. Defense Department Clashes With Anthropic Over “AI First” Doctrine, Ethical Dispute Intensifies Over Autonomous Weaponization’, The Economy, 3 February 2026.

Reuters (2026) ‘US Defense Secretary Hegseth summons Anthropic CEO for tough talks over military use of Claude, Axios reports’, Reuters, 23 February 2026.