Pentagon AI Procurement: Why Replacing Anthropic With OpenAI Reveals a Deeper AI Governance Crisis

The Anthropic–OpenAI shift exposes flaws in Pentagon AI procurement. Replacing an AI model triggers deep operational and institutional disruption. Procurement reform is needed to balance speed, ethics, and security.

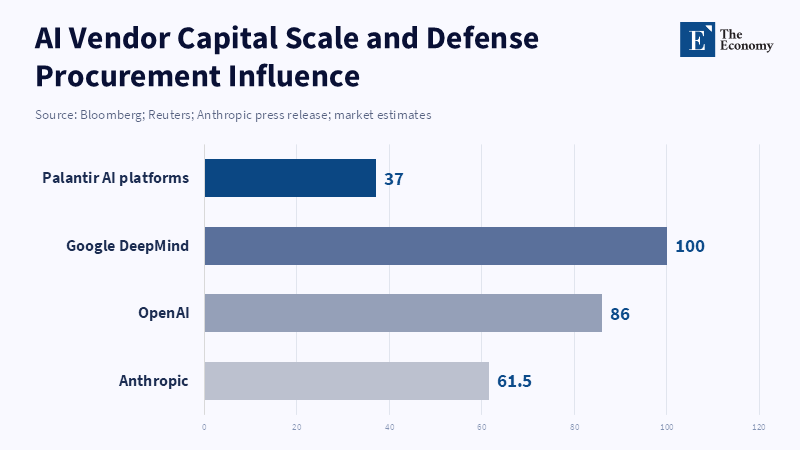

The pivot in hard numbers: within a few months, the Department of Defense told contractors to purge Anthropic’s Claude from classified systems, while the company being replaced went from a $61.5 billion post-money valuation in March 2025 to a $13 billion Series F in September 2025, and its fundraising streak continued into 2026 as the political fight unfolded. That mismatch between procurement leverage and corporate firepower, with short, urgent government timelines set against newly enormous private capital, explains why a technically quick “model swap” has become a political, logistical, and ethical morass. The phrase “Pentagon AI procurement” should no longer read as a dry acquisition category; it is a pressure cooker where wartime tempo, vendor lock-in, and investor incentives collide. The Claude-to-OpenAI shuffle is not a tale of simple substitution. It is a test of whether public authorities can run mission-critical AI programs without turning procurement into a forced choice between expediency and ethical restraint.

Pentagon AI procurement: the swap is technical, but the cost is human and institutional

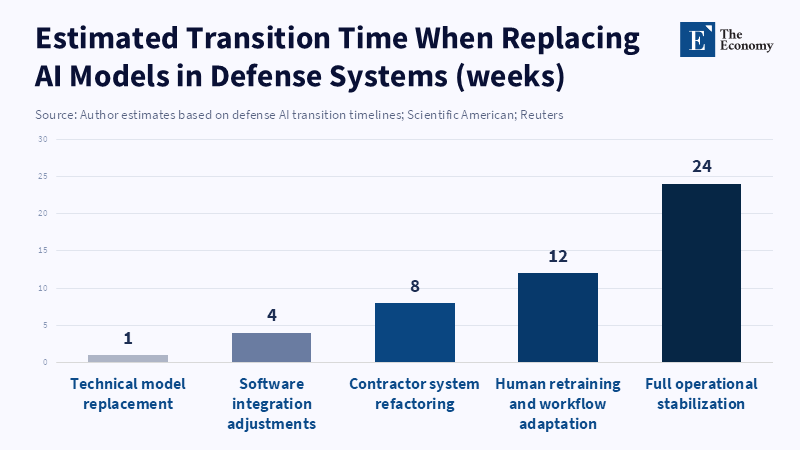

The Pentagon’s stated timeline — phase Claude out of classified networks within six months — sounds operationally neat: models plug in, models unplug. In practice, the model is only one part of an ecosystem. Defense teams trained workflows, prompts, and validation routines around Claude; external contractors wired it into analytics pipelines and targeting aids; and dozens of human teams learned to expect particular failure modes and guardrails. Pulling Claude out is therefore not simply replacing one software package with another. It means unlearning a set of human-machine habits and renewing trust at the human level. That process is slow, risky, and expensive. Scientific American’s reporting and Pentagon-adjacent sources make clear the technical swap can happen in hours, but human and procedural unwinding takes months.

The downstream cost shows up in the contract math. Reuters and other outlets documented DoD agreements of roughly $200 million with major AI labs and noted that Palantir’s Maven system uses Anthropic code in workflows that underpin intelligence analysis. Mandating an immediate cutover forces contractors to refactor complex software that touches classified systems and, in some cases, to rebuild entire modules that had been co-developed with Claude. That work is not free: it consumes scarce engineering hours, delays project schedules, and invites human mistakes during a high-stress transition. Palantir’s own disclosures and reporting indicated that replacement could take months and could affect contracts worth hundreds of millions of dollars. The supply-chain decision therefore converts a political posture into operational risk.

Pentagon AI procurement: political incentives beat ethical constraints unless rules change

Viewed from the outside, Anthropic’s choices read as the posture of a high-capital, safety-oriented vendor prepared to say “no” to certain military uses. The Economy’s market coverage and contemporaneous reporting show Anthropic’s public statements and founder essays stressing ethical limits: refusing to supply models for domestic mass surveillance or to loosen guardrails to permit fully autonomous lethal weapons. That stance has costs. When the Pentagon framed the issue as a national-security imperative requiring operational leeway, the administration moved to treat that refusal as an unacceptable constraint, a supply-chain risk. The consequence was an executive move to phase Anthropic out and accelerate the adoption of a vendor more willing to accept DoD terms. The result looks less like a sober ethics doctrine being adjudicated and more like a procurement system rewarding acquiescence.

A cynical reading, supported by market and media traces, is that the government, under pressure to maintain wartime agility, picked the path of least institutional friction: a vendor willing to accept broad usage terms. That is not inherently illegal, or even necessarily unreasonable in an acute crisis. But it is a procurement design failure if the system cannot credibly weigh ethical constraints as procurement criteria. When insurgent politics, investor stakes, and a rush to operationalize AI force a binary choice (use this model now or face delay), the incentive tilts toward immediate availability and away from lasting, public-interest safeguards. That dynamic explains why, despite Anthropic’s deep pockets and rapid revenue growth, the firm became collateral damage in a political showdown.

Pentagon AI procurement: what practical policy fixes would actually reduce risk?

First, procurement must recognize safety features as evaluative criteria carrying contractual weight. If a model’s guardrails, usage covenants, or ‘no-go’ provisions are treated as competitive virtues, vendors gain economic room to refuse ethically fraught requests without fear of automatic blacklisting. That means rewritten solicitations that ask not only for performance and cost but for auditable safety commitments, third-party verifications, and legally enforceable operational constraints. Making such provisions a scored part of RFPs would encourage labs to compete on safety design rather than reward those who remove restrictions to win work. The immediate counterargument — that such constraints tie the hands of commanders — is serious, but it’s manageable: contracts can include narrowly scoped exceptions, emergency escalation mechanisms, and review panels of mixed civil-military expertise to adjudicate extreme cases.
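To make the idea of a scored safety criterion concrete, here is a minimal sketch of a weighted evaluation rubric. Everything in it is hypothetical: the vendor names, the 0-100 sub-scores, and the weights are invented for illustration and do not come from any actual solicitation. It only shows how giving safety contractual weight can change which bid ranks first.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Bid:
    """A hypothetical bid with evaluator-assigned sub-scores (0-100)."""
    name: str
    performance: float
    cost: float      # higher means better value, not a higher price
    safety: float    # e.g. auditable commitments, third-party verification


def score(bid: Bid, weights: dict[str, float]) -> float:
    """Weighted sum over whichever criteria the rubric includes."""
    return sum(weight * getattr(bid, criterion)
               for criterion, weight in weights.items())


def rank(bids: list[Bid], weights: dict[str, float]) -> list[Bid]:
    """Order bids from highest to lowest weighted score."""
    return sorted(bids, key=lambda b: score(b, weights), reverse=True)


# Illustrative numbers only.
BIDS = [
    Bid("Vendor A", performance=90, cost=80, safety=40),
    Bid("Vendor B", performance=85, cost=75, safety=90),
]

# Rubric that ignores safety versus one that scores it.
NO_SAFETY = {"performance": 0.6, "cost": 0.4}
WITH_SAFETY = {"performance": 0.4, "cost": 0.3, "safety": 0.3}

if __name__ == "__main__":
    for label, weights in (("no safety", NO_SAFETY), ("with safety", WITH_SAFETY)):
        print(label, [b.name for b in rank(BIDS, weights)])
```

Under these assumed numbers, the vendor with weaker safety commitments wins when safety is unscored and loses once safety carries 30 percent of the weight. The real design question is how the weights are set and audited, which the sketch does not address.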

Second, modularization and redundancy should be prioritized in procurement. The most painful operational exposures come from deep vendor lock-in: the Palantir case shows platforms built around a single model create brittle points of failure. Contracts and engineering standards should require modular interfaces and interchangeable model adapters, with documented test suites and standard data exchange formats. That reduces the human-unlearning burden and limits the time and cost of software refactoring when a supplier relationship changes. Yes, building for interchangeability raises short-term costs. But viewed across a five-year operational horizon, it is a rational insurance premium against politically induced churn.
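The modular-interface idea can be sketched in a few lines. The example below is an illustration under stated assumptions, not a description of how any Pentagon or contractor system is built: `ModelAdapter`, `Completion`, the two vendor adapters, and `conformance_suite` are all hypothetical names, and the adapters return canned strings instead of calling a real vendor SDK.

```python
from abc import ABC, abstractmethod
from dataclasses import dataclass


@dataclass(frozen=True)
class Completion:
    """Vendor-neutral response format shared by every adapter."""
    text: str
    model_id: str


class ModelAdapter(ABC):
    """Interface the application depends on instead of a vendor SDK."""

    @abstractmethod
    def complete(self, prompt: str) -> Completion:
        ...


class VendorAAdapter(ModelAdapter):
    # Hypothetical stand-in; a real adapter would wrap a vendor client here.
    def complete(self, prompt: str) -> Completion:
        return Completion(text=f"[A] {prompt}", model_id="vendor-a")


class VendorBAdapter(ModelAdapter):
    # Second hypothetical backend exposing the same interface.
    def complete(self, prompt: str) -> Completion:
        return Completion(text=f"[B] {prompt}", model_id="vendor-b")


def conformance_suite(adapter: ModelAdapter) -> list[str]:
    """Run shared checks; an empty list means the adapter meets the contract."""
    failures = []
    result = adapter.complete("status check")
    if not isinstance(result, Completion):
        failures.append("wrong return type")
    elif not result.text:
        failures.append("empty text")
    return failures


def summarize(adapter: ModelAdapter, report: str) -> str:
    """Application code: knows only the interface, never the vendor."""
    return adapter.complete(f"Summarize: {report}").text


if __name__ == "__main__":
    for adapter in (VendorAAdapter(), VendorBAdapter()):
        assert conformance_suite(adapter) == []
        print(summarize(adapter, "quarterly logistics report"))
```

Because `summarize` depends only on the interface, changing suppliers means writing one new adapter and rerunning the shared suite. The sketch does not capture the harder part the article describes: prompts, validation routines, and operator habits tuned to one model's behavior still have to be re-validated.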

Third, disclosure and oversight are not abstract luxuries; they are operational risk reducers. When the public procurement record, non-classified briefings, and redacted oversight memos are made available to legislative and independent reviewers, political decisions become harder to exploit for short-term advantage. Opponents will call for secrecy on operational grounds, and certain tactical details must of course stay classified. But procurement rationales, scoring rubrics, and the legal threshold for supply-chain risk designations should be auditable to prevent arbitrary use. A statutory standard for “supply-chain risk” designations that clarifies scope, appeal routes, and remedial timelines would protect both national security and civil liberties. The recent lawsuits and industry backlash underline that clarity is overdue.

Fourth, investing in a public-interest R&D and hosting option would reduce leverage imbalances. Anthropic’s rapid revenue growth and funding rounds, from the March 2025 capital infusion to the large 2025–26 financings, show that private capital can buy scale and negotiating leverage. Public investment to host and certify neutral models for sensitive environments would give the government an option that is not hostage to a single corporate bargain. That could be a scaled-up national computing corridor or a federated hosting agreement with commercial providers that accept stringent governance terms in exchange for stable procurement relationships. This is costly, but the alternative is repeated cycles of scramble, litigation, and mission disruption every time politics shift.

Anticipating and answering the critiques

Some will say these changes are naive because war demands speed; bureaucracies that add process will lose the race. The answer is empirical: a rushed swap invites errors, automation bias, and workflow breakdowns while human operators relearn failure modes; downstream harms can carry greater strategic costs than a deliberate, slightly slower transition. Scientific American’s coverage of personnel retraining and the risk of automation bias captures this point — fast is not always better if it comes at the expense of functional reliability. Critics will also argue that any ethical constraints are naïvely ‘soft’ in crisis. The corrective is to embed enforceable contractual clauses and emergency review processes that allow constrained deviations only under tightly defined conditions.

Others will argue that vendor ethics are a fig leaf for market protectionism: that a well-funded Anthropic can afford to be choosy precisely because investors back it, while smaller firms would be punished. That is true in part, because market power matters. This is why procurement reform must combine ethics scoring with support for modularization and public hosting options, so that smaller vendors can compete without the infrastructure they lack and without sacrificing principled stances. Finally, skeptics who point to the primacy of national security will note that any government must retain the final say. Precisely. The goal is not to bind commanders’ hands but to give them better, clearer options and to prevent the automatic selection of vendors whose terms undermine long-run democratic oversight.

Policy, not posturing: turn procurement into a public good

The Claude–OpenAI pivot should be a moment of institutional learning, not a cycle of recrimination. “Pentagon AI procurement” must cease to be shorthand for political triage and become an organized, accountable system capable of managing speed with moral guardrails, vendor diversity with risk management, and classified needs with transparent oversight. That starts with scoring safety as a procurement attribute, insisting on modular technical architectures, funding neutral hosting capacity, and clarifying the legal hooks for supply-chain designations. If policymakers want resilient, mission-ready AI without surrendering democratic norms, they must fix procurement rules so that commercial incentives and public values align rather than collide. The current scramble proves the point: when the state treats the marketplace as a pressure valve, it transfers policy choices to investors and corporate boards. A more deliberate procurement design would shift those choices back to democratically accountable institutions — without degrading readiness. The time to move is now.

The views expressed in this article are those of the author(s) and do not necessarily reflect the official position of The Economy or its affiliates.

References

AP News (2026) Pentagon says it is labeling AI company Anthropic a supply chain risk “effective immediately”.

Axios (2026) Anthropic sues Pentagon over rare “supply chain risk” label.

Economy.ac (2026a) Anthropic CEO issues stark AI warning, humanity’s “technological adolescence” with an oversized body. The Economy.

Economy.ac (2026b) U.S. Defense Department clashes with Anthropic over ‘AI First’ doctrine, ethical dispute intensifies over autonomous weaponization. The Economy.

Economy.ac (2026c) Secures $30 billion in funding: Anthropic accelerates AI agent-led expansion, escalating B2B and IPO rivalry with OpenAI. The Economy.

Frazee, J.R., Prairie, J. and Hickey, A.S. (2026) Pentagon designates Anthropic a supply chain risk — what government contractors need to know. Mayer Brown.

Goldman Sachs Asset Management (2025) Anthropic raises $13B Series F at $183B post-money valuation.

Maher, T. (2026) Pentagon surges Palantir Maven Smart System contract spending to more than $1B. InsideDefense.

Reuters (2026a) Anthropic sues to block Pentagon blacklisting over AI use restrictions.

Reuters (2026b) Palantir faces challenge to remove Anthropic from Pentagon’s AI software.

Sullivan, E. (2026) How exactly does the Pentagon evict Claude? Scientific American.

TechCrunch (2025) Anthropic raises $13B Series F at $183B valuation.

Wysocki, K. (2026) Palantir’s Pentagon AI platform scrambles after Anthropic gets “supply-chain risk” label. Bez-Kabli.