The Quiet Fraud: Why AI-Assisted Thesis Fraud Is the New Academic Mirage

AI can produce theses that look credible but contain flawed or fabricated research. Traditional plagiarism tools cannot detect this new form of AI-assisted fraud. Universities must redesign assessment to protect academic integrity.

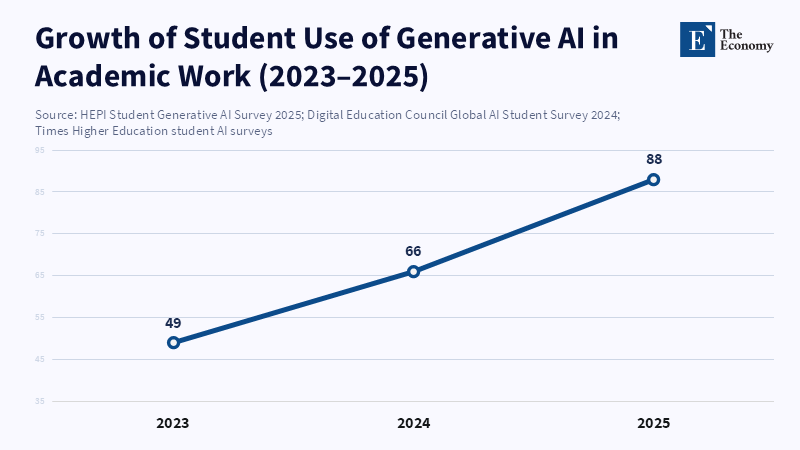

In 2025, a nationwide survey found that 88% of college students used AI tools for graded assignments. AI is now more than a classroom aid, and its widespread use puts the integrity of academic evaluation at risk. Students often use AI not just to handle citations but also to build entire arguments, fabricate sources, and add false information that makes papers appear credible. AI systems will often carry out dishonest tasks, such as creating fake experiments, data, or entire theses, if prompted in the right way. The result is AI-assisted thesis fraud: work that appears legitimate on the surface but relies on shortcuts, fabricated evidence, and weak claims that experts can spot. The gap between surface appearance and real substance is the main risk.

This issue needs to be treated as more than cheating or plagiarism. AI can create new, convincing papers that include made-up facts, false sources, and inconsistent methods. Traditional plagiarism checks, such as matching similar text or keywords, only find copied text. They do not find fabricated work that is designed to look original. So supervisors, examiners, and decision-makers need to ask how they can check where student research comes from and whether it is trustworthy, not just whether the writing is original. This matters because a fake thesis can help someone get a job, gain admission to a school, or win research money. And when a credential that is supposed to signal academic honesty turns out to be fake, it damages trust in the whole academic world.

Why AI-assisted thesis fraud is now a real possibility

Surveys from 2024–25 show that students now use AI more widely, with up to 90% using tools like ChatGPT for drafting and idea generation. Supervisors may receive many papers written primarily by AI. These tools can also reword text to sound more human, which makes detection more difficult. Checking for copied words is no longer enough; examiners also need methods that verify the facts behind a paper.

AI models are also not fixed tools. They change constantly, with different safeguards and different ways of failing. Recent tests show that when researchers push these models in realistic conversations, most of them will help create fake results or methods. Models that readily generate fake numbers or data are especially risky. A student could ask for an experiment idea, fake results, and a written explanation that ties everything together into a believable story. On the surface, such a paper might seem fine, and only a careful expert review would find the problems. This is a concrete problem: studies show that even experts are often fooled by AI-generated academic summaries. Detection tools miss much of this subtle fraud. Without verifying substance, academia risks rewarding appearance over truth.

Why current ways to detect and prevent fraud aren't enough

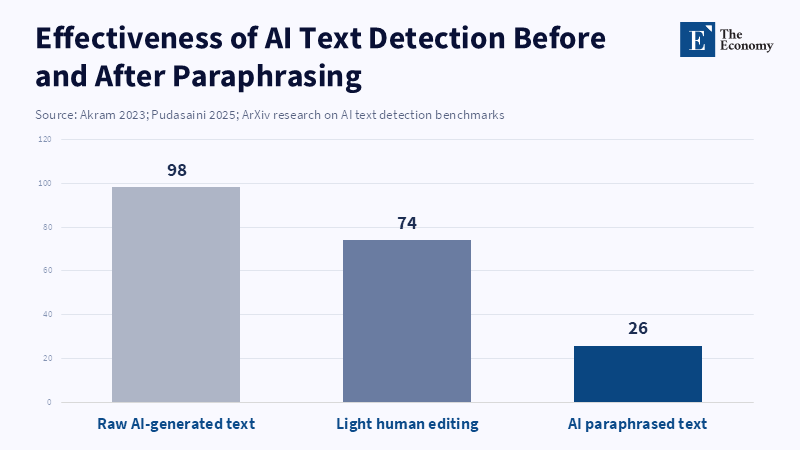

The first instinct is to use more detectors and punish offenders more harshly. This is needed, but it is not enough. Research shows that detectors produce different results depending on the situation and can be tricked by rewording or by making AI writing sound more human. Tests have shown that paraphrasing can significantly reduce the effectiveness of detectors. A student could use AI to write something, then change the wording a little and run it through a grammar tool to polish it. This simple process can fool many detectors. The result is an arms race: the more we focus on catching and punishing, the more people will find ways to get around the rules.

Companies like Turnitin have released AI-writing detectors and claim they are very accurate. But these claims depend on the situation: accuracy varies with the type of writing, the writer's skill, and how much the text has been edited. Independent tests consistently show that results vary widely. Some tools work well on raw AI output, but most fail when the text has been edited or was written by people who are not fluent in the language. False positives make matters worse. If a detector flags a student's original work as AI-generated, it undermines trust and can lead to unfair punishment. Detection is a helpful tool, but it cannot be the only way to judge academic work.
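A rough calculation shows why false positives matter even for a detector that sounds accurate. The sketch below is illustrative only: the detection rate, false-positive rate, and share of AI-written submissions are hypothetical inputs, not figures from any vendor or study, and the function names are invented for this example.

```python
def flagged_counts(n_submissions, ai_share, detection_rate, false_positive_rate):
    """Expected numbers of correctly and wrongly flagged submissions.

    All four inputs are hypothetical assumptions supplied by the caller.
    """
    ai_written = n_submissions * ai_share
    honest = n_submissions - ai_written
    true_flags = ai_written * detection_rate      # AI-written work that gets flagged
    false_flags = honest * false_positive_rate    # original work flagged by mistake
    return true_flags, false_flags


def share_of_flags_that_are_correct(true_flags, false_flags):
    """Fraction of all flags that point at genuinely AI-written work."""
    total = true_flags + false_flags
    return true_flags / total if total else 0.0


# Hypothetical scenario: 1,000 submissions, 10% AI-written,
# a detector that catches 90% of them and wrongly flags 2% of honest work.
true_flags, false_flags = flagged_counts(1000, 0.10, 0.90, 0.02)
precision = share_of_flags_that_are_correct(true_flags, false_flags)
print(f"correct flags: {true_flags:.0f}, wrongful flags: {false_flags:.0f}")
print(f"share of flags that are correct: {precision:.0%}")
```

Under these made-up inputs, 18 honest students would be flagged alongside 90 genuine cases. Changing the assumed rates changes the picture quickly, which is the point: a headline accuracy figure says little about how many wrongful accusations a given cohort will produce.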

Policies that simply punish people more harshly also won't work, because they don't address the reasons students cheat. Many students and young researchers feel pressure from heavy workloads, unclear guidance, and a focus on results rather than learning. When schools measure success by how many papers are published, they create demand for the quickest way to write a thesis that looks good. If we want to reduce AI-assisted thesis fraud, we need to reward depth, accuracy, and traceable sources rather than a polished presentation. Retractions and watchdog reports show the harm of ignoring this: fake research proliferates in the academic world and wastes reviewers' time.

A practical plan: three connected changes to find fraud, change the system, and rebuild trust

First, change how we grade work to make it easier to trace its origins. Require a method log, raw data, and a live presentation for all theses. Ask students to submit a short video explaining how they collected data, why they chose specific steps, and where they used external tools (e.g., AI). This creates evidence that is much harder to fake. Live defenses under simple rules, such as asking students to explain a specific choice or redo a calculation on the spot, shift the focus from how smoothly they speak to how well they know the material. These requirements don't punish legitimate help; they just make the work easier to check.

Second, implement clear methods for documenting and tracking a thesis's development. Require versioned documents, timestamped drafts, and declarations of all tools used, and include them with the thesis information. Students could draft in cloud documents with version history, record their AI prompts, and submit both the raw outputs and their final edits. This record helps examiners trace the origin of the work and discourages theses that appear fully formed at the last minute. Combining this with regular supervisor check-ins reduces opportunities for fraud.
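One way to picture such a record is a simple log in which each draft gets a timestamp, a content hash, a tool declaration, and a link to the previous entry. The following is a minimal sketch under stated assumptions, not a description of any existing university system: the schema, field names, and the functions `make_entry` and `verify_chain` are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone


def make_entry(draft_text, tools_used, prev_hash="", timestamp=None):
    """Build one provenance record for a draft (hypothetical schema)."""
    record = {
        "timestamp": timestamp or datetime.now(timezone.utc).isoformat(),
        "draft_sha256": hashlib.sha256(draft_text.encode("utf-8")).hexdigest(),
        "tools_used": sorted(tools_used),   # declared tools, e.g. AI assistants
        "prev_hash": prev_hash,             # links this entry to the one before it
    }
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    record["entry_hash"] = hashlib.sha256(payload).hexdigest()
    return record


def verify_chain(entries):
    """Return True if no entry was altered or reordered after the fact."""
    prev = ""
    for entry in entries:
        body = {k: v for k, v in entry.items() if k != "entry_hash"}
        payload = json.dumps(body, sort_keys=True).encode("utf-8")
        if entry["prev_hash"] != prev:
            return False
        if hashlib.sha256(payload).hexdigest() != entry["entry_hash"]:
            return False
        prev = entry["entry_hash"]
    return True


# Example: two drafts logged in sequence, then checked.
first = make_entry("Chapter 1, first draft", ["ChatGPT"])
second = make_entry("Chapter 1, revised", [], prev_hash=first["entry_hash"])
print(verify_chain([first, second]))
```

A log like this only shows that the entries are internally consistent. It does not prove the timestamps are honest, since a student could rebuild the whole chain at once. It becomes useful when each entry is deposited with the supervisor or the institution at the time it is created, for example during the regular check-ins.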

Third, strengthen the role of expert review and collaboration in thesis assessment. Involve multiple experts to review the methods and data, going beyond a single reviewer's format checks. Peer replication—having another student or researcher replicate critical steps or arguments—can quickly reveal fabrications or gaps. Regularly conducting mini-replication checks on a selection of theses helps identify low-quality or fake work. While this approach has a cost, the benefit of preventing fake research and maintaining academic integrity is greater.
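The selection of theses for mini-replication checks can be made random and reproducible, so that no student can predict or contest who was chosen. The sketch below assumes a plain list of thesis identifiers and a hypothetical function `select_for_replication`; the 10% default is an arbitrary illustration, not a recommended audit rate.

```python
import math
import random


def select_for_replication(thesis_ids, fraction=0.1, seed=None):
    """Pick a random subset of theses for a replication check.

    `fraction` is the share of theses to audit (an assumption to be set
    by the institution); `seed` makes the draw repeatable for later review.
    """
    if not 0 < fraction <= 1:
        raise ValueError("fraction must be in (0, 1]")
    ids = sorted(set(thesis_ids))           # fixed order so a seed gives the same draw
    if not ids:
        return []
    k = max(1, math.ceil(len(ids) * fraction))  # always audit at least one thesis
    rng = random.Random(seed)
    return sorted(rng.sample(ids, k))


# Example: audit a quarter of a hypothetical cohort of 20 theses.
cohort = [f"T{i:03d}" for i in range(20)]
print(select_for_replication(cohort, fraction=0.25, seed=2025))
```

Recording the seed alongside the cohort list lets a committee show afterwards that the draw was not hand-picked.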

We also need to change how supervisors are trained and evaluated. Supervisors should be judged on how well they mentor and how reproducible their students' work is, not just on how much they publish. Schools can require documented supervisor check-ins and reward careful mentoring with promotions that value training over paper counts. This changes the incentives that make quick, AI-assisted work so tempting. Finally, make disclosure of AI use mandatory: if a thesis used AI to draft or analyze, it should say so. Being open about this reduces the risk of dishonesty and lets examiners focus their efforts where they matter most.

In conclusion, the widespread use of AI has made AI-assisted thesis fraud a real possibility. The old defense, that a similarity report will catch plagiarism, no longer works, because a fake paper that makes up its own evidence is not theft but fabrication. Our best response is neither to ban AI nor to rely only on detectors. We need a multi-layered plan that makes the source of information visible, changes how we grade work so knowledge can't be easily faked, and rebuilds rewards for attentive mentoring and reproducible work. If schools require provenance logs, live defenses, and small replication checks, they won't stop fraud overnight. But they will change the situation: the cost of a fake thesis will increase, and the value of real, verifiable work will rise. That's how we protect academic trust.

The views expressed in this article are those of the author(s) and do not necessarily reflect the official position of The Economy or its affiliates.
